# Technical Document: Retrieval-Augmented Generation (RAG) Evolution Tree

## 1. Overview

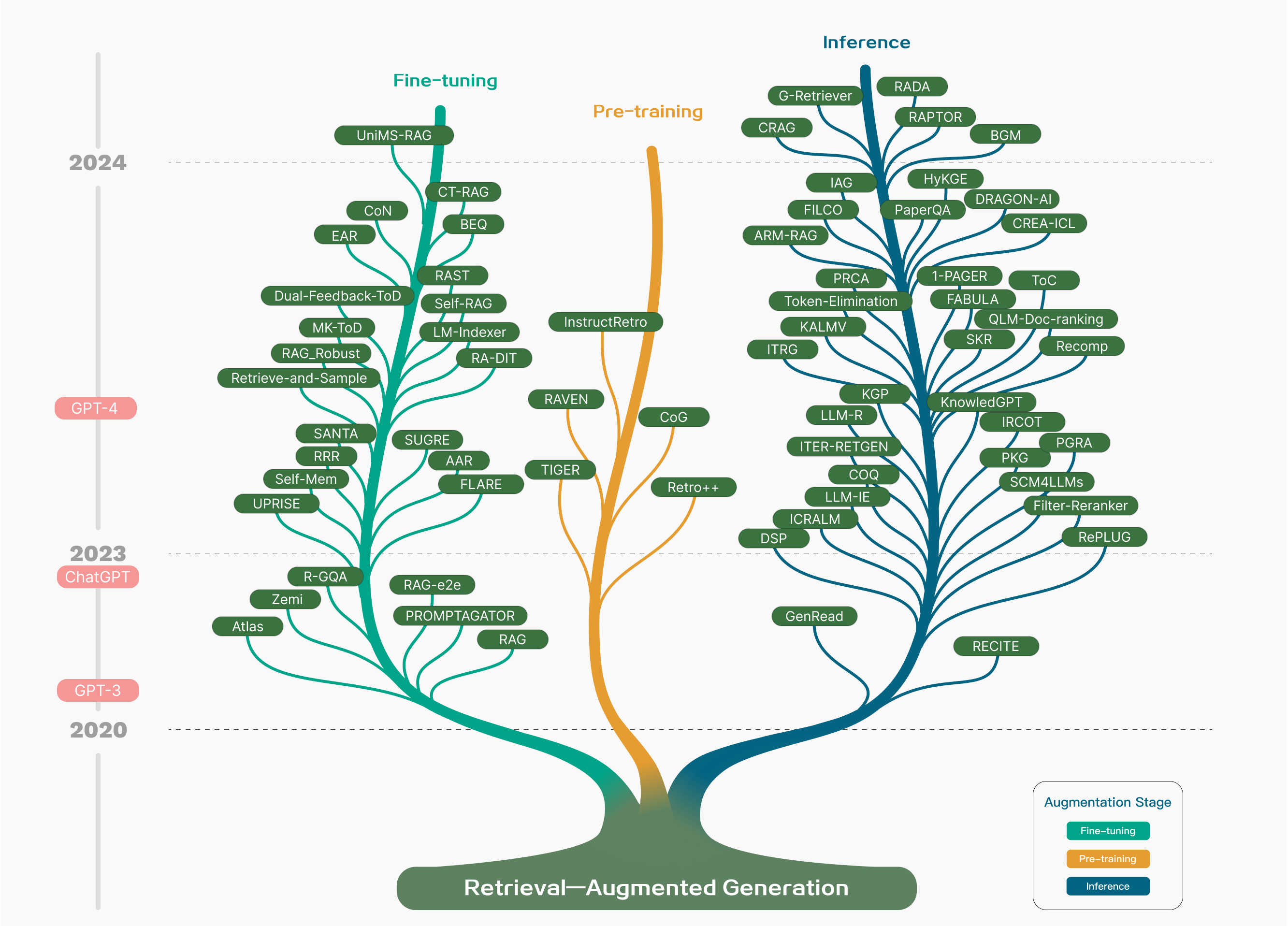

This diagram is a conceptual "evolutionary tree" that traces the development and categorization of Retrieval-Augmented Generation (RAG) methods from 2020 to 2024. The root represents the foundational RAG concept. Each branch groups specific models or techniques by their augmentation stage: pre-training, fine-tuning, or inference.

## 2. Spatial Grounding and Legend

The diagram is laid out along a vertical time axis on the left. Its branches are color-coded by augmentation stage.

* **Temporal Axis (Left):** A vertical grey line marking years and major LLM milestones.

* **Legend (Bottom Right):**

    * **Teal (Greenish-Blue):** Fine-tuning

    * **Orange (Yellow-Gold):** Pre-training

    * **Dark Blue:** Inference

* **Main Root (Bottom Center):** Labeled "Retrieval-Augmented Generation".

---

## 3. Component Isolation

### A. Temporal Milestones (Left Header/Axis)

The vertical axis tracks time from bottom to top:

* **2020:** Base of the tree.

* **GPT-3:** Milestone marker between 2020 and 2023.

* **2023 / ChatGPT:** Milestone marker.

* **GPT-4:** Milestone marker between 2023 and 2024.

* **2024:** Top section of the tree.

### B. Pre-training Branch (Center - Orange)

**Trend:** This is the thinnest branch, which indicates that few methods apply retrieval augmentation at the pre-training stage. It grows mostly vertically and has few offshoots.

* **RAVEN** (Lower branch, left)

* **TIGER** (Lower branch, left)

* **CoG** (Middle branch, right)

* **Retro++** (Middle branch, right)

* **InstructRetro** (Upper main stem)

### C. Fine-tuning Branch (Left - Teal)

**Trend:** A dense, bushy branch that expands markedly between 2023 and 2024. This reflects a high volume of research on improving RAG through fine-tuning.

* **Early/Lower Branches (Pre-2023):** Atlas, Zemi, R-GQA, RAG, PROMPTAGATOR, RAG-e2e.

* **Middle Branches (2023):** UPRISE, Self-Mem, RRR, SANTA, SUGRE, AAR, FLARE, Retrieve-and-Sample, RAG_Robust, MK-ToD, Dual-Feedback-ToD.

* **Upper Branches (Late 2023 - 2024):** EAR, CoN, UniMS-RAG, CT-RAG, BEQ, RAST, Self-RAG, LM-Indexer, RA-DIT.

### D. Inference Branch (Right - Dark Blue)

**Trend:** This is the most complex and most heavily branched section. It shows a rapid proliferation of "plug-and-play" or pipeline-based RAG methods that augment the model at inference time and do not require retraining the underlying model.

* **Lower Branches (2023):** RECITE, GenRead, DSP, RePLUG, Filter-Reranker, SCM4LLMs, PKG, PGRA, IRCOT.

* **Middle Branches (Late 2023):** ICRALM, LLM-IE, COQ, ITER-RETGEN, LLM-R, KGP, KnowledGPT, Recomp, SKR, QLM-Doc-ranking, ToC, FABULA, 1-PAGER.

* **Upper Branches (2024):** ITRG, KALMV, Token-Elimination, PRCA, ARM-RAG, FILCO, IAG, CRAG, G-Retriever, RADA, RAPTOR, BGM, HyKGE, PaperQA, DRAGON-AI, CREA-ICL.
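Inference-stage augmentation changes what the model sees, not its weights. The following minimal sketch illustrates this retrieve-then-generate pattern under several assumptions. The word-overlap retriever, the prompt template, and the names `retrieve`, `build_prompt`, and `rag_answer` are all hypothetical stand-ins. None of them is the method of any specific system named above. The generator is any callable that maps a prompt to text, so a frozen LLM could be passed in unchanged.

```python
import re
from typing import Callable, List, Set


def tokenize(text: str) -> Set[str]:
    # Lowercase word tokens; punctuation is dropped so "RAPTOR?" matches "RAPTOR".
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query: str, corpus: List[str], k: int = 2) -> List[str]:
    # Hypothetical toy retriever: rank passages by word overlap with the query.
    # sorted() is stable (also with reverse=True), so ties keep corpus order.
    query_terms = tokenize(query)
    ranked = sorted(corpus, key=lambda p: len(query_terms & tokenize(p)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: List[str]) -> str:
    # Augment the input, not the weights: retrieved text is prepended as context.
    context = "\n".join(f"- {p}" for p in passages)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


def rag_answer(query: str, corpus: List[str],
               generate: Callable[[str], str], k: int = 2) -> str:
    # The generator is treated as a frozen black box; nothing here retrains it.
    return generate(build_prompt(query, retrieve(query, corpus, k)))


if __name__ == "__main__":
    corpus = [
        "RAPTOR appears on the inference branch.",
        "RAVEN appears on the pre-training branch.",
    ]
    echo = lambda prompt: prompt  # hypothetical stand-in for a frozen LLM call
    print(rag_answer("Which branch has RAPTOR?", corpus, echo, k=1))
```

Because the retriever and the generator are separate callables, either one can be swapped without touching the other. That separability is what makes inference-stage methods "plug-and-play".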

---

## 4. Summary of Trends

1. **Volume:** The "Inference" stage (Dark Blue) contains the most distinct methodologies (38 labels in the lists above). "Fine-tuning" (Teal, 26 labels) follows, and "Pre-training" (Orange, 5 labels) is the least common approach.

2. **Temporal Acceleration:** The number of labels rises visibly as the timeline moves from 2023 into 2024. This rise follows the ChatGPT and GPT-4 markers on the temporal axis.

3. **Complexity:** The Inference branch has more sub-branching than the other branches. This may reflect a shift toward multi-step reasoning and more elaborate retrieval pipelines, such as RAPTOR and ITER-RETGEN.
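The Volume comparison can be checked by counting the labels transcribed in Section 3. The following sketch is a minimal tally. It counts only the labels that this document lists, and the labels have not been re-verified against the image. If any label was missed or misread during transcription, the counts would change. `STAGES` and `tally` are illustrative names.

```python
# Labels as transcribed in Section 3 of this document (not re-checked against the image).
STAGES = {
    "Pre-training": ["RAVEN", "TIGER", "CoG", "Retro++", "InstructRetro"],
    "Fine-tuning": [
        "Atlas", "Zemi", "R-GQA", "RAG", "PROMPTAGATOR", "RAG-e2e",
        "UPRISE", "Self-Mem", "RRR", "SANTA", "SUGRE", "AAR", "FLARE",
        "Retrieve-and-Sample", "RAG_Robust", "MK-ToD", "Dual-Feedback-ToD",
        "EAR", "CoN", "UniMS-RAG", "CT-RAG", "BEQ", "RAST", "Self-RAG",
        "LM-Indexer", "RA-DIT",
    ],
    "Inference": [
        "RECITE", "GenRead", "DSP", "RePLUG", "Filter-Reranker", "SCM4LLMs",
        "PKG", "PGRA", "IRCOT",
        "ICRALM", "LLM-IE", "COQ", "ITER-RETGEN", "LLM-R", "KGP", "KnowledGPT",
        "Recomp", "SKR", "QLM-Doc-ranking", "ToC", "FABULA", "1-PAGER",
        "ITRG", "KALMV", "Token-Elimination", "PRCA", "ARM-RAG", "FILCO", "IAG",
        "CRAG", "G-Retriever", "RADA", "RAPTOR", "BGM", "HyKGE", "PaperQA",
        "DRAGON-AI", "CREA-ICL",
    ],
}


def tally(stages):
    # Count labels per augmentation stage, largest first.
    counts = {stage: len(labels) for stage, labels in stages.items()}
    return sorted(counts.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for stage, count in tally(STAGES):
        print(f"{stage}: {count}")
    # prints: Inference: 38 / Fine-tuning: 26 / Pre-training: 5 (one per line)
```

The gap between Inference and Fine-tuning (38 vs. 26) is larger than "closely" would suggest. Both are an order of magnitude above Pre-training.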