# Technical Document Extraction: Retrieval-Augmented Generation (RAG) Evolution Diagram

## Diagram Overview

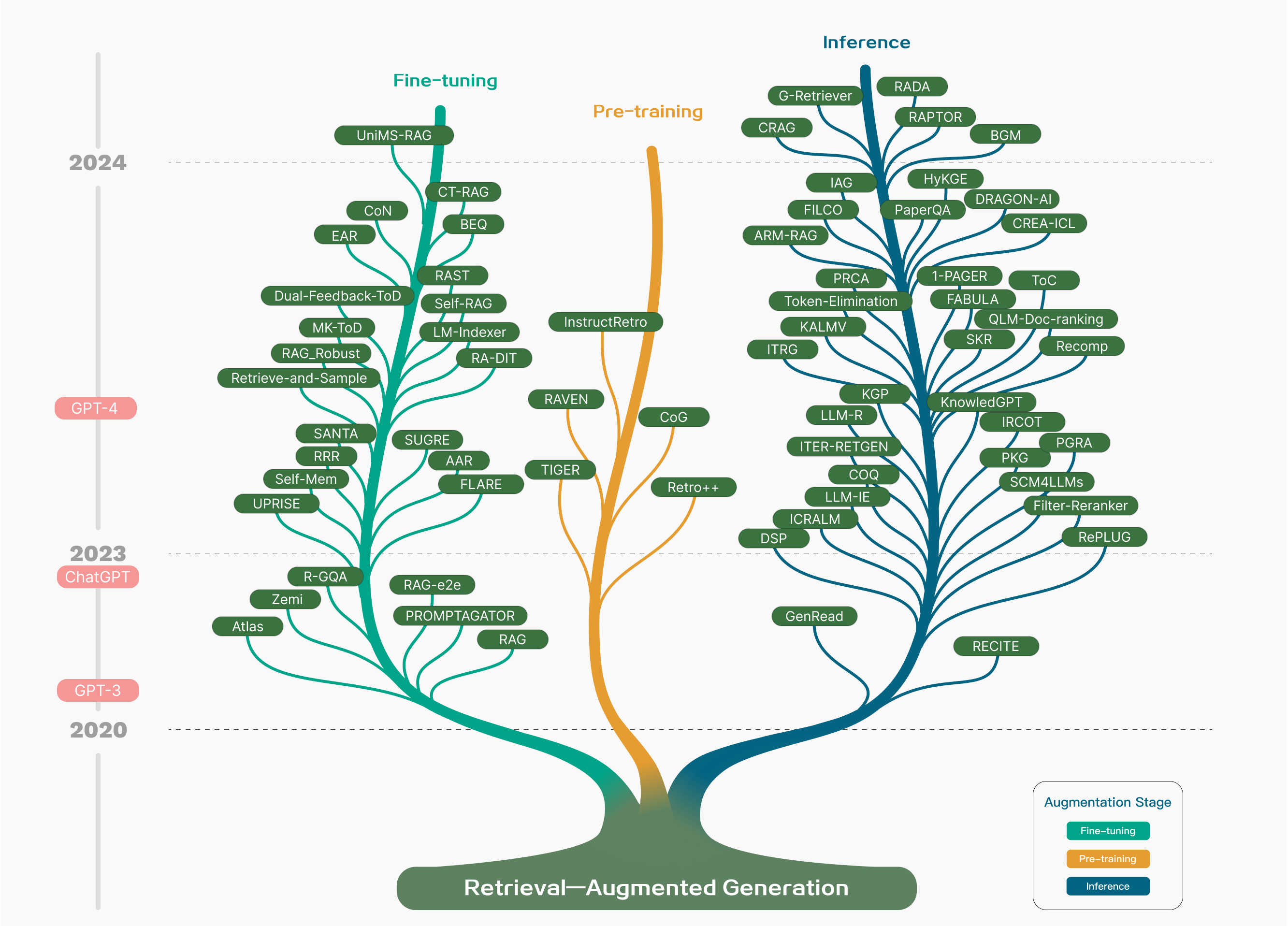

The image is a **tree diagram** illustrating the evolution of **Retrieval-Augmented Generation (RAG)** models, grouped by the stage at which retrieval augmentation is applied: **Fine-tuning**, **Pre-training**, and **Inference**. The year axis runs from 2020 to 2024.

---

## Key Components

### 1. **Vertical Axis (Years)**

- **2020**: GPT-3

- **2023**: ChatGPT

- **2024**: GPT-4

### 2. **Horizontal Axis**

- **Title**: "Retrieval-Augmented Generation"

### 3. **Legend (Bottom Right)**

- **Fine-tuning**: Green

- **Pre-training**: Orange

- **Inference**: Blue

---

## Branch Analysis

### **A. Fine-tuning (Green Branch)**

- **Subcategories**:

  - **UniMS-RAG**

  - CT-RAG

  - BEQ

  - RAST

  - Self-RAG

  - **CoN**

  - EAR

  - Dual-Feedback-ToD

  - MK-ToD

  - **Retrieve-and-Sample**

  - RAG_Robust

  - SANTA

  - RR

  - Self-Mem

  - UPRISER

  - **RAG-e2e**

  - PROMPTAGATOR

  - **Atlas**

  - Zemi

  - R-GQA

### **B. Pre-training (Orange Branch)**

- **Subcategories**:

  - **Raven**

  - CoG

  - **Tiger**

  - Retro++

  - **InstructRetro**

### **C. Inference (Blue Branch)**

- **Subcategories**:

  - **G-Retriever**

  - C-RAG

  - **RAGA**

  - RAPTOR

  - BGM

  - **HyKGE**

  - DRAGON-AI

  - CREA-ICL

  - **Token-Eliminator**

  - IAG

  - FILCO

  - ARM-RAG

  - PRCA

  - **1-PAGER**

  - FABULA

  - ToC

  - **QLM-Doc-ranking**

  - SKR

  - Recomp

  - **KnowledGPT**

  - IR-COT

  - PKG

  - SCM4LLMs

  - **Filter-Reranker**

  - RePLUG

  - **GenRead**

  - RECI-TE
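
The three branch listings above can be captured as a plain mapping from stage to model names, which makes lookups and counts easy to script. The sketch below is only an illustration: the names `RAG_TAXONOMY` and `stage_of` are hypothetical, and the lists simply transcribe the bullets in this section in their listed order.

```python
from typing import Optional

# Hypothetical transcription of the Branch Analysis bullets; not an official dataset.
RAG_TAXONOMY = {
    "Fine-tuning": [
        "UniMS-RAG", "CT-RAG", "BEQ", "RAST", "Self-RAG",
        "CoN", "EAR", "Dual-Feedback-ToD", "MK-ToD",
        "Retrieve-and-Sample", "RAG_Robust", "SANTA", "RR", "Self-Mem", "UPRISER",
        "RAG-e2e", "PROMPTAGATOR",
        "Atlas", "Zemi", "R-GQA",
    ],
    "Pre-training": ["Raven", "CoG", "Tiger", "Retro++", "InstructRetro"],
    "Inference": [
        "G-Retriever", "C-RAG",
        "RAGA", "RAPTOR", "BGM",
        "HyKGE", "DRAGON-AI", "CREA-ICL",
        "Token-Eliminator", "IAG", "FILCO", "ARM-RAG", "PRCA",
        "1-PAGER", "FABULA", "ToC",
        "QLM-Doc-ranking", "SKR", "Recomp",
        "KnowledGPT", "IR-COT", "PKG", "SCM4LLMs",
        "Filter-Reranker", "RePLUG",
        "GenRead", "RECI-TE",
    ],
}


def stage_of(model: str) -> Optional[str]:
    """Return the stage whose branch lists `model`, or None if it is not listed."""
    for stage, models in RAG_TAXONOMY.items():
        if model in models:
            return stage
    return None


if __name__ == "__main__":
    # Print how many models each branch lists, then look one up.
    for stage, models in RAG_TAXONOMY.items():
        print(f"{stage}: {len(models)} models")
    print("Self-RAG ->", stage_of("Self-RAG"))
```

A flat mapping is used here because the section lists models per branch without stating parent/child links between them.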

---

## Cross-Referenced Color Coding

- **Green (Fine-tuning)**: All subcategories under "Fine-tuning" are connected via green lines.

- **Orange (Pre-training)**: All subcategories under "Pre-training" are connected via orange lines.

- **Blue (Inference)**: All subcategories under "Inference" are connected via blue lines.
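
The color rule described here is simple enough to check mechanically if the diagram's edges are ever transcribed. The following is a minimal sketch under that assumption: `LEGEND`, `mismatched_edges`, and the `(model, stage, color)` tuple layout are hypothetical names chosen for illustration, and the sample edges are a small hand-picked subset.

```python
# Stage-to-color mapping as given in the legend.
LEGEND = {
    "Fine-tuning": "green",
    "Pre-training": "orange",
    "Inference": "blue",
}


def mismatched_edges(edges):
    """Return the (model, stage, color) tuples whose color disagrees with LEGEND."""
    return [edge for edge in edges if LEGEND.get(edge[1]) != edge[2]]


if __name__ == "__main__":
    # Hypothetical transcription of three edges from the diagram.
    sample_edges = [
        ("Self-RAG", "Fine-tuning", "green"),
        ("Raven", "Pre-training", "orange"),
        ("RePLUG", "Inference", "blue"),
    ]
    print(mismatched_edges(sample_edges))
```

An empty result means every transcribed edge follows the legend; any returned tuple points at an edge to re-check against the image.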

---

## Key Trends

1. **Temporal Progression**:

   - The year axis is anchored by LLM milestones, from **GPT-3 (2020)** to **GPT-4 (2024)**.

   - New RAG variants emerge in each stage (e.g., UniMS-RAG in 2024).

2. **Stage Specialization**:

   - **Fine-tuning** groups methods that add retrieval while fine-tuning a model (e.g., Dual-Feedback-ToD, Self-RAG).

   - **Pre-training** groups methods that build retrieval into pre-training (e.g., Raven, Tiger).

   - **Inference** groups methods that add retrieval at inference time (e.g., RePLUG, GenRead).

3. **Model Complexity**:

   - Subcategories branch into increasingly specialized variants (e.g., RAG_Robust → SANTA → Self-Mem).

---

## Diagram Flow

1. **Root**: "Retrieval-Augmented Generation" (central trunk).

2. **Branches**:

   - **Green (Fine-tuning)**: Splits into subcategories like UniMS-RAG and Retrieve-and-Sample.

   - **Orange (Pre-training)**: Splits into Raven, Tiger, and InstructRetro.

   - **Blue (Inference)**: Splits into G-Retriever, RAGA, and KnowledGPT.
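
The root-to-branch flow above can be sketched as a nested structure walked depth-first. This is an illustrative outline only: `TREE` and `render` are hypothetical names, and the leaves are limited to the example models named in this section rather than the full diagram.

```python
# Root -> colored branches -> example models, as named in the Diagram Flow list.
TREE = {
    "Retrieval-Augmented Generation": {
        "Fine-tuning (green)": ["UniMS-RAG", "Retrieve-and-Sample"],
        "Pre-training (orange)": ["Raven", "Tiger", "InstructRetro"],
        "Inference (blue)": ["G-Retriever", "RAGA", "KnowledGPT"],
    }
}


def render(node, depth=0):
    """Return the tree as a list of lines, indented two spaces per level."""
    lines = []
    if isinstance(node, dict):
        # Internal node: emit each label, then recurse into its children.
        for label, child in node.items():
            lines.append("  " * depth + label)
            lines.extend(render(child, depth + 1))
    else:
        # Leaf list: emit each model name at the current depth.
        for leaf in node:
            lines.append("  " * depth + leaf)
    return lines


if __name__ == "__main__":
    print("\n".join(render(TREE)))
```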

---

## Critical Notes

- **No Data Table**: The diagram uses branching structures instead of tabular data.

- **Inline Labels**: All model names (e.g., UniMS-RAG, RePLUG) are printed directly on the branches.

- **Legend Accuracy**: Colors strictly align with the legend (green = Fine-tuning, orange = Pre-training, blue = Inference).

---

## Conclusion

This diagram maps the evolution of RAG models across three stages, highlighting specialization and temporal progression. The color-coded branches and year markers provide a clear visual representation of technical advancements from 2020 to 2024.