## Diagram: MemVerse - Model-Agnostic, Plug-and-Play Memory Framework

### Overview

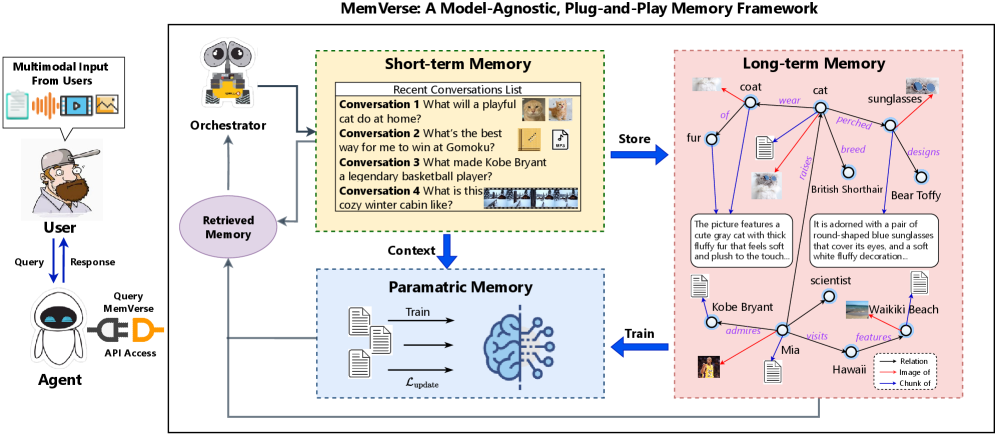

The diagram illustrates a memory framework architecture with three interconnected memory components: **Short-term Memory**, **Parametric Memory**, and **Long-term Memory**. It also shows the user-agent interaction, the memory retrieval path, and the data flow between the memory systems. The framework emphasizes multimodal input handling, memory storage, and knowledge graph construction.

### Components

1. **User Interface**:

   - **User**: Depicted as a cartoon character with a cap and beard.

   - **Agent**: Robot-like figure with a speech bubble showing multimodal inputs (text, audio, video, graphs).

   - **Query/Response Flow**: Blue arrows indicate bidirectional communication between user and agent.

2. **Short-term Memory**:

   - **Recent Conversations List**: Contains four entries with text and icons:

     1. "What will a playful cat do at home?" (cat icons)

     2. "What's the best way for me to win at Gomoku?" (notebook and file icons)

     3. "What made Kobe Bryant a legendary basketball player?" (basketball icon)

     4. "What is this cozy winter cabin like?" (cabin image)

   - **Orchestrator**: Robot figure connecting user queries to memory systems.

3. **Parametric Memory**:

   - **Training Process**: Labeled "Train" with a brain icon and loss function notation (`L_update`).

   - **Context**: Blue arrow from Short-term Memory to Parametric Memory.

4. **Long-term Memory**:

   - **Knowledge Graph**: Network of nodes (entities) and edges (relationships):

     - **Nodes**: "cat," "Kobe Bryant," "Mia," "Hawaii," "Waikiki Beach," "British Shorthair," "Bear Toffy," "scientist."

     - **Edges**: Colored lines with labels:

       - **Red**: "Image of" (e.g., cat → image)

       - **Blue**: "Chunk of" (e.g., Waikiki Beach → description)

       - **Purple**: "Relation" (e.g., Kobe Bryant → "admires" → Mia)

     - **Legend**: Located at bottom-right, explaining edge colors.

5. **Memory Flow**:

   - **Store**: Blue arrow from Short-term to Long-term Memory.

   - **Train**: Blue arrow from Parametric to Long-term Memory.

   - **Retrieved Memory**: Purple oval connecting to Short-term Memory.
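
The long-term knowledge graph described above can be pictured as a set of entity nodes joined by typed, directed edges. The following is a minimal sketch only, not the framework's implementation: the names `EdgeType`, `Edge`, and `KnowledgeGraph`, and the placeholder targets `cat_image` and `waikiki_description`, are assumptions introduced for illustration. Only the three edge types and the example triples come from the diagram.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class EdgeType(Enum):
    # The three edge colors named in the diagram's legend.
    IMAGE_OF = "Image of"   # red
    CHUNK_OF = "Chunk of"   # blue
    RELATION = "Relation"   # purple


@dataclass(frozen=True)
class Edge:
    source: str
    target: str
    edge_type: EdgeType
    label: str = ""  # free-text label, e.g. "admires" on a RELATION edge


class KnowledgeGraph:
    """Hypothetical toy long-term memory: entity nodes plus typed, directed edges."""

    def __init__(self):
        self.nodes = set()
        self._out = defaultdict(list)  # node -> outgoing edges

    def add_edge(self, source, target, edge_type, label=""):
        self.nodes.update((source, target))
        self._out[source].append(Edge(source, target, edge_type, label))

    def neighbors(self, node, edge_type=None):
        """Return outgoing edges of `node`, optionally filtered by edge type."""
        return [
            e for e in self._out.get(node, [])
            if edge_type is None or e.edge_type == edge_type
        ]


graph = KnowledgeGraph()
# Edge direction follows the examples listed above; the attachment
# names on the right-hand side are placeholders, not from the diagram.
graph.add_edge("cat", "cat_image", EdgeType.IMAGE_OF)
graph.add_edge("Waikiki Beach", "waikiki_description", EdgeType.CHUNK_OF)
graph.add_edge("Kobe Bryant", "Mia", EdgeType.RELATION, label="admires")

relations = graph.neighbors("Kobe Bryant", EdgeType.RELATION)
```

Keeping the edge type separate from the free-text label mirrors the legend: the color tells a reader what kind of link it is, while the label carries the specific relation.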

### Detailed Analysis

- **Short-term Memory**: Contains transient conversation data with multimodal elements (text + icons/images).

- **Parametric Memory**: Acts as a training layer for model updates, driven by a loss function (`L_update`).

- **Long-term Memory**: Structured as a knowledge graph with explicit entity-relationship mappings.

- **Agent-User Interaction**: Multimodal inputs (text, audio, video, graphs) are processed by the agent via an API.

### Key Observations

1. **Multimodal Integration**: The system handles diverse input types (text, audio, video, graphs), and the short-term entries pair text with icons or images.

2. **Memory Hierarchy**:

   - Short-term: Recent interactions.

   - Parametric: Training for model adaptation.

   - Long-term: Persistent knowledge graph.

3. **Knowledge Graph Complexity**: Nodes represent entities (people, places, objects), while edges encode relationships (ownership, admiration, location).

4. **Cyclical Flow**: Long-term memory feeds back into short-term memory for context-aware responses.
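
The cyclical flow noted above (short-term entries are stored in long-term memory, and retrieved memory returns to the short-term context) can be sketched as follows. This is an illustration under stated assumptions, not the framework's logic: the class `ShortTermMemory`, the function `build_context`, the evict-oldest policy, and the word-overlap retrieval are all hypothetical choices. The diagram shows only the "Store" and "Retrieved Memory" arrows.

```python
from collections import deque


class ShortTermMemory:
    """Hypothetical bounded buffer of recent turns."""

    def __init__(self, capacity=4):
        self.capacity = capacity
        self.turns = deque()

    def add(self, turn):
        """Append a turn; return the evicted oldest turn, or None."""
        self.turns.append(turn)
        if len(self.turns) > self.capacity:
            return self.turns.popleft()
        return None


def build_context(query, stm, long_term):
    """Prepend long-term entries that share a word with the query (toy retrieval)."""
    query_words = set(query.lower().split())
    retrieved = [m for m in long_term if query_words & set(m.lower().split())]
    return retrieved + list(stm.turns)


long_term = []  # stand-in for the knowledge graph
stm = ShortTermMemory(capacity=2)
for turn in ["cat question", "Gomoku question", "Kobe Bryant question"]:
    evicted = stm.add(turn)
    if evicted is not None:
        long_term.append(evicted)  # assumed trigger for the "Store" arrow

context = build_context("tell me about my cat", stm, long_term)
```

Here the oldest turn moves to long-term storage once the buffer is full and is pulled back in when a later query mentions the same topic, which is one simple way to read the feedback loop.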

### Interpretation

The framework demonstrates a **multi-stage memory pipeline**:

1. User queries trigger retrieval from short-term memory.

2. Contextual data is processed in parametric memory for model updates.

3. Long-term memory provides structured knowledge to enrich responses.

4. The agent synthesizes information across memory layers to generate multimodal responses.
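
The four steps above can be sketched as a single orchestration function. This is a hedged illustration, not the framework's API: the `Orchestrator` class, its fields, the three-turn window, and the substring entity lookup are assumptions. The parametric step is reduced to queuing context for a later update, since the diagram gives no detail about `L_update` beyond its name.

```python
from dataclasses import dataclass, field


@dataclass
class Orchestrator:
    """Hypothetical coordinator over the three memory layers."""

    short_term: list = field(default_factory=list)    # recent queries
    long_term: dict = field(default_factory=dict)     # entity -> stored fact
    update_queue: list = field(default_factory=list)  # context awaiting a parametric update

    def answer(self, query):
        # Step 1: pull the most recent turns from short-term memory.
        recent = self.short_term[-3:]
        # Step 2: queue the context for a later parametric update
        # (the training step itself is out of scope for this sketch).
        self.update_queue.append((query, tuple(recent)))
        # Step 3: look up long-term facts for entities named in the query.
        facts = [
            fact for entity, fact in self.long_term.items()
            if entity.lower() in query.lower()
        ]
        # Step 4: synthesize. A real agent would call a model here;
        # this sketch just bundles the gathered material.
        response = {"query": query, "recent": recent, "facts": facts}
        self.short_term.append(query)
        return response


orch = Orchestrator(long_term={"Kobe Bryant": "Kobe Bryant admires Mia"})
first = orch.answer("What made Kobe Bryant a legendary basketball player?")
second = orch.answer("What is this cozy winter cabin like?")
```

In this run the first response carries the stored Kobe Bryant fact and no recent turns, while the second carries the first query as recent context and no long-term facts.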

Notable design choices:

- **Color Coding**: Red/blue/purple edges in the knowledge graph enable quick relationship identification.

- **Cyclical Architecture**: Short-term memory writes to long-term memory, and retrieved memory flows back into short-term memory, enabling dynamic knowledge integration.

- **Orchestrator as Intermediary**: The orchestrator sits between the user-agent exchange and the memory systems, so the conversation does not touch the memory stores directly.

This architecture suggests a **cognitive computing system** capable of handling both transient interactions and persistent knowledge, with explicit mechanisms for model adaptation (`L_update`) and multimodal data fusion.