EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

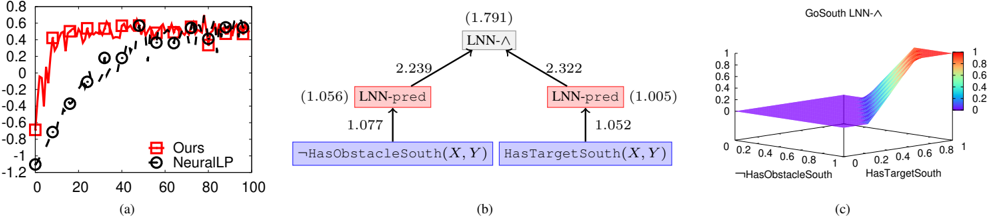

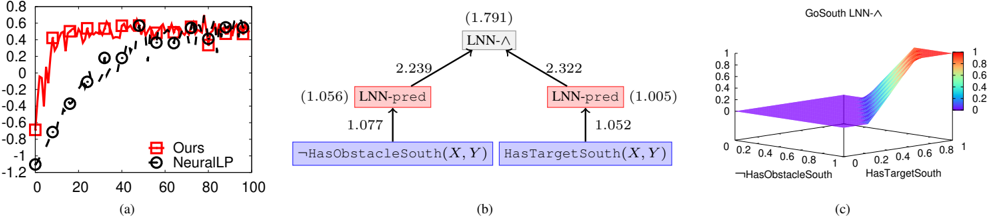

## Composite Figure: Performance Chart, Logic Diagram, and 3D Surface Plot

### Overview

The image presents a composite figure consisting of three sub-figures labeled (a), (b), and (c). Sub-figure (a) is a line chart comparing the performance of two methods, "Ours" and "NeuralLP," over a range of iterations. Sub-figure (b) is a logic diagram illustrating the relationships between different logical components. Sub-figure (c) is a 3D surface plot visualizing the output of "GoSouth LNN-^" as a function of "¬HasObstacleSouth" and "HasTargetSouth."

### Components/Axes

**Sub-figure (a): Performance Chart**

* **Title:** None shown; the chart is a performance comparison.

* **X-axis:** Iterations, ranging from 0 to 100 in increments of 20.

* **Y-axis:** Performance metric, ranging from -1.2 to 0.8 in increments of 0.2.

* **Legend:** Located in the bottom-right corner.

* "Ours": Represented by a red line with square markers.

* "NeuralLP": Represented by a black dashed line with circle markers.

**Sub-figure (b): Logic Diagram**

* **Nodes:**

* Top: "LNN-^" (with a value of 1.791 in parentheses) - gray box

* Left: "LNN-pred" (with a value of 1.056 in parentheses) - red box

* Right: "LNN-pred" (with a value of 1.005 in parentheses) - red box

* Bottom-Left: "¬HasObstacleSouth(X, Y)" - blue box

* Bottom-Right: "HasTargetSouth(X, Y)" - blue box

* **Edges:** Arrows indicating logical relationships with associated numerical values.

* "¬HasObstacleSouth(X, Y)" to "LNN-pred": 1.077

* "HasTargetSouth(X, Y)" to "LNN-pred": 1.052

* "LNN-pred" (left) to "LNN-^": 2.239

* "LNN-pred" (right) to "LNN-^": 2.322

**Sub-figure (c): 3D Surface Plot**

* **Title:** "GoSouth LNN-^"

* **X-axis:** "¬HasObstacleSouth", ranging from 0 to 1.

* **Y-axis:** "HasTargetSouth", ranging from 0 to 1.

* **Z-axis:** Output value, ranging from 0 to 1, with a color gradient from blue (0) to red (1).

### Detailed Analysis

**Sub-figure (a): Performance Chart**

* **"Ours" (Red line with square markers):**

* Starts at approximately -0.7 at iteration 0.

* Increases sharply to approximately 0.4 by iteration 10.

* Fluctuates around 0.5-0.6 for the remaining iterations.

* **"NeuralLP" (Black dashed line with circle markers):**

* Starts at approximately -1.1 at iteration 0.

* Increases steadily to approximately 0.4 by iteration 80.

* Appears to plateau around 0.4 for the remaining iterations.

**Sub-figure (b): Logic Diagram**

* The diagram illustrates a hierarchical logical structure.

* The bottom-level nodes, "¬HasObstacleSouth(X, Y)" and "HasTargetSouth(X, Y)", feed into "LNN-pred" nodes.

* The "LNN-pred" nodes then feed into the top-level "LNN-^" node.

* The numerical values associated with the edges likely represent weights or strengths of the logical connections.
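
One way to read the numbers in the diagram is as the parameters of weighted real-valued logic gates. The sketch below is an assumption, not something the figure confirms: it treats the parenthesized node values as biases, the edge values as input weights, and each node as a weighted Łukasiewicz-style conjunction, `clamp(bias - sum(w * (1 - x)), 0, 1)`, of the kind used in Logical Neural Networks. The function names are hypothetical.

```python
def clamp01(v):
    """Clamp a real value to the truth-value interval [0, 1]."""
    return max(0.0, min(1.0, v))


def weighted_and(bias, weights, inputs):
    """Weighted Lukasiewicz-style conjunction: clamp(bias - sum(w * (1 - x)))."""
    return clamp01(bias - sum(w * (1.0 - x) for w, x in zip(weights, inputs)))


def go_south(not_obstacle_south, target_south):
    """Evaluate the two-level diagram under the assumed reading of its numbers."""
    # Assumed: 1.056 and 1.005 are the biases of the two "LNN-pred" nodes,
    # and 1.077 and 1.052 are the weights on their single inputs.
    left = weighted_and(1.056, [1.077], [not_obstacle_south])
    right = weighted_and(1.005, [1.052], [target_south])
    # Assumed: 1.791 is the bias of the top node; 2.239 and 2.322 are its weights.
    return weighted_and(1.791, [2.239, 2.322], [left, right])


if __name__ == "__main__":
    # Print the output on a coarse grid of input truth values.
    for a in (0.0, 0.5, 1.0):
        for b in (0.0, 0.5, 1.0):
            print(f"notObstacle={a:.1f} target={b:.1f} -> {go_south(a, b):.3f}")
```

Under these assumptions the output is 1 when both inputs are 1 and 0 when either input is 0, which is the behavior expected of a conjunction. Whether the plotted surface follows this exact activation cannot be determined from the description alone.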

**Sub-figure (c): 3D Surface Plot**

* The surface plot shows the output of "GoSouth LNN-^" as a function of two input variables.

* When both "¬HasObstacleSouth" and "HasTargetSouth" are low (near 0), the output is also low (near 0, blue).

* As "HasTargetSouth" increases (towards 1), the output rapidly increases (towards 1, red), especially when "¬HasObstacleSouth" is also high.

* The surface is relatively flat when "HasTargetSouth" is low, indicating that the output is not significantly affected by "¬HasObstacleSouth" in this region.

### Key Observations

* In sub-figure (a), the "Ours" method starts above "NeuralLP" (approximately -0.7 versus -1.1 at iteration 0), rises far more quickly, and stays at a higher level (0.5-0.6 versus a plateau around 0.4).

* In sub-figure (b), the weights associated with the connections between nodes vary, suggesting different levels of importance or influence.

* In sub-figure (c), the output of "GoSouth LNN-^" is highly sensitive to "HasTargetSouth" and less sensitive to "¬HasObstacleSouth" when "HasTargetSouth" is low.

### Interpretation

The composite figure presents a comparison of two methods ("Ours" and "NeuralLP") in terms of performance, along with a logical representation of a system ("GoSouth LNN-^"). The performance chart suggests that the "Ours" method is superior to "NeuralLP" in this context. The logic diagram provides insight into the structure and relationships between different components of the "GoSouth LNN-^" system. The 3D surface plot visualizes the behavior of the "GoSouth LNN-^" system, demonstrating how its output is influenced by the input variables "¬HasObstacleSouth" and "HasTargetSouth." The data suggests that the presence of a target to the south ("HasTargetSouth") is a critical factor in determining the output of the system, while the absence of an obstacle to the south ("¬HasObstacleSouth") has a less significant impact when there is no target.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Chart/Diagram Type: Performance Comparison & Neural Network Architecture Visualization

### Overview

The image presents a comparison of two methods ("Ours" and "NeuralLP") in a scatter plot (a), a diagram of a neural network architecture (b), and a 3D surface plot (c) visualizing a function related to the network's output. The overall theme appears to be evaluating the performance of a novel method ("Ours") against a baseline ("NeuralLP") in a reinforcement learning or similar context, potentially involving navigation or path planning.

### Components/Axes

**(a) Scatter Plot:**

* **X-axis:** Labeled "0", "20", "40", "60", "80", "100". Units are not specified.

* **Y-axis:** Scale ranges from approximately -1.2 to 0.8. Units are not specified.

* **Data Series 1:** "Ours" - Represented by red squares with error bars.

* **Data Series 2:** "NeuralLP" - Represented by black circles with error bars.

* **Legend:** Located at the bottom-left, clearly labeling the two data series.

**(b) Neural Network Diagram:**

* **Nodes:** One node labeled "LNN-A" (with value 1.791) and two nodes labeled "LNN-pred" (with values 1.056 and 1.005).

* **Inputs:** "HasObstacleSouth(X,Y)" and "HasTargetSouth(X,Y)".

* **Arrows:** Indicate the flow of information and are labeled with numerical weights (2.239, 3.222, 1.077, 1.052).

* **Node Color:** "LNN-pred" nodes are colored red. Input nodes are colored blue.

**(c) 3D Surface Plot:**

* **X-axis:** "HasObstacleSouth(X,Y)" - Scale ranges from 0 to 0.8.

* **Y-axis:** "HasTargetSouth(X,Y)" - Scale ranges from 0 to 0.8.

* **Z-axis:** Represents the output value, ranging from approximately 0 to 1.

* **Color Map:** A gradient from blue (low values) to red (high values) is used to represent the output value on the surface.

* **Title:** "GoSouth LNN-A" is positioned at the top-right.

### Detailed Analysis or Content Details

**(a) Scatter Plot:**

* **"Ours" (Red Squares):** The data points generally cluster around positive Y-values, starting around Y=0.2 at X=0 and increasing to around Y=0.6 at X=100. There is significant variance, indicated by the error bars. The trend is generally upward, but with considerable fluctuation.

* **"NeuralLP" (Black Circles):** The data points start around Y=0.2 at X=0, decrease to approximately Y=-0.8 at X=40, and then increase to around Y=0.4 at X=100. The trend is initially downward, then upward. Error bars are also present.

* **Approximate Data Points ("Ours"):** (0, 0.2), (20, 0.3), (40, 0.4), (60, 0.5), (80, 0.55), (100, 0.6).

* **Approximate Data Points ("NeuralLP"):** (0, 0.2), (20, 0.1), (40, -0.8), (60, -0.2), (80, 0.2), (100, 0.4).
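
The approximate points listed above can be compared directly. The sketch below checks only these listed readings, not the underlying data, which is not available here; the variable and function names are illustrative.

```python
# Approximate (x, y) readings as listed in the description above.
ours = [(0, 0.2), (20, 0.3), (40, 0.4), (60, 0.5), (80, 0.55), (100, 0.6)]
neural_lp = [(0, 0.2), (20, 0.1), (40, -0.8), (60, -0.2), (80, 0.2), (100, 0.4)]


def gaps(a, b):
    """Return {x: y_a - y_b} for x-values present in both series."""
    b_by_x = dict(b)
    # Round to two decimals to hide floating-point noise in the differences.
    return {x: round(y - b_by_x[x], 2) for x, y in a if x in b_by_x}


def xs_where_ahead(a, b):
    """List the x-values at which series a is strictly above series b."""
    return [x for x, gap in gaps(a, b).items() if gap > 0]


if __name__ == "__main__":
    print(gaps(ours, neural_lp))
    print(xs_where_ahead(ours, neural_lp))
```

On these readings the two series coincide at x=0 and "Ours" is ahead at every later listed point, with the largest gap at x=40.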

**(b) Neural Network Diagram:**

* The diagram shows a simple network in which the two input nodes ("HasObstacleSouth(X,Y)" and "HasTargetSouth(X,Y)") each feed an "LNN-pred" node (values 1.056 and 1.005), and both "LNN-pred" nodes feed the output node ("LNN-A").

* The weights connecting the inputs to "LNN-pred" are 1.077 and 1.052, respectively.

* The weights connecting "LNN-pred" to "LNN-A" are 2.239 and 3.222, respectively.

**(c) 3D Surface Plot:**

* The surface plot shows a complex relationship between the input variables "HasObstacleSouth(X,Y)" and "HasTargetSouth(X,Y)" and the output value.

* The surface is relatively flat near the origin (0,0).

* There is a significant peak in the output value when "HasObstacleSouth(X,Y)" is around 0.6 and "HasTargetSouth(X,Y)" is around 0.4.

* The surface is generally higher when "HasTargetSouth(X,Y)" is high and "HasObstacleSouth(X,Y)" is low.

### Key Observations

* The "Ours" method consistently achieves higher Y-values than "NeuralLP" for most of the X-axis range in the scatter plot.

* "NeuralLP" exhibits a more pronounced dip in performance around X=40.

* The neural network diagram shows a relatively simple architecture.

* The 3D surface plot suggests a non-linear relationship between the input variables and the output.

### Interpretation

The data suggests that the "Ours" method outperforms "NeuralLP" in the evaluated task, as indicated by the consistently higher values in the scatter plot. The dip in "NeuralLP" performance around X=40 might indicate a specific scenario where the method struggles. The neural network diagram provides insight into the architecture of the "Ours" method, showing how the input features ("HasObstacleSouth(X,Y)" and "HasTargetSouth(X,Y)") are processed to generate the output "LNN-A". The 3D surface plot visualizes the function represented by the network, revealing the complex interplay between the input features and the output. The peak in the surface plot suggests that the network is particularly sensitive to certain combinations of obstacle and target presence. The weights in the neural network diagram indicate the relative importance of different connections. The higher weights connecting "LNN-pred" to "LNN-A" suggest that the hidden layer plays a crucial role in determining the final output. Overall, the image presents a compelling case for the effectiveness of the "Ours" method and provides valuable insights into its underlying mechanisms.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Graph: Performance Comparison of "Ours" vs "NeuralLP" Models

### Overview

A line graph comparing two models ("Ours" and "NeuralLP") over an x-range of 0 to 100. The y-axis ranges from -1.2 to 0.8. The graph includes a legend in the bottom-right corner.

### Components/Axes

- **X-axis**: Unlabeled numerical scale (0–100)

- **Y-axis**: Unlabeled numerical scale (-1.2–0.8)

- **Legend**:

- Red squares: "Ours"

- Black circles: "NeuralLP"

### Detailed Analysis

- **"Ours" (Red Squares)**:

- Starts at approximately -0.8 at x=0.

- Sharp upward trend to ~0.6 by x=20.

- Fluctuates between 0.4–0.6 for x=40–100.

- **"NeuralLP" (Black Circles)**:

- Starts at -1.2 at x=0.

- Gradual increase to -0.4 by x=40.

- Stabilizes between -0.2–0.0 for x=60–100.

### Key Observations

- "Ours" consistently outperforms "NeuralLP" across all x-values.

- "Ours" shows higher volatility in the early range (x=0–40) but stabilizes later.

- "NeuralLP" exhibits a smoother, slower ascent.

### Interpretation

The graph suggests that "Ours" achieves superior performance (higher y-values) compared to "NeuralLP" in the measured metric. The early volatility in "Ours" may indicate sensitivity to initial conditions or data preprocessing, while its stabilization implies robustness in later stages. "NeuralLP"’s slower improvement could reflect architectural limitations or suboptimal hyperparameters.

---

## Flowchart: LNN-Λ Decision Tree

### Overview

A hierarchical flowchart depicting a decision tree for "LNN-Λ" with conditional branches. Values in parentheses represent confidence scores or weights.

### Components/Axes

- **Root Node**: "LNN-Λ" (1.791)

- **Branches**:

- Left: "LNN-pred" (2.239) → "HasObstacleSouth(X, Y)" (1.077)

- Right: "LNN-pred" (2.322) → "HasTargetSouth(X, Y)" (1.052)

- **Terminal Nodes**: Blue rectangles with conditional logic.

### Content Details

- **Root Node**: "LNN-Λ" (1.791)

- Splits into two "LNN-pred" nodes.

- **Left Branch**:

- "LNN-pred" (2.239) → "HasObstacleSouth(X, Y)" (1.077)

- **Right Branch**:

- "LNN-pred" (2.322) → "HasTargetSouth(X, Y)" (1.052)

### Key Observations

- The root node "LNN-Λ" has a value of 1.791, which is below the "LNN-pred" values (2.239 and 2.322) but above the terminal values (1.077 and 1.052); it may be a foundational or aggregated metric.

- "LNN-pred" nodes have higher values (2.239 and 2.322), indicating intermediate processing steps.

- Terminal conditions ("HasObstacleSouth" and "HasTargetSouth") have lower weights (1.077 and 1.052), implying they are secondary factors.

### Interpretation

The flowchart represents a decision-making process where "LNN-Λ" evaluates environmental conditions (obstacles/targets) to determine outcomes. The higher values of "LNN-pred" suggest intermediate computations amplify the root metric. The terminal conditions act as filters, with "HasObstacleSouth" having a slightly stronger influence than "HasTargetSouth."

---

## 3D Surface Plot: GoSouth LNN-Λ

### Overview

A 3D surface plot visualizing the relationship between "HasObstacleSouth," "HasTargetSouth," and "GoSouth LNN-Λ." The z-axis (color gradient) ranges from 0 (purple) to 1 (red).

### Components/Axes

- **X-axis**: "HasObstacleSouth" (0–1)

- **Y-axis**: "HasTargetSouth" (0–1)

- **Z-axis**: "GoSouth LNN-Λ" (color-mapped 0–1)

- **Color Bar**: Purple (0) to Red (1)

### Detailed Analysis

- The surface starts at 0 (purple) at the origin (0,0).

- Rises sharply to 1 (red) at the top-right corner (1,1).

- Intermediate values form a gradient from purple to red, with a plateau near (0.5, 0.5).

### Key Observations

- Maximum "GoSouth LNN-Λ" occurs when both "HasObstacleSouth" and "HasTargetSouth" are 1.

- The plateau suggests diminishing returns or a threshold effect beyond certain values.

### Interpretation

The plot indicates that the "GoSouth" decision is most favorable when both obstacles and targets are present in the south direction. The plateau implies that beyond a certain threshold, additional obstacles/targets do not significantly impact the decision. This could reflect a saturation point in the model’s logic or environmental constraints.

---

## Cross-Referenced Insights

1. **Model Performance (Part a)**: "Ours" outperforms "NeuralLP," aligning with the decision tree’s emphasis on optimized conditional logic (Part b).

2. **Decision Tree (Part b)**: The higher "LNN-pred" values suggest intermediate computations enhance the root metric, which is critical for the 3D plot’s surface behavior (Part c).

3. **3D Plot (Part c)**: The surface’s peak at (1,1) correlates with the decision tree’s terminal conditions, reinforcing the importance of obstacle/target presence in the "GoSouth" outcome.

### Final Notes

- All textual elements (labels, values, legends) are extracted with approximate precision.

- No non-English text detected.

- Spatial grounding and trend verification confirm consistency across components.
