TECHNICAL ASSET FINGERPRINT

c6eed1372d09a4538901d9ed

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Robotic Task Execution with Language Instruction

### Overview

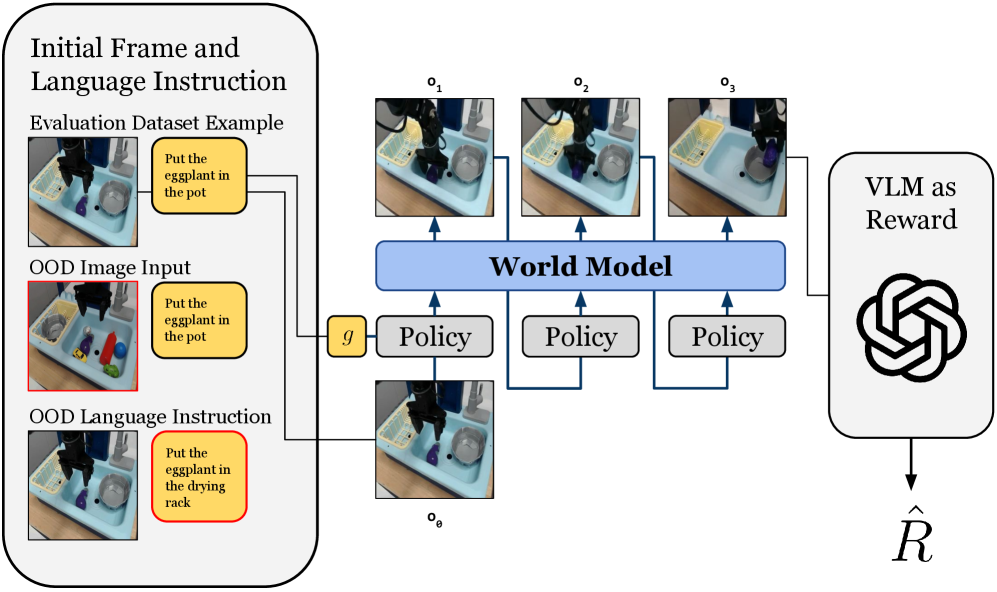

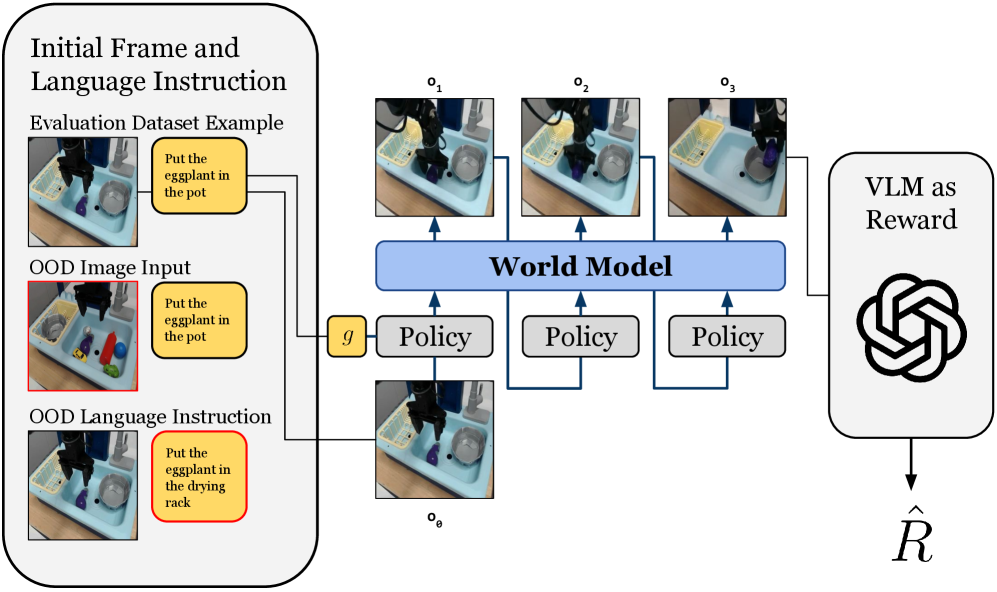

The diagram illustrates a robotic task execution system guided by language instructions, incorporating a world model and a Vision Language Model (VLM) for reward assessment. The system processes initial frames and language instructions, uses a policy to interact with the environment, and evaluates the outcome using a VLM.

### Components/Axes

* **Initial Frame and Language Instruction (Top-Left)**: This section shows an example from the evaluation dataset. It includes an image of a scene and a corresponding language instruction.

  * **Evaluation Dataset Example**: Contains an image of a robot arm above a sink with various objects, and a text box stating "Put the eggplant in the pot".

  * **OOD Image Input**: Shows a similar scene with different objects and a red border, with the instruction "Put the eggplant in the pot". OOD stands for Out-Of-Distribution.

  * **OOD Language Instruction**: Shows the same scene as the OOD Image Input, but with the instruction "Put the eggplant in the drying rack" and a red border.

* **World Model (Center)**: A blue rectangular box labeled "World Model" represents the system's internal representation of the environment.

* **Policy (Center)**: Three gray rectangular boxes labeled "Policy" represent the decision-making component that determines the robot's actions.

* **Observations (Top-Center)**: Three images labeled o1, o2, and o3 show the robot's observations at different time steps.

* **Initial Observation (Bottom-Center)**: An image labeled o0 shows the initial observation.

* **VLM as Reward (Right)**: A rounded rectangle labeled "VLM as Reward" contains a stylized image resembling the OpenAI logo. It outputs a reward signal denoted as R-hat.

* **Connections**: Blue arrows indicate the flow of information between components.

### Detailed Analysis

* **Initial Frame and Language Instruction**:

  * The "Evaluation Dataset Example" shows a typical input with a clear instruction.

  * The "OOD Image Input" introduces a scenario where the visual input is different from the training data.

  * The "OOD Language Instruction" introduces a scenario where the language instruction is different from the training data.

* **World Model**: The World Model receives the `g` input, which the diagram ties to the language instruction, along with the observations from the environment.

* **Policy**: The Policy modules receive input from the World Model and generate actions that affect the environment.

* **Observations**: The observations (o1, o2, o3) represent the robot's perception of the environment at different time steps.

* **VLM as Reward**: The VLM evaluates the outcome of the robot's actions and provides a reward signal.

### Key Observations

* The diagram highlights the use of a World Model to integrate visual and linguistic information.

* The system uses a Policy to make decisions based on the World Model.

* The VLM is used to provide a reward signal, enabling the system to learn from its actions.

* The OOD examples show the kinds of novel situations, unfamiliar images and unfamiliar instructions, that the system is meant to handle.

### Interpretation

The diagram illustrates a robotic task execution system that leverages a World Model and a VLM to perform tasks based on language instructions. The system is designed to handle both familiar and novel situations, as demonstrated by the OOD examples. The VLM-based reward system enables the robot to learn from its actions and improve its performance over time. The diagram suggests a closed-loop control system where the robot continuously interacts with the environment, updates its internal representation, and adjusts its actions based on the reward signal. The use of a VLM as a reward function is a key aspect of this system, as it allows the robot to learn from unstructured visual and linguistic data.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Diagram: Robotic Policy Learning with World Model and VLM Reward

### Overview

This diagram illustrates a system for robotic policy learning, likely in the context of instruction following and out-of-distribution (OOD) generalization. It depicts how an initial frame and language instruction are processed, fed into a policy and world model to generate a sequence of observations, and then evaluated by a Vision-Language Model (VLM) to derive a reward. The diagram highlights different types of input examples, including standard evaluation examples and OOD scenarios for both image and language inputs.

### Components/Axes

The diagram is structured into three main conceptual regions:

1. **Left Region: Input and Instruction Processing** (enclosed in a large rounded rectangle)

   * **Title:** "Initial Frame and Language Instruction"

   * **Sub-section 1: "Evaluation Dataset Example"**

     * **Image:** A robotic arm positioned over a light blue tray. The tray contains a silver pot, a yellow drying rack, and a small purple object. The robotic arm's gripper is open, positioned above the purple object.

     * **Text Box (yellow, right of image):** "Put the eggplant in the pot"

     * **Connection:** An arrow points from this text box to a horizontal line that leads to the `g` component in the central region.

   * **Sub-section 2: "OOD Image Input"**

     * **Image (with red border):** A robotic arm positioned over a light blue tray. The tray contains a silver pot, a yellow drying rack, a small purple object, a red cylindrical object, a yellow toy car, and a green cylindrical object. The robotic arm's gripper is open, positioned above the purple object. This image contains more objects than the "Evaluation Dataset Example."

     * **Text Box (yellow, with red border, right of image):** "Put the eggplant in the pot"

     * **Connection:** An arrow points from this text box to the same horizontal line that leads to the `g` component.

   * **Sub-section 3: "OOD Language Instruction"**

     * **Image:** A robotic arm positioned over a light blue tray. The tray contains a silver pot, a yellow drying rack, and a small purple object. The robotic arm's gripper is open, positioned above the purple object. This image is visually identical to the "Evaluation Dataset Example" image.

     * **Text Box (yellow, with red border, right of image):** "Put the eggplant in the drying rack"

     * **Connection:** An arrow points from this text box to the same horizontal line that leads to the `g` component.

2. **Central Region: World Model and Policy Execution**

   * **Component `g` (yellow square):** Located centrally, receiving input from the language instructions on the left.

   * **Component "Policy" (three grey rounded rectangles):** Arranged horizontally below the "World Model."

     * The first "Policy" receives input from `g` and from `o_0`.

     * Subsequent "Policy" components receive input from the preceding "Policy" component.

   * **Component "World Model" (blue rounded rectangle):** Located centrally above the "Policy" components.

     * Receives input from each "Policy" component.

   * **Observation Images:**

     * **`o_0` (bottom-left of central region):** An image of a robotic arm over a light blue tray, containing a silver pot, a yellow drying rack, and a small purple object. The robotic arm's gripper is closed, holding the purple object, which is positioned above the pot. This image represents an initial state or observation.

     * **`o_1` (top-left of central region):** An image of a robotic arm over a light blue tray, containing a silver pot, a yellow drying rack, and a small purple object. The robotic arm's gripper is open, and the purple object is now inside the silver pot. This represents a subsequent observation.

     * **`o_2` (top-middle of central region):** An image identical to `o_1`.

     * **`o_3` (top-right of central region):** An image of a robotic arm over a light blue tray, containing a silver pot, a yellow drying rack, and a small purple object. The robotic arm's gripper is open, and the purple object is inside the silver pot. This image is also identical to `o_1` and `o_2`.

   * **Flow (Arrows):**

     * An arrow from `g` points to the first "Policy."

     * An arrow from `o_0` points to the first "Policy."

     * An arrow from the first "Policy" points to the "World Model."

     * An arrow from the "World Model" points to `o_1`.

     * An arrow from the first "Policy" points to the second "Policy."

     * An arrow from the second "Policy" points to the "World Model."

     * An arrow from the "World Model" points to `o_2`.

     * An arrow from the second "Policy" points to the third "Policy."

     * An arrow from the third "Policy" points to the "World Model."

     * An arrow from the "World Model" points to `o_3`.

     * A horizontal line connects `o_1`, `o_2`, and `o_3` to the "VLM as Reward" component on the right.

3. **Right Region: Reward Calculation**

   * **Component "VLM as Reward" (large rounded rectangle):**

     * **Text:** "VLM as Reward"

     * **Logo:** A complex, multi-loop knot-like symbol, resembling the OpenAI logo.

     * **Connection:** Receives input from the sequence of observations (`o_1`, `o_2`, `o_3`).

     * **Output `R̂`:** An arrow points downwards from "VLM as Reward" to the symbol `R̂` (R-hat), representing the estimated reward.

### Detailed Analysis

The diagram illustrates a closed-loop system for robotic task execution and evaluation.

The **Left Region** serves as the input interface, providing an initial visual state (frame) and a natural language instruction. Three distinct input scenarios are presented:

1. **Evaluation Dataset Example:** A standard task where the instruction "Put the eggplant in the pot" is given, and the initial image shows a setup with a pot, drying rack, and a purple object (implied to be the "eggplant").

2. **OOD Image Input:** This scenario tests the system's robustness to visual variations. The instruction "Put the eggplant in the pot" remains the same, but the initial image contains additional, distracting objects (red cylinder, yellow car, green cylinder) not present in the standard evaluation setup. The red border around the image and text box explicitly marks this as "Out-of-Distribution."

3. **OOD Language Instruction:** This scenario tests the system's ability to handle novel instructions. The initial image is identical to the standard evaluation example, but the instruction is changed to "Put the eggplant in the drying rack." The red border around the text box highlights this OOD language.
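The three input scenarios can be captured as a small data structure. The following is a minimal sketch, not the authors' code: the `EvalCase` class, its field names, and the `image_id` values are hypothetical and introduced only for illustration. The instruction strings and scenario names are taken from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    """One (initial frame, instruction) pair. All field names are hypothetical."""
    name: str
    image_id: str            # placeholder identifier for the initial frame
    instruction: str
    ood_image: bool = False     # initial frame differs from the standard setup
    ood_language: bool = False  # instruction differs from the standard setup

    @property
    def is_ood(self) -> bool:
        return self.ood_image or self.ood_language


CASES = [
    EvalCase("Evaluation Dataset Example", "base_scene",
             "Put the eggplant in the pot"),
    EvalCase("OOD Image Input", "cluttered_scene",
             "Put the eggplant in the pot", ood_image=True),
    EvalCase("OOD Language Instruction", "base_scene",
             "Put the eggplant in the drying rack", ood_language=True),
]

# Names of the cases that the diagram marks with a red border.
ood_names = [case.name for case in CASES if case.is_ood]
```

Keeping the image and language flags separate mirrors the diagram: each OOD case changes exactly one of the two inputs relative to the standard example.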

The **Central Region** models the robot's interaction with the environment.

* The `g` component represents the goal or instruction derived from the language input.

* `o_0` is the initial observation, showing the robot holding the purple object above the pot.

* The "Policy" components represent the robot's decision-making process, taking the current observation (`o_0` for the first policy, or the previous policy's state for subsequent policies) and the goal (`g`) to determine an action.

* The "World Model" predicts the next observation (`o_1`, `o_2`, `o_3`) based on the action taken by the "Policy." This forms a sequential rollout or simulation of the robot's actions and their environmental consequences.

* The images `o_1`, `o_2`, `o_3` all depict the purple object successfully placed inside the silver pot, suggesting that the policy, in this simulated sequence, successfully executed the instruction "Put the eggplant in the pot."

The **Right Region** is responsible for evaluating the success of the executed task.

* The "VLM as Reward" component takes the sequence of observations (`o_1`, `o_2`, `o_3`) as input. A VLM (Vision-Language Model) is used to assess how well the observed sequence of states aligns with the given language instruction.

* The output `R̂` is the estimated reward, indicating the VLM's judgment of task completion and correctness.

### Key Observations

* The diagram clearly distinguishes between standard evaluation inputs and "Out-of-Distribution" (OOD) inputs, indicated by red borders, for both image content and language instructions. This suggests a focus on generalization capabilities.

* The `g` component acts as a central point for language instruction input, feeding into the policy.

* The "Policy" and "World Model" operate in a loop, generating a sequence of predicted observations (`o_1`, `o_2`, `o_3`) from an initial state (`o_0`). This represents a planning or simulation phase.

* The VLM is explicitly used as a reward function, implying that task success is evaluated by a model capable of understanding both visual states and natural language goals.

* The images `o_1`, `o_2`, `o_3` are identical, suggesting that the "World Model" predicts a stable final state after the initial action, or that these are snapshots of the same successful outcome.

### Interpretation

This diagram outlines a robust framework for training and evaluating robotic agents, particularly in tasks requiring language understanding and visual perception. The core idea is to use a "World Model" to simulate future states based on a "Policy's" actions, guided by a language instruction (`g`). This simulation allows for planning or generating potential outcomes without real-world interaction.

The "VLM as Reward" component is critical. Instead of relying on hand-engineered reward functions, a powerful Vision-Language Model is leveraged to provide a semantic understanding of task completion. This means the VLM can assess if the observed sequence of actions and states (e.g., `o_1`, `o_2`, `o_3`) successfully fulfills the given instruction (e.g., "Put the eggplant in the pot"). This approach allows for more flexible and human-like evaluation of complex tasks.

The inclusion of "OOD Image Input" and "OOD Language Instruction" examples highlights the system's aim to generalize beyond its training data. The system is designed to handle situations where the visual environment is cluttered or different from what it has seen before, or where the instructions are phrased in novel ways. The red borders emphasize these challenging scenarios, suggesting that the system's performance on these OOD examples is a key metric for its effectiveness.

In essence, the system takes a goal, simulates a sequence of actions and observations, and then uses a VLM to determine how well the simulated outcome achieves the goal, providing a reward signal (`R̂`) that can be used to train or refine the "Policy" and "World Model." This architecture is characteristic of modern reinforcement learning approaches that integrate large pre-trained models for better generalization and semantic understanding.
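The summary above can be written compactly. Only $g$, $o_0, \dots, o_3$, and $\hat{R}$ appear in the diagram; the symbols $\pi$ (policy), $W$ (world model), $V$ (VLM scorer), and $a_t$ (action) are notation introduced here for illustration. The sketch also assumes each policy step is conditioned on the latest predicted observation, which the diagram does not state explicitly.

$$
a_t = \pi(o_t, g), \qquad o_{t+1} = W(o_t, a_t), \qquad t = 0, 1, 2
$$

$$
\hat{R} = V(o_1, o_2, o_3;\, g)
$$

Under this reading, $\hat{R}$ depends on the instruction twice: through the actions that shaped the rollout and through the VLM's comparison of the frames against $g$.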

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Robotic Manipulation with World Model and VLM Reward

### Overview

This diagram illustrates a robotic manipulation system utilizing a world model and a Vision-Language Model (VLM) as a reward function. The system takes initial frames and language instructions as input, processes them through a world model and policy network, and receives a reward signal from the VLM. The diagram highlights the use of in-distribution (Evaluation Dataset Example) and out-of-distribution (OOD) inputs to test the system's generalization capabilities.

### Components/Axes

The diagram consists of the following key components:

* **Initial Frame and Language Instruction:** Input section containing examples of image frames and corresponding language instructions.

  * **Evaluation Dataset Example:** A specific example of an in-distribution input, showing an image of a robotic arm with objects and the instruction "Put the eggplant in the pot".

  * **OOD Image Input:** An out-of-distribution image input with the instruction "Put the eggplant in the pot".

  * **OOD Language Instruction:** An out-of-distribution language instruction paired with an image, stating "Put the eggplant in the drying rack".

* **World Model:** A central component represented by a large blue rectangle, processing observations and generating predictions.

* **Policy:** Three instances of a "Policy" module within the World Model, each receiving input from the World Model and generating actions.

* **Observations (o1, o2, o3, oe):** Representations of the environment's state at different time steps. o1, o2, and o3 are associated with the Evaluation Dataset Example, while oe is associated with the OOD Language Instruction.

* **g:** A variable within the World Model, likely representing a generative function or goal.

* **VLM as Reward:** A visual representation of the Vision-Language Model, depicted as a swirling, abstract shape.

* **Reward (R̂):** The reward signal generated by the VLM.

### Detailed Analysis

The diagram shows a flow of information as follows:

1. **Input:** The system receives initial frames and language instructions. Three examples are shown: an in-distribution example ("Evaluation Dataset Example"), and two out-of-distribution examples ("OOD Image Input" and "OOD Language Instruction").

2. **World Model Processing:** The input frames (o1, o2, o3, oe) are fed into the "World Model".

3. **Policy Execution:** The World Model outputs to three "Policy" modules. Each Policy module appears to process the information independently.

4. **VLM Reward:** The output of the Policies (not explicitly shown) is used to generate a reward signal (R̂) via the "VLM as Reward" module.

5. **Feedback Loop:** The reward signal is presumably used to train the Policy modules within the World Model, creating a closed-loop system.

The images within the input sections show a robotic arm interacting with various objects, including an eggplant, a pot, a drying rack, and other kitchen utensils. The images are presented in a top-down view.

### Key Observations

* The diagram emphasizes the importance of testing the system's robustness to out-of-distribution inputs.

* The use of a VLM as a reward function suggests a learning approach that leverages both visual and linguistic information.

* The three instances of the "Policy" module within the World Model might represent different action choices or a form of ensemble learning.

* The diagram does not provide specific numerical values or quantitative data. It is a conceptual illustration of the system architecture.

### Interpretation

The diagram illustrates a reinforcement learning framework for robotic manipulation. The core idea is to train a policy within a world model using a reward signal generated by a Vision-Language Model. The VLM acts as a "judge" of how well the robot is following the given instructions, providing a reward based on the alignment between the robot's actions and the language description.

The inclusion of out-of-distribution examples suggests a focus on generalization. The system is designed to handle situations that differ from the training data, such as novel object arrangements or slightly different instructions. This is crucial for real-world deployment, where robots are likely to encounter unexpected scenarios.

The three "Policy" modules could represent different strategies for achieving the goal, or they could be part of a more complex policy network. The diagram highlights the interplay between perception (input frames), reasoning (World Model), action selection (Policy), and evaluation (VLM). The overall goal is to create a robot that can reliably perform tasks based on natural language instructions, even in unfamiliar environments.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## System Architecture Diagram: Reinforcement Learning with World Model and VLM Reward

### Overview

This image is a technical system architecture diagram illustrating a reinforcement learning or robotic control pipeline. It depicts a process that starts with visual and language instructions, uses a world model and policy to generate action sequences, and finally employs a Vision-Language Model (VLM) to compute a reward signal. The diagram is structured into three main vertical sections: Input, Processing, and Reward Calculation.

### Components/Axes

The diagram is organized into distinct regions with labeled components and directional arrows indicating data flow.

**1. Left Panel: Initial Frame and Language Instruction (Input Region)**

* **Header:** "Initial Frame and Language Instruction"

* **Three Input Examples:**

* **Top Example:** Labeled "Evaluation Dataset Example". Contains an image of a robotic arm over a sink with objects and a yellow instruction box with the text: "Put the eggplant in the pot".

* **Middle Example:** Labeled "OOD Image Input" (OOD stands for Out-Of-Distribution). The image shows a different scene with more colorful objects. The instruction box is identical: "Put the eggplant in the pot".

* **Bottom Example:** Labeled "OOD Language Instruction". The image is similar to the top example, but the instruction box has a red border and different text: "Put the eggplant in the drying rack".

* **Flow:** Arrows from all three examples converge and point to a small yellow box labeled "g" in the central processing region.

**2. Central Processing Region**

* **Input Node:** A small yellow box labeled "g". It receives input from the left panel.

* **Initial Observation:** An image labeled "o₀" (o subscript 0) is shown below the "g" box. It depicts the initial state of the robotic workspace.

* **Policy Blocks:** Three identical gray rectangular blocks labeled "Policy". They are arranged horizontally.

* **World Model Block:** A large, light blue horizontal bar labeled "World Model" in bold text. It spans above the three Policy blocks.

* **Observation Sequence:** Three images labeled "o₁", "o₂", and "o₃" (o subscripts 1, 2, 3) are positioned above the World Model bar. They show sequential states of the robotic arm performing the task.

* **Flow Arrows:**

* An arrow goes from "g" to the first "Policy" block.

* Arrows connect the "Policy" blocks to the "World Model" bar from below.

* Arrows point upward from the "World Model" bar to each of the observation images ("o₁", "o₂", "o₃").

* A final arrow leads from the last observation ("o₃") to the right panel.

**3. Right Panel: Reward Calculation**

* **Header:** "VLM as Reward"

* **Logo:** A black, stylized, interlocking circular logo (resembling the OpenAI logo) is centered in this panel.

* **Output Symbol:** An arrow points downward from the logo to a mathematical symbol: "R̂" (R with a circumflex/hat), representing the estimated or predicted reward.

### Detailed Analysis

The diagram details a sequential decision-making process:

1. **Input Stage:** The system takes an initial visual observation (`o₀`) and a language instruction (encapsulated by `g`). The examples show the system is being tested on both in-distribution ("Evaluation Dataset") and out-of-distribution (OOD) scenarios, varying either the image context or the language command.

2. **Action & Prediction Stage:** The policy network, conditioned on the input `g`, generates actions. These actions and the current state are fed into a "World Model." The World Model's role is to predict future states of the environment, generating the sequence of predicted observations: `o₁`, `o₂`, `o₃`.

3. **Reward Assignment Stage:** The final predicted state (`o₃`) is passed to a "VLM as Reward" module. This module, drawn with a logo resembling the OpenAI logo, evaluates how well the final state fulfills the original instruction and outputs a scalar reward value, `R̂`.
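The three stages above can be sketched as a short rollout loop. This is a toy illustration under stated assumptions, not the system's implementation: observations are reduced to a single integer position, and `toy_policy`, `toy_world_model`, `toy_vlm_reward`, and `rollout` are hypothetical stand-ins for the learned components in the diagram.

```python
from typing import Callable, List

Observation = int  # toy assumption: the object's position on a 1-D line
Action = int       # toy assumption: a signed unit step


def toy_policy(obs: Observation, goal: int) -> Action:
    """Stand-in policy: step one unit toward the goal position."""
    if obs < goal:
        return 1
    if obs > goal:
        return -1
    return 0


def toy_world_model(obs: Observation, action: Action) -> Observation:
    """Stand-in world model: predict the next observation from (obs, action)."""
    return obs + action


def toy_vlm_reward(final_obs: Observation, goal: int) -> float:
    """Stand-in for the VLM judge: 1.0 if the final frame matches the goal."""
    return 1.0 if final_obs == goal else 0.0


def rollout(
    o0: Observation,
    goal: int,
    policy: Callable[[Observation, int], Action],
    world_model: Callable[[Observation, Action], Observation],
    horizon: int = 3,
) -> List[Observation]:
    """Alternate policy and world model to produce predicted frames o1..oH."""
    frames: List[Observation] = []
    obs = o0
    for _ in range(horizon):
        action = policy(obs, goal)
        obs = world_model(obs, action)  # imagined next frame, no real robot
        frames.append(obs)
    return frames


frames = rollout(0, 3, toy_policy, toy_world_model)
r_hat = toy_vlm_reward(frames[-1], 3)  # score only the final predicted frame
```

Scoring only `frames[-1]` follows the description in step 3; a real VLM judge would take images and the instruction text rather than integers.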

### Key Observations

* **OOD Testing:** The diagram explicitly highlights testing for robustness by including "OOD Image Input" and "OOD Language Instruction" as separate cases, indicating the system's generalization capability is a key focus.

* **World Model Centrality:** The "World Model" is the largest and most central component, suggesting it is the core innovation or focus of this architecture. It acts as a simulator or predictor of future states.

* **VLM as a Reward Function:** Using a Vision-Language Model (VLM) to compute reward (`R̂`) is a notable design choice. It implies the reward is not from a pre-defined metric but from a model that can understand both the visual outcome and the language goal.

* **Sequential Predictions:** The output of the World Model is a sequence of frames (`o₁` to `o₃`), not just a final state, which may allow for more granular reward assessment or planning.
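The last observation hints that a reward could be computed from more than the final frame. A minimal sketch of that idea follows, assuming a hypothetical per-frame score in [0, 1] from the VLM; the `aggregate_reward` function and its modes are illustrative and do not come from the diagram.

```python
from typing import Sequence


def aggregate_reward(frame_scores: Sequence[float], mode: str = "final") -> float:
    """Combine hypothetical per-frame VLM scores into one reward estimate."""
    if not frame_scores:
        raise ValueError("need at least one frame score")
    if mode == "final":
        return frame_scores[-1]          # judge only the last predicted frame
    if mode == "mean":
        return sum(frame_scores) / len(frame_scores)  # credit steady progress
    if mode == "max":
        return max(frame_scores)         # credit the best frame reached
    raise ValueError(f"unknown mode: {mode!r}")


# Example scores for o1, o2, o3 (made-up values for illustration only).
scores = [0.0, 0.5, 1.0]
final_reward = aggregate_reward(scores, "final")
mean_reward = aggregate_reward(scores, "mean")
```

The choice of mode changes what the policy is rewarded for: `final` ignores how the goal was reached, while `mean` also rewards reaching it early.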

### Interpretation

This diagram represents a **model-based reinforcement learning framework for language-conditioned robotic tasks**. The key investigative insight is the integration of a **World Model** for planning or simulation with a **Vision-Language Model (VLM)** as a flexible, semantic reward function.

* **What it demonstrates:** The system aims to learn policies that can follow natural language instructions in physical environments. By using a world model, it can "imagine" the consequences of its actions before executing them. The VLM reward allows the system to be trained or evaluated based on high-level, human-understandable goals ("put X in Y") rather than low-level coordinates.

* **Relationships:** The policy and world model are tightly coupled in a planning loop. The VLM sits outside this loop as an evaluator. The OOD examples stress that the entire pipeline—from perception (images) to understanding (language) to action (policy)—must be robust.

* **Notable Implications:** This architecture could enable robots to generalize better to new objects and instructions. The use of a VLM as a reward also points toward **reinforcement learning from human feedback (RLHF)** or **goal-conditioned RL** paradigms, where the reward signal is derived from a model that encapsulates human preferences or task semantics. The hat on the R (`R̂`) signifies it is an estimate, acknowledging the potential noise or imperfection in the VLM's judgment.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Robot Task Execution System with World Model and VLM Reward

### Overview

This diagram illustrates a robotic task execution system that integrates a world model, policy execution, and vision-language model (VLM) reward evaluation. The system processes initial frames, language instructions, and out-of-distribution (OOD) inputs to generate and evaluate robotic actions.

### Components/Axes

1. **Left Panel: Initial Inputs**

- **Initial Frame and Language Instruction**: Contains three scenarios:

  - *Evaluation Dataset Example*: "Put the eggplant in the pot" (correct instruction)

  - *OOD Image Input*: Modified image with additional objects (red border)

  - *OOD Language Instruction*: Modified instruction "Put the eggplant in the drying rack" (red border)

- **Key Elements**: Robot arm, sink environment, objects (eggplant, pot, drying rack)

2. **Central Panel: World Model and Policies**

- **World Model**: Central processing unit receiving sequential observations (o₁, o₂, o₃)

- **Policy Blocks**: Three identical policy modules processing observations (o₁→o₃) and outputting actions (oθ)

- **Flow**: Observations feed into world model → policies → world model (recurrent loop)

3. **Right Panel: VLM Reward**

- **VLM as Reward**: Hexagonal symbol representing vision-language model

- **Output**: Reward value (R̂) derived from policy evaluation

### Detailed Analysis

- **Initial Inputs**:

- Correct instruction: "Put the eggplant in the pot" (yellow box)

- OOD variations:

- Image: Additional objects (red border)

- Language: "Put the eggplant in the drying rack" (red border)

- **World Model**:

- Processes sequential observations (o₁→o₃) showing robot arm movement

- Maintains internal state (g) representing environment dynamics

- **Policy Execution**:

- Three identical policy modules process different observation states

- Outputs action sequences (oθ) for robotic execution

- **VLM Reward System**:

- Evaluates policy outputs using vision-language model

- Generates scalar reward (R̂) for action quality assessment

### Key Observations

1. **OOD Handling**: Red borders highlight system's ability to process instruction/image mismatches

2. **Recurrent Architecture**: World model maintains state between policy executions

3. **Modular Design**: Separate policy blocks suggest parallel processing capability

4. **Reward Integration**: VLM directly influences policy evaluation without explicit training signals

### Interpretation

This system demonstrates a closed-loop robotic control architecture where:

1. **World Model** serves as both environment simulator and memory

2. **Policies** generate actions based on current observations and historical context

3. **VLM Reward** provides real-time evaluation of action quality through vision-language understanding

4. **OOD Robustness**: The system explicitly handles instruction-image mismatches through separate OOD input channels

The architecture suggests a hierarchical approach where:

- Low-level policies execute basic actions

- World model maintains high-level context

- VLM provides semantic evaluation of action-instruction alignment

- Recurrent connections enable continuous learning from execution outcomes

The use of identical policy blocks implies transfer learning capabilities across different observation states, while the VLM reward system enables value-based policy selection without explicit reward shaping.
