## Screenshot: Text-Based Navigation Task

### Overview

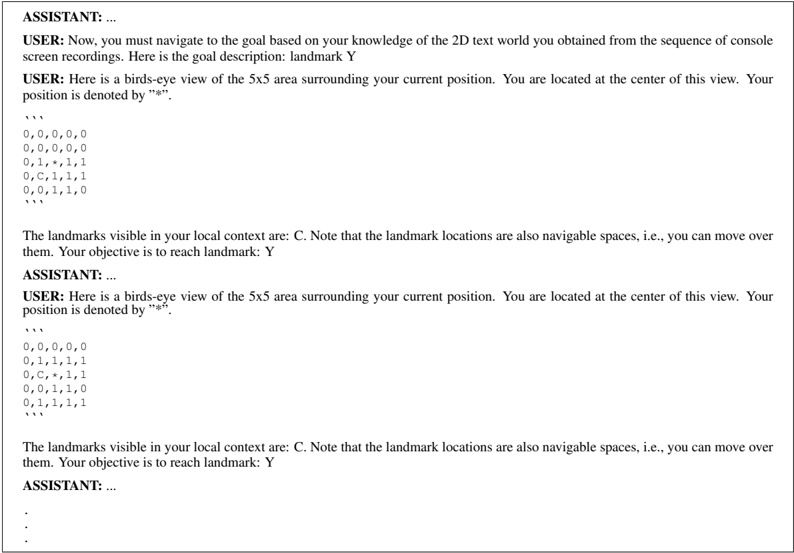

The image shows a text-based dialogue between a user and an assistant in a 2D grid navigation task. The user provides a 5x5 grid representing a text world, with the goal to reach landmark "Y". The assistant's responses are redacted (represented by ellipses). The grid includes coordinates, a current position marker ("*"), visible landmarks ("C"), and navigable spaces.

### Components/Axes

- **Grid Structure**: 5x5 matrix with coordinates (row, column) labeled from (0,0) at top-left to (4,4) at bottom-right.

- **Current Position**: Denoted by "*" at coordinate (2,1).

- **Landmarks**:

- Visible landmark "C" at (2,2).

- Goal landmark "Y" (location unspecified in visible text).

- **Navigable Spaces**: All grid cells are marked as navigable ("0", "1", "C", "*").

### Detailed Analysis

#### Grid Layout

```

Row 0: 0, 0, 0, 0, 0

Row 1: 0, 0, 0, 0, 0

Row 2: 0, 1, *, 1, 1

Row 3: 0, C, 1, 1, 1

Row 4: 0, 0, 1, 1, 0

```

#### Key Elements

1. **Current Position**: Centered at (2,1) with "*".

2. **Visible Landmark**: "C" at (2,2), adjacent to the current position.

3. **Goal**: Landmark "Y" is the objective but its coordinates are not explicitly stated in the visible text.

4. **Navigable Spaces**: All cells are marked as traversable (values "0", "1", "C", "*").

### Key Observations

- The grid is fully connected, with no blocked cells (all values are navigable).

- The current position (2,1) is adjacent to the visible landmark "C" at (2,2).

- The goal "Y" is not visible in the provided grid, suggesting it may be outside the 5x5 view or require inference from prior context.

### Interpretation

This task appears to test the assistant's ability to:

1. Parse spatial information from a 2D grid.

2. Navigate using partial visibility (only landmark "C" is visible in the current context).

3. Infer the location of "Y" based on prior knowledge or additional context not shown in the screenshot.

The absence of the assistant's responses prevents analysis of their reasoning process. However, the user's instructions imply a multi-step navigation challenge where the assistant must:

- Use the visible landmark "C" as a reference point.

- Determine the optimal path to "Y" despite limited local context.

- Possibly request additional information if "Y" is outside the current view.

The grid's uniform navigability suggests no obstacles, making pathfinding purely a matter of spatial reasoning rather than obstacle avoidance.