## Bar Chart: Contextual Nuclear Knowledge Accuracy

### Overview

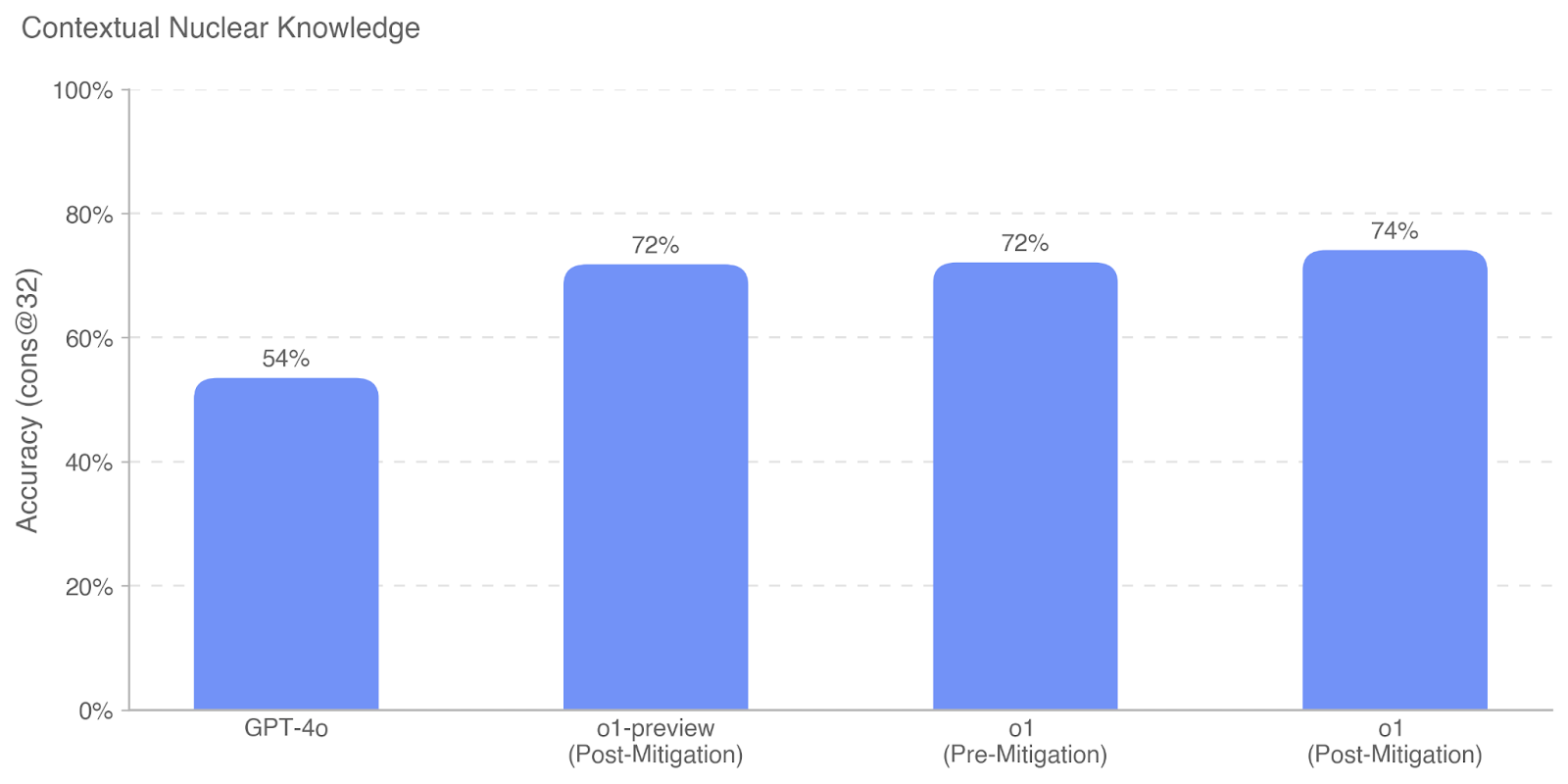

The image is a vertical bar chart titled "Contextual Nuclear Knowledge." It compares the accuracy scores of four different AI model variants on a specific evaluation metric. The chart uses a single color (a medium blue) for all bars, indicating a direct comparison of the same metric across different models or conditions.

### Components/Axes

* **Title:** "Contextual Nuclear Knowledge" (located at the top-left of the chart area).

* **Y-Axis:**

    * **Label:** "Accuracy (cons @32)" (written vertically along the left side).

    * **Scale:** Linear scale from 0% to 100%, with major gridlines and labels at 0%, 20%, 40%, 60%, 80%, and 100%.

* **X-Axis:**

    * **Categories (from left to right):**

        1. GPT-4o

        2. o1-preview (Post-Mitigation)

        3. o1 (Pre-Mitigation)

        4. o1 (Post-Mitigation)

* **Data Series:** A single data series represented by four blue bars. There is no legend, as only one metric is being compared.

* **Data Labels:** Each bar has its exact percentage value displayed directly above it.

### Detailed Analysis

The chart presents the following accuracy values for the "cons @32" metric:

1. **GPT-4o:** The leftmost bar shows an accuracy of **54%**.

2. **o1-preview (Post-Mitigation):** The second bar shows an accuracy of **72%**.

3. **o1 (Pre-Mitigation):** The third bar shows an accuracy of **72%**.

4. **o1 (Post-Mitigation):** The rightmost and tallest bar shows an accuracy of **74%**.

**Trend Verification:** The visual trend shows a significant step-up in accuracy from the first model (GPT-4o) to the subsequent three models (all variants of "o1"). The bars for "o1-preview (Post-Mitigation)" and "o1 (Pre-Mitigation)" are visually identical in height, corresponding to their equal 72% values. The final bar for "o1 (Post-Mitigation)" is slightly taller, reflecting its 2-percentage-point increase to 74%.

### Key Observations

* **Performance Gap:** There is a substantial 20-percentage-point gap between the baseline model (GPT-4o at 54%) and the best-performing model shown (o1 Post-Mitigation at 74%).

* **Mitigation Impact:** For the "o1" model, the "Post-Mitigation" version scores 2 percentage points higher (74% versus 72%) than the "Pre-Mitigation" version.

* **Preview vs. Final:** The "o1-preview (Post-Mitigation)" model achieved the same 72% accuracy as the "o1 (Pre-Mitigation)" model, suggesting the preview's post-mitigation performance matched the final model's pre-mitigation baseline.

* **Plateau:** The performance of the three "o1" variants clusters closely between 72% and 74%, indicating a potential performance plateau or a ceiling effect for this specific evaluation metric ("cons @32") among these model versions.
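The differences discussed in these observations can be rechecked directly from the data labels. The snippet below is a minimal sketch: the dictionary simply transcribes the four values read off the chart, and the helper name `point_gap` is introduced here for illustration only.

```python
# Accuracy values (in percent) transcribed from the chart's data labels.
accuracy = {
    "GPT-4o": 54,
    "o1-preview (Post-Mitigation)": 72,
    "o1 (Pre-Mitigation)": 72,
    "o1 (Post-Mitigation)": 74,
}


def point_gap(lower: str, higher: str) -> int:
    """Difference between two bars, in percentage points (not relative percent)."""
    return accuracy[higher] - accuracy[lower]


# Gap between the baseline and the best-performing model.
baseline_gap = point_gap("GPT-4o", "o1 (Post-Mitigation)")

# Change between the pre- and post-mitigation versions of o1.
mitigation_gap = point_gap("o1 (Pre-Mitigation)", "o1 (Post-Mitigation)")

# Difference between o1-preview (post-mitigation) and o1 (pre-mitigation).
preview_gap = point_gap("o1-preview (Post-Mitigation)", "o1 (Pre-Mitigation)")

# Spread across the three o1-family bars.
o1_scores = [v for name, v in accuracy.items() if name.startswith("o1")]
o1_spread = max(o1_scores) - min(o1_scores)

print(baseline_gap, mitigation_gap, preview_gap, o1_spread)
```

Working in percentage points keeps the comparison unambiguous: a change from 72% to 74% is 2 points, which is not the same as a 2% relative change.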

### Interpretation

This chart compares AI model accuracy on a specialized task related to "Contextual Nuclear Knowledge." All three "o1"-family bars sit well above "GPT-4o" (72% to 74% versus 54%), which suggests the "o1" series is substantially more capable in this domain. The chart alone does not show whether that difference comes from architecture, training, or some other factor.

The "Pre-Mitigation" and "Post-Mitigation" labels imply that the same model was evaluated before and after some mitigation step was applied, although the chart does not say what that step involves. The 2-percentage-point difference between "o1 (Pre-Mitigation)" and "o1 (Post-Mitigation)" is modest, and without uncertainty estimates it is unclear whether a difference this small is meaningful. The "o1-preview (Post-Mitigation)" score equals the "o1 (Pre-Mitigation)" score at 72%, so the two are indistinguishable on this benchmark.

The metric "Accuracy (cons @32)" is not defined in the chart. In model evaluation reports, "cons@k" commonly abbreviates "consensus@k": the model is sampled k times per question (here, 32) and the most frequent answer is scored. That reading is an inference from convention, not something the chart states. The chart's primary message is that the "o1" model family outperforms "GPT-4o" on this benchmark, with the post-mitigation "o1" scoring slightly higher than the pre-mitigation version. The clustering of scores between 72% and 74% may reflect a limit of this evaluation setup, but the chart provides no evidence either way.
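If "cons @32" is read as consensus over 32 samples per question (an assumption, since the chart does not define the metric), the score could be computed roughly as sketched below. The function names and the toy data are hypothetical and are not taken from the evaluation behind the chart.

```python
from collections import Counter


def consensus_answer(samples: list[str]) -> str:
    """Return the most frequent answer among the sampled responses."""
    return Counter(samples).most_common(1)[0][0]


def consensus_accuracy(sampled_answers: list[list[str]], answer_key: list[str]) -> float:
    """Fraction of questions whose consensus answer matches the answer key."""
    hits = sum(
        consensus_answer(samples) == correct
        for samples, correct in zip(sampled_answers, answer_key)
    )
    return hits / len(answer_key)


# Hypothetical toy data: two questions, 32 sampled answers each.
sampled = [
    ["A"] * 20 + ["B"] * 12,  # consensus is "A"
    ["C"] * 10 + ["D"] * 22,  # consensus is "D"
]
answer_key = ["A", "C"]

score = consensus_accuracy(sampled, answer_key)
print(f"consensus@32 accuracy: {score:.0%}")
```

Under this reading, a question counts as correct only when the majority answer is right, so the metric rewards answers the model gives reliably, not answers it reaches occasionally.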