

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


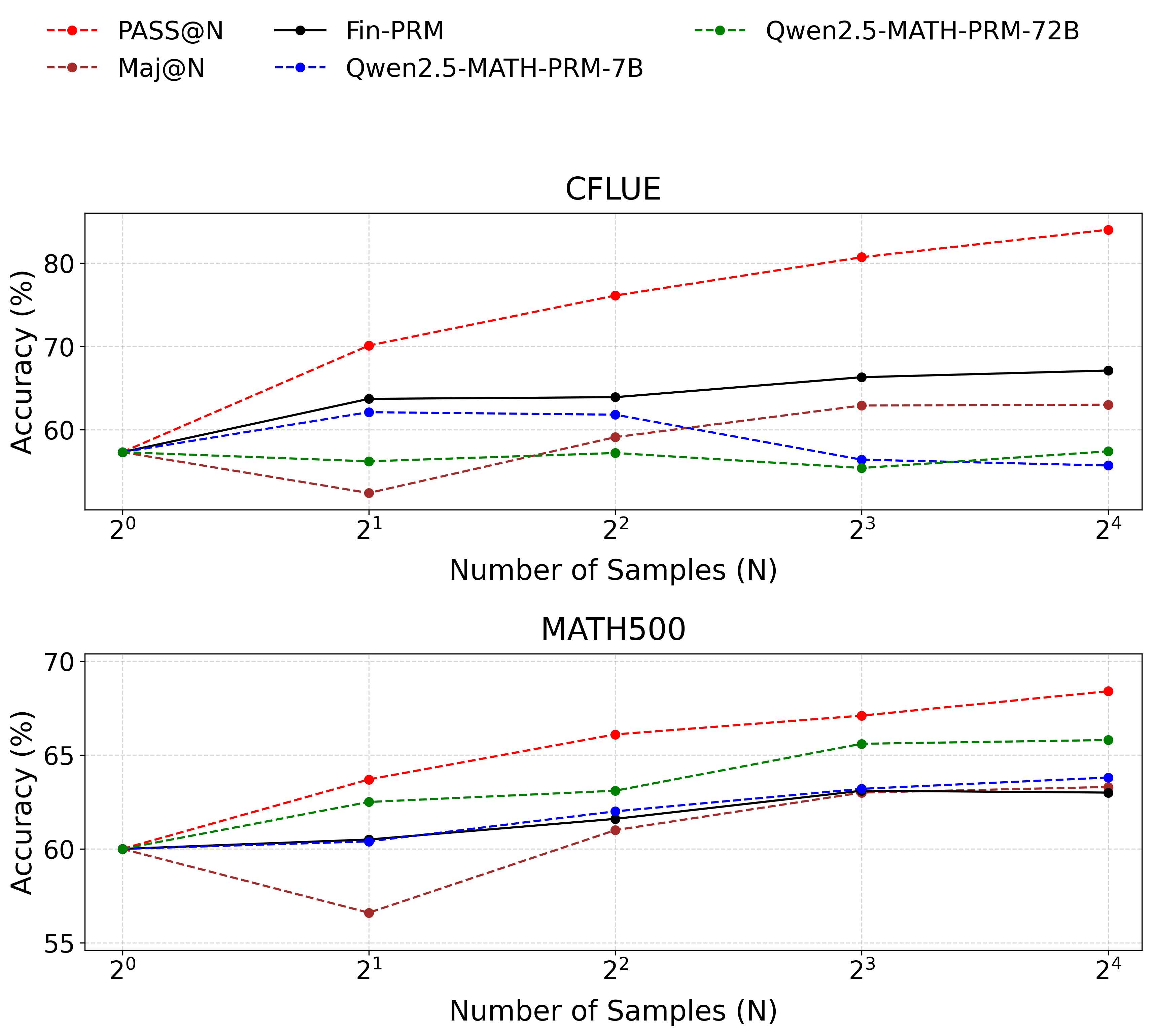

## Line Charts: Accuracy vs. Number of Samples

### Overview

The image contains two line charts comparing the accuracy of five methods (PASS@N, Maj@N, Fin-PRM, Qwen2.5-MATH-PRM-7B, and Qwen2.5-MATH-PRM-72B) against the number of samples used. The top chart displays results for the "CFLUE" dataset, while the bottom chart shows results for the "MATH500" dataset. The x-axis represents the number of samples (N) on a logarithmic scale (base 2), and the y-axis represents the accuracy in percent.

### Components/Axes

**Legend (Top-Left):**

* **PASS@N:** Red dashed line with circular markers.

* **Maj@N:** Brown dashed line with circular markers.

* **Fin-PRM:** Black solid line with circular markers.

* **Qwen2.5-MATH-PRM-7B:** Blue dashed line with circular markers.

* **Qwen2.5-MATH-PRM-72B:** Green dashed line with circular markers.

**Top Chart (CFLUE):**

* **Title:** CFLUE

* **X-axis:** Number of Samples (N), with markers at 2<sup>0</sup>, 2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, and 2<sup>4</sup>.

* **Y-axis:** Accuracy (%), with markers at 60, 70, and 80.

**Bottom Chart (MATH500):**

* **Title:** MATH500

* **X-axis:** Number of Samples (N), with markers at 2<sup>0</sup>, 2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, and 2<sup>4</sup>.

* **Y-axis:** Accuracy (%), with markers at 55, 60, 65, and 70.

### Detailed Analysis

**Top Chart (CFLUE):**

* **PASS@N (Red dashed line):** Shows an upward trend.
    * 2<sup>0</sup>: ~58%
    * 2<sup>1</sup>: ~71%
    * 2<sup>2</sup>: ~77%
    * 2<sup>3</sup>: ~80%
    * 2<sup>4</sup>: ~83%

* **Maj@N (Brown dashed line):** Dips at 2<sup>1</sup>, then recovers to end above its starting value.
    * 2<sup>0</sup>: ~58%
    * 2<sup>1</sup>: ~50%
    * 2<sup>2</sup>: ~52%
    * 2<sup>3</sup>: ~63%
    * 2<sup>4</sup>: ~64%

* **Fin-PRM (Black solid line):** Shows an upward trend that flattens after 2<sup>1</sup>.
    * 2<sup>0</sup>: ~58%
    * 2<sup>1</sup>: ~63%
    * 2<sup>2</sup>: ~64%
    * 2<sup>3</sup>: ~66%
    * 2<sup>4</sup>: ~67%

* **Qwen2.5-MATH-PRM-7B (Blue dashed line):** Rises at 2<sup>1</sup>, then declines to slightly below its starting value.
    * 2<sup>0</sup>: ~58%
    * 2<sup>1</sup>: ~63%
    * 2<sup>2</sup>: ~61%
    * 2<sup>3</sup>: ~57%
    * 2<sup>4</sup>: ~57%

* **Qwen2.5-MATH-PRM-72B (Green dashed line):** Dips at 2<sup>1</sup>, stays roughly flat through 2<sup>3</sup>, then rises at 2<sup>4</sup>.
    * 2<sup>0</sup>: ~58%
    * 2<sup>1</sup>: ~55%
    * 2<sup>2</sup>: ~56%
    * 2<sup>3</sup>: ~56%
    * 2<sup>4</sup>: ~63%

**Bottom Chart (MATH500):**

* **PASS@N (Red dashed line):** Shows an upward trend.
    * 2<sup>0</sup>: ~60%
    * 2<sup>1</sup>: ~64%
    * 2<sup>2</sup>: ~66%
    * 2<sup>3</sup>: ~67%
    * 2<sup>4</sup>: ~68%

* **Maj@N (Brown dashed line):** Dips at 2<sup>1</sup>, then recovers to a slight net increase.
    * 2<sup>0</sup>: ~60%
    * 2<sup>1</sup>: ~58%
    * 2<sup>2</sup>: ~61%
    * 2<sup>3</sup>: ~63%
    * 2<sup>4</sup>: ~63%

* **Fin-PRM (Black solid line):** Shows a slight upward trend, almost flat.
    * 2<sup>0</sup>: ~60%
    * 2<sup>1</sup>: ~62%
    * 2<sup>2</sup>: ~62%
    * 2<sup>3</sup>: ~63%
    * 2<sup>4</sup>: ~63%

* **Qwen2.5-MATH-PRM-7B (Blue dashed line):** Shows a slight upward trend, almost flat.
    * 2<sup>0</sup>: ~60%
    * 2<sup>1</sup>: ~61%
    * 2<sup>2</sup>: ~61%
    * 2<sup>3</sup>: ~63%
    * 2<sup>4</sup>: ~63%

* **Qwen2.5-MATH-PRM-72B (Green dashed line):** Shows an upward trend.
    * 2<sup>0</sup>: ~60%
    * 2<sup>1</sup>: ~63%
    * 2<sup>2</sup>: ~64%
    * 2<sup>3</sup>: ~65%
    * 2<sup>4</sup>: ~66%

### Key Observations

* **PASS@N** consistently improves in accuracy as the number of samples increases for both datasets.

* **Maj@N** dips at 2<sup>1</sup> on both datasets, then recovers: it ends about 6 points above its starting value on CFLUE and about 3 points above on MATH500.

* **Fin-PRM** gains modestly on both datasets (about 9 points on CFLUE, about 3 points on MATH500), with most of the gain arriving by 2<sup>1</sup>.

* **Qwen2.5-MATH-PRM-7B** shows a slight decrease in accuracy with an increasing number of samples for the CFLUE dataset, but a slight increase for the MATH500 dataset.

* **Qwen2.5-MATH-PRM-72B** shows a slight increase in accuracy with an increasing number of samples for both datasets.

### Interpretation

The charts show how accuracy on two datasets, CFLUE and MATH500, changes as the number of sampled answers per question (N) grows. PASS@N and Maj@N are selection criteria applied to the N samples rather than separate models: PASS@N counts a question as solved if any sample is correct, so it can only rise with N and serves as an upper bound for the other methods, while Maj@N takes the majority answer. Maj@N dips at N = 2<sup>1</sup> on both datasets before recovering, which suggests that voting helps only once enough samples are available. Fin-PRM and the Qwen reward models show relatively stable performance, with slight improvements or declines depending on the dataset. The differences in trends between the datasets suggest that each method's effectiveness also depends on the specific characteristics of the dataset.
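For reference, the two sampling baselines in the legend can be computed from N sampled answers per question. The following is a minimal sketch assuming the usual definitions of PASS@N (any sample correct) and Maj@N (most frequent answer correct); the chart itself does not spell them out, and the tie-breaking rule and the toy data are assumptions made for illustration.

```python
from collections import Counter

def pass_at_n(samples, gold):
    """Return 1 if any of the sampled answers matches the reference answer."""
    return int(any(s == gold for s in samples))

def maj_at_n(samples, gold):
    """Return 1 if the most frequent sampled answer matches the reference.

    Ties go to the answer sampled first (an assumption; other rules exist).
    """
    counts = Counter(samples)
    top = max(counts.values())
    # First answer, in sampling order, that reaches the top count.
    winner = next(s for s in samples if counts[s] == top)
    return int(winner == gold)

def accuracy(metric, all_samples, golds, n):
    """Percentage of questions scored 1 by `metric` using the first n samples."""
    hits = sum(metric(s[:n], g) for s, g in zip(all_samples, golds))
    return 100.0 * hits / len(golds)

# Toy data (hypothetical): 2 questions, 4 sampled answers each.
samples = [["7", "5", "5", "7"], ["3", "3", "9", "3"]]
golds = ["5", "3"]

for n in (1, 2, 4):
    print(n, accuracy(pass_at_n, samples, golds, n), accuracy(maj_at_n, samples, golds, n))
```

At N = 1 both functions see a single answer and therefore agree, which is consistent with all curves sharing one starting value in the readings above.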


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


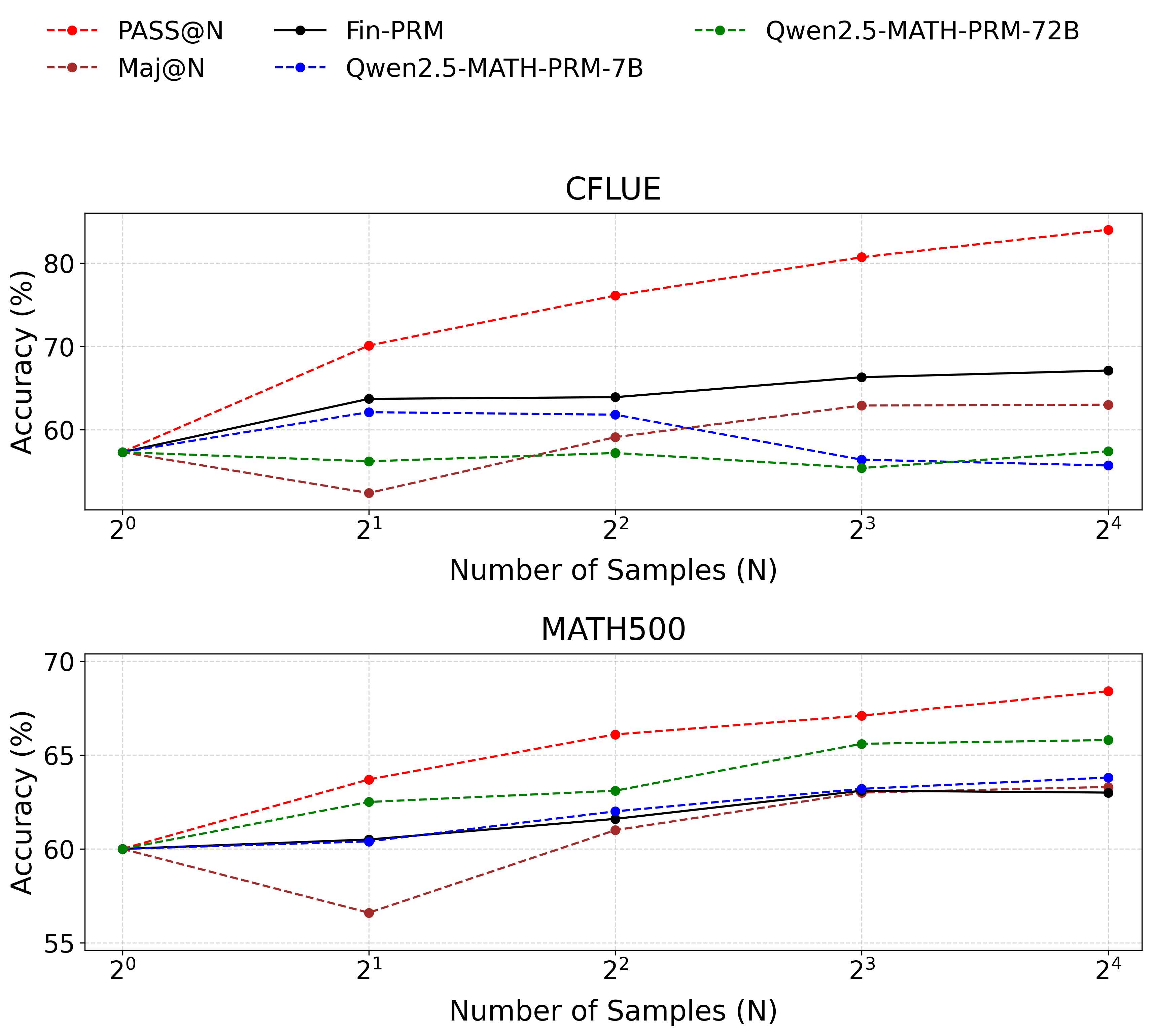

## Line Chart: Accuracy vs. Number of Samples for Different Models

### Overview

This image presents two line charts comparing the accuracy of several methods (PASS@N, Maj@N, Fin-PRM, Qwen2.5-MATH-PRM-7B, and Qwen2.5-MATH-PRM-72B) across varying numbers of samples (N). The top chart focuses on the CFLUE dataset, while the bottom chart focuses on the MATH500 dataset. Accuracy is measured in percent (%).

### Components/Axes

* **X-axis:** Number of Samples (N), labeled with powers of 2: 2⁰, 2¹, 2², 2³, 2⁴. The scale is logarithmic.

* **Y-axis:** Accuracy (%), ranging from approximately 55% to 85%.

* **Legend (Top-Right):**

* PASS@N (Red Dashed Line)

* Maj@N (Orange Dashed Line)

* Fin-PRM (Black Solid Line)

* Qwen2.5-MATH-PRM-7B (Blue Dashed Line)

* Qwen2.5-MATH-PRM-72B (Green Dotted Line)

* **Titles:**

* Top Chart: CFLUE

* Bottom Chart: MATH500

* **Gridlines:** Present on both charts, aiding in value estimation.

### Detailed Analysis or Content Details

**CFLUE Chart (Top)**

* **PASS@N (Red Dashed):** Starts at approximately 62% at N=2⁰, steadily increases to approximately 83% at N=2⁴. The line is consistently upward sloping.

* **Maj@N (Orange Dashed):** Starts at approximately 60% at N=2⁰, increases to approximately 66% at N=2⁴. The line is consistently upward sloping, but less steep than PASS@N.

* **Fin-PRM (Black Solid):** Starts at approximately 64% at N=2⁰, fluctuates around 68-70% between N=2¹ and N=2³, and ends at approximately 71% at N=2⁴. The line is relatively flat with slight upward trend.

* **Qwen2.5-MATH-PRM-7B (Blue Dashed):** Starts at approximately 58% at N=2⁰, increases to approximately 63% at N=2⁴. The line is consistently upward sloping, but less steep than PASS@N and Maj@N.

* **Qwen2.5-MATH-PRM-72B (Green Dotted):** Starts at approximately 55% at N=2⁰, increases to approximately 60% at N=2⁴. The line is consistently upward sloping, but the least steep of all models.

**MATH500 Chart (Bottom)**

* **PASS@N (Red Dashed):** Starts at approximately 66% at N=2⁰, steadily increases to approximately 72% at N=2⁴. The line is consistently upward sloping.

* **Maj@N (Orange Dashed):** Starts at approximately 62% at N=2⁰, decreases to approximately 58% at N=2⁴. The line is consistently downward sloping.

* **Fin-PRM (Black Solid):** Starts at approximately 63% at N=2⁰, increases to approximately 66% at N=2⁴. The line is consistently upward sloping.

* **Qwen2.5-MATH-PRM-7B (Blue Dashed):** Starts at approximately 61% at N=2⁰, increases to approximately 64% at N=2⁴. The line is consistently upward sloping, but less steep than PASS@N.

* **Qwen2.5-MATH-PRM-72B (Green Dotted):** Starts at approximately 59% at N=2⁰, increases to approximately 66% at N=2⁴. The line is consistently upward sloping, and steeper than Qwen2.5-MATH-PRM-7B.

### Key Observations

* In the CFLUE dataset, PASS@N consistently outperforms all other models across all sample sizes.

* In the MATH500 dataset, PASS@N also shows the highest accuracy, while Maj@N exhibits a decreasing accuracy with increasing sample size.

* The larger model (Qwen2.5-MATH-PRM-72B) ends above the smaller model (Qwen2.5-MATH-PRM-7B) on MATH500 (approximately 66% versus 64% at N=2⁴) despite starting lower, while on CFLUE it stays below the smaller model at both ends of the range (approximately 60% versus 63% at N=2⁴).

* Fin-PRM shows relatively stable performance in the CFLUE dataset, while it improves with sample size in the MATH500 dataset.
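The start and end readings listed in this description can be compared directly. The sketch below uses only those approximate values and computes the net change per method; the dictionary layout and the function name are illustrative choices, not part of the source.

```python
# Approximate (start, end) accuracy in %, at N=2^0 and N=2^4,
# copied from the readings in this description.
readings = {
    "CFLUE": {
        "PASS@N": (62, 83),
        "Maj@N": (60, 66),
        "Fin-PRM": (64, 71),
        "Qwen2.5-MATH-PRM-7B": (58, 63),
        "Qwen2.5-MATH-PRM-72B": (55, 60),
    },
    "MATH500": {
        "PASS@N": (66, 72),
        "Maj@N": (62, 58),
        "Fin-PRM": (63, 66),
        "Qwen2.5-MATH-PRM-7B": (61, 64),
        "Qwen2.5-MATH-PRM-72B": (59, 66),
    },
}

def net_change(dataset):
    """Net change in percentage points from N=2^0 to N=2^4, per method."""
    return {m: end - start for m, (start, end) in readings[dataset].items()}

for name in readings:
    print(name, net_change(name))
```

In these readings, Maj@N on MATH500 is the only series with a negative net change (-4 points).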

### Interpretation

The charts demonstrate the impact of sample size on the accuracy of different methods on two distinct datasets (CFLUE and MATH500). The consistent upward trend of PASS@N in both datasets is expected, since a question counts as solved when any of the N samples is correct. The contrasting downward trend of Maj@N in the MATH500 dataset is an anomaly: majority voting normally becomes more reliable with more samples, so this reading may indicate a limitation of voting on these problems or a bias in the dataset.

On MATH500, the gap between the 7B and 72B versions of the Qwen models at N=2⁴ (approximately 64% versus 66%) is consistent with a benefit from model scaling. The CFLUE readings do not show the same advantage, with the 72B model below the 7B model at both ends of the range.

The datasets themselves appear to elicit different behaviors from the models. CFLUE seems to benefit all models with increased sample size, while MATH500 shows more varied responses, with some models improving and others declining. This suggests that the datasets have different characteristics and require different modeling approaches. The charts provide valuable insights into the strengths and weaknesses of each model and can inform model selection and training strategies.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Charts: Performance Comparison on CFLUE and MATH500 Datasets

### Overview

The image contains two vertically stacked line charts comparing the accuracy of five different methods or models as the number of samples (N) increases. The top chart is titled "CFLUE" and the bottom chart is titled "MATH500". Both charts share the same x-axis ("Number of Samples (N)") and y-axis ("Accuracy (%)"). A common legend is positioned at the top of the image.

### Components/Axes

* **Legend (Top Center):** Contains five entries.

* `PASS@N`: Red dashed line with circular markers.

* `Maj@N`: Brown dashed line with circular markers.

* `Fin-PRM`: Black solid line with circular markers.

* `Qwen2.5-MATH-PRM-7B`: Blue dashed line with circular markers.

* `Qwen2.5-MATH-PRM-72B`: Green dashed line with circular markers.

* **X-Axis (Both Charts):** Labeled "Number of Samples (N)". The scale is logarithmic base 2, with tick marks at `2^0` (1), `2^1` (2), `2^2` (4), `2^3` (8), and `2^4` (16).

* **Y-Axis (Both Charts):** Labeled "Accuracy (%)". The scale is linear.

* **CFLUE Chart:** Ranges from approximately 50% to 85%. Major gridlines are at 60%, 70%, and 80%.

* **MATH500 Chart:** Ranges from 55% to 70%. Major gridlines are at 55%, 60%, 65%, and 70%.

### Detailed Analysis

#### **CFLUE Chart (Top)**

* **PASS@N (Red, Dashed):** Shows a strong, consistent upward trend. Starts at ~57% (2^0), rises to ~70% (2^1), ~76% (2^2), ~81% (2^3), and peaks at ~84% (2^4). It is the top-performing method for N ≥ 2.

* **Fin-PRM (Black, Solid):** Shows a moderate upward trend that plateaus. Starts at ~57% (2^0), rises to ~64% (2^1), stays at ~64% (2^2), increases to ~66% (2^3), and ends at ~67% (2^4). It is the second-best method for N ≥ 2.

* **Maj@N (Brown, Dashed):** Shows a fluctuating trend. Starts at ~57% (2^0), dips to ~53% (2^1), rises to ~59% (2^2), increases to ~63% (2^3), and stays at ~63% (2^4).

* **Qwen2.5-MATH-PRM-7B (Blue, Dashed):** Shows a slight downward trend after an initial rise. Starts at ~57% (2^0), rises to ~62% (2^1), stays at ~62% (2^2), drops to ~57% (2^3), and ends at ~56% (2^4).

* **Qwen2.5-MATH-PRM-72B (Green, Dashed):** Shows a relatively flat, slightly fluctuating trend. Starts at ~57% (2^0), dips to ~56% (2^1), rises to ~57% (2^2), dips to ~56% (2^3), and ends at ~57% (2^4). It is the lowest-performing method for N ≥ 2.

#### **MATH500 Chart (Bottom)**

* **PASS@N (Red, Dashed):** Shows a consistent upward trend. Starts at 60% (2^0), rises to ~64% (2^1), ~66% (2^2), ~67% (2^3), and peaks at ~68% (2^4). It is the top-performing method for N ≥ 2; all methods tie at 60% for N = 1.

* **Qwen2.5-MATH-PRM-72B (Green, Dashed):** Shows a consistent upward trend. Starts at 60% (2^0), rises to ~63% (2^1), ~63% (2^2), ~66% (2^3), and ends at ~66% (2^4). It is the second-best method for N ≥ 2.

* **Fin-PRM (Black, Solid):** Shows a moderate upward trend that plateaus. Starts at 60% (2^0), holds at ~60% (2^1), then rises to ~62% (2^2) and ~63% (2^3), and ends at ~63% (2^4).

* **Qwen2.5-MATH-PRM-7B (Blue, Dashed):** Shows a moderate upward trend. Starts at 60% (2^0), holds at ~60% (2^1), then rises to ~62% (2^2) and ~63% (2^3), and ends at ~64% (2^4).

* **Maj@N (Brown, Dashed):** Shows a fluctuating trend. Starts at 60% (2^0), dips to ~57% (2^1), rises to ~61% (2^2), increases to ~63% (2^3), and ends at ~63% (2^4).

### Key Observations

1. **Dominance of PASS@N:** The PASS@N method (red dashed line) achieves the highest accuracy on both datasets across almost all sample sizes (N ≥ 2), showing a strong positive correlation between N and accuracy.

2. **Dataset-Specific Performance:** The relative ranking of methods differs between datasets. Notably, the `Qwen2.5-MATH-PRM-72B` model (green) is the second-best performer on MATH500 but performs poorly on CFLUE. Conversely, `Fin-PRM` (black) is strong on CFLUE but average on MATH500.

3. **Impact of Model Size (Qwen):** On the MATH500 dataset, the larger 72B model consistently outperforms the smaller 7B model. On the CFLUE dataset, their performance is similar and relatively low, with the 7B model sometimes slightly ahead.

4. **Maj@N Volatility:** The Maj@N method (brown) shows a characteristic dip in accuracy at N=2 (`2^1`) on both charts before recovering.

5. **Plateauing Effect:** Most methods show diminishing returns, with accuracy gains slowing or plateauing as N increases from 8 (`2^3`) to 16 (`2^4`).
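The plateauing effect can be made concrete by computing the gain per doubling of N. The sketch below does this for the PASS@N readings in this description; the values are the approximate chart readings, and the function name is an illustrative choice.

```python
# Approximate PASS@N accuracy (%) at N = 1, 2, 4, 8, 16,
# copied from the readings in this description.
pass_at_n_readings = {
    "CFLUE": [57, 70, 76, 81, 84],
    "MATH500": [60, 64, 66, 67, 68],
}

def gains_per_doubling(values):
    """Accuracy gain in percentage points each time N doubles."""
    return [b - a for a, b in zip(values, values[1:])]

for dataset, values in pass_at_n_readings.items():
    print(dataset, gains_per_doubling(values))
# CFLUE [13, 6, 5, 3]
# MATH500 [4, 2, 1, 1]
```

On both datasets each doubling buys less than the previous one, which is the diminishing-returns pattern described in observation 5.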

### Interpretation

This data demonstrates the effectiveness of different sampling and verification strategies for improving the accuracy of language models on a financial benchmark (CFLUE) and a mathematical reasoning benchmark (MATH500).

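* **PASS@N's superiority** is expected: it counts a question as solved if a correct answer appears in any of the N samples, which requires knowing the reference answer. It therefore acts as an upper bound on what any selection method could reach from the same samples, and that bound rises reliably with more samples.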

* The **divergent performance of the Qwen models** across datasets indicates that model specialization or training data alignment plays a crucial role. The 72B model appears better tuned for the type of problems in MATH500, while neither Qwen model excels on CFLUE, suggesting CFLUE may test different skills.

* The **plateauing accuracy** for most methods implies a practical limit to the benefits of simply increasing the number of samples. Beyond a certain point (N=8 or 16), the computational cost of generating more samples may not justify the marginal accuracy gain.

* The **volatility of Maj@N** (majority voting) highlights a potential weakness: with very few samples (N=2), two disagreeing answers leave no true majority and the outcome depends on tie-breaking, which can hurt performance; with more samples, the consensus becomes more reliable.

In summary, the charts show a gap between PASS@N, which acts as an upper bound, and every selection method, a gap that widens on CFLUE as N grows. They also highlight that the best-performing selection method is highly dependent on the specific evaluation benchmark.
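The three reward-model curves (Fin-PRM and the two Qwen2.5-MATH-PRM models) presumably correspond to Best-of-N selection, in which a process reward model scores each sampled solution and the highest-scoring one is kept. The description does not state how step scores are aggregated, so the sketch below assumes the minimum step score; `toy_scorer` is a hypothetical stand-in for a real reward model.

```python
from typing import Callable, List, Sequence

def best_of_n(candidates: Sequence[List[str]],
              step_scorer: Callable[[str], float]) -> int:
    """Return the index of the candidate whose weakest step scores highest.

    Aggregating by the minimum step score is an assumption; product or
    last-step aggregation are common alternatives.
    """
    def solution_score(steps: List[str]) -> float:
        return min(step_scorer(step) for step in steps)

    return max(range(len(candidates)), key=lambda i: solution_score(candidates[i]))

def toy_scorer(step: str) -> float:
    """Hypothetical scorer: penalizes any step that contains the word 'guess'."""
    return 0.2 if "guess" in step else 0.9

# Two sampled solutions (N = 2), each a list of reasoning steps.
candidates = [
    ["read the balance sheet", "guess the ratio"],
    ["read the balance sheet", "divide debt by equity"],
]

print(best_of_n(candidates, toy_scorer))  # 1
```

Unlike PASS@N, this selection never consults the reference answer, so its accuracy depends entirely on how well the scorer ranks the samples.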


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graphs: Accuracy vs. Number of Samples (N) for CFLUE and MATH500

### Overview

The image contains two line graphs comparing the accuracy of different models (PASS@N, Fin-PRM, Maj@N, Qwen2.5-MATH-PRM-7B, Qwen2.5-MATH-PRM-72B) across two datasets: **CFLUE** (top graph) and **MATH500** (bottom graph). The x-axis represents the number of samples (N) on a logarithmic scale (2⁰ to 2⁴), and the y-axis represents accuracy in percentage. Each model is represented by a distinct line style and color, as defined in the legend.

---

### Components/Axes

- **X-axis**: "Number of Samples (N)" with ticks at 2⁰ (1), 2¹ (2), 2² (4), 2³ (8), 2⁴ (16).

- **Y-axis**: "Accuracy (%)" with ranges:

- **CFLUE**: 55% to 80%

- **MATH500**: 55% to 70%

- **Legends**:

- **CFLUE**:

- Red dashed line: PASS@N

- Black solid line: Fin-PRM

- Brown dashed line: Maj@N

- Blue dashed line: Qwen2.5-MATH-PRM-7B

- Green dashed line: Qwen2.5-MATH-PRM-72B

- **MATH500**: Same legend as CFLUE.

---

### Detailed Analysis

#### **CFLUE Graph**

1. **PASS@N (Red Dashed Line)**:

- Starts at ~58% (2⁰) and increases steadily to ~83% (2⁴).

- **Trend**: Strong upward slope, indicating consistent improvement with more samples.

2. **Fin-PRM (Black Solid Line)**:

- Starts at ~58% (2⁰), rises to ~68% (2¹), then plateaus with minor fluctuations (~66–68%) at 2²–2⁴.

- **Trend**: Initial sharp increase, followed by stabilization.

3. **Maj@N (Brown Dashed Line)**:

- Starts at ~58% (2⁰), drops to ~54% (2¹), then rises to ~63% (2⁴).

- **Trend**: Initial dip, followed by gradual recovery.

4. **Qwen2.5-MATH-PRM-7B (Blue Dashed Line)**:

- Starts at ~58% (2⁰), peaks at ~62% (2¹), then declines to ~58% (2⁴).

- **Trend**: Initial rise, followed by a decline.

5. **Qwen2.5-MATH-PRM-72B (Green Dashed Line)**:

- Starts at ~58% (2⁰), dips to ~57% (2¹), then fluctuates between ~57–60% (2²–2⁴).

- **Trend**: Slight improvement but no significant growth.

#### **MATH500 Graph**

1. **PASS@N (Red Dashed Line)**:

- Starts at ~60% (2⁰), increases to ~68% (2⁴).

- **Trend**: Steady upward trajectory.

2. **Fin-PRM (Black Solid Line)**:

- Starts at ~60% (2⁰), rises to ~63% (2³), then plateaus at ~63% (2⁴).

- **Trend**: Gradual improvement with stabilization.

3. **Maj@N (Brown Dashed Line)**:

- Starts at ~60% (2⁰), drops to ~57% (2¹), then rises to ~64% (2⁴).

- **Trend**: Initial dip, followed by recovery.

4. **Qwen2.5-MATH-PRM-7B (Blue Dashed Line)**:

- Starts at ~60% (2⁰), rises to ~64% (2⁴).

- **Trend**: Consistent upward trend.

5. **Qwen2.5-MATH-PRM-72B (Green Dashed Line)**:

- Starts at ~60% (2⁰), rises to ~66% (2³), then plateaus at ~66% (2⁴).

- **Trend**: Strong improvement, followed by stabilization.

---

### Key Observations

1. **PASS@N** outperforms the other methods in both datasets for N ≥ 2¹, showing the most significant improvement with increased samples.

2. **Maj@N** exhibits a temporary dip at 2¹ in both graphs but recovers by 2⁴.

3. **Qwen2.5-MATH-PRM-72B** (green dashed line) is the strongest reward model in **MATH500**, reaching ~66% at higher sample sizes, second only to PASS@N (~68%).

4. **Qwen2.5-MATH-PRM-7B** (blue dashed line) shows mixed performance: a decline in CFLUE but steady growth in MATH500.

5. **Fin-PRM** (black solid line) demonstrates stability in both datasets but lacks the growth seen in PASS@N.
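The MATH500 readings at N = 2⁴ in this description can be ranked directly. The sketch below uses only those approximate values; the variable names are illustrative.

```python
# Approximate MATH500 accuracy (%) at N = 2^4,
# copied from the readings in this description.
math500_at_16 = {
    "PASS@N": 68,
    "Fin-PRM": 63,
    "Maj@N": 64,
    "Qwen2.5-MATH-PRM-7B": 64,
    "Qwen2.5-MATH-PRM-72B": 66,
}

# Sort methods from highest to lowest accuracy; ties keep insertion order.
ranking = sorted(math500_at_16, key=math500_at_16.get, reverse=True)

for rank, method in enumerate(ranking, start=1):
    print(rank, method, math500_at_16[method])
```

With these readings PASS@N ranks first and Qwen2.5-MATH-PRM-72B second, with Maj@N and Qwen2.5-MATH-PRM-7B tied at ~64% and Fin-PRM last.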

---

### Interpretation

- **PASS@N** gains the most from additional samples. This is expected if, as is standard, it counts a question as solved when any of the N samples is correct.

- **Qwen2.5-MATH-PRM-72B** excels in **MATH500**, indicating it is particularly suited for mathematical reasoning tasks.

- The **Maj@N** dip at 2¹ may reflect the weakness of majority voting with very few samples, which is mitigated at larger sample sizes.

- **Qwen2.5-MATH-PRM-7B** underperforms in CFLUE but shows promise in MATH500, highlighting dataset-specific effectiveness.

- Most methods improve with more samples, but the rate of improvement varies, and Qwen2.5-MATH-PRM-7B on CFLUE ends where it started (~58%), emphasizing the importance of method selection based on task requirements.

---

### Spatial Grounding & Color Verification

- **Legends**: Positioned at the top of each graph, with colors matching the corresponding lines (e.g., red dashed = PASS@N).

- **Data Points**: Confirmed alignment with legend colors (e.g., green dashed line = Qwen2.5-MATH-PRM-72B).

---

### Final Notes

The graphs demonstrate that **PASS@N** on both datasets and **Qwen2.5-MATH-PRM-72B** on MATH500 scale best with the number of samples. The data underscores the need for task-specific method selection, as performance varies significantly across datasets.
