TECHNICAL ASSET FINGERPRINT

c817e023d8b0bd8ac7923d34

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

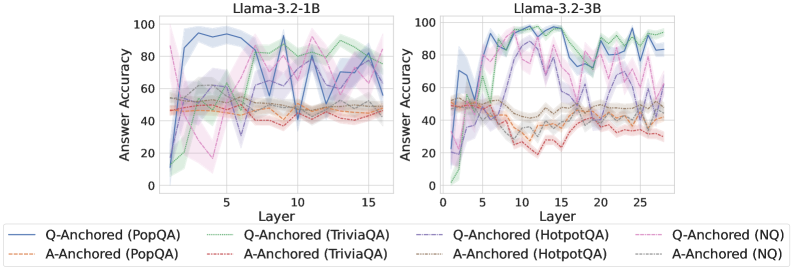

## Chart: Answer Accuracy vs. Layer for Llama Models

### Overview

The image presents two line charts comparing the answer accuracy of Llama models (3.2-1B and 3.2-3B) across different layers. The x-axis represents the layer number, and the y-axis represents the answer accuracy. Each chart displays eight data series: four question-answering tasks (PopQA, TriviaQA, HotpotQA, and NQ), each anchored by either the question (Q-Anchored) or the answer (A-Anchored). Shaded regions around the lines indicate uncertainty or variance.

### Components/Axes

* **Titles:**

* Left Chart: "Llama-3.2-1B"

* Right Chart: "Llama-3.2-3B"

* **X-axis:**

* Label: "Layer"

* Left Chart: Scale from 0 to 15, with tick marks at 0, 5, 10, and 15.

* Right Chart: Scale from 0 to 25, with tick marks at 0, 5, 10, 15, 20, and 25.

* **Y-axis:**

* Label: "Answer Accuracy"

* Scale: 0 to 100, with tick marks at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located at the bottom of the image.

* **Q-Anchored (PopQA):** Solid blue line

* **A-Anchored (PopQA):** Dashed brown line

* **Q-Anchored (TriviaQA):** Dotted green line

* **A-Anchored (TriviaQA):** Dashed-dotted orange line

* **Q-Anchored (HotpotQA):** Dashed purple line

* **A-Anchored (HotpotQA):** Dotted gray line

* **Q-Anchored (NQ):** Dashed-dotted pink line

* **A-Anchored (NQ):** Dotted black line

### Detailed Analysis

**Left Chart (Llama-3.2-1B):**

* **Q-Anchored (PopQA):** (Solid Blue) Starts around 10% accuracy at layer 1, rises sharply to approximately 95% by layer 5, then fluctuates between 85% and 95% for the remaining layers.

* **A-Anchored (PopQA):** (Dashed Brown) Remains relatively stable around 50% accuracy across all layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts around 20% accuracy at layer 1, rises to approximately 85% by layer 12, then fluctuates between 75% and 85% for the remaining layers.

* **A-Anchored (TriviaQA):** (Dashed-dotted Orange) Remains relatively stable around 50% accuracy across all layers.

* **Q-Anchored (HotpotQA):** (Dashed Purple) Starts around 10% accuracy at layer 1, rises to approximately 60% by layer 5, then fluctuates between 40% and 60% for the remaining layers.

* **A-Anchored (HotpotQA):** (Dotted Gray) Remains relatively stable around 55% accuracy across all layers.

* **Q-Anchored (NQ):** (Dashed-dotted Pink) Starts around 50% accuracy at layer 1, drops to approximately 15% by layer 5, then fluctuates between 35% and 55% for the remaining layers.

* **A-Anchored (NQ):** (Dotted Black) Remains relatively stable around 55% accuracy across all layers.

**Right Chart (Llama-3.2-3B):**

* **Q-Anchored (PopQA):** (Solid Blue) Starts around 10% accuracy at layer 1, rises sharply to approximately 90% by layer 5, then fluctuates between 70% and 95% for the remaining layers.

* **A-Anchored (PopQA):** (Dashed Brown) Remains relatively stable around 40% accuracy across all layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts around 0% accuracy at layer 1, rises to approximately 95% by layer 12, then fluctuates between 80% and 95% for the remaining layers.

* **A-Anchored (TriviaQA):** (Dashed-dotted Orange) Remains relatively stable around 50% accuracy across all layers.

* **Q-Anchored (HotpotQA):** (Dashed Purple) Starts around 10% accuracy at layer 1, rises to approximately 90% by layer 12, then fluctuates between 70% and 95% for the remaining layers.

* **A-Anchored (HotpotQA):** (Dotted Gray) Remains relatively stable around 40% accuracy across all layers.

* **Q-Anchored (NQ):** (Dashed-dotted Pink) Starts around 50% accuracy at layer 1, drops to approximately 15% by layer 5, then fluctuates between 20% and 50% for the remaining layers.

* **A-Anchored (NQ):** (Dotted Black) Remains relatively stable around 50% accuracy across all layers.
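Each bullet above follows the same template: a starting value, the level reached by a given layer, and the range over the remaining layers. The following is a minimal sketch of how such a summary could be derived from per-layer accuracies, assuming they are available as a plain list. The names `summarize_series` and `settle_layer`, and the sample values, are hypothetical and are not read from the figure.

```python
from typing import NamedTuple, Sequence


class SeriesSummary(NamedTuple):
    start: float       # accuracy at the first layer
    at_settle: float   # accuracy at the chosen "settle" layer
    low_after: float   # minimum accuracy over the layers after it
    high_after: float  # maximum accuracy over the layers after it


def summarize_series(acc: Sequence[float], settle_layer: int) -> SeriesSummary:
    """Summarize per-layer accuracy as start / level at settle_layer / later range."""
    if not 0 <= settle_layer < len(acc) - 1:
        raise ValueError("settle_layer must leave at least one later layer")
    tail = acc[settle_layer + 1:]
    return SeriesSummary(acc[0], acc[settle_layer], min(tail), max(tail))


# Hypothetical values for illustration only (not extracted from the chart).
example = [10, 30, 55, 80, 92, 95, 90, 88, 93, 86, 91, 89, 94, 87, 90, 85]
summary = summarize_series(example, settle_layer=5)
print(summary)
```

Reporting the range after a fixed layer keeps the "fluctuates between X% and Y%" phrasing reproducible instead of relying on visual estimates.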

### Key Observations

* **Q-Anchored vs. A-Anchored:** Q-Anchored tasks generally show more significant improvement in accuracy as the layer number increases, especially for PopQA, TriviaQA, and HotpotQA. A-Anchored tasks tend to remain relatively stable across all layers.

* **Model Size:** The larger model (3.2-3B) generally achieves higher accuracy for Q-Anchored tasks compared to the smaller model (3.2-1B), particularly for TriviaQA and HotpotQA.

* **Task Difficulty:** For the Q-Anchored versions, PopQA, TriviaQA, and HotpotQA show accuracy rising with layer depth, while NQ shows a dip in accuracy before stabilizing.

* **Variance:** The shaded regions indicate varying degrees of uncertainty in the accuracy, with some tasks showing more consistent performance than others.
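The description does not say how the shaded regions were computed. One common convention is a band of the mean plus or minus one standard deviation across repeated runs. The sketch below assumes that convention; the function name `accuracy_band` and the run data are hypothetical.

```python
from statistics import mean, stdev
from typing import List, Sequence, Tuple


def accuracy_band(runs: Sequence[Sequence[float]]) -> List[Tuple[float, float, float]]:
    """Return (lower, mean, upper) per layer, using mean +/- one sample std dev.

    `runs` holds one per-layer accuracy list per repeated run; all runs must
    cover the same number of layers. Bounds are clipped to the 0-100 axis range.
    """
    if len(runs) < 2:
        raise ValueError("need at least two runs to estimate a spread")
    if len({len(r) for r in runs}) != 1:
        raise ValueError("all runs must have the same number of layers")
    band = []
    for layer_values in zip(*runs):
        m = mean(layer_values)
        s = stdev(layer_values)
        band.append((max(0.0, m - s), m, min(100.0, m + s)))
    return band


# Hypothetical runs for illustration only (three runs, four layers).
runs = [
    [10.0, 50.0, 90.0, 97.0],
    [14.0, 54.0, 94.0, 100.0],
    [12.0, 52.0, 92.0, 100.0],
]
band = accuracy_band(runs)
```

A wider band at a given layer would correspond to the less consistent series mentioned in the observation above.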

### Interpretation

The data suggests that Q-Anchored accuracy improves substantially with layer depth, especially for PopQA, TriviaQA, and HotpotQA, which could indicate that the model benefits more from processing the question context in these tasks. The larger model (3.2-3B) reaches higher Q-Anchored accuracy overall, particularly for TriviaQA and HotpotQA. The relatively stable performance of A-Anchored tasks suggests that the answer context alone might not be sufficient for significant improvement at deeper layers. The dip in accuracy for Q-Anchored (NQ) in both models could indicate a different processing requirement or inherent difficulty in the NQ task. Overall, the charts highlight the impact of model size, anchoring strategy, and task type on the answer accuracy of Llama models.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


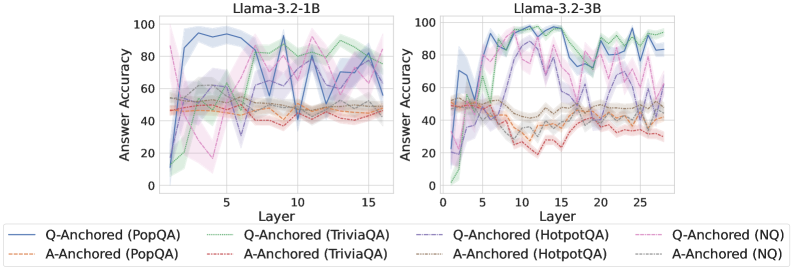

## Line Chart: Answer Accuracy vs. Layer for Llama Models

### Overview

The image presents two line charts comparing the answer accuracy of different question-answering (QA) datasets across layers of two Llama models: Llama-3.2-1B and Llama-3.2-3B. The x-axis represents the layer number, and the y-axis represents the answer accuracy, ranging from 0 to 100. Each line represents a different QA dataset and anchoring method (Q-Anchored or A-Anchored). The charts are positioned side-by-side for direct comparison.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 15 for the 1B model and 0 to 25 for the 3B model).

* **Y-axis:** Answer Accuracy (ranging from 0 to 100).

* **Left Chart Title:** Llama-3.2-1B

* **Right Chart Title:** Llama-3.2-3B

* **Legend:** Located at the bottom of the image, containing the following labels and corresponding colors:

* Q-Anchored (PopQA) - Blue

* A-Anchored (PopQA) - Orange

* Q-Anchored (TriviaQA) - Green

* A-Anchored (TriviaQA) - Pink

* Q-Anchored (HotpotQA) - Light Blue (dashed)

* A-Anchored (HotpotQA) - Purple (dashed)

* Q-Anchored (NQ) - Dark Blue

* A-Anchored (NQ) - Brown

### Detailed Analysis

**Llama-3.2-1B Chart (Left):**

* **Q-Anchored (PopQA) - Blue:** Starts at approximately 90% accuracy at layer 0, dips to around 50% at layer 2, then fluctuates between 60-80% for layers 3-15.

* **A-Anchored (PopQA) - Orange:** Starts at approximately 20% accuracy at layer 0, rises to around 40% at layer 3, and remains relatively stable between 30-50% for layers 4-15.

* **Q-Anchored (TriviaQA) - Green:** Starts at approximately 20% accuracy at layer 0, rises to around 80% at layer 5, then fluctuates between 60-90% for layers 6-15.

* **A-Anchored (TriviaQA) - Pink:** Starts at approximately 20% accuracy at layer 0, rises to around 60% at layer 5, then fluctuates between 40-70% for layers 6-15.

* **Q-Anchored (HotpotQA) - Light Blue (dashed):** Starts at approximately 60% accuracy at layer 0, dips to around 20% at layer 2, then fluctuates between 40-70% for layers 3-15.

* **A-Anchored (HotpotQA) - Purple (dashed):** Starts at approximately 40% accuracy at layer 0, dips to around 20% at layer 2, then fluctuates between 30-50% for layers 3-15.

* **Q-Anchored (NQ) - Dark Blue:** Starts at approximately 60% accuracy at layer 0, dips to around 30% at layer 2, then fluctuates between 40-60% for layers 3-15.

* **A-Anchored (NQ) - Brown:** Starts at approximately 20% accuracy at layer 0, rises to around 40% at layer 3, and remains relatively stable between 30-50% for layers 4-15.

**Llama-3.2-3B Chart (Right):**

* **Q-Anchored (PopQA) - Blue:** Starts at approximately 90% accuracy at layer 0, dips to around 50% at layer 2, then fluctuates between 60-90% for layers 3-25.

* **A-Anchored (PopQA) - Orange:** Starts at approximately 20% accuracy at layer 0, rises to around 40% at layer 3, and remains relatively stable between 30-50% for layers 4-25.

* **Q-Anchored (TriviaQA) - Green:** Starts at approximately 20% accuracy at layer 0, rises to around 90% at layer 5, then fluctuates between 60-90% for layers 6-25.

* **A-Anchored (TriviaQA) - Pink:** Starts at approximately 20% accuracy at layer 0, rises to around 60% at layer 5, then fluctuates between 40-70% for layers 6-25.

* **Q-Anchored (HotpotQA) - Light Blue (dashed):** Starts at approximately 60% accuracy at layer 0, dips to around 20% at layer 2, then fluctuates between 40-80% for layers 3-25.

* **A-Anchored (HotpotQA) - Purple (dashed):** Starts at approximately 40% accuracy at layer 0, dips to around 20% at layer 2, then fluctuates between 30-50% for layers 3-25.

* **Q-Anchored (NQ) - Dark Blue:** Starts at approximately 60% accuracy at layer 0, dips to around 30% at layer 2, then fluctuates between 40-60% for layers 3-25.

* **A-Anchored (NQ) - Brown:** Starts at approximately 20% accuracy at layer 0, rises to around 40% at layer 3, and remains relatively stable between 30-50% for layers 4-25.

### Key Observations

* **Q-Anchored generally outperforms A-Anchored:** Across all datasets, the Q-Anchored methods consistently achieve higher accuracy than the A-Anchored methods.

* **PopQA shows high initial accuracy:** The PopQA dataset, when Q-Anchored, starts with the highest accuracy in both models.

* **Accuracy fluctuates with layer:** Most datasets exhibit fluctuations in accuracy as the layer number increases, suggesting that the model's performance is not consistently improving with depth.

* **3B model shows more sustained accuracy:** The Llama-3.2-3B model generally maintains higher accuracy levels across layers compared to the Llama-3.2-1B model.

* **Initial dip in accuracy:** Many lines show a dip in accuracy around layer 2, potentially indicating a learning phase or adjustment period.

### Interpretation

The data suggests that question-anchoring (Q-Anchored) is a more effective method for improving answer accuracy in these Llama models compared to answer-anchoring (A-Anchored). The higher accuracy of the 3B model indicates that increasing model size generally leads to better performance. The fluctuations in accuracy across layers suggest that accuracy does not improve monotonically with depth; there are likely complex interactions between layers and datasets. The initial dip in accuracy could be due to the model adjusting to the specific characteristics of each dataset. The PopQA dataset, with its high initial accuracy, might be easier for the models or more aligned with their pre-training data. These charts provide insight into the performance of Llama models on different QA tasks and can inform future model development and training strategies.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Answer Accuracy Across Layers for Llama-3.2 Models

### Overview

The image displays two side-by-side line charts comparing the "Answer Accuracy" of different question-answering methods across the layers of two language models: Llama-3.2-1B (left) and Llama-3.2-3B (right). Each chart plots multiple data series, distinguished by color and line style, representing different anchoring methods (Q-Anchored vs. A-Anchored) applied to four distinct QA datasets.

### Components/Axes

* **Chart Titles:**

* Left Chart: `Llama-3.2-1B`

* Right Chart: `Llama-3.2-3B`

* **Y-Axis (Both Charts):**

* Label: `Answer Accuracy`

* Scale: 0 to 100, with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100).

* **X-Axis (Both Charts):**

* Label: `Layer`

* Scale (Left Chart - 1B): 0 to 15, with major tick marks at 0, 5, 10, 15.

* Scale (Right Chart - 3B): 0 to 25, with major tick marks at 0, 5, 10, 15, 20, 25.

* **Legend (Bottom, spanning both charts):**

* **Q-Anchored (Solid Lines):**

* Blue Solid Line: `Q-Anchored (PopQA)`

* Green Solid Line: `Q-Anchored (TriviaQA)`

* Purple Solid Line: `Q-Anchored (HotpotQA)`

* Pink Solid Line: `Q-Anchored (NQ)`

* **A-Anchored (Dashed Lines):**

* Orange Dashed Line: `A-Anchored (PopQA)`

* Red Dashed Line: `A-Anchored (TriviaQA)`

* Brown Dashed Line: `A-Anchored (HotpotQA)`

* Gray Dashed Line: `A-Anchored (NQ)`

### Detailed Analysis

**Llama-3.2-1B (Left Chart):**

* **General Trend:** The Q-Anchored methods (solid lines) generally achieve higher accuracy than the A-Anchored methods (dashed lines) across most layers, but exhibit significantly higher variance (indicated by the shaded confidence bands).

* **Q-Anchored Series:**

* **PopQA (Blue):** Starts low (~10% at layer 0), rises sharply to a peak near 95% around layer 3-4, then fluctuates with a general downward trend, ending near 70% at layer 15.

* **TriviaQA (Green):** Starts near 0%, climbs steadily to a peak of ~90% around layer 10, then declines slightly.

* **HotpotQA (Purple):** Shows high volatility. Starts near 0%, spikes to ~80% around layer 2, drops, then has another major peak near 90% around layer 10.

* **NQ (Pink):** Also highly volatile. Starts near 0%, peaks near 80% around layer 3, drops sharply, then has another peak near 90% around layer 12.

* **A-Anchored Series:** All four dashed lines (Orange, Red, Brown, Gray) cluster in a lower band, mostly between 40% and 60% accuracy. They show relatively stable performance with minor fluctuations across layers, lacking the dramatic peaks of the Q-Anchored lines.

**Llama-3.2-3B (Right Chart):**

* **General Trend:** Similar to the 1B model, Q-Anchored methods outperform A-Anchored methods. The overall accuracy levels are higher, and the performance peaks are more pronounced and sustained.

* **Q-Anchored Series:**

* **PopQA (Blue):** Rises quickly from ~20% to over 90% by layer 5, maintains high accuracy (>80%) with fluctuations across the remaining layers.

* **TriviaQA (Green):** Shows a strong, steady climb from near 0% to a plateau of ~95% accuracy from layer 10 onward.

* **HotpotQA (Purple):** Exhibits a volatile but high-performing trajectory, with multiple peaks above 90% between layers 5-20.

* **NQ (Pink):** Rises to ~80% by layer 5, then fluctuates between 60% and 90% for the remaining layers.

* **A-Anchored Series:** Again, the four dashed lines cluster together, but at a slightly lower level than in the 1B model, primarily between 30% and 50% accuracy. They remain relatively flat across layers.

### Key Observations

1. **Anchoring Method Dominance:** Across both model sizes and all four datasets, the Q-Anchored (question-anchored) approach consistently yields higher answer accuracy than the A-Anchored (answer-anchored) approach.

2. **Model Size Effect:** The larger 3B model achieves higher peak accuracies and sustains high performance across more layers compared to the 1B model, especially for the Q-Anchored methods.

3. **Dataset Variability:** The performance of Q-Anchored methods varies significantly by dataset. TriviaQA (green) shows the most stable high performance in the 3B model, while HotpotQA (purple) and NQ (pink) are more volatile in both models.

4. **Layer Sensitivity:** Q-Anchored accuracy is highly sensitive to the layer, showing dramatic peaks and troughs. A-Anchored accuracy is largely insensitive to the layer, remaining in a narrow, lower band.

5. **Early Layer Performance:** Both models show a rapid increase in accuracy for Q-Anchored methods within the first 5 layers.
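Observations 1 and 4 could be checked numerically with two simple per-dataset statistics: the mean Q-Anchored minus A-Anchored gap across layers, and the spread of each series across layers. The sketch below is one way to compute them, assuming the per-layer accuracies are available as lists. The function name `anchoring_stats` and the sample numbers are hypothetical and are not taken from the figure.

```python
from statistics import mean, pstdev
from typing import Dict, Sequence


def anchoring_stats(q_acc: Sequence[float], a_acc: Sequence[float]) -> Dict[str, float]:
    """Compare a Q-Anchored and an A-Anchored series for one dataset.

    mean_gap > 0 means Q-Anchored is higher on average; q_spread and a_spread
    are population standard deviations across layers (layer sensitivity).
    """
    if not q_acc or len(q_acc) != len(a_acc):
        raise ValueError("series must be non-empty and of equal length")
    gaps = [q - a for q, a in zip(q_acc, a_acc)]
    return {
        "mean_gap": mean(gaps),
        "q_spread": pstdev(q_acc),
        "a_spread": pstdev(a_acc),
    }


# Hypothetical values for illustration only.
q_series = [10, 50, 90, 90]
a_series = [50, 50, 50, 50]
stats = anchoring_stats(q_series, a_series)
```

A flat series such as `a_series` yields a spread of zero, which corresponds to the "largely insensitive to the layer" pattern described for the A-Anchored lines.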

### Interpretation

The data suggests a fundamental difference in how question-anchored versus answer-anchored representations evolve through the layers of a language model for factual question answering.

* **Q-Anchored Representations** appear to develop specialized, high-fidelity information in specific middle layers (e.g., layers 3-4 for PopQA in 1B, layers 5+ for TriviaQA in 3B). The volatility indicates that this information is not uniformly distributed; certain layers become "experts" for certain types of questions. The superior performance implies that anchoring the model's internal state to the question is a more effective strategy for retrieving answer-relevant knowledge.

* **A-Anchored Representations** seem to maintain a more generic, lower-level association with potential answers throughout the network. Their flat, lower performance suggests this is a less effective strategy for pinpointing the correct answer from the model's parametric knowledge.

* The **improvement from 1B to 3B** indicates that increased model capacity allows for the development of more robust and precise question-anchored representations, leading to higher and more stable accuracy.

**In essence, the charts provide evidence that for these Llama models, how you "anchor" the internal processing (to the question vs. to the answer) has a profound impact on the model's ability to accurately recall factual knowledge, and this impact is mediated by both the specific dataset and the depth within the network.**


EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Answer Accuracy Across Layers for Llama-3.2 Models

### Overview

The image contains two side-by-side line graphs comparing answer accuracy across transformer model layers for two Llama-3.2 variants (1B and 3B parameter sizes). Each graph shows multiple data series representing different question-answering datasets (PopQA, TriviaQA, HotpotQA, NQ) and anchoring methods (Q-Anchored vs A-Anchored). The graphs use color-coded lines with distinct line styles to differentiate datasets and anchoring approaches.

### Components/Axes

- **X-axis (Layer)**:

- Left chart: 0–15 (Llama-3.2-1B)

- Right chart: 0–25 (Llama-3.2-3B)

- Discrete integer values representing transformer layers

- **Y-axis (Answer Accuracy)**:

- Scale: 0–100% (both charts)

- Continuous percentage values

- **Legends**:

- Positioned at bottom of each chart

- Color-coded with line styles:

- **Solid lines**: Q-Anchored methods

- **Dashed lines**: A-Anchored methods

- Datasets:

- PopQA (blue/orange)

- TriviaQA (green/brown)

- HotpotQA (purple/gray)

- NQ (pink/red)

### Detailed Analysis

#### Llama-3.2-1B (Left Chart)

- **Q-Anchored (PopQA)**:

- Solid blue line

- Starts at ~85% accuracy (Layer 0), dips to ~40% (Layer 5), then fluctuates between 50–70%

- **A-Anchored (PopQA)**:

- Dashed orange line

- Starts at ~50%, peaks at ~65% (Layer 10), then declines to ~40%

- **Q-Anchored (TriviaQA)**:

- Solid green line

- Starts at ~60%, peaks at ~80% (Layer 12), then declines to ~50%

- **A-Anchored (TriviaQA)**:

- Dashed brown line

- Starts at ~40%, peaks at ~60% (Layer 8), then declines to ~30%

- **Q-Anchored (HotpotQA)**:

- Solid purple line

- Starts at ~70%, peaks at ~90% (Layer 6), then declines to ~60%

- **A-Anchored (HotpotQA)**:

- Dashed gray line

- Starts at ~50%, peaks at ~70% (Layer 14), then declines to ~40%

- **Q-Anchored (NQ)**:

- Solid pink line

- Starts at ~55%, peaks at ~75% (Layer 3), then declines to ~50%

- **A-Anchored (NQ)**:

- Dashed red line

- Starts at ~45%, peaks at ~65% (Layer 7), then declines to ~40%

#### Llama-3.2-3B (Right Chart)

- **Q-Anchored (PopQA)**:

- Solid blue line

- Starts at ~75%, peaks at ~95% (Layer 10), then declines to ~65%

- **A-Anchored (PopQA)**:

- Dashed orange line

- Starts at ~55%, peaks at ~75% (Layer 15), then declines to ~50%

- **Q-Anchored (TriviaQA)**:

- Solid green line

- Starts at ~65%, peaks at ~85% (Layer 20), then declines to ~60%

- **A-Anchored (TriviaQA)**:

- Dashed brown line

- Starts at ~45%, peaks at ~70% (Layer 22), then declines to ~40%

- **Q-Anchored (HotpotQA)**:

- Solid purple line

- Starts at ~80%, peaks at ~100% (Layer 18), then declines to ~70%

- **A-Anchored (HotpotQA)**:

- Dashed gray line

- Starts at ~60%, peaks at ~80% (Layer 24), then declines to ~50%

- **Q-Anchored (NQ)**:

- Solid pink line

- Starts at ~60%, peaks at ~85% (Layer 12), then declines to ~55%

- **A-Anchored (NQ)**:

- Dashed red line

- Starts at ~50%, peaks at ~70% (Layer 16), then declines to ~45%

### Key Observations

1. **Model Size Impact**:

- 3B model shows higher peak accuracies (up to 100% vs 90% in 1B)

- 3B model exhibits greater layer-to-layer variability (e.g., HotpotQA Q-Anchored peaks at Layer 18)

2. **Anchoring Method Trends**:

- Q-Anchored methods consistently outperform A-Anchored across datasets

- A-Anchored methods show more gradual declines after initial peaks

3. **Dataset Variability**:

- HotpotQA Q-Anchored shows most dramatic peaks (100% in 3B model)

- NQ dataset exhibits the most erratic patterns (e.g., sharp dips in Layer 5 for 1B model)

4. **Layer-Specific Patterns**:

- Early layers (0–5) show higher variability in both models

- Middle layers (10–15 for 1B; 15–20 for 3B) show more stable performance
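Because the two panels span different layer ranges, peak layers such as Layer 6 (1B) and Layer 18 (3B) for Q-Anchored HotpotQA are easier to compare as a fraction of network depth. The sketch below shows that normalization. The helper `relative_depth` is hypothetical, and the last-layer indices are assumptions taken from the x-axis ranges reported in this description, not confirmed layer counts.

```python
def relative_depth(layer: int, last_layer: int) -> float:
    """Map a layer index to [0, 1], where 0 is the first layer and 1 the last."""
    if last_layer <= 0:
        raise ValueError("last_layer must be positive")
    if not 0 <= layer <= last_layer:
        raise ValueError("layer must lie between 0 and last_layer")
    return layer / last_layer


# Assumed from the x-axis ranges in the description (0-15 and 0-25);
# the true number of layers in each model may differ.
LAST_LAYER_1B = 15
LAST_LAYER_3B = 25

# Peak layers for Q-Anchored (HotpotQA) as reported in this description.
depth_1b = relative_depth(6, LAST_LAYER_1B)
depth_3b = relative_depth(18, LAST_LAYER_3B)
print(f"1B peak at {depth_1b:.2f} of depth, 3B peak at {depth_3b:.2f} of depth")
```

Under these assumptions the reported 3B peak sits proportionally deeper in the network than the 1B peak, which is not apparent from the raw layer indices alone.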

### Interpretation

The data suggests that:

1. **Model Size Enhances Performance**: The 3B model achieves higher peak accuracies but with increased layer-to-layer variability, indicating potential overfitting or complex internal dynamics.

2. **Q-Anchored Superiority**: Q-Anchored methods consistently outperform A-Anchored across all datasets, suggesting question-specific anchoring provides better context retention.

3. **Dataset-Specific Behavior**:

- HotpotQA benefits most from Q-Anchored methods (reaching 100% accuracy in 3B model)

- NQ dataset shows the most unstable performance, possibly due to its open-ended nature

4. **Layer Dynamics**: Early layers (0–5) may represent initial context processing, while middle layers (10–15/20) show optimized question-answer alignment.

The graphs highlight the importance of anchoring strategy and model scale in transformer-based QA systems, with larger models offering higher potential but requiring careful layer management.
