TECHNICAL ASSET FINGERPRINT

c8a02628f1cd0f2145a003bc

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

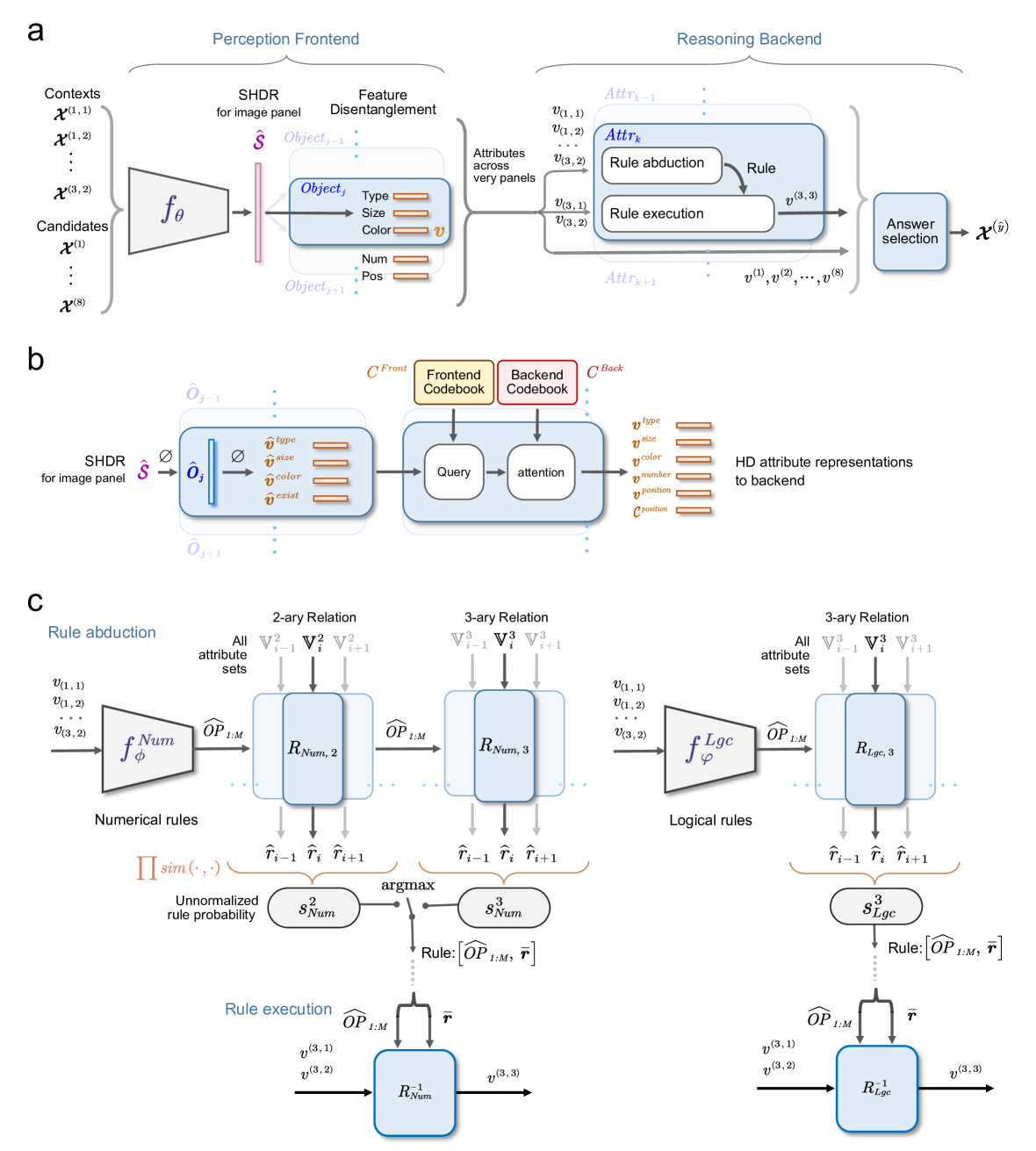

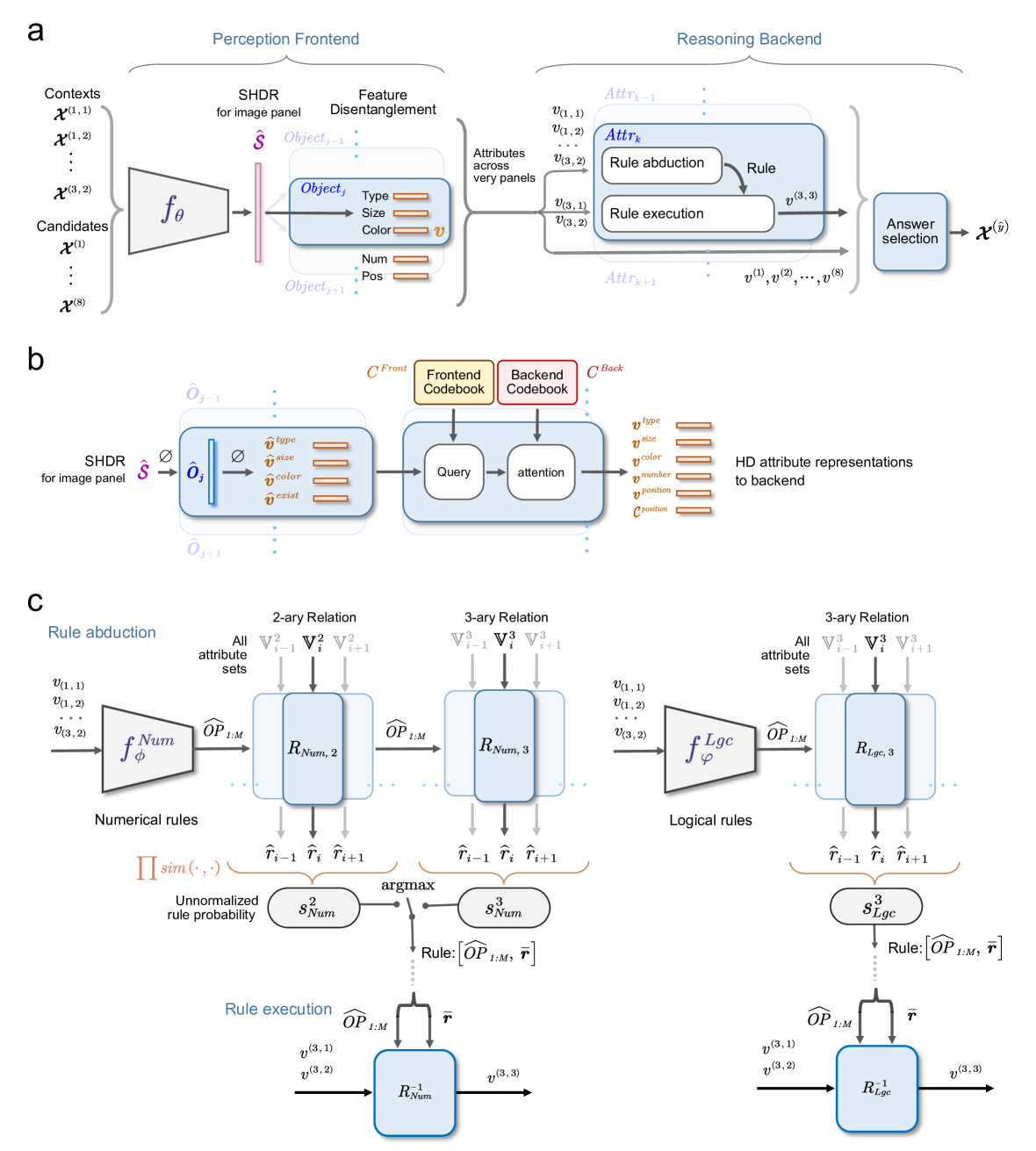

## Hybrid Perception and Reasoning System Diagram

### Overview

The image presents a diagram illustrating a hybrid perception and reasoning system, divided into a "Perception Frontend" and a "Reasoning Backend." It details the flow of information from input contexts and candidates, through feature disentanglement and rule-based reasoning, to final answer selection. The diagram includes components for SHDR (for image panel), feature disentanglement, attribute processing, rule abduction, and rule execution, with both numerical and logical rule paths.

### Components/Axes

**Overall Structure:**

* The diagram is split horizontally into three sections, labeled a, b, and c.

* Section a shows the high-level flow from perception to reasoning.

* Section b details the SHDR processing and codebook lookup.

* Section c elaborates on the rule abduction and execution processes.

**Section a: Perception Frontend and Reasoning Backend**

* **Title:** "Perception Frontend" (left), "Reasoning Backend" (right)

* **Input:**

* "Contexts": x^(1,1), x^(1,2), ..., x^(3,2)

* "Candidates": x^(1), ..., x^(8)

* **Perception Frontend Components:**

* "SHDR for image panel": Ŝ

* Function: f_θ

* "Feature Disentanglement":

* "Object_j-1":

* "Object_j":

* "Type"

* "Size"

* "Color": v

* "Num"

* "Pos"

* "Object_j+1"

* **Reasoning Backend Components:**

* "Attributes across very panels":

* "Attr_k-1"

* v^(1,1), v^(1,2), v^(3,2)

* "Attr_k":

* "Rule abduction"

* "Rule"

* "Attr_k+1"

* v^(3,1), v^(3,2)

* "Rule execution"

* "Answer selection": x^(y)

* Input to Answer Selection: v^(1), v^(2), ..., v^(8)

**Section b: SHDR Processing and Codebook Lookup**

* "SHDR for image panel": Ŝ

* Input: O_j-1

* Processing: Ø

* Output: Ô_j

* Attribute Vectors:

* v^type

* v^size

* v^color

* v^exist

* Output: O_j+1

* Codebooks:

* "C^Front Frontend Codebook": Yellow box

* "C^Back Backend Codebook": Red box

* "Query" -> "attention"

* HD attribute representations to backend:

* v^type

* v^size

* v^color

* v^number

* v^position

**Section c: Rule Abduction and Execution**

* **Left Side: Numerical Rules**

* "Rule abduction"

* Input: v^(1,1), v^(1,2), ..., v^(3,2)

* Function: f^φ_Num

* Operator: OP_1:M

* "2-ary Relation": R_Num,2

* All attribute sets: V^2_i-1, V^2_i, V^2_i+1

* "3-ary Relation": R_Num,3

* All attribute sets: V^3_i-1, V^3_i, V^3_i+1

* Outputs: r̂_i-1, r̂_i, r̂_i+1

* "Unnormalized rule probability": Π sim(.,.)

* "argmax": s^2_Num, s^3_Num

* "Rule": [OP_1:M, r]

* "Rule execution"

* Input: v^(3,1), v^(3,2)

* Operator: OP_1:M

* Output: v^(3,3)

* Relation: R^-1_Num

* **Right Side: Logical Rules**

* "Rule abduction"

* Input: v^(1,1), v^(1,2), ..., v^(3,2)

* Function: f^φ_Lgc

* Operator: OP_1:M

* "3-ary Relation": R_Lgc,3

* All attribute sets: V^3_i-1, V^3_i, V^3_i+1

* Outputs: r̂_i-1, r̂_i, r̂_i+1

* "argmax": s^3_Lgc

* "Rule": [OP_1:M, r]

* "Rule execution"

* Input: v^(3,1), v^(3,2)

* Operator: OP_1:M

* Output: v^(3,3)

* Relation: R^-1_Lgc

### Detailed Analysis

* **Perception Frontend:** The system takes contexts and candidates as input. The SHDR module processes the image panel, and feature disentanglement extracts object attributes like type, size, color, number, and position.

* **Reasoning Backend:** Attributes are processed through rule abduction and execution. The system uses both numerical and logical rules. The final step is answer selection.

* **SHDR Processing:** The SHDR module transforms the input image panel through a series of operations (represented by Ø) to produce an output. This output is then used to query frontend and backend codebooks.

* **Rule Processing:** Rule abduction uses functions (f_Num and f_Lgc) and operators (OP_1:M) to process attribute sets. The argmax function selects the best rule based on unnormalized rule probabilities. Rule execution then applies the selected rule to produce the final output.
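
The abduction and execution steps described above can be sketched as follows. This is a minimal illustration, not the system's implementation: the one-hot attribute encoding, the two toy rules (`constant`, `progression`), and all function names are assumptions introduced here, since the diagram does not specify the operators OP_1:M or the relations. Each candidate rule is scored by the product of similarities between its prediction and the observed third panel of each complete row; the argmax picks the rule, and execution applies it to the last row.

```python
import numpy as np

def one_hot(value, dim=5):
    """Toy attribute encoding (assumption): integer value -> one-hot vector."""
    v = np.zeros(dim)
    v[value] = 1.0
    return v

def cosine_sim(a, b):
    """Cosine similarity between two non-zero vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical candidate rules: each predicts the third panel from the first two.
CANDIDATE_RULES = {
    "constant": lambda v1, v2: v2,                 # value stays the same
    "progression": lambda v1, v2: np.roll(v2, 1),  # value increases by one
}

def abduce_rule(complete_rows, rules=CANDIDATE_RULES):
    """Score every rule by a product of similarities and return the argmax."""
    scores = {}
    for name, rule in rules.items():
        score = 1.0
        for v1, v2, v3 in complete_rows:
            # Clip negative similarities so the product stays a non-negative score.
            score *= max(cosine_sim(rule(v1, v2), v3), 0.0)
        scores[name] = score  # unnormalized rule score
    return max(scores, key=scores.get), scores

def execute_rule(name, v31, v32, rules=CANDIDATE_RULES):
    """Apply the selected rule to the incomplete third row."""
    return rules[name](v31, v32)

# Rows 1 and 2 are complete; row 3 is missing its last panel.
rows = [tuple(one_hot(i) for i in (0, 1, 2)),
        tuple(one_hot(i) for i in (1, 2, 3))]
best_rule, rule_scores = abduce_rule(rows)
v33 = execute_rule(best_rule, one_hot(2), one_hot(3))
print(best_rule, int(np.argmax(v33)))
```

With these toy rows the progression rule matches both complete rows exactly, so it is selected and the predicted third-row value is 4.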

### Key Observations

* The system is designed to integrate perception and reasoning.

* Feature disentanglement is a key step in extracting relevant attributes.

* Both numerical and logical rules are used in the reasoning process.

* The system uses codebooks to map attributes to representations.

### Interpretation

The diagram shows a system that combines perception and reasoning. The Perception Frontend first extracts object attributes from the input panels. The Reasoning Backend then abduces a rule for each attribute, executes that rule to predict v^(3,3), and selects the best-matching candidate as the answer. Separate numerical and logical rule paths let the system cover both kinds of relation, and the frontend and backend codebooks map attributes to the representations each stage uses.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Visual Reasoning Framework

### Overview

This diagram illustrates a visual reasoning framework composed of a Perception Frontend and a Reasoning Backend. The framework takes visual contexts as input, processes them to extract attributes, and then applies rules to arrive at an answer. The diagram is divided into three sections (a, b, and c) that detail the different stages of the process.

### Components/Axes

The diagram consists of several key components:

* **Perception Frontend:** Includes "Contexts" (x<sup>(1,1)</sup>, x<sup>(1,2)</sup>, x<sup>(1,3)</sup>), "Candidates" (x<sup>(6)</sup>), "SHDR", "Feature Disentanglement", and "Object" (with attributes: Type, Size, Color, Num, Pos).

* **Reasoning Backend:** Includes "Attributes" (v<sub>1,1</sub>, v<sub>1,2</sub>, v<sub>1,3</sub>), "Rule Abduction", "Rule Execution", and "Answer Selection" (x<sup>(7)</sup>).

* **Codebooks:** "Frontend Codebook" (C<sub>Front</sub>) and "Backend Codebook" (C<sub>Back</sub>).

* **Rule Abduction:** Depicts both "Numerical Rules" and "Logical Rules" with associated operations and probabilities.

* **Attribute Sets:** Represented as V<sup>2</sup> and V<sup>3</sup> for 2-ary and 3-ary relations respectively.

### Detailed Analysis

**Section a: Overall Framework**

* **Input:** Contexts x<sup>(1,1)</sup>, x<sup>(1,2)</sup>, x<sup>(1,3)</sup> are fed into a function *f<sub>θ</sub>* which outputs Objects.

* **Object Attributes:** Objects are characterized by attributes: Type, Size, Color, Num, Pos. These are then passed to the Reasoning Backend as Attributes v<sub>1,1</sub>, v<sub>1,2</sub>, v<sub>1,3</sub>.

* **Rule Application:** The Reasoning Backend uses Rule Abduction and Rule Execution to generate an answer y<sup>(3)</sup>, which is then used for Answer Selection x<sup>(7)</sup>.

* **SHDR:** The diagram indicates that SHDR is used "for image panel representation".

**Section b: Codebook and Attention Mechanism**

* **SHDR Output:** The SHDR outputs object representations (ô<sub>1,1</sub>).

* **Frontend Codebook:** The Frontend Codebook (C<sub>Front</sub>) maps object attributes (type, size, color, count, position) to representations.

* **Query & Attention:** A "Query" is used with an "attention" mechanism to process the object representations.

* **Backend Codebook:** The Backend Codebook (C<sub>Back</sub>) maps HD attribute representations to the backend.

**Section c: Rule Abduction and Execution**

* **Numerical Rules:**

* Input: Attributes v<sub>1,1</sub>, v<sub>1,2</sub>, v<sub>1,3</sub>.

* Operation: f<sup>Num</sup> and OP<sub>1,M</sub>.

* Relation: R<sub>Num,2</sub> and R<sub>Num,3</sub>.

* Probability: ∏ sim(·,·) and argmax (with denominator 8 * Num).

* Output: y<sup>(3),2</sup> and y<sup>(3),3</sup>.

* **Logical Rules:**

* Input: Attributes v<sub>1,1</sub>, v<sub>1,2</sub>, v<sub>1,3</sub>.

* Operation: f<sup>Lgc</sup> and OP<sub>1,M</sub>.

* Relation: R<sub>Lgc,2</sub> and R<sub>Lgc,3</sub>.

* Probability: 3 * Lgc.

* Output: y<sup>(3),2</sup> and y<sup>(3),3</sup>.

* **Rule Execution:** Both numerical and logical rules lead to Rule Execution, resulting in outputs y<sup>(3),2</sup> and y<sup>(3),3</sup>.

### Key Observations

* The framework explicitly separates perception and reasoning.

* The use of codebooks suggests a learned representation of objects and attributes.

* The rule abduction process involves both numerical and logical reasoning.

* Probabilistic elements are incorporated into the rule abduction process.

* The diagram highlights the flow of information from visual contexts to a final answer.

### Interpretation

This diagram presents a sophisticated visual reasoning system. The Perception Frontend aims to extract meaningful attributes from visual input, while the Reasoning Backend leverages these attributes to apply predefined rules and derive conclusions. The use of SHDR suggests a hierarchical understanding of scenes. The separation of numerical and logical rules indicates the system can handle different types of reasoning. The probabilistic nature of rule abduction suggests the system can deal with uncertainty and ambiguity. The attention mechanism in Section b likely allows the system to focus on relevant parts of the image when applying rules.

The diagram suggests a system capable of complex visual problem-solving, potentially applicable to tasks like visual question answering or robotic navigation. The framework's modularity allows for independent improvement of the perception and reasoning components. The inclusion of codebooks implies that the system learns to represent visual concepts in a way that facilitates reasoning. The overall architecture is designed to mimic human cognitive processes, combining perception, knowledge representation, and logical inference.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## System Architecture Diagram: Visual Reasoning Model with Perception Frontend and Reasoning Backend

### Overview

The image is a technical diagram illustrating the architecture of a machine learning model designed for visual reasoning tasks. It is divided into three main panels labeled **a**, **b**, and **c**, each detailing different aspects of the system. The overall flow moves from processing raw visual inputs (perception) to abstract reasoning and answer selection. The diagram uses a combination of block diagrams, mathematical notation, and flow arrows to depict data transformation and logical processes.

### Components/Axes

The diagram is segmented into three primary regions:

1. **Panel a (Top):** High-level overview of the complete pipeline, split into a "Perception Frontend" (left) and a "Reasoning Backend" (right).

2. **Panel b (Middle):** A detailed view of the feature disentanglement and attribute representation mechanism, bridging the frontend and backend.

3. **Panel c (Bottom):** A detailed breakdown of the "Rule abduction" and "Rule execution" processes within the Reasoning Backend, separated into numerical and logical rule pathways.

**Key Labels and Notation:**

* **Inputs:** `Contexts` (denoted as `X^(1,1)`, `X^(1,2)`, ..., `X^(3,2)`) and `Candidates` (`X^(1)` ... `X^(8)`).

* **Core Functions:** `f_θ` (Perception Frontend function), `f_Num^φ` (Numerical rule function), `f_Lgc^φ` (Logical rule function).

* **Intermediate Representations:** `Ŝ` (SHDR for image panel), `Object_j` with attributes `Type`, `Size`, `Color`, `Num`, `Pos` (collectively `v`), `Attr_k` (attributes across panels).

* **Rule Components:** `Rule abduction`, `Rule execution`, `Answer selection`.

* **Codebooks:** `Frontend Codebook (C^Front)`, `Backend Codebook (C^Back)`.

* **Attribute Vectors:** `v_type`, `v_size`, `v_color`, `v_number`, `v_position`, `c_position`.

* **Rule Types:** `2-ary Relation`, `3-ary Relation`.

* **Rule Sets:** `R_Num,2`, `R_Num,3`, `R_Lgc,3`.

* **Operations:** `Query`, `attention`, `argmax`, `Π sim(·,·)` (product of similarities).

* **Outputs:** `x^(ŷ)` (selected answer).

### Detailed Analysis

**Panel a: High-Level Pipeline**

* **Perception Frontend (Left):** Takes multiple context images and candidate images as input. These are processed by a function `f_θ` to produce `Ŝ` (SHDR for image panel). This leads to "Feature Disentanglement," where objects (`Object_j`, `Object_j+1`) are identified and their attributes (Type, Size, Color, Num, Pos) are extracted into a vector `v`.

* **Reasoning Backend (Right):** Receives attributes `v` "across very panels" (likely "across every panel"). It operates on an attribute set `Attr_k`, which undergoes "Rule abduction" to infer a rule, followed by "Rule execution" to produce a new attribute `v^(3,3)`. The same process is shown for neighboring attributes (`Attr_k-1`, `Attr_k`, `Attr_k+1`). Finally, "Answer selection" uses the processed attributes (`v^(1)`, `v^(2)`, ..., `v^(8)`) to choose the correct candidate `x^(ŷ)`.

**Panel b: Attribute Disentanglement and Representation**

* This panel details the transition from the perception frontend to the reasoning backend.

* The SHDR `Ŝ` is used to generate an object representation `Ô_j` (with a null symbol `Ø` indicating a slot or placeholder).

* This representation is decomposed into disentangled attribute vectors: `v̂_type`, `v̂_size`, `v̂_color`, `v̂_exist`.

* These vectors, along with a `Frontend Codebook (C^Front)`, are fed into a "Query" and "attention" mechanism. The attention mechanism also uses a `Backend Codebook (C^Back)`.

* The output is a set of "HD attribute representations to backend": `v_type`, `v_size`, `v_color`, `v_number`, `v_position`, `c_position`.
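
One way to read the "Query" and "attention" step is as a soft codebook lookup. The sketch below is an assumption-heavy illustration, not the diagram's actual mechanism: it treats the frontend codebook rows as keys, the backend codebook rows as values aligned row by row, and uses softmax attention with a hypothetical `temperature` parameter. The function name `translate_attribute` is also invented here.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    z = np.exp(x - np.max(x))
    return z / z.sum()

def translate_attribute(query, frontend_codebook, backend_codebook, temperature=0.1):
    """Map a frontend attribute vector to a backend representation.

    frontend_codebook: (K, D_front) array, one key per attribute value (assumed).
    backend_codebook:  (K, D_back) array, row i paired with frontend row i (assumed).
    Returns the attention-weighted backend vector and the attention weights.
    """
    q = query / np.linalg.norm(query)
    keys = frontend_codebook / np.linalg.norm(frontend_codebook, axis=1, keepdims=True)
    weights = softmax(keys @ q / temperature)  # cosine similarity -> attention
    return weights @ backend_codebook, weights

rng = np.random.default_rng(0)
c_front = rng.standard_normal((4, 16))   # toy frontend codebook
c_back = rng.standard_normal((4, 32))    # toy backend codebook
noisy_query = c_front[2] + 0.01 * rng.standard_normal(16)
hd_vector, attn = translate_attribute(noisy_query, c_front, c_back)
print(attn.round(3), hd_vector.shape)
```

A low temperature pushes the weights toward a hard lookup of the nearest frontend entry; a higher one blends several backend entries.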

**Panel c: Rule Abduction and Execution**

* This panel is split vertically into **Numerical rules** (left) and **Logical rules** (right).

* **Rule Abduction (Top Half):**

* **Numerical Path:** Attribute vectors `v` are input to `f_Num^φ`, producing operator predictions `ÔP_1:M`. These are applied to attribute sets (`V_i^2`, `V_i^3`) within rule modules `R_Num,2` and `R_Num,3` to generate rule hypotheses `r̂_i-1`, `r̂_i`, `r̂_i+1`. A similarity product `Π sim(·,·)` computes unnormalized rule probabilities `s_Num^2` and `s_Num^3`. An `argmax` operation selects the best rule, defined as `[ÔP_1:M, r̄]`.

* **Logical Path:** A parallel process uses `f_Lgc^φ` to generate rules for 3-ary relations, resulting in rule `[ÔP_1:M, r̄]` and probability `s_Lgc^3`.

* **Rule Execution (Bottom Half):**

* The selected rule `[ÔP_1:M, r̄]` is applied to input attributes (e.g., `v^(3,1)`, `v^(3,2)`).

* For numerical rules, this occurs in module `R_Num^-1`, outputting `v^(3,3)`.

* For logical rules, this occurs in module `R_Lgc^-1`, outputting `v^(3,3)`.

### Key Observations

1. **Hierarchical Abstraction:** The system moves from concrete pixel data (`Contexts`, `Candidates`) to disentangled object attributes, and finally to abstract relational rules.

2. **Dual Rule Processing:** The model explicitly separates and processes numerical and logical rules, suggesting it handles different types of reasoning (e.g., arithmetic vs. comparative) with specialized pathways.

3. **Iterative Reasoning:** The notation `Attr_k-1`, `Attr_k`, `Attr_k+1` in Panel a implies the reasoning backend operates over multiple steps or layers of attribute abstraction.

4. **Codebook-Mediated Attention:** Panel b reveals that the translation from frontend features to backend-ready attributes is not direct but is mediated by learned codebooks and an attention mechanism, likely to align feature spaces.

5. **Rule Selection via Probability:** The abduction phase doesn't just generate rules; it scores them (`s_Num^2`, `s_Num^3`, `s_Lgc^3`) using a similarity metric, and selects the most probable one via `argmax`.

### Interpretation

This diagram outlines a neuro-symbolic architecture for visual question answering or similar visual reasoning tasks. The **Perception Frontend** acts as a vision backbone that parses images into structured, object-centric representations (disentangled attributes). The **Reasoning Backend** is a symbolic or differentiable logic engine that manipulates these attributes using learned rules.

The core innovation appears to be the **Rule Abduction** mechanism. Instead of having a fixed set of rules, the model *infers* the most applicable rule (numerical or logical, 2-ary or 3-ary) from the current context by scoring candidate rules. This makes the system more flexible and capable of handling novel reasoning problems. The separation into numerical and logical streams suggests an inductive bias built into the model to handle these fundamentally different types of relations efficiently.

The flow from `v` (raw attributes) to `v̂` (disentangled attributes) to `v` (HD representations) to finally `v^(3,3)` (a predicted attribute after rule execution) shows a complete cycle of perception, reasoning, and prediction. The final "Answer selection" module likely compares the predicted outcome `v^(3,3)` or the state of all candidates against the expected answer format to choose the correct image candidate `x^(ŷ)`. This architecture aims to combine the pattern recognition strengths of neural networks with the interpretability and systematic generalization of symbolic reasoning.
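
The comparison of a predicted `v^(3,3)` against the candidate attributes could look like the sketch below. It assumes cosine similarity as the matching score and a single attribute vector per candidate; both are illustrative choices, and `select_answer` is a hypothetical name.

```python
import numpy as np

def select_answer(predicted, candidates):
    """Return the index of the candidate most similar to the prediction.

    predicted:  (D,) predicted attribute vector for the missing panel.
    candidates: (N, D) attribute vectors of the N candidate panels.
    """
    cand = np.asarray(candidates, dtype=float)
    pred = np.asarray(predicted, dtype=float)
    sims = cand @ pred / (np.linalg.norm(cand, axis=1) * np.linalg.norm(pred))
    return int(np.argmax(sims)), sims

candidate_vectors = np.eye(8)            # eight toy candidates
predicted_vector = candidate_vectors[5]  # prediction equals candidate 5
answer_index, similarities = select_answer(predicted_vector, candidate_vectors)
print(answer_index)
```

With several attributes per panel, the per-attribute similarities would have to be combined (for example summed) before the argmax; how the system does this is not shown in the diagram.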

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## System Architecture Diagram: Perception, Reasoning, and Rule Execution Framework

### Overview

The image presents a three-part technical diagram illustrating a cognitive system architecture. Part A shows the system's frontend-backend structure, Part B details attribute processing components, and Part C demonstrates rule-based reasoning mechanisms. The diagrams use standardized flowchart notation with color-coded components and directional arrows indicating information flow.

### Components/Axes

**Part A (Frontend-Backend Structure):**

- **Perception Frontend:**

- Input: Contexts (X₁₁, X₁₂, …, X₃₂), Candidates (X₁, …, X₈)

- Processing: SHDR, Feature disentanglement

- Output: Object attributes (Type, Size, Color, Position)

- **Reasoning Backend:**

- Components: Rule abduction, Rule execution

- Output: Answer selection (X̂_y)

**Part B (Attribute Processing):**

- Input: SHDR (Ŝ)

- Components:

- Frontend Codebook (C_Front)

- Backend Codebook (C_Back)

- Query & Attention mechanisms

- Output: High-dimensional (HD) attribute representations

**Part C (Rule-Based Reasoning):**

- **Rule Abduction:**

- Input: Numerical rules (f_φ), Attribute sets (V², V³)

- Components:

- 2-ary Relation (R_Num,2)

- 3-ary Relation (R_Num,3)

- Output: Unnormalized rule probabilities (s_Num², s_Num³)

- **Rule Execution:**

- Input: Logical rules (f_Lgc)

- Components:

- 3-ary Relation (R_Lgc,3)

- Rule execution module (R⁻¹_Num, R⁻¹_Lgc)

- Output: Final attribute values (v₃₁, v₃₂, v₃₃)

### Detailed Analysis

**Part A Flow:**

1. Contextual information (X) → SHDR processing → Feature disentanglement

2. Disentangled features → Attribute extraction (Type, Size, Color, Position)

3. Attributes → Rule abduction → Rule execution → Final answer selection

**Part B Flow:**

1. SHDR (Ŝ) → Frontend Codebook (C_Front) → Query generation

2. Query → Attention mechanism → Backend Codebook (C_Back)

3. HD attribute representations generated through this pipeline

**Part C Mechanisms:**

- **Numerical Rules:**

- Attribute sets (V², V³) → 2-ary/3-ary relations

- Probability calculation: argmax(s_Num², s_Num³)

- **Logical Rules:**

- Attribute sets (V³) → 3-ary relations

- Probability calculation: s_Lgc³

- Both pathways converge through rule execution modules

### Key Observations

1. **Modular Design:** Clear separation between perception (frontend) and reasoning (backend) components

2. **Attribute Hierarchy:** Attributes progress from basic features (Type, Size) to complex representations (HD attributes)

3. **Rule Integration:** Dual pathways for numerical and logical rule processing

4. **Temporal Flow:** Information flows sequentially from perception through reasoning to final output

5. **Color Coding:**

- Blue: Core processing modules

- Yellow: Frontend components

- Red: Backend components

- Orange: Rule-related elements

### Interpretation

This architecture demonstrates a hybrid cognitive system combining:

1. **Perceptual Processing:** Initial context analysis and feature extraction

2. **Attribute Representation:** Multi-level feature disentanglement and HD representation

3. **Rule-Based Reasoning:** Dual-path integration of numerical and logical inference

4. **Decision Making:** Final answer selection through rule execution

The system appears designed for complex pattern recognition and decision-making tasks, with explicit mechanisms for:

- Contextual awareness (multiple context inputs)

- Feature abstraction (from raw data to HD attributes)

- Multi-modal rule application (numerical + logical)

- Probabilistic inference (unnormalized rule probabilities)

Notable design choices include the use of both 2-ary and 3-ary relational processing, suggesting capability to handle both binary and ternary relationships in reasoning tasks. The attention mechanism in Part B implies dynamic feature weighting during processing.
