## Diagram: Neural Network Computational Graph

### Overview

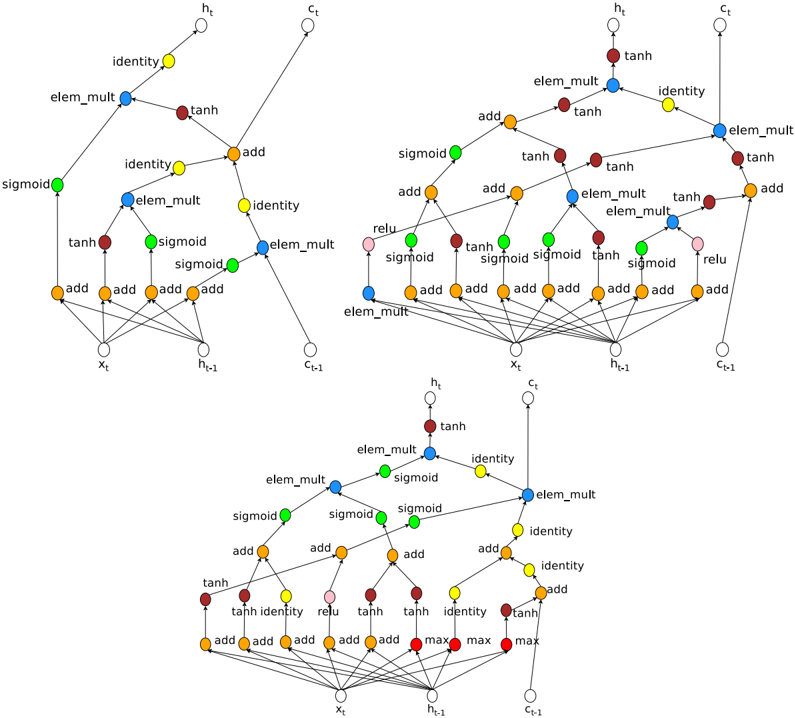

The image shows three computational graphs of neural network operations, likely cells from a recurrent architecture (e.g., LSTM or GRU). Nodes represent mathematical operations or activations, and edges indicate data flow. The three graphs are stacked vertically, grow in complexity from top to bottom, and share several components.

### Components

- **Nodes**:

  - **Red**: `tanh` (hyperbolic tangent activation)

  - **Blue**: `elem_mult` (element-wise multiplication)

  - **Green**: `sigmoid` (sigmoid activation)

  - **Orange**: `add` (element-wise addition)

  - **Yellow**: `identity` (no-op operation)

  - **Pink**: `relu` (rectified linear unit)

- **Labels**:

  - Input: `x_t` (current time step input)

  - Hidden states: `h_t` (current), `h_t-1` (previous)

  - Cell states: `c_t` (current), `c_t-1` (previous)

- **Flow Direction**: Left-to-right (typical for sequential processing).
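The node types in the legend can be read as small element-wise functions. The following is a minimal sketch, assuming every node acts on plain lists of floats; the `NODE_OPS` table and the function signatures are illustrative names, not something taken from the diagram.

```python
import math


def _sigmoid_scalar(a):
    # Numerically stable logistic function for a single float.
    if a >= 0:
        return 1.0 / (1.0 + math.exp(-a))
    e = math.exp(a)
    return e / (1.0 + e)


def tanh(v):
    return [math.tanh(a) for a in v]


def sigmoid(v):
    return [_sigmoid_scalar(a) for a in v]


def relu(v):
    return [max(0.0, a) for a in v]


def identity(v):
    # No-op node: passes its input through unchanged.
    return list(v)


def elem_mult(u, v):
    return [a * b for a, b in zip(u, v)]


def add(u, v):
    return [a + b for a, b in zip(u, v)]


# Hypothetical lookup table keyed by the node labels used in the legend.
NODE_OPS = {
    "tanh": tanh,
    "sigmoid": sigmoid,
    "relu": relu,
    "identity": identity,
    "elem_mult": elem_mult,
    "add": add,
}
```

For example, `NODE_OPS["elem_mult"]([1.0, 2.0], [3.0, 4.0])` multiplies the two vectors position by position.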

### Detailed Analysis

1. **Top Diagram**:

   - **Structure**:

     - `x_t` → `tanh` (red) → `elem_mult` (blue) → `add` (orange) → `h_t` (output).

     - `h_t-1` and `c_t-1` feed into `elem_mult` and `add` operations.

   - **Key Connections**:

     - `tanh` output is element-wise multiplied with `h_t-1` (blue node).

     - Result added to `c_t-1` (orange `add` node) to produce `h_t`.

2. **Middle Diagram**:

   - **Structure**:

     - Expands on top diagram with additional `sigmoid` (green) and `identity` (yellow) nodes.

     - `sigmoid` gates modulate `elem_mult` operations.

   - **Key Connections**:

     - `sigmoid` outputs control element-wise multiplications (e.g., `sigmoid` → `elem_mult` → `add`).

     - `identity` nodes preserve values for skip connections.

3. **Bottom Diagram**:

   - **Structure**:

     - Most complex, with `max` operations (red) and `relu` (pink).

     - Introduces parallel paths for gradient computation.

   - **Key Connections**:

     - `max` operations aggregate gradients across multiple paths.

     - `relu` applied to intermediate states for non-linearity.
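The connections described above can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a reconstruction of the figure: `top_cell_step` follows the top diagram as described (`tanh` of `x_t`, multiplied by `h_t-1`, added to `c_t-1`), while `sigmoid_gated_add` and `elem_max` are hypothetical helpers that only show the `sigmoid` → `elem_mult` → `add` pattern and an element-wise reading of `max`. Weight matrices, if the figure implies any, are omitted.

```python
import math


def _sigmoid(a):
    # Numerically stable logistic function for a single float.
    if a >= 0:
        return 1.0 / (1.0 + math.exp(-a))
    e = math.exp(a)
    return e / (1.0 + e)


def top_cell_step(x_t, h_prev, c_prev):
    """Top diagram as described: h_t = tanh(x_t) * h_{t-1} + c_{t-1}."""
    activated = [math.tanh(x) for x in x_t]              # x_t -> tanh
    gated = [a * h for a, h in zip(activated, h_prev)]   # elem_mult with h_{t-1}
    return [g + c for g, c in zip(gated, c_prev)]        # add c_{t-1} -> h_t


def sigmoid_gated_add(gate_input, value, carry):
    """Middle-diagram pattern (assumed wiring): sigmoid -> elem_mult -> add."""
    gate = [_sigmoid(g) for g in gate_input]             # gate values in (0, 1)
    scaled = [g * v for g, v in zip(gate, value)]        # gate modulates the value
    return [s + c for s, c in zip(scaled, carry)]        # combine with carried state


def elem_max(u, v):
    """Assumed element-wise reading of the bottom diagram's max node."""
    return [max(a, b) for a, b in zip(u, v)]
```

A gate input of `0.0` yields a gate value of `0.5`, so `sigmoid_gated_add([0.0], [2.0], [1.0])` passes half of the value through before adding the carried state.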

### Key Observations

- **Skip Connections**: `identity` nodes enable residual connections, preserving gradients during backpropagation.

- **Temporal Dependency**: `h_t-1` and `c_t-1` propagate information across time steps.

- **Color Consistency**: Node colors align with their labels (e.g., all `tanh` nodes are red).

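- **Gradient Flow**: If the `max` nodes in the bottom diagram denote an element-wise maximum, each one forwards only the larger of its inputs, so during backpropagation the gradient flows along the selected path only. This may hint at attention-like selection or some form of gradient regulation, though the diagram alone does not confirm either.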
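The temporal dependency noted above can be illustrated by unrolling a cell over a sequence, where the hidden and cell states produced at one step become `h_t-1` and `c_t-1` for the next. The sketch below is a minimal example; `toy_cell` is a hypothetical stand-in chosen for brevity and is not one of the three cells in the figure.

```python
import math


def toy_cell(x_t, h_prev, c_prev):
    """Hypothetical cell (not read from the diagram): accumulates tanh(x_t) in c."""
    c_t = [c + math.tanh(x) for c, x in zip(c_prev, x_t)]
    h_t = [math.tanh(c) for c in c_t]
    return h_t, c_t


def unroll(cell, inputs, h0, c0):
    """Apply `cell` to each input in order, carrying (h, c) between time steps."""
    h, c = h0, c0
    hidden_states = []
    for x_t in inputs:
        # The states from step t-1 are the h_prev / c_prev arguments at step t.
        h, c = cell(x_t, h, c)
        hidden_states.append(h)
    return hidden_states, c
```

Calling `unroll(toy_cell, [[1.0], [1.0]], [0.0], [0.0])` returns one hidden state per input plus the final cell state, which after two steps has accumulated `tanh(1.0)` twice.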

### Interpretation

These diagrams illustrate the forward and backward passes of a recurrent neural network, likely an LSTM cell or a variant of one. The `sigmoid` gates and `tanh` activations regulate information flow, while `elem_mult` and `add` combine the hidden and cell states. The `max` and `relu` operations in the bottom diagram hint at more advanced variants (e.g., attention or gradient regulation). The graphs emphasize modularity, with reusable components (e.g., `elem_mult` blocks) and efficient computation through element-wise operations. The absence of explicit numerical values suggests a conceptual representation rather than empirical data.
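For comparison, the standard LSTM cell is usually written as the equations below, where $\sigma$ is the sigmoid and $\odot$ is element-wise multiplication. The weight matrices $W$, $U$ and biases $b$ are part of the textbook formulation and are assumptions here; they are not labeled in the diagram, and the three graphs may not match this form exactly.

$$
\begin{aligned}
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i) \\
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f) \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o) \\
\tilde{c}_t &= \tanh(W_c x_t + U_c h_{t-1} + b_c) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
$$

In this formulation the `sigmoid` nodes correspond to the gates $i_t$, $f_t$, $o_t$, the `elem_mult` nodes to the $\odot$ products, and the `add` node to the sum that forms $c_t$.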