
## Diagram: Word Embedding Transformation

### Overview

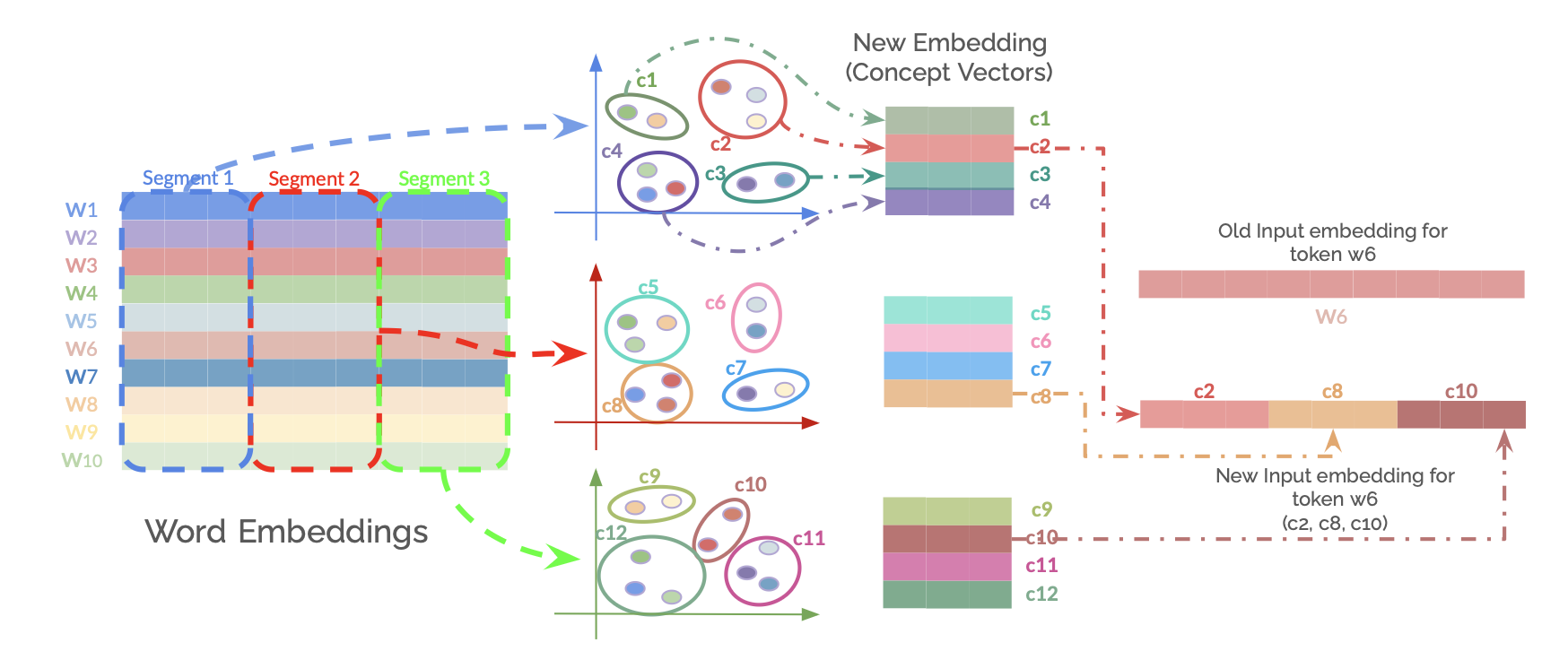

This diagram shows how word embeddings are transformed into new embeddings built from concept vectors, using segments and concept groupings. Ten word embeddings (W1-W10) are processed and potentially combined to form the new representations. Token w6 serves as the worked example: the diagram contrasts its old embedding with its new one.

### Components

The diagram consists of three main sections:

1. **Word Embeddings:** A grid representing the initial word embeddings. The rows are labeled W1 through W10, and the columns are divided into three segments: Segment 1, Segment 2, and Segment 3.

2. **New Embedding (Concept Vectors):** Three circular groupings of colored areas (c1-c12) representing the new embeddings or concept vectors. Each grouping is visually distinct and connected by arrows indicating transformation.

3. **Token w6 Transformation:** The old and new input embeddings for token w6, separated by a vertical dashed line. The old embedding is a single vector, while the new embedding is composed of three vectors: c2, c8, and c10.

### Detailed Analysis

**Word Embeddings Section:**

* The grid is 10 rows x 3 columns.

* Segment 1 (leftmost column) is predominantly blue, with some red in rows W2 and W3.

* Segment 2 (middle column) is predominantly red, with some blue in rows W1 and W4.

* Segment 3 (rightmost column) is predominantly green, with some red in rows W1, W2, and W3, and some blue in rows W5 and W6.

* The color intensity varies within each segment, suggesting different values within the embeddings.

**New Embedding (Concept Vectors) Section:**

* **Grouping 1 (Top):** Contains concepts c1, c2, c3, and c4. c1 and c4 are blue, c2 and c3 are light blue.

* **Grouping 2 (Middle):** Contains concepts c5, c6, c7, and c8. c5 and c7 are light blue, c6 and c8 are blue.

* **Grouping 3 (Bottom):** Contains concepts c9, c10, c11, and c12. c9 and c11 are light blue, c10 and c12 are blue.

* Arrows connect the Word Embeddings section to these groupings, indicating a transformation process. The arrows are not labeled with specific weights or functions.

**Token w6 Transformation Section:**

* The "Old Input embedding for token w6" is a single vector.

* The "New Input embedding for token w6" is composed of three vectors: c2, c8, and c10.

* The dashed line visually separates the old and new embeddings.
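
One way to read this panel is as a lookup: the new embedding for w6 is assembled from one concept vector per grouping. The sketch below assumes the three concept vectors are concatenated, which the diagram does not state (they could equally be summed or averaged). The names `concept_vectors`, `SEGMENT_DIM`, `concept_index`, and `new_embedding` are hypothetical, and the vector values are random placeholders rather than data from the figure.

```python
import numpy as np

NUM_GROUPS = 3          # three groupings, as drawn in the diagram
CONCEPTS_PER_GROUP = 4  # c1-c4, c5-c8, c9-c12
SEGMENT_DIM = 2         # assumption: the diagram gives no dimensions

rng = np.random.default_rng(0)
# concept_vectors[g, k] holds concept number g * CONCEPTS_PER_GROUP + k + 1.
concept_vectors = rng.normal(size=(NUM_GROUPS, CONCEPTS_PER_GROUP, SEGMENT_DIM))


def concept_index(label):
    """Map a 1-based concept label (8 for c8) to a (group, slot) pair."""
    return divmod(label - 1, CONCEPTS_PER_GROUP)


def new_embedding(labels):
    """Concatenate the concept vectors named by `labels` (assumed composition)."""
    parts = [concept_vectors[concept_index(label)] for label in labels]
    return np.concatenate(parts)


# Token w6 is composed of c2, c8, and c10 in the diagram.
w6_new = new_embedding([2, 8, 10])
print(w6_new.shape)
```

Under this reading, tokens that share a concept in some grouping also share the corresponding slice of their new embedding.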

### Key Observations

* The transformation process appears to decompose a single word embedding (w6) into a combination of concept vectors (c2, c8, c10).

* Every concept is drawn in either blue or light blue. The shading may indicate similarity among concepts, but the diagram provides no legend to confirm this.

* The segments in the Word Embeddings section may represent different aspects or features of the words.

* The diagram does not provide numerical values for the embeddings, only visual representations.

### Interpretation

The diagram illustrates distributed representation learning, in which words are represented as vectors in a high-dimensional space. The transformation suggests a way to refine or decompose these embeddings into more meaningful concept vectors. The segmentation of the initial word embeddings implies that words are analyzed along different features or contexts. Token w6 is decomposed into c2, c8, and c10, one concept from each of the three groupings, which suggests that its meaning can be expressed as a combination of these concepts.
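
The diagram does not say how the concept vectors are obtained. One plausible reading, offered here purely as an assumption, is per-segment clustering in the style of product quantization: each embedding is split into three segments, each segment is clustered into four concepts, and a word is then described by one concept label per segment. The sketch below uses a small hand-written k-means on random placeholder embeddings. The embedding width of 6, the `kmeans` helper, and the labeling scheme are all hypothetical.

```python
import numpy as np


def kmeans(points, k, iters=20, seed=0):
    """Plain Lloyd's k-means; returns (centroids, assignment per point)."""
    rng = np.random.default_rng(seed)
    start = rng.choice(len(points), size=k, replace=False)
    centroids = points[start].copy()
    for _ in range(iters):
        dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
        assign = dists.argmin(axis=1)
        for j in range(k):
            members = points[assign == j]
            if len(members) > 0:  # keep the old centroid if a cluster empties
                centroids[j] = members.mean(axis=0)
    # Recompute assignments against the final centroids.
    dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
    return centroids, dists.argmin(axis=1)


NUM_WORDS, NUM_SEGMENTS, CONCEPTS_PER_SEGMENT = 10, 3, 4

rng = np.random.default_rng(0)
embeddings = rng.normal(size=(NUM_WORDS, 6))  # placeholder for W1-W10

# Split every embedding into three equal-width segments.
segments = np.split(embeddings, NUM_SEGMENTS, axis=1)

# labels[i, g] is the 1-based concept number chosen for word i in segment g.
labels = np.empty((NUM_WORDS, NUM_SEGMENTS), dtype=int)
for g, segment in enumerate(segments):
    _, assign = kmeans(segment, CONCEPTS_PER_SEGMENT, seed=g)
    labels[:, g] = g * CONCEPTS_PER_SEGMENT + assign + 1

print(labels[5])  # concept labels for w6 under this assumed scheme
```

With real embeddings, a row of `labels` plays the role of the c2, c8, c10 triple shown for w6. The specific labels printed here come from random data and carry no meaning.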

The diagram does not specify the transformation function or the criteria for grouping concepts. It is a high-level illustration of a potential process for creating more nuanced, context-aware word representations, with color coding serving as a visual aid for the relationships between concepts and segments. The diagram is conceptual: it depicts a process rather than presenting results, and it contains no quantifiable data.