## Diagram: Neural Network Architecture for Frame-Level Classification

### Overview

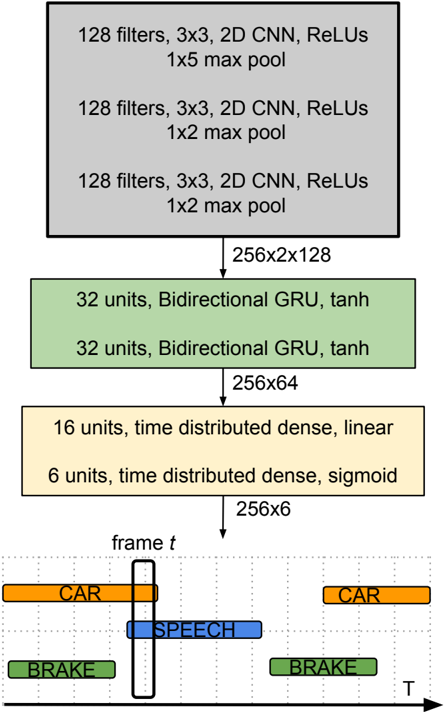

The diagram shows a neural network for frame-level classification of a time series. It stacks convolutional layers, bidirectional recurrent layers (GRUs), and time-distributed dense layers, and produces a classification for every frame. Data flows from one layer to the next, and the output dimensions are labeled at several stages. The class labels (CAR, BRAKE, SPEECH) suggest an audio event detection task, although the diagram does not name the input modality.

### Components/Axes

The diagram is structured as a series of stacked blocks representing layers. Arrows indicate the flow of data. The horizontal axis represents time (T), and the vertical axis represents the layers of the network. The diagram includes the following components:

* **Convolutional Layers (Gray):** Three stacked convolutional layers, each with 128 filters, a 3x3 kernel, ReLU activation, and max pooling. The first uses 1x5 max pooling; the second and third use 1x2 max pooling.

* **Recurrent Layers (Green):** Two stacked Bidirectional GRU layers, each with 32 units and tanh activation.

* **Dense Layers (Yellow):** Two stacked dense layers. The first has 16 units with a linear activation and is time-distributed. The second has 6 units with a sigmoid activation and is time-distributed.

* **Output Layer (Bottom):** A representation of the output for a single frame (frame *t*), showing classifications for "CAR", "BRAKE", and "SPEECH".

* **Dimensionality Indicators:** Text labels indicating the output dimensions of each layer (e.g., "256x2x128").

* **Time Axis:** An arrow labeled "T" indicating the temporal dimension.
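The layer stack listed above can be checked with simple shape bookkeeping. The following is a minimal sketch, not the author's implementation: the input size of 256 frames by 40 features, the use of "same" padding in the convolutions, and the reshape before the GRUs are all assumptions, since the diagram states none of them. The helper names are hypothetical.

```python
# Shape bookkeeping only; no weights or real data are involved.

def conv_same(shape, filters):
    """3x3 convolution with 'same' padding (assumed): only the channel count changes."""
    frames, features, _ = shape
    return (frames, features, filters)

def max_pool_features(shape, pool):
    """1xN max pooling, assumed to act on the feature axis and leave time untouched."""
    frames, features, channels = shape
    return (frames, features // pool, channels)

def trace_shapes(frames=256, features=40):
    # features=40 is an assumption; the diagram does not state the input size.
    shapes = {}
    x = (frames, features, 1)
    for i, pool in enumerate((5, 2, 2), start=1):
        x = max_pool_features(conv_same(x, 128), pool)
        shapes[f"conv{i}"] = x
    # Flatten features and channels so each frame becomes one vector for the GRUs.
    x = (x[0], x[1] * x[2])
    shapes["reshape"] = x
    for i in (1, 2):
        x = (x[0], 2 * 32)  # forward and backward states concatenated
        shapes[f"bigru{i}"] = x
    x = (x[0], 16)  # first time-distributed dense layer
    shapes["dense1"] = x
    x = (x[0], 6)  # second time-distributed dense layer
    shapes["dense2"] = x
    return shapes
```

Calling `trace_shapes()` returns a dictionary from layer name to output shape. Under these assumptions the third convolutional layer, the recurrent layers, and the final dense layer reproduce the 256x2x128, 256x64, and 256x6 labels from the diagram.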

### Detailed Analysis

The network processes data through the following stages:

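1. **Convolutional Block:**

   * Layer 1: 128 filters, 3x3 kernel, ReLU activation, 1x5 max pool.

   * Layer 2: 128 filters, 3x3 kernel, ReLU activation, 1x2 max pool.

   * Layer 3: 128 filters, 3x3 kernel, ReLU activation, 1x2 max pool. Output dimension: 256x2x128.

   Each pooling step shrinks the second axis (by factors of 5, 2, and 2) while the 256 time frames are preserved, so 256x2x128 can only be the shape after the third layer. The outputs of the first two layers must be larger along the second axis.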

2. **Recurrent Block:**

   * Layer 1: 32 units, Bidirectional GRU, tanh activation. Output dimension: 256x64 (32 units per direction, concatenated).

   * Layer 2: 32 units, Bidirectional GRU, tanh activation. Output dimension: 256x64.

3. **Dense Block:**

   * Layer 1: 16 units, time distributed, linear activation. Output dimension: 256x16 (one 16-value vector per frame).

   * Layer 2: 6 units, time distributed, sigmoid activation. Output dimension: 256x6.

4. **Output:** The bottom panel shows the per-frame output along the time axis, with frame *t* marked, for three classes:

   * "CAR" (orange): present at two points in time.

   * "BRAKE" (green): present at two points in time.

   * "SPEECH" (blue): present at one point in time.

The output layer suggests a multi-label classification problem, in which each frame can carry several labels at once. Only three labels are drawn in the output panel, although the final dense layer has 6 units.
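Multi-label decoding of the per-frame scores can be sketched as follows. This is an illustration only: the 0.5 threshold, the `active_labels` helper, and the score values are hypothetical, and only the three labels named in the diagram are used.

```python
LABELS = ("CAR", "BRAKE", "SPEECH")  # only the labels named in the diagram

def active_labels(frame_scores, threshold=0.5):
    """Return every label whose score reaches the threshold; several may be active at once."""
    return [label for label, score in zip(LABELS, frame_scores) if score >= threshold]

# Hypothetical scores for three consecutive frames (not taken from the diagram).
scores = [
    (0.91, 0.12, 0.08),
    (0.88, 0.76, 0.10),
    (0.05, 0.03, 0.02),
]
decoded = [active_labels(frame) for frame in scores]
```

Because each class is thresholded on its own, a frame can end up with one label, several labels, or none, which is the behavior a multi-label output needs.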

### Key Observations

* The convolutional layers extract local features from the input, and the recurrent layers then model temporal dependencies across frames.

* The bidirectional GRUs give each frame's prediction access to both past and future context, which means the model needs the full sequence before it can produce outputs.

* The time-distributed dense layers apply the same weights to every time step, so the final classification is computed frame by frame; temporal context enters only through the recurrent layers.

* The sigmoid activation in the final layer gives each class its own score between 0 and 1. Unlike softmax outputs, these scores are independent and need not sum to 1.

* The output example shows several event classes (CAR, BRAKE, SPEECH) on the same timeline, consistent with events that can overlap in time.
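The time-distributed dense layer with sigmoid output described above can be sketched in plain Python. The sizes (2 features per frame, 3 classes) and the weights are toy values chosen for illustration, not the network's parameters.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real value to a score in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def dense(vector, weights, biases, activation):
    """One dense layer applied to a single frame's feature vector."""
    return [
        activation(sum(w * v for w, v in zip(row, vector)) + b)
        for row, b in zip(weights, biases)
    ]

def time_distributed(frames, weights, biases, activation):
    """Apply the same dense layer to every frame independently."""
    return [dense(frame, weights, biases, activation) for frame in frames]

# Toy sizes: 2 input features per frame, 3 output classes. Weights are hypothetical.
weights = [[2.0, 0.0], [0.0, 2.0], [-1.0, -1.0]]
biases = [0.0, 0.0, 0.0]
frames = [[1.0, 1.0], [0.0, 0.0], [1.0, 1.0]]
probs = time_distributed(frames, weights, biases, sigmoid)
```

Identical input frames yield identical outputs regardless of their position, because the layer shares weights across time and does not mix information between frames. The scores for the first frame sum to more than 1, which a softmax output could not do.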

### Interpretation

This diagram represents a neural network for analyzing sequential data and identifying events frame by frame. The labels CAR, BRAKE, and SPEECH point to sound events in an audio recording rather than objects in video frames, though the diagram does not state the input type. The convolutional layers extract local features from each region of the input, the recurrent layers capture temporal relationships between frames, and the final dense layers map these features to per-frame class scores.

The architecture suits tasks in which both the content of each frame and its temporal context matter, such as event detection in audio or other sensor streams. Because the GRUs are bidirectional, each prediction can draw on information from both earlier and later frames. The multi-label output lets the network handle scenes in which several events occur at the same time.
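How a bidirectional layer exposes both past and future context can be shown with a toy recurrence. The sketch below deliberately replaces the GRU cell with a simple decaying accumulator, `h_t = decay * h_{t-1} + x_t`, so it illustrates only the two-direction wiring, not GRU gating. The function names are hypothetical.

```python
def scan(sequence, decay=0.5):
    """Toy recurrence h_t = decay * h_{t-1} + x_t, standing in for a GRU cell."""
    state, states = 0.0, []
    for x in sequence:
        state = decay * state + x
        states.append(state)
    return states

def bidirectional(sequence):
    """Pair each frame's forward state (past context) with its backward state (future context)."""
    forward = scan(sequence)
    backward = scan(sequence[::-1])[::-1]
    return list(zip(forward, backward))

# A single impulse at frame 2 of a 5-frame sequence.
states = bidirectional([0.0, 0.0, 1.0, 0.0, 0.0])
```

Frames before the impulse see it only through the backward state, and frames after it see it only through the forward state. Each frame ends up with two values, one per direction, in the same way that two 32-unit GRUs yield 64 values per frame.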

The per-frame feature size shrinks as data moves through the network: the 2x128 convolutional output (256 values per frame) becomes 64 values after the bidirectional GRUs (2 x 32 units), then 16 and finally 6 after the dense layers, while the 256 time frames are preserved throughout. The activation functions match their roles: ReLU in the convolutional layers, tanh in the GRUs, a linear first dense layer, and a sigmoid output that produces independent per-class scores.