## Neural Network Architecture Diagram: Temporal Event Classification System

### Overview

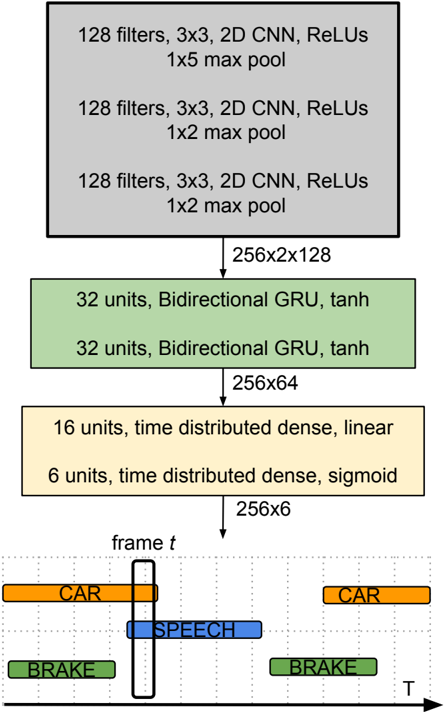

The diagram illustrates a deep learning architecture for temporal event classification, combining convolutional neural networks (CNNs), bidirectional GRUs, and dense layers. The bottom section visualizes event detection over time with color-coded labels.

### Components/Axes

1. **CNN Layers (Top Section)**

- Three 2D CNN blocks, identical except for their pooling size:

  - 128 filters, 3x3 kernel size

  - ReLU activation

  - Max pooling: 1x5 (first block), 1x2 (second and third blocks)

- Output dimensions: 256x2x128 after the third pooling stage (the 256x64 shape appears only after the GRU layer)

2. **Bidirectional GRU Layer (Middle Section)**

- 32 units per direction

- Tanh activation

- Output dimensions: 256x64

3. **Dense Layers (Bottom Section)**

- Time-distributed dense layers:

  - 16 units with linear activation

  - 6 units with sigmoid activation

- Final output dimensions: 256x6

4. **Event Timeline (Bottom Visualization)**

- Horizontal axis labeled "T" (time)

- Vertical axis labeled "frame t"

- Color-coded event detection:

  - Orange: CAR

  - Blue: SPEECH

  - Green: BRAKE

- Events shown at specific time intervals with overlapping detection windows
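The shapes listed above can be checked with a little arithmetic. The following is a minimal sketch, not the diagram's actual implementation: it assumes an input of 256 frames by 40 feature bins (the value 40 is not stated in the diagram description; it is inferred from 2 x 5 x 2 x 2 = 40), assumes the convolutions preserve their input size, and assumes the CNN output is flattened per frame before the GRU. The function name `trace_shapes` is hypothetical.

```python
def trace_shapes(frames=256, bins=40, filters=128, pools=(5, 2, 2),
                 gru_units=32, dense_units=(16, 6)):
    """Trace tensor shapes layer by layer.

    bins=40 is an ASSUMPTION inferred from the 256x2x128 CNN output;
    convolutions are assumed to keep the frame and bin counts unchanged.
    """
    shapes = [("input", (frames, bins, 1))]
    for i, pool in enumerate(pools, start=1):
        bins //= pool  # 1xN max pooling shrinks only the feature axis
        shapes.append((f"cnn_block_{i}", (frames, bins, filters)))
    # Assumed reshape: merge remaining bins and filters into one vector per frame
    shapes.append(("reshape", (frames, bins * filters)))
    # Forward and backward GRU outputs are concatenated: 2 x 32 = 64
    shapes.append(("bi_gru", (frames, 2 * gru_units)))
    for i, units in enumerate(dense_units, start=1):
        shapes.append((f"dense_{i}", (frames, units)))
    return shapes


for name, shape in trace_shapes():
    print(f"{name:12s} {shape}")
```

Under these assumptions the frame count stays at 256 from input to output, and only the per-frame feature width changes.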

### Detailed Analysis

- **CNN Hierarchy**: Three convolutional blocks extract local features while max pooling (1x5, then 1x2 twice) shrinks the non-temporal feature axis. The time axis is not pooled: all 256 frames are preserved through to the 256x6 output.

- **Temporal Processing**: The bidirectional GRU layer captures sequential dependencies across the 256 frames and produces a 256x64 output (32 units in each of 2 directions).

- **Classification**: Time-distributed dense layers enable per-frame event prediction, with sigmoid activation for multi-label classification (6 output units).

- **Event Visualization**: The timeline shows:

  - CAR events (orange) with 50% overlap between frames

  - SPEECH event (blue) spanning 3 frames

  - BRAKE events (green) with 25% overlap

  - Temporal resolution: the sequence spans 256 frames, so one frame covers 1/256 of the total duration T
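Per-frame predictions like those in the timeline are usually turned into event segments by thresholding each class independently. The sketch below illustrates that idea under stated assumptions: the 0.5 threshold, the helper name `frames_to_events`, and the probability values are all hypothetical, and only the three labels named in the diagram are used even though the network has six outputs.

```python
def frames_to_events(probs, labels, threshold=0.5):
    """Convert per-frame class probabilities into event segments.

    probs: list of frames, each a list with one probability per class.
    Returns (label, start_frame, end_frame) tuples; end_frame is exclusive.
    """
    events = []
    for c, label in enumerate(labels):
        start = None
        for t, frame in enumerate(probs):
            active = frame[c] >= threshold
            if active and start is None:
                start = t  # event onset
            elif not active and start is not None:
                events.append((label, start, t))  # event offset
                start = None
        if start is not None:  # event still active at the last frame
            events.append((label, start, len(probs)))
    return events


labels = ["CAR", "SPEECH", "BRAKE"]
# Made-up probabilities for 5 frames, for illustration only
probs = [
    [0.9, 0.1, 0.2],
    [0.8, 0.7, 0.1],
    [0.7, 0.9, 0.6],
    [0.2, 0.8, 0.9],
    [0.1, 0.2, 0.3],
]
print(frames_to_events(probs, labels))
# [('CAR', 0, 3), ('SPEECH', 1, 4), ('BRAKE', 2, 4)]
```

Because each class is thresholded on its own, the resulting segments are free to overlap in time, as they do in the timeline.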

### Key Observations

1. **Feature Reduction**: Per-frame feature dimensions shrink from 2x128 at the CNN output to 6 at the final layer (256x2x128 to 256x6), while the 256-frame time axis is unchanged.

2. **Multi-label Detection**: Sigmoid activation allows simultaneous prediction of multiple events (CAR, SPEECH, BRAKE).

3. **Temporal Smoothing**: Overlapping event windows may indicate temporal smoothing of the predictions, or simply events that occur concurrently; the diagram does not say which.

4. **Bidirectional Context**: The bidirectional GRU layer gives each frame access to both past and future context within the sequence.
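The multi-label observation rests on a property of the sigmoid: each output is squashed independently, so several classes can exceed a decision threshold in the same frame. A softmax, by contrast, forces the outputs to sum to 1. The comparison below is a small sketch with hypothetical logits and an assumed 0.5 threshold; it is not taken from the diagram.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical pre-activation scores for one frame (e.g. CAR, SPEECH, BRAKE)
logits = [2.0, 1.5, -3.0]

sig = [sigmoid(x) for x in logits]
soft = softmax(logits)

active_sigmoid = [p >= 0.5 for p in sig]
active_softmax = [p >= 0.5 for p in soft]

print(active_sigmoid)  # [True, True, False]: two events active at once
print(active_softmax)  # [True, False, False]: at most one class can exceed 0.5
```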

### Interpretation

This architecture demonstrates a hybrid approach to temporal event classification:

1. **CNN Feature Extraction**: Initial layers focus on spatial feature detection in input data.

2. **GRU Temporal Modeling**: Bidirectional processing enables context-aware sequence modeling.

3. **Dense Classification**: Final layers specialize in event probability prediction per time frame.
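To see why bidirectional processing gives each frame both past and future context, and why the output width doubles, consider the toy example below. It deliberately swaps the GRU for a much simpler recurrence (an exponential moving average), so it is only an illustration of the two-direction scan, not of GRU gating. The function names and the decay value are hypothetical.

```python
def scan(xs, decay=0.5):
    """Toy recurrence h_t = decay * h_{t-1} + (1 - decay) * x_t (NOT a real GRU)."""
    h, out = 0.0, []
    for x in xs:
        h = decay * h + (1 - decay) * x
        out.append(h)
    return out


def bidirectional(xs):
    forward = scan(xs)
    # Run right-to-left, then flip back so index t lines up with frame t
    backward = scan(xs[::-1])[::-1]
    # Concatenate the two states per frame: the feature width doubles
    return list(zip(forward, backward))


signal = [0.0, 0.0, 1.0, 0.0, 0.0]  # a single event at frame 2
states = bidirectional(signal)
for t, (f, b) in enumerate(states):
    print(t, f, b)
```

At frame 0 the forward state is still zero, while the backward state already reflects the upcoming event at frame 2. The same concatenation is why 32 units per direction yield 64 features per frame.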

The timeline visualization reveals the model's ability to:

- Detect overlapping events (e.g., CAR and BRAKE co-occurrence)

- Maintain temporal consistency across frames

- Handle multi-label classification through sigmoid outputs

The architecture's design suggests optimization for:

- Near-real-time event detection systems (the bidirectional GRU needs the full input window before it can emit predictions)

- Audio-visual processing pipelines

- Temporal pattern recognition tasks