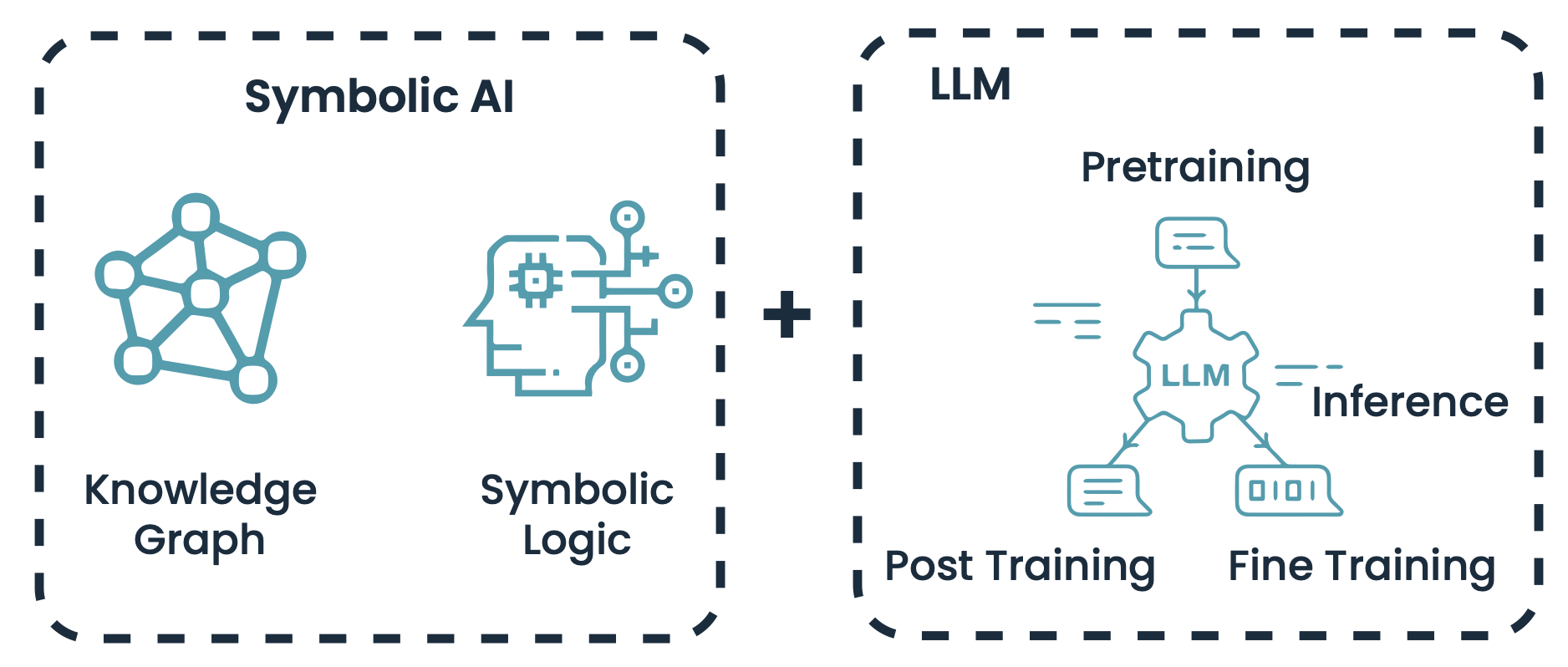

## Conceptual Diagram: Integration of Symbolic AI and Large Language Models (LLMs)

### Overview

The image is a high-level conceptual diagram illustrating the combination of two artificial intelligence paradigms: Symbolic AI and Large Language Models (LLMs). It presents these as two distinct, complementary modules that are added together, suggesting a hybrid or integrated approach to AI system design.

### Components

The diagram is structured into two main dashed-line boxes, connected by a central plus sign (`+`).

**1. Left Box: Symbolic AI**

* **Title:** "Symbolic AI" (top center of the box).

* **Components:**

    * **Knowledge Graph:** Represented by an icon of a network graph with interconnected nodes (circles). The label "Knowledge Graph" is positioned directly below the icon.

    * **Symbolic Logic:** Represented by an icon of a human head silhouette containing a microchip, with circuit lines extending outward. The label "Symbolic Logic" is positioned directly below the icon.

**2. Right Box: LLM**

* **Title:** "LLM" (top center of the box).

* **Components & Process Flow:**

    * **Central Element:** A gear icon labeled "LLM" in its center.

    * **Pretraining:** A document/chat bubble icon with an arrow pointing down into the central "LLM" gear. The label "Pretraining" is positioned above this icon.

    * **Inference:** The label "Inference" is positioned to the right of the central gear, with two horizontal lines suggesting output or process continuation.

    * **Post Training:** A document/chat bubble icon with an arrow pointing out from the bottom-left of the central "LLM" gear. The label "Post Training" is positioned below this icon.

    * **Fine Training:** A document/chat bubble icon containing binary code (`0101`) with an arrow pointing out from the bottom-right of the central "LLM" gear. The label "Fine Training" is positioned below this icon.

**3. Connecting Element:**

* A large plus sign (`+`) is centered vertically and horizontally between the two dashed boxes, indicating the combination or integration of the two systems.

### Detailed Analysis

* **Spatial Layout:** The diagram is horizontally oriented. The "Symbolic AI" module occupies the left third, the "LLM" module occupies the right two-thirds, and the plus sign is in the central gutter.

* **Visual Style:** All icons are line-art style in a teal/cyan color. All text labels are in a bold, black, sans-serif font. The two main modules are enclosed in identical black dashed-line rectangles with rounded corners.

* **Process Flow (LLM Module):** The LLM section depicts a workflow in three parts:

    1. **Input:** "Pretraining" feeds into the core LLM.

    2. **Core Processing:** The central "LLM" gear represents the model itself.

    3. **Downstream Stages:** Three labels surround the gear: "Inference," "Post Training," and "Fine Training." The arrow directions distinguish them: the Pretraining arrow points into the gear, marking it as an input, while the Post Training and Fine Training arrows point out of the gear, marking them as later refinement stages. "Inference" appears to the right of the gear, marked by two horizontal lines, and reads as a side output or usage state.

### Key Observations

1. **Conceptual, Not Quantitative:** The diagram contains no numerical data, charts, or specific metrics. It is purely a conceptual model showing relationships and components.

2. **Complementary Paradigms:** It visually argues that Symbolic AI (with its structured knowledge representation via Knowledge Graphs and rule-based reasoning via Symbolic Logic) and LLMs (with their statistical learning and generative capabilities) are distinct but combinable building blocks.

3. **LLM Lifecycle:** The LLM box explicitly outlines key stages in a model's lifecycle: initial pretraining on large datasets, inference (usage), and further refinement through post-training and fine-tuning (labeled "Fine Training" in the diagram).

4. **Iconography:** The icons are metaphorical. The Knowledge Graph icon represents structured, relational data. The Symbolic Logic icon represents reasoning and computation. The gear represents the LLM as a processing engine, and the document bubbles represent data or model states.

### Interpretation

This diagram communicates a strategic vision for advanced AI systems. It suggests that the future of robust, reliable AI may not lie in choosing between symbolic, logic-based approaches and neural, statistical approaches, but in their synthesis.

* **Symbolic AI** provides **structure, explainability, and formal reasoning**. Knowledge Graphs offer a factual backbone, while Symbolic Logic enables verifiable rule-following.

* **LLMs** provide **flexibility, pattern recognition, and natural language understanding/generation**. Their strength is in learning from vast data and performing tasks like inference and text generation.

The plus sign is the critical element. It implies that integrating the two paradigms can mitigate the weaknesses of each: LLMs can handle the ambiguity and breadth of real-world language, while Symbolic AI can ground their outputs in structured knowledge and logical consistency, potentially reducing hallucinations and improving reliability.

The inclusion of the full LLM lifecycle (Pretraining through Fine Training) suggests that this integration may be intended to occur at multiple stages of model development and deployment rather than as a simple add-on. The diagram serves as a high-level architectural blueprint for researchers and engineers aiming to build next-generation AI systems.
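As one possible reading of the plus sign, the sketch below shows a symbolic layer checking an LLM's claims against a knowledge graph. The diagram specifies none of this: the triples, the single inference rule, and the names `KNOWLEDGE_GRAPH`, `infer`, `fake_llm`, and `ground` are hypothetical, and `fake_llm` is a hard-coded stand-in rather than a real model call.

```python
# Toy knowledge graph: a set of (subject, relation, object) triples.
# The facts are illustrative assumptions, not taken from the diagram.
KNOWLEDGE_GRAPH = {
    ("Paris", "capital_of", "France"),
    ("France", "located_in", "Europe"),
}


def infer(facts):
    """Apply one symbolic rule until no new facts appear (forward chaining).

    Rule: capital_of(x, y) and located_in(y, z)  =>  located_in(x, z)
    """
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for (x, rel1, y) in list(derived):
            if rel1 != "capital_of":
                continue
            for (y2, rel2, z) in list(derived):
                if rel2 == "located_in" and y2 == y:
                    new_fact = (x, "located_in", z)
                    if new_fact not in derived:
                        derived.add(new_fact)
                        changed = True
    return derived


def fake_llm(prompt):
    """Hypothetical stand-in for an LLM: returns candidate claims as triples."""
    return [
        ("Paris", "located_in", "Europe"),   # derivable from the graph
        ("Paris", "capital_of", "Germany"),  # not supported by the graph
    ]


def ground(claims, facts):
    """Mark each claim as supported (True) or unsupported (False).

    Unsupported means "not derivable from the graph", which is weaker
    than "false": the graph may simply be incomplete.
    """
    closure = infer(facts)
    return [(claim, claim in closure) for claim in claims]


if __name__ == "__main__":
    for claim, supported in ground(fake_llm("Where is Paris?"), KNOWLEDGE_GRAPH):
        print(claim, "supported" if supported else "unsupported")
```

In this sketch the LLM proposes and the symbolic layer verifies. That is only one of several ways the two modules could be combined, and a production system would need a much richer rule set and a way to extract triples from free text.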