## Neural Network Diagram: Memory Bank Interaction

### Overview

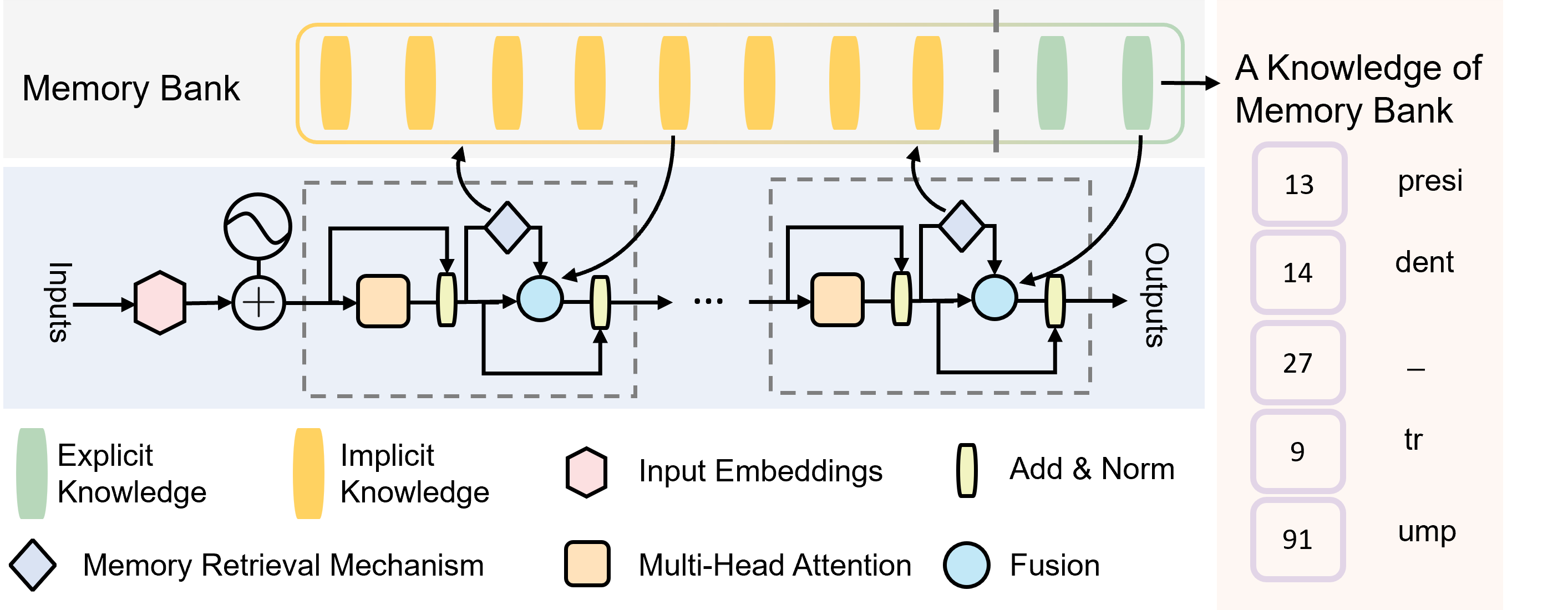

The image depicts a neural network architecture that incorporates a memory bank. The diagram illustrates how input embeddings interact with the memory bank through a series of processing steps, including multi-head attention, memory retrieval, and fusion. The right side of the image shows examples of knowledge retrieved from the memory bank.

### Components/Axes

* **Title:** Memory Bank

* **Inputs:** Labeled on the left side of the diagram.

* **Outputs:** Labeled on the right side of the diagram.

* **Memory Bank:** Represented by a series of vertical rectangles at the top, colored yellow (Implicit Knowledge) and green (Explicit Knowledge).

* **Processing Blocks:** The core processing unit is repeated, indicated by "...". Each unit contains:

    * Input Embeddings (pink hexagon)

    * Addition operation (+)

    * Multi-Head Attention (orange rectangle)

    * Add & Norm (yellow rectangle with rounded corners)

    * Fusion (light blue circle)

    * Memory Retrieval Mechanism (light blue diamond)

* **Legend:** Located at the bottom-left of the image.

    * Green: Explicit Knowledge

    * Yellow: Implicit Knowledge

    * Pink Hexagon: Input Embeddings

    * Yellow Rectangle with rounded corners: Add & Norm

    * Light Blue Diamond: Memory Retrieval Mechanism

    * Orange Rectangle: Multi-Head Attention

    * Light Blue Circle: Fusion

* **Knowledge of Memory Bank:** A list of numerical IDs and corresponding text fragments.

### Detailed Analysis

1. **Memory Bank:**

    * The memory bank at the top consists of multiple vertical rectangles.

    * The rectangles are colored yellow (Implicit Knowledge) and green (Explicit Knowledge).

    * The transition from yellow to green occurs approximately 3/4 of the way across the memory bank from left to right.

2. **Processing Blocks:**

    * Input Embeddings (pink hexagon) are fed into an addition operation along with a signal represented by a sine wave, most likely a positional encoding.

    * The output of the addition is processed by a Multi-Head Attention module (orange rectangle).

    * The output of the Multi-Head Attention module is passed through an Add & Norm layer (yellow rectangle with rounded corners).

    * A Memory Retrieval Mechanism (light blue diamond) retrieves information from the Memory Bank.

    * The retrieved information and the output of the Add & Norm layer are combined in a Fusion module (light blue circle).

    * The output of the Fusion module is passed through another Add & Norm layer (yellow rectangle with rounded corners) and becomes part of the output.

    * A feedback loop connects the output of the first Add & Norm layer back to the Memory Retrieval Mechanism, so retrieval appears to be conditioned on the attended representation.

3. **Knowledge of Memory Bank:**

    * A column on the right side of the image shows examples of knowledge retrieved from the memory bank.

    * The column is titled "A Knowledge of Memory Bank".

    * The column contains the following entries:

        * 13: presi

        * 14: dent

        * 27: -

        * 9: tr

        * 91: ump
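The data flow described under "Processing Blocks" can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the architecture's actual implementation: the diagram does not specify tensor sizes, how retrieval scores are computed, or how Fusion combines its inputs. The sketch assumes the sine wave is a sinusoidal positional signal, that retrieval is soft dot-product attention over memory slots, and that Fusion is a concatenation followed by a linear projection. All names (`processing_unit`, `retrieve`, `params`) and all sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def layer_norm(x, eps=1e-5):
    """Normalize each token vector to zero mean and unit variance."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def sinusoidal_signal(seq_len, d_model):
    """Sinusoidal positional signal (assumed meaning of the sine-wave icon)."""
    pos = np.arange(seq_len)[:, None]
    i = np.arange(d_model)[None, :]
    angle = pos / np.power(10000.0, (2 * (i // 2)) / d_model)
    return np.where(i % 2 == 0, np.sin(angle), np.cos(angle))

def multi_head_self_attention(x, n_heads, w_q, w_k, w_v, w_o):
    seq_len, d_model = x.shape
    d_head = d_model // n_heads

    def split(t):  # (seq, d_model) -> (heads, seq, d_head)
        return t.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    out = softmax(scores) @ v  # (heads, seq, d_head)
    merged = out.transpose(1, 0, 2).reshape(seq_len, d_model)
    return merged @ w_o

def retrieve(query, memory_bank):
    """Assumed retrieval: each token softly attends over all memory slots."""
    weights = softmax(query @ memory_bank.T / np.sqrt(query.shape[-1]))
    return weights @ memory_bank, weights

def processing_unit(x, memory_bank, params, n_heads=2):
    attended = multi_head_self_attention(x, n_heads, *params["attn"])
    h = layer_norm(x + attended)                  # first Add & Norm
    retrieved, weights = retrieve(h, memory_bank)  # query comes from h
    # Assumed Fusion: concatenate, then project back to d_model.
    fused = np.concatenate([h, retrieved], axis=-1) @ params["fuse"]
    return layer_norm(h + fused), weights          # second Add & Norm

# Hypothetical sizes chosen only to keep the example small.
seq_len, d_model, n_slots = 5, 8, 16
params = {
    "attn": [rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(4)],
    "fuse": rng.normal(scale=0.1, size=(2 * d_model, d_model)),
}
memory_bank = rng.normal(size=(n_slots, d_model))
embeddings = rng.normal(size=(seq_len, d_model))

x = embeddings + sinusoidal_signal(seq_len, d_model)  # the "+" node
output, weights = processing_unit(x, memory_bank, params)
print(output.shape, weights.shape)
```

Feeding the first Add & Norm output into `retrieve` mirrors the feedback connection in the diagram; stacking several calls to `processing_unit` would correspond to the repeated units indicated by "...".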

### Key Observations

* The diagram illustrates a neural network architecture that integrates a memory bank for knowledge retrieval.

* The memory bank is divided into implicit and explicit knowledge segments.

* The processing blocks use multi-head attention, memory retrieval, and fusion to process input embeddings and retrieve relevant information from the memory bank.

* The "Knowledge of Memory Bank" examples suggest that the memory bank stores fragments of words or sub-word units: for example, "presi" (13) and "dent" (14) together spell "president".
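To make the sub-word observation concrete, the short Python sketch below concatenates the five fragments in the order they appear in the figure. The IDs and fragments are taken directly from the figure; treating them as a lookup table and joining them in that order is an assumption for illustration, and `id_to_fragment` and `decode` are hypothetical names.

```python
# IDs and fragments exactly as listed in the figure's right-hand column.
knowledge_entries = [(13, "presi"), (14, "dent"), (27, "-"), (9, "tr"), (91, "ump")]

# Hypothetical lookup table built from those five entries only.
id_to_fragment = dict(knowledge_entries)

def decode(ids):
    """Concatenate the fragments for a sequence of IDs."""
    return "".join(id_to_fragment[i] for i in ids)

ids_in_figure_order = [entry_id for entry_id, _ in knowledge_entries]
decoded = decode(ids_in_figure_order)
print(decoded)  # president-trump
```

The fragments join into a single readable string, which is consistent with the entries being sub-word tokens rather than whole words.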

### Interpretation

The diagram presents a neural network model that augments an attention-based processing block with a memory bank. The memory bank likely stores knowledge that the network can look up and integrate during processing. Multi-head attention lets the network attend to different aspects of the input, while the Memory Retrieval Mechanism, which receives the Add & Norm output, selects relevant information from the memory bank. The Fusion module then combines the retrieved information with the processed input, so the block's output reflects both the input context and the stored knowledge. The examples in "A Knowledge of Memory Bank" suggest that the memory bank stores sub-word units, which could support tasks such as text generation or completion. The separation of the memory bank into "Implicit" and "Explicit" knowledge suggests a mechanism for differentiating between learned and pre-existing information.
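One way to picture the implicit/explicit split is as a tag on each memory slot, so that retrieval can report how much weight falls on each segment. The sketch below is a toy illustration only: the 3/4 boundary comes from the figure, but the slot count, vector size, random contents, and the softmax scoring rule are all assumptions, and every name is hypothetical.

```python
import math
import random

random.seed(0)

N_SLOTS, D = 16, 4                 # hypothetical sizes
N_IMPLICIT = (3 * N_SLOTS) // 4    # yellow-to-green transition at about 3/4

# Random placeholder vectors stand in for the real slot contents.
slots = [[random.gauss(0, 1) for _ in range(D)] for _ in range(N_SLOTS)]
kinds = ["implicit"] * N_IMPLICIT + ["explicit"] * (N_SLOTS - N_IMPLICIT)

def retrieval_weights(query):
    """Assumed scoring: softmax over scaled dot products with every slot."""
    scores = [sum(q * s for q, s in zip(query, slot)) / math.sqrt(D)
              for slot in slots]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def mass_by_kind(weights):
    """Sum the retrieval weight that lands on each knowledge segment."""
    mass = {"implicit": 0.0, "explicit": 0.0}
    for w, kind in zip(weights, kinds):
        mass[kind] += w
    return mass

query = [random.gauss(0, 1) for _ in range(D)]
mass = mass_by_kind(retrieval_weights(query))
print(mass)
```

Tracking the segment of each slot keeps the two kinds of knowledge in one bank while still letting downstream code tell them apart, which is one plausible reading of the color coding.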