
## Diagram: Knowledge-Augmented Neural Network Architecture

### Overview

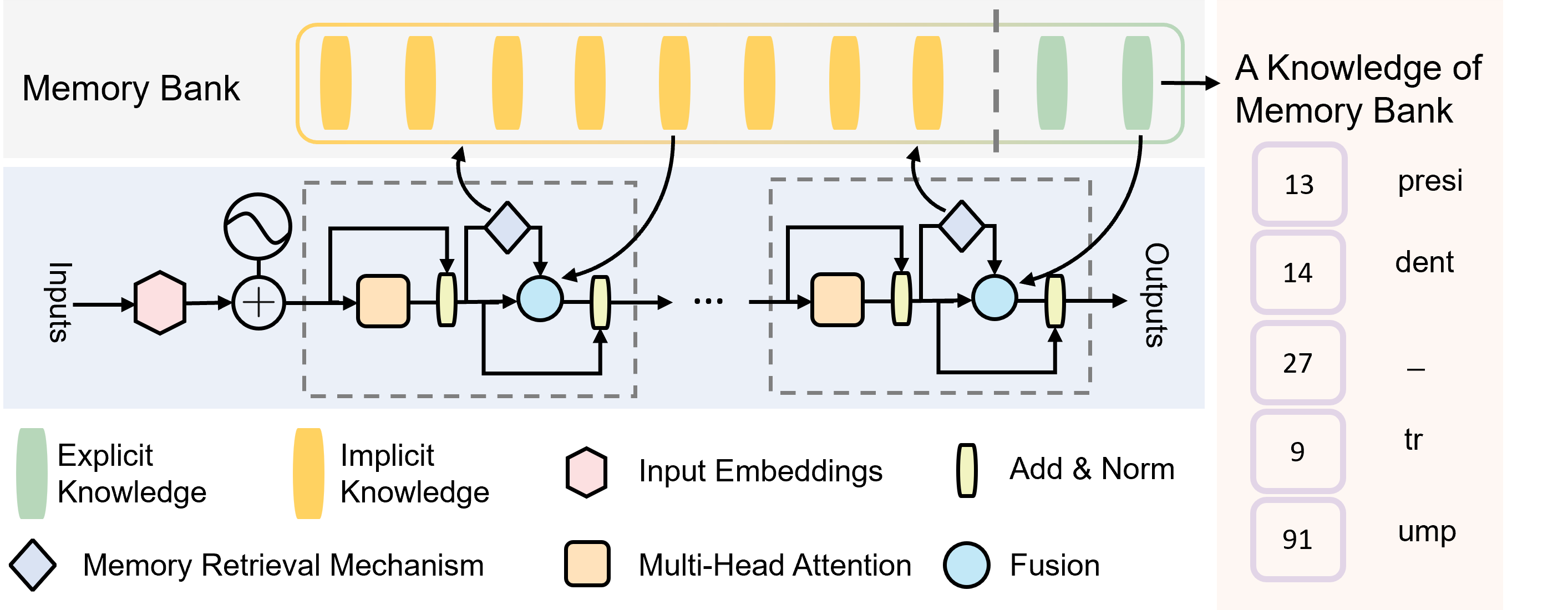

The figure shows a neural network architecture augmented with a "Memory Bank". Information flows from "Inputs" through several processing blocks that interact with the Memory Bank, and ends at "Outputs". The architecture appears to combine explicit and implicit knowledge representations.

### Components

The diagram is segmented into several key areas:

* **Memory Bank:** Located at the top, represented by a series of yellow rectangles.

* **Input Processing Blocks:** A series of interconnected blocks representing the processing of input data. These blocks are repeated multiple times (indicated by "...").

* **Output Processing Blocks:** Similar to the input blocks, these process data before generating the final outputs.

* **Inputs:** Labelled on the left side.

* **Outputs:** Labelled on the right side.

* **Legend:** Located at the bottom, defining the shapes and colors used to represent different components.

The legend defines the following components:

* **Explicit Knowledge:** Represented by a green diamond.

* **Implicit Knowledge:** Represented by a yellow rounded rectangle.

* **Input Embeddings:** Represented by a yellow rectangle.

* **Add & Norm:** Represented by a white rounded rectangle with a black outline.

* **Memory Retrieval Mechanism:** Represented by a grey diamond.

* **Multi-Head Attention:** Represented by a yellow square.

* **Fusion:** Represented by a white circle.

The right side of the diagram lists numerical values alongside text labels. These are:

* 13: presi

* 14: dent

* 27: -

* 9: tr

* 91: ump

### Detailed Analysis

Information flows from the "Inputs" through a sequence of blocks: an addition operation (marked "+"), then "Multi-Head Attention", then "Add & Norm", and finally "Fusion". This sequence is repeated multiple times, with connections to the "Memory Bank" via a "Memory Retrieval Mechanism". The Memory Bank contains a series of "Implicit Knowledge" blocks. The output of the repeated blocks feeds a second set of processing blocks, similar to the input blocks, before reaching the "Outputs".
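The block sequence described above can be sketched in NumPy. This is a minimal illustration under several assumptions, not the architecture's actual implementation: the attention has no learned projections, the quantity added at the initial "+" is not identified in the diagram and is modelled here as a hypothetical `offset` argument, and "Fusion" is modelled as a plain element-wise sum with an already retrieved memory vector. All function and parameter names are illustrative.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def layer_norm(x, eps=1e-5):
    # Normalize each row to zero mean and unit variance (no learned scale/shift).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def multi_head_attention(x, num_heads):
    # Self-attention without learned projections (an assumption made for brevity).
    seq_len, d_model = x.shape
    d_head = d_model // num_heads
    heads = x.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)  # (heads, seq, d_head)
    scores = heads @ heads.transpose(0, 2, 1) / np.sqrt(d_head)       # (heads, seq, seq)
    attended = softmax(scores, axis=-1) @ heads                       # (heads, seq, d_head)
    return attended.transpose(1, 0, 2).reshape(seq_len, d_model)


def processing_block(x, offset, retrieved, num_heads=2):
    h = x + offset                                   # the initial "+" (operand is assumed)
    attended = multi_head_attention(h, num_heads)    # "Multi-Head Attention"
    normed = layer_norm(h + attended)                # "Add & Norm"
    return normed + retrieved                        # "Fusion" (additive fusion is assumed)


rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))          # 4 positions, model width 8
offset = rng.normal(size=(4, 8))     # hypothetical operand of the "+" block
retrieved = rng.normal(size=(4, 8))  # stand-in for knowledge fetched from the Memory Bank
out = processing_block(x, offset, retrieved)
print(out.shape)
```

Stacking several calls to `processing_block` would correspond to the repetition marked by "..." in the diagram.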

The Memory Bank consists of approximately 10 yellow rectangles, representing stored knowledge. The connections between the processing blocks and the Memory Bank are indicated by dashed arrows, suggesting a retrieval or interaction process.
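The diagram does not say how the Memory Retrieval Mechanism selects entries. One common choice, shown here purely as an assumption, is to score every memory slot by its dot product with a query vector, keep the top-k slots, and return their softmax-weighted average. The 10-slot bank mirrors the approximate count in the figure; the slot contents, the query, and the function name `retrieve` are made up for illustration.

```python
import math


def retrieve(query, memory_bank, top_k=2):
    # Score each slot by dot-product similarity with the query (assumed metric).
    scores = [sum(q * m for q, m in zip(query, slot)) for slot in memory_bank]
    # Keep the indices of the top_k highest-scoring slots.
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Softmax over the selected scores, shifted by the max for stability.
    max_score = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - max_score) for i in top]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Weighted average of the selected slots, one dimension at a time.
    retrieved = [
        sum(w * memory_bank[i][d] for w, i in zip(weights, top))
        for d in range(len(query))
    ]
    return top, weights, retrieved


# Toy bank: 10 slots of width 4 with synthetic contents.
memory_bank = [[float((i + j) % 3) for j in range(4)] for i in range(10)]
query = [1.0, 0.0, 0.0, 1.0]
top, weights, retrieved = retrieve(query, memory_bank)
print(top, weights, retrieved)
```

The returned vector is what a downstream "Fusion" step would combine with the block's own representation.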

The "Outputs" are associated with numerical values and text labels. The values range from 9 to 91. The text labels are fragmented: "presi", "dent", "-", "tr", and "ump".

### Key Observations

* The architecture emphasizes the integration of "Explicit Knowledge" and "Implicit Knowledge".

* The repeated processing blocks suggest a layered or iterative approach to information processing.

* The Memory Bank serves as a central repository of knowledge that is accessed and utilized during processing.

* The numerical values associated with the outputs are small integers (9 to 91), and the text labels are word fragments rather than complete words; read in order, they spell "president-trump".

* The diagram does not provide any quantitative information about the size or capacity of the Memory Bank.

### Interpretation

This diagram illustrates a knowledge-augmented neural network architecture designed to leverage both explicit and implicit knowledge. The Memory Bank likely stores pre-trained knowledge or contextual information that can be retrieved and integrated into the processing pipeline. The repeated processing blocks, combined with the Memory Retrieval Mechanism, suggest that the knowledge representation is refined and updated as it passes through successive blocks.

The fragmented text labels associated with the outputs ("presi", "dent", "-", "tr", "ump") read in order as "president-trump", which suggests they are subword pieces of a longer string related to the task the network performs. The hyphen ("-") may be a delimiter between the two words, or it may stand in for missing information. The numerical values attached to these labels might be token indices, confidence scores, probabilities, or other metrics related to the network's predictions; the diagram does not say which.
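As a small illustration of one possible reading, the sketch below treats each number as a vocabulary index and each label as the subword piece stored at that index, then joins the pieces in the order they appear in the figure. Treating the numbers as indices is an assumption; the diagram does not state what they represent. The `vocab` mapping and the `decode` helper are hypothetical names.

```python
# (number, label) pairs copied from the right side of the diagram.
output_tokens = [(13, "presi"), (14, "dent"), (27, "-"), (9, "tr"), (91, "ump")]

# Hypothetical vocabulary: number -> subword piece.
vocab = dict(output_tokens)


def decode(ids, vocab):
    # Concatenate the subword pieces in order, with no separator.
    return "".join(vocab[i] for i in ids)


decoded = decode([13, 14, 27, 9, 91], vocab)
print(decoded)  # president-trump
```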

The architecture appears to be a conceptual design rather than a fully specified implementation. The diagram focuses on the overall flow of information and the key components involved, without providing detailed information about the specific algorithms or parameters used. The diagram suggests a system capable of learning and reasoning by combining learned representations with stored knowledge.