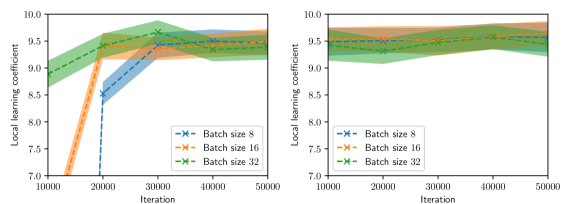

## Line Charts: Local Learning Coefficient vs. Iteration for Different Batch Sizes

### Overview

The image shows two side-by-side line charts of the "Local learning coefficient" against training "Iteration" for three batch sizes (8, 16, and 32). Each chart plots a mean value (solid line) with a shaded band showing the spread around it; the image does not state whether the band is a confidence interval, a standard deviation, or another measure. Both charts cover the same iteration range (10000 to 50000). The left chart shows lower starting values and wider bands, while the right chart shows higher, more stable values.

### Components/Axes

* **Chart Type:** Two line charts with shaded uncertainty bands.

* **Y-Axis (Both Charts):** Label: "Local learning coefficient". Scale: 7.0 to 10.0, with major ticks at 0.5 intervals (7.0, 7.5, 8.0, 8.5, 9.0, 9.5, 10.0).

* **X-Axis (Both Charts):** Label: "Iteration". Scale: 10000 to 50000, with major ticks at 10000, 20000, 30000, 40000, and 50000.

* **Legend (Bottom-Right of each chart):**

    * Blue line with 'x' markers: "Batch size 8"

    * Orange line with 'x' markers: "Batch size 16"

    * Green line with 'x' markers: "Batch size 32"

* **Shaded Regions:** Each line has a corresponding semi-transparent shaded area of the same color, indicating the range of uncertainty or variance around the mean value.
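
A band of this kind is commonly built by aggregating several runs at each iteration. The sketch below assumes the band is the mean plus or minus one sample standard deviation; the image does not confirm this, and the function name `mean_and_band` and the input values are hypothetical, chosen only to show the computation.

```python
from statistics import mean, stdev


def mean_and_band(runs, k=1.0):
    """Return (means, lower, upper) per checkpoint for equal-length runs.

    Assumption: the band is mean +/- k sample standard deviations.
    The chart itself does not say how its shaded region was computed.
    """
    means, lower, upper = [], [], []
    # zip(*runs) groups the values of all runs at the same checkpoint.
    for values in zip(*runs):
        m = mean(values)
        s = stdev(values) if len(values) > 1 else 0.0
        means.append(m)
        lower.append(m - k * s)
        upper.append(m + k * s)
    return means, lower, upper


# Hypothetical values for three runs at two checkpoints (not chart data).
runs = [[7.0, 9.0], [8.0, 9.0], [9.0, 9.0]]
means, lower, upper = mean_and_band(runs)
print(means, lower, upper)
```

With these inputs the band is wide at the first checkpoint, where the runs disagree, and collapses to zero width at the second, where they agree. This is the same pattern of narrowing that the shaded regions show.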

### Detailed Analysis

**Left Chart Analysis:**

* **Batch size 8 (Blue):** Starts very low (~7.0 at 10k iterations), rises sharply to ~8.5 at 20k, then continues a steady increase to ~9.5 by 50k. The confidence interval is very wide at the start (spanning ~7.0 to ~8.0 at 10k) and narrows as iterations increase.

* **Batch size 16 (Orange):** Starts around ~7.5 at 10k, increases steadily to ~9.5 by 30k, and then plateaus near ~9.5 for the remainder. Its confidence interval is moderately wide initially and narrows significantly after 30k.

* **Batch size 32 (Green):** Starts the highest at ~9.0 at 10k, peaks at ~9.7 around 30k, then shows a slight decline to ~9.3 by 50k. Its confidence interval is relatively narrow throughout compared to the smaller batch sizes.

**Right Chart Analysis:**

* **Batch size 8 (Blue):** Starts much higher than in the left chart, at ~9.3 at 10k. It fluctuates slightly, dipping to ~9.2 at 20k, then gradually rises to ~9.5 by 50k. The confidence interval is consistently narrow.

* **Batch size 16 (Orange):** Starts at ~9.5 at 10k, shows a slight dip at 20k (~9.4), then increases to ~9.7 by 40k and holds near that level. Confidence interval is narrow.

* **Batch size 32 (Green):** Starts at ~9.4 at 10k, increases to ~9.6 by 30k, and remains stable around ~9.6 through 50k. Confidence interval is the narrowest among the three.
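
The readings above can be collected into a small table to compare how far each series moves between 10k and 50k iterations. This is a minimal sketch: the numbers are the approximate values quoted in this description, not the data behind the figure, and `READINGS`, `net_change`, and `summarize` are hypothetical names.

```python
# Approximate readings from the description, keyed by chart, batch size,
# and iteration. These are visual estimates, not the plotted data.
READINGS = {
    "left": {
        8: {10_000: 7.0, 20_000: 8.5, 50_000: 9.5},
        16: {10_000: 7.5, 30_000: 9.5, 50_000: 9.5},
        32: {10_000: 9.0, 30_000: 9.7, 50_000: 9.3},
    },
    "right": {
        8: {10_000: 9.3, 20_000: 9.2, 50_000: 9.5},
        16: {10_000: 9.5, 20_000: 9.4, 40_000: 9.7, 50_000: 9.7},
        32: {10_000: 9.4, 30_000: 9.6, 50_000: 9.6},
    },
}


def net_change(series):
    """Difference between the last and first reading of one series."""
    first, last = min(series), max(series)
    return round(series[last] - series[first], 2)


def summarize(readings):
    """Net change per chart and batch size."""
    return {
        chart: {bs: net_change(series) for bs, series in by_batch.items()}
        for chart, by_batch in readings.items()
    }


for chart, changes in summarize(READINGS).items():
    print(chart, changes)
```

On these estimates the left chart's net change shrinks as batch size grows (2.5, 2.0, 0.3), while all three series in the right chart move by about 0.2.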

### Key Observations

1. **Phase Difference:** The left chart has lower starting values and wider bands, especially for the smaller batch sizes, while the right chart has higher, more stable coefficients. Because both charts span the same iteration range (10000 to 50000), the image alone does not establish that they are successive training phases; they may instead be two different runs or configurations.

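2. **Batch Size Impact:** In the left chart, the largest batch size (32) starts highest (~9.0, versus ~7.5 for batch size 16 and ~7.0 for batch size 8). In the right chart the starting values are close together and batch size 16 starts slightly highest (~9.5, versus ~9.4 for 32 and ~9.3 for 8). In both charts batch size 32 has the narrowest shaded band, and in the left chart batch size 8 starts lowest with the widest band.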

3. **Convergence Trend:** By 50k iterations the three batch sizes in the right chart sit within a narrow range, ~9.5 to ~9.7, which suggests the coefficient ends at a similar value regardless of batch size even though the paths differ. The left chart ends in a comparably narrow range (~9.3 to ~9.5).

4. **Anomaly:** In the left chart, the Batch size 32 line shows a slight downward trend after 30k iterations, while the others are still rising or plateauing. This could indicate a minor instability or a different optimization dynamic for the largest batch size during that phase.
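
The observations about starting order and end-of-run agreement can be cross-checked against the start and end readings from the Detailed Analysis. The sketch below uses only those approximate values; `ENDPOINTS`, `highest_start`, and `final_spread` are hypothetical names introduced for illustration.

```python
# (value at 10k, value at 50k) per batch size, as estimated in the
# Detailed Analysis. Visual estimates only, not the plotted data.
ENDPOINTS = {
    "left": {8: (7.0, 9.5), 16: (7.5, 9.5), 32: (9.0, 9.3)},
    "right": {8: (9.3, 9.5), 16: (9.5, 9.7), 32: (9.4, 9.6)},
}


def highest_start(chart):
    """Batch size with the largest coefficient at 10k iterations."""
    return max(chart, key=lambda bs: chart[bs][0])


def final_spread(chart):
    """Gap between the largest and smallest coefficient at 50k."""
    ends = [end for _, end in chart.values()]
    return round(max(ends) - min(ends), 2)


for name, chart in ENDPOINTS.items():
    print(name, "highest start:", highest_start(chart),
          "final spread:", final_spread(chart))
```

On these estimates batch size 32 starts highest only in the left chart (batch size 16 does in the right chart), and the spread at 50k is about 0.2 in both charts.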

### Interpretation

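The charts suggest that batch size affects the dynamics of the "local learning coefficient". The image does not define this metric; if it is the quantity of that name from singular learning theory, it measures the effective complexity (degeneracy) of the loss landscape around the current parameters rather than a learning rate.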

* **Smaller batches (8, 16)** induce a more volatile, exploratory learning process early on (left chart), starting from a lower coefficient but eventually catching up. This aligns with the known property of smaller batches providing noisier gradient estimates, which can help escape local minima but slow initial convergence.

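* **Larger batches (32)** provide a more stable, higher-fidelity gradient signal from the start, which is consistent with the higher early coefficient and narrower band in the left chart. In the right chart batch size 32 is no longer the highest series, so this early advantage is specific to the left chart. The slight dip after 30k in the left chart may indicate a different optimization dynamic at that stage, but the charts alone cannot confirm a cause such as premature convergence or reduced exploration.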

* The **right chart** shows all batch sizes reaching a similarly high coefficient (~9.5 to ~9.7) by 50k iterations, which suggests that the final value of this metric is fairly insensitive to batch size. The main difference is the *path*: in the left chart, smaller batches follow a steeper, higher-variance route, while the largest batch stays within a narrower range throughout.

**In summary:** Batch size appears to trade off exploration (small batches, high variance) against stability (large batches, low variance) during training, without necessarily changing the value this coefficient reaches by the end of the plotted range.