
## Diagram: Adversarial Attack Process

### Overview

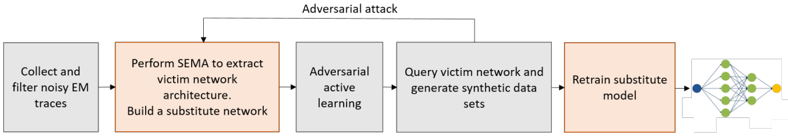

The image is a sequential diagram of the steps in an adversarial attack on a machine learning model. The process begins with the collection of electromagnetic (EM) traces and ends with the retraining of a substitute model. Arrows indicate the flow of the attack.

### Components/Axes

The diagram consists of five main blocks, arranged horizontally from left to right. Above the blocks is a label "Adversarial attack". Each block represents a stage in the attack process. The final block has a visual representation of a neural network.

### Detailed Analysis or Content Details

1. **Block 1: Collect and filter noisy EM traces** - This initial stage involves gathering and cleaning electromagnetic (EM) traces.

2. **Block 2: Perform SEMA to extract victim network architecture. Build a substitute network** - This stage uses SEMA (most likely Simple Electromagnetic Analysis, a side-channel technique that reads a device's operations from individual EM traces) to determine the structure of the target ("victim") network. A substitute network with that structure is then constructed.

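3. **Block 3: Adversarial active learning** - The substitute network is refined through adversarial active learning. The diagram gives no detail on the method; the term usually refers to choosing or crafting inputs near the model's decision boundary so that each query to the victim is as informative as possible.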

4. **Block 4: Query victim network and generate synthetic data sets** - The victim network is queried to create synthetic datasets.

5. **Block 5: Retrain substitute model** - The substitute model is retrained using the generated synthetic data. This block also contains a drawing of a neural network with:

   - Input layer: 3 nodes (blue)

   - Hidden layer: 4 nodes (green)

   - Output layer: 2 nodes (yellow)

   - Connections between layers drawn as lines

The arrows connecting the blocks indicate the sequential flow of the attack. An arrow also points from the "Adversarial attack" label to blocks 2 and 3.
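
The diagram does not say how the EM traces in Block 1 are filtered. As a minimal sketch only, the following assumes that several aligned traces of the same operation are available, averages them, and then smooths the result with a moving average. The synthetic sine-wave "trace", the noise level, and the names `moving_average` and `filter_traces` are illustrative assumptions, not part of the depicted attack.

```python
import numpy as np

def moving_average(trace, window=9):
    """Smooth a 1-D trace with a simple box filter (assumed filter choice)."""
    kernel = np.ones(window) / window
    return np.convolve(trace, kernel, mode="same")

def filter_traces(traces, window=9):
    """Average aligned traces sample by sample, then smooth the mean trace."""
    return moving_average(traces.mean(axis=0), window)

# Synthetic stand-in for EM measurements: a clean signal plus Gaussian noise.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 1000)
clean = np.sin(2 * np.pi * 5 * t)
traces = clean + rng.normal(0.0, 1.0, size=(50, t.size))

filtered = filter_traces(traces)

# Compare the error of one raw trace with the error of the filtered trace.
raw_rmse = np.sqrt(np.mean((traces[0] - clean) ** 2))
filtered_rmse = np.sqrt(np.mean((filtered - clean) ** 2))
print(f"raw RMSE: {raw_rmse:.3f}, filtered RMSE: {filtered_rmse:.3f}")
```

Averaging only helps when the traces are aligned in time, so a real capture would probably need a synchronization or trigger step first, which this sketch leaves out.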

### Key Observations

The diagram shows an attack that uses side-channel information (EM traces) to recover a model's architecture and then replicate its behavior. The substitute model is the key element of this strategy. The neural network drawn in the final block sits in the "Retrain substitute model" stage, so it most plausibly depicts the substitute rather than the victim.

### Interpretation

This diagram illustrates a multi-stage attack on a machine learning model. The attacker has no direct access to the model's internals. Instead, the network architecture is inferred through side-channel analysis of EM traces, and the model's behavior is then approximated by querying the victim and training a substitute on its responses. By refining the substitute through adversarial active learning and synthetic data generation, the attacker aims to build a model that mimics the victim network. Such a copy could potentially be used to bypass security measures or extract sensitive information. The diagram suggests a focus on model extraction and replication rather than direct manipulation of the victim model's functionality. The use of SEMA points to an attack on hardware implementations of machine learning algorithms, since EM traces must be measured from a physical device.
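
The query-and-retrain loop of Blocks 4 and 5 can be illustrated with a toy example. This is a sketch under stated assumptions, not the method behind the diagram: the victim is a randomly initialized 3-4-2 network (the layer sizes drawn in Block 5), the attacker already knows that architecture, and plain random Gaussian inputs stand in for adversarial active learning. All function names are hypothetical.

```python
import numpy as np

def init_mlp(seed, sizes=(3, 4, 2)):
    """Random 3-4-2 network; layer sizes follow the drawing in Block 5."""
    rng = np.random.default_rng(seed)
    return [(rng.normal(0.0, 0.5, (m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def forward(params, x):
    """Return hidden activations and output logits."""
    (w1, b1), (w2, b2) = params
    h = np.tanh(x @ w1 + b1)
    return h, h @ w2 + b2

def query_victim(victim, x):
    """Black-box oracle: the attacker sees only the predicted labels."""
    return forward(victim, x)[1].argmax(axis=1)

def retrain(params, x, y, lr=0.5, epochs=2000):
    """Full-batch gradient descent on cross-entropy against victim labels."""
    (w1, b1), (w2, b2) = [(w.copy(), b.copy()) for w, b in params]
    onehot = np.eye(2)[y]
    for _ in range(epochs):
        h, logits = forward([(w1, b1), (w2, b2)], x)
        z = logits - logits.max(axis=1, keepdims=True)   # stable softmax
        p = np.exp(z) / np.exp(z).sum(axis=1, keepdims=True)
        d_logits = (p - onehot) / len(x)
        d_h = (d_logits @ w2.T) * (1.0 - h ** 2)         # tanh derivative
        w2 -= lr * (h.T @ d_logits)
        b2 -= lr * d_logits.sum(axis=0)
        w1 -= lr * (x.T @ d_h)
        b1 -= lr * d_h.sum(axis=0)
    return [(w1, b1), (w2, b2)]

def agreement(a, b, x):
    """Fraction of inputs on which two networks predict the same label."""
    return float(np.mean(query_victim(a, x) == query_victim(b, x)))

rng = np.random.default_rng(0)
victim = init_mlp(seed=1)        # unknown weights from the attacker's view
substitute = init_mlp(seed=2)    # same architecture, different weights

# Block 4: query the victim on synthetic inputs to build a labeled data set.
x_syn = rng.normal(size=(512, 3))
y_syn = query_victim(victim, x_syn)

# Block 5: retrain the substitute on the victim's answers.
substitute = retrain(substitute, x_syn, y_syn)

# Fidelity: label agreement on fresh inputs the substitute never saw.
x_test = rng.normal(size=(512, 3))
fidelity = agreement(substitute, victim, x_test)
print(f"label agreement on held-out inputs: {fidelity:.2f}")
```

Agreement on held-out inputs is used here as the success measure because the goal is for the substitute to mimic the victim's behavior. An active-learning variant would replace the random `x_syn` with inputs chosen near the substitute's current decision boundary.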