## Diagram: Adversarial Attack Process Flow

### Overview

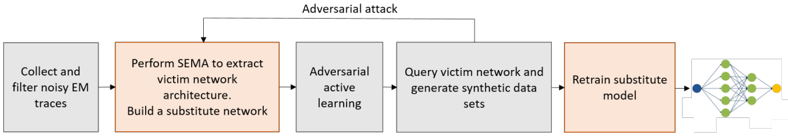

The image displays a linear, five-step process flow diagram titled "Adversarial attack." It illustrates a methodology for creating a substitute model to perform an adversarial attack against a target ("victim") neural network. The process involves data collection, architecture extraction, active learning, synthetic data generation, and model retraining.

### Components/Flow

The diagram consists of five rectangular process boxes connected sequentially by right-pointing arrows, indicating a left-to-right workflow. A final arrow points from the last box to a generic icon of a neural network.

**1. Title:**

* **Text:** "Adversarial attack"

* **Position:** Centered at the top of the diagram.

**2. Process Boxes (in order from left to right):**

* **Box 1 (Gray):**

  * **Text:** "Collect and filter\nnoisy EM traces"

  * **Position:** Far left. This is the initial input stage.

* **Box 2 (Orange/Peach):**

  * **Text:** "Perform SEMA to extract\nvictim network\narchitecture.\nBuild a substitute\nnetwork"

  * **Position:** Second from left. This box is highlighted with a distinct color, suggesting a core or critical phase. It contains two distinct but related actions.

* **Box 3 (Gray):**

  * **Text:** "Adversarial\nactive learning"

  * **Position:** Center.

* **Box 4 (Gray):**

  * **Text:** "Query victim network and\ngenerate synthetic data\nsets"

  * **Position:** Fourth from left.

* **Box 5 (Orange/Peach):**

  * **Text:** "Retrain substitute\nmodel"

  * **Position:** Far right, before the final icon. This box shares the same highlight color as Box 2, indicating another key phase.

**3. Final Icon:**

* **Description:** A simplified, generic icon of a feedforward neural network with an input layer (blue node), two hidden layers (green nodes), and an output layer (yellow node).

* **Position:** To the right of Box 5, connected by an arrow. It represents the final product of the process: the trained substitute model.

**4. Flow Arrows:**

* Simple, black, right-pointing arrows connect each box to the next, defining the sequence: Box 1 → Box 2 → Box 3 → Box 4 → Box 5 → Neural Network Icon.

### Detailed Analysis

The process is a structured pipeline for a model extraction or cloning attack, likely using side-channel information.

* **Step 1 - Data Acquisition:** The attack begins by gathering "noisy EM (Electromagnetic) traces." This implies the attacker is using physical side-channel emanations from the hardware running the victim model as the primary data source.

* **Step 2 - Architecture Extraction & Initialization:** The acronym "SEMA" is not defined in the image. In the side-channel literature it usually stands for Simple Electromagnetic Analysis, in which an attacker inspects one or a few EM traces directly to recover structural information. This step has two goals: reverse-engineer the victim network's architecture and use that information to construct an initial "substitute network."

* **Step 3 - Active Learning Loop:** The core iterative phase begins. "Adversarial active learning" suggests the attacker intelligently selects which queries to make to the victim model to maximize the information gained for training the substitute.

* **Step 4 - Querying & Data Generation:** The attacker sends queries to the (black-box) victim network and uses the responses to "generate synthetic data sets." The diagram does not say how these data sets are built; a plausible reading is that the victim's outputs serve as labels for attacker-chosen inputs. This synthetic data is used to train the substitute.

* **Step 5 - Model Refinement:** The substitute model is retrained on the newly generated synthetic data. The diagram draws no feedback arrow, but the "active learning" label suggests that Steps 3 to 5 repeat as a cycle: select queries, query the victim and generate data, retrain, repeat.

* **Outcome:** The final output is a trained substitute neural network (represented by the icon) that presumably mimics the functionality of the victim network and can be used to generate adversarial examples.
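The query, generate, retrain cycle in Steps 3 to 5 can be sketched as follows. This is a minimal sketch under stated assumptions, not the method behind the diagram: uncertainty sampling stands in for "adversarial active learning," a logistic-regression classifier stands in for the substitute network, and `victim_predict` is a hypothetical black-box oracle with a made-up decision rule.

```python
import numpy as np

def victim_predict(x):
    """Hypothetical black-box victim: the attacker sees only hard labels."""
    w_secret = np.array([2.0, -1.0])  # assumed secret rule, unknown to the attacker
    return (x @ w_secret + 0.5 > 0).astype(float)

def substitute_proba(x, w, b):
    """Substitute model's predicted probability of class 1."""
    return 1.0 / (1.0 + np.exp(-(x @ w + b)))

def train_substitute(x, y, epochs=500, lr=0.5):
    """Fit the logistic-regression substitute from scratch by gradient descent."""
    w, b = np.zeros(x.shape[1]), 0.0
    for _ in range(epochs):
        err = substitute_proba(x, w, b) - y  # gradient of cross-entropy w.r.t. the logit
        w -= lr * (x.T @ err) / len(y)
        b -= lr * err.mean()
    return w, b

def extract(rounds=5, seed_size=20, batch=20, rng_seed=0):
    """Query, label, retrain loop; returns the substitute and the query count."""
    rng = np.random.default_rng(rng_seed)
    x = rng.uniform(-1, 1, size=(seed_size, 2))  # initial attacker-chosen inputs
    y = victim_predict(x)                        # labels obtained by querying the victim
    w, b = train_substitute(x, y)
    for _ in range(rounds):
        pool = rng.uniform(-1, 1, size=(500, 2))            # candidate queries
        margin = np.abs(substitute_proba(pool, w, b) - 0.5)
        picked = pool[np.argsort(margin)[:batch]]           # least confident candidates
        x = np.vstack([x, picked])
        y = np.concatenate([y, victim_predict(picked)])     # synthetic labeled data
        w, b = train_substitute(x, y)                       # retrain the substitute
    return w, b, len(y)

w, b, n_queries = extract()
test_x = np.random.default_rng(1).uniform(-1, 1, size=(2000, 2))
agreement = np.mean((substitute_proba(test_x, w, b) > 0.5) == (victim_predict(test_x) > 0.5))
print(f"queries used: {n_queries}, agreement with victim: {agreement:.3f}")
```

Selecting the candidates the substitute is least sure about concentrates the query budget near its decision boundary, which is where a label from the victim is most informative.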

### Key Observations

1. **Color Coding:** Box 2 ("Perform SEMA...") and Box 5 ("Retrain substitute model") share an orange/peach fill. The diagram has no legend, but the shared color visually groups the initial architecture theft and the final model refinement, most likely to mark them as the two most significant phases.

2. **Input Specificity:** The attack is specifically based on "EM traces," not just query access. This indicates a more sophisticated, potentially physical, side-channel attack vector.

3. **Process Linearity with Implied Iteration:** While drawn as a straight line, the terms "active learning" and the nature of retraining imply a cyclical process between steps 3, 4, and 5.

4. **Generic Representation:** The final neural network icon is abstract and shows no specific architecture (e.g., CNN, RNN). This may mean the process is intended to be model-agnostic, although the icon could equally be decorative.
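Observation 2 depends on the quality of the EM traces. As a rough illustration of what "collect and filter" could involve, the sketch below averages repeated, aligned traces and then applies a moving-average filter. The diagram does not specify a filtering method, so the synthetic sine-wave signal, the noise level, and the function names are all assumptions made for illustration.

```python
import numpy as np

def average_traces(traces):
    """Average aligned traces sample by sample to suppress uncorrelated noise."""
    return traces.mean(axis=0)

def moving_average(trace, window=5):
    """Simple low-pass filter: sliding mean over `window` samples."""
    kernel = np.ones(window) / window
    return np.convolve(trace, kernel, mode="same")

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 1000)
clean = np.sin(2 * np.pi * 5 * t)  # synthetic stand-in for the true EM signal
traces = clean + rng.normal(0.0, 1.0, size=(100, t.size))  # 100 noisy captures

denoised = moving_average(average_traces(traces))

# Root-mean-square error against the known clean signal
err_single = np.sqrt(np.mean((traces[0] - clean) ** 2))
err_denoised = np.sqrt(np.mean((denoised - clean) ** 2))
print(f"single trace RMSE: {err_single:.3f}, denoised RMSE: {err_denoised:.3f}")
```

Averaging only helps when the captures are aligned in time; real traces usually need a synchronization step first, which this sketch omits.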

### Interpretation

This diagram outlines a sophisticated **model extraction attack** that combines **physical side-channel analysis** with **machine learning techniques**. The attacker's goal is not just to steal data but to clone the victim model's functionality.

* **The "Why":** By building a substitute model, an adversary can study it offline to craft adversarial examples (inputs designed to fool the original victim network) without making suspicious, repeated queries to the live system. The use of EM traces suggests a scenario in which the victim model runs on embedded or edge hardware that the attacker can physically approach.

* **Relationships:** The process shows a clear dependency chain. The quality of the EM traces (Step 1) affects the accuracy of the extracted architecture (Step 2). The effectiveness of the active learning strategy (Step 3) determines how efficiently the synthetic data (Step 4) improves the substitute model (Step 5). The pipeline as a whole appears geared toward minimizing the number of queries sent to the victim, which is a key constraint in black-box attacks.

* **Notable Implication:** The highlighted steps (2 and 5) emphasize that the attack's success hinges on two things: accurately stealing the blueprint (architecture) and then meticulously refining the clone through iterative training. This is a methodical, resource-intensive attack, not a simple query-based theft.
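To make the offline crafting step concrete, the sketch below applies one step of the fast gradient sign method (FGSM) to a substitute model. FGSM is our choice of example; the diagram does not name a method for generating adversarial examples. The substitute here is a logistic-regression classifier with hypothetical weights `w_sub` and `b_sub`, and the input point and `eps` are likewise illustrative.

```python
import numpy as np

def fgsm(x, y, w, b, eps):
    """One FGSM step against a logistic-regression substitute (w, b).

    x is the input, y its true label (0.0 or 1.0), eps the per-feature budget.
    """
    p = 1.0 / (1.0 + np.exp(-(x @ w + b)))  # substitute's probability of class 1
    grad_x = (p - y) * w                    # gradient of cross-entropy loss w.r.t. x
    return x + eps * np.sign(grad_x)        # move each feature in the loss-increasing direction

# Hypothetical substitute parameters, e.g. the result of an extraction run
w_sub, b_sub = np.array([2.0, -1.0]), 0.5

x = np.array([0.1, 0.2])  # substitute logit is 0.5, so it predicts class 1
y = 1.0
x_adv = fgsm(x, y, w_sub, b_sub, eps=0.3)

logit_before = x @ w_sub + b_sub
logit_after = x_adv @ w_sub + b_sub
print(f"logit before: {logit_before:.2f}, after: {logit_after:.2f}")
```

Whether such an example also fools the victim depends on how closely the substitute matches it, which is why the extraction and retraining stages matter to the attacker.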