## Neural Network Architecture Diagram: Entity-Relation Association Model

### Overview

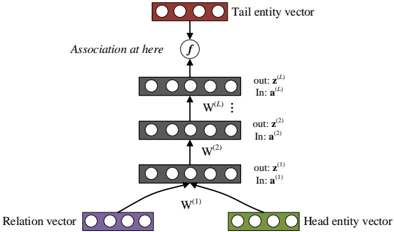

The diagram illustrates a multi-layer neural network architecture for modeling associations between entities and relations. It shows the flow of information from input vectors (head entity, tail entity, and relation) through multiple transformation layers to output association vectors. The architecture includes explicit weight matrices and a final association function.

### Components

1. **Input Vectors**:

- **Head entity vector** (green block): Positioned at the bottom-right

- **Tail entity vector** (red block): Positioned at the top

- **Relation vector** (purple block): Positioned at the bottom-left

2. **Transformation Layers**:

- A stack of layers labeled W^(1), W^(2), ..., W^(L); three are drawn, with the ellipsis standing for an arbitrary depth L

- Each layer has:

  - Input vector (z^(i))

  - Output vector (a^(i))

  - Weight matrix (W^(i)) connecting input to output

3. **Association Function**:

- Labeled "f" at the top of the architecture

- Connects final output vector (a^(L)) to the tail entity vector

4. **Per-Layer Vectors**:

- Sequence of z^(1) to z^(L) vectors

- Sequence of a^(1) to a^(L) vectors

### Detailed Analysis

- **Input Flow**:

- The head entity vector and the relation vector combine at the first layer, where both feed into W^(1)

- Tail entity vector connects directly to the top of the architecture

- **Layer Progression**:

- Each layer transforms input vectors through weight matrices

- Output of layer i becomes input for layer i+1

- Final layer output (a^(L)) connects to the tail entity vector via function f

- **Vector Relationships**:

- z^(i) represents intermediate feature representations

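- a^(i) is the output vector of layer i, which can be read as a progressively refined association representation

- The final a^(L), combined with the tail entity vector by f, determines the association strength between head and tail entities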
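The flow described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the implementation behind the figure: the concatenation of the head and relation vectors, the ReLU between layers, the sigmoid-of-dot-product form of `f`, and every name used here (`forward`, `matvec`, `rand_matrix`, and so on) are hypothetical, since the diagram shows none of these details. Following the description, z^(i) is treated as the input to layer i and a^(i) as its output.

```python
import math
import random


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def relu(v):
    """Element-wise ReLU (assumed; the diagram shows no activation)."""
    return [max(0.0, x) for x in v]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forward(head, relation, tail, weights, activation=relu):
    """Score one (head, relation, tail) triple.

    Returns the score plus the per-layer inputs z^(i) and outputs a^(i).
    """
    z = head + relation                 # z^(1): assumed concatenation
    inputs, outputs = [], []
    for i, W in enumerate(weights):
        a = matvec(W, z)                # a^(i) = W^(i) z^(i)
        inputs.append(z)
        outputs.append(a)
        if i < len(weights) - 1:
            z = activation(a)           # z^(i+1): output of layer i feeds layer i+1
    # f: assumed to be a sigmoid of the dot product of a^(L) and the tail vector
    score = sigmoid(sum(x * t for x, t in zip(outputs[-1], tail)))
    return score, inputs, outputs


def rand_vector(n):
    return [random.uniform(-1.0, 1.0) for _ in range(n)]


def rand_matrix(rows, cols):
    return [rand_vector(cols) for _ in range(rows)]


random.seed(0)
d, L = 4, 3                             # hypothetical embedding size and depth
head, relation, tail = rand_vector(d), rand_vector(d), rand_vector(d)
# W^(1) maps the 2d-dimensional concatenation to d; later layers map d to d.
weights = [rand_matrix(d, 2 * d)] + [rand_matrix(d, d) for _ in range(L - 1)]

score, inputs, outputs = forward(head, relation, tail, weights)
print(f"association score: {score:.4f}")
```

Returning the per-layer lists alongside the score makes it possible to inspect each z^(i) and a^(i), which mirrors how the diagram exposes both sequences.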

### Key Observations

1. The architecture uses a bottom-up approach, starting with raw entity/relation vectors

2. Multiple transformation layers suggest hierarchical feature learning

3. The association function f appears to be a non-linear combination of the final layer output and the tail entity vector, though the diagram does not specify its form

4. No explicit activation functions are shown between layers

5. The vectors appear to keep a consistent dimensionality through the network
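Observation 4 matters for how the diagram should be read. If the layers applied only their weight matrices, with no non-linearity in between, the whole stack would be equivalent to a single matrix and the extra depth would add no expressive power. The sketch below checks this numerically; the matrices and input are small hypothetical values chosen for illustration, not taken from the figure, and the ReLU is an assumed example of a non-linearity.

```python
def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matmul(A, B):
    """Multiply two matrices given as lists of rows."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def relu(v):
    return [max(0.0, x) for x in v]


W1 = [[1.0, 2.0], [0.0, -1.0]]   # hypothetical W^(1)
W2 = [[0.5, 0.0], [1.0, 1.0]]    # hypothetical W^(2)
x = [3.0, -2.0]

# Two purely linear layers applied in sequence...
stacked = matvec(W2, matvec(W1, x))
# ...give the same result as the single matrix W^(2) W^(1).
collapsed = matvec(matmul(W2, W1), x)

# With a non-linearity between the layers the result differs.
with_relu = matvec(W2, relu(matvec(W1, x)))

print(stacked, collapsed, with_relu)
```

So if the network is meant to learn hierarchical features, an activation is presumably applied between layers even though the figure does not draw one.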

### Interpretation

This architecture demonstrates a compositional model for entity-relation association:

1. **Feature Composition**: The relation vector combines with head entity features in the first layer

2. **Progressive Refinement**: Each subsequent layer refines the association representation

3. **Tail Entity Integration**: The tail entity vector enters only at the top, where f combines it with the final layer output to produce the association strength

4. **Interpretability**: The layered structure allows tracing association strength through intermediate representations

The model likely implements a form of neural tensor network or relation network, where:

- W^(1) learns initial relation-aware features

- Deeper layers (W^(2)...W^(L)) capture complex interaction patterns

- The final association function f could represent a sigmoid or softmax for probability prediction
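The two candidate forms of f mentioned above differ in what they normalize over. As a hedged sketch (the figure does not specify f, and the dot-product scoring as well as the names `sigmoid_score` and `softmax_over_tails` are assumptions made for illustration): a sigmoid scores each triple independently, while a softmax distributes probability across a set of candidate tail entities.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def sigmoid_score(a_L, tail):
    """Independent probability that one tail is associated with a^(L)."""
    return 1.0 / (1.0 + math.exp(-dot(a_L, tail)))


def softmax_over_tails(a_L, tails):
    """Probability distribution over candidate tails for a fixed a^(L)."""
    logits = [dot(a_L, t) for t in tails]
    m = max(logits)                       # subtract the max for numerical stability
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical final-layer output and candidate tail vectors.
a_L = [0.2, -0.1, 0.4]
candidate_tails = [
    [1.0, 0.0, 1.0],
    [0.0, 1.0, 0.0],
    [-1.0, 0.0, -1.0],
]

independent = [sigmoid_score(a_L, t) for t in candidate_tails]
ranked = softmax_over_tails(a_L, candidate_tails)

print("sigmoid:", [round(p, 3) for p in independent])
print("softmax:", [round(p, 3) for p in ranked])
```

The sigmoid form suits yes/no triple classification, whereas the softmax form suits ranking candidate tails for a given head and relation; which one the diagram intends cannot be determined from the figure alone.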

This structure enables the network to model complex semantic relationships between entities while maintaining interpretability through its explicit weight matrices and association function.