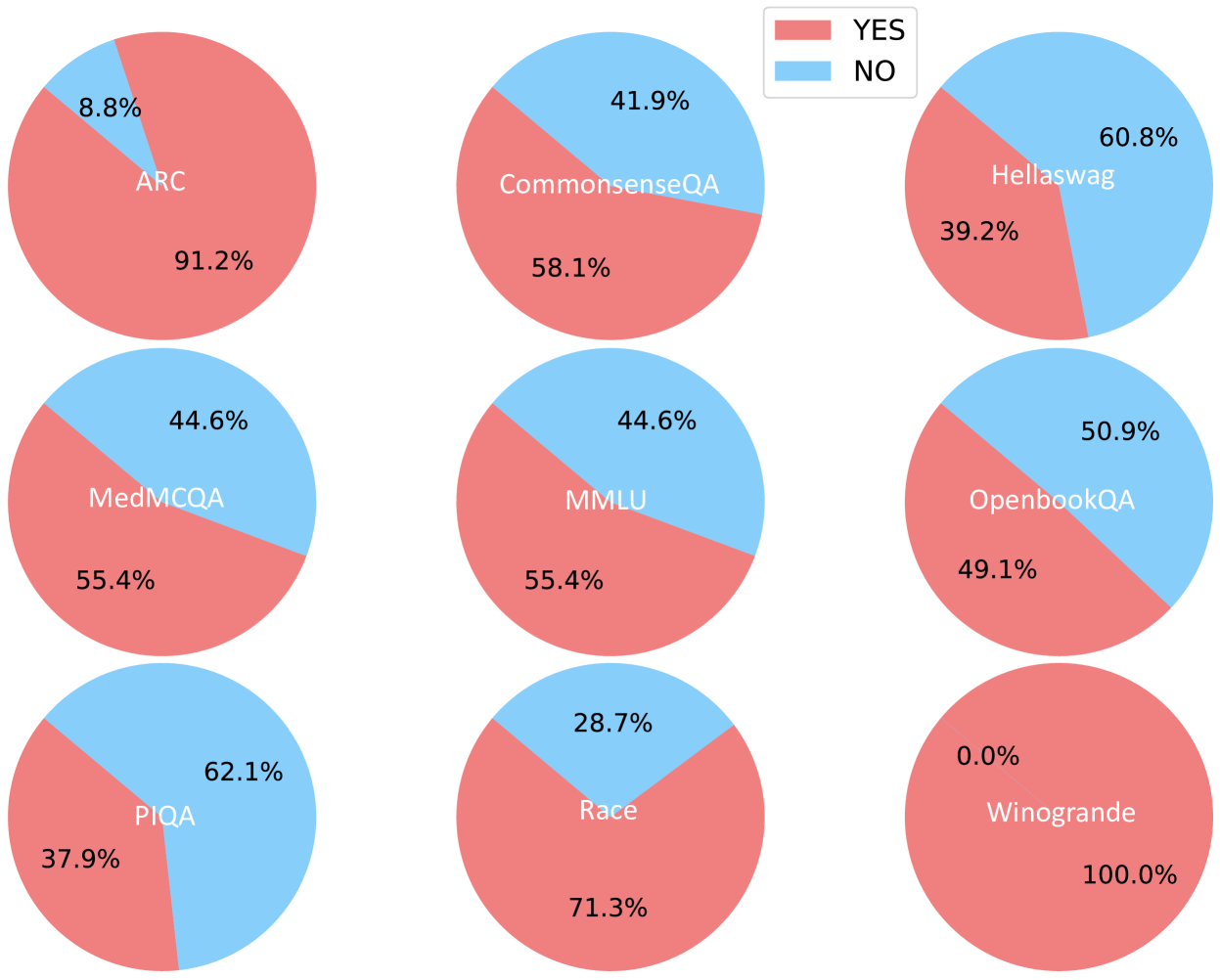

## Pie Charts: Performance Evaluation on Various Question Answering Datasets

### Overview

The image shows a 3x3 grid of pie charts, one per question answering dataset. Each pie splits a model's results on that dataset into "YES" and "NO" shares, drawn in reddish-brown and light blue respectively. The shares likely reflect accuracy, with "YES" marking correct answers, though this is an inference from the labels. A legend in the top-right corner defines the color scheme.

### Components/Axes

* **Pie Charts:** Each chart represents a dataset.

* **Labels:** Each pie chart is labeled with the dataset name: ARC, CommonsenseQA, Hellaswag, MedMCQA, MMLU, OpenbookQA, PIQA, Race, Winogrande.

* **Legend:** Located in the top-right corner, the legend indicates:

  * "YES": a reddish-brown color (approximately RGB 204, 51, 51).

  * "NO": a light blue color (approximately RGB 173, 216, 230).

* **Values:** Each segment of the pie chart is labeled with a percentage value.

### Detailed Analysis

Here's a breakdown of the data for each dataset:

1. **ARC:** 8.8% "YES", 91.2% "NO"

2. **CommonsenseQA:** 41.9% "YES", 58.1% "NO"

3. **Hellaswag:** 60.8% "YES", 39.2% "NO"

4. **MedMCQA:** 44.6% "YES", 55.4% "NO"

5. **MMLU:** 44.6% "YES", 55.4% "NO"

6. **OpenbookQA:** 50.9% "YES", 49.1% "NO"

7. **PIQA:** 62.1% "YES", 37.9% "NO"

8. **Race:** 28.7% "YES", 71.3% "NO"

9. **Winogrande:** 0.0% "YES", 100.0% "NO"

### Key Observations

* **Winogrande** has no "YES" share at all (0.0% "YES", 100.0% "NO").

* **ARC** (8.8%) and **Race** (28.7%) have the next-lowest "YES" percentages, indicating poor performance if "YES" denotes a correct answer.

* **PIQA** has the highest "YES" percentage (62.1%), suggesting the best performance among these datasets.

* **OpenbookQA** is nearly balanced between "YES" and "NO" responses.

* **MedMCQA** and **MMLU** have identical performance metrics.
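The observations above can be checked directly from the listed percentages. The following is a minimal sketch that transcribes the "YES" values from the breakdown and derives the ranking, the tied pair, and the most balanced dataset. The variable names are illustrative and not part of the figure, and the "NO" values are assumed to be the complement of "YES" because each pie has only two segments.

```python
# "YES" percentages transcribed from the per-dataset breakdown.
yes_pct = {
    "ARC": 8.8,
    "CommonsenseQA": 41.9,
    "Hellaswag": 60.8,
    "MedMCQA": 44.6,
    "MMLU": 44.6,
    "OpenbookQA": 50.9,
    "PIQA": 62.1,
    "Race": 28.7,
    "Winogrande": 0.0,
}

# Assumption: each pie has exactly two segments, so "NO" = 100 - "YES".
no_pct = {name: round(100.0 - yes, 1) for name, yes in yes_pct.items()}

# Rank datasets from highest to lowest "YES" share.
ranked = sorted(yes_pct, key=yes_pct.get, reverse=True)
highest, lowest = ranked[0], ranked[-1]

# Pairs of datasets that report the same "YES" share.
names = list(yes_pct)
ties = [
    (a, b)
    for i, a in enumerate(names)
    for b in names[i + 1:]
    if yes_pct[a] == yes_pct[b]
]

# Dataset whose "YES" share is closest to an even 50/50 split.
most_balanced = min(yes_pct, key=lambda name: abs(yes_pct[name] - 50.0))

if __name__ == "__main__":
    for name in ranked:
        print(f"{name:<14} YES {yes_pct[name]:5.1f}%  NO {no_pct[name]:5.1f}%")
    print("highest:", highest, "| lowest:", lowest)
    print("ties:", ties, "| most balanced:", most_balanced)
```

Keeping only the "YES" values and deriving "NO" avoids transcribing each number twice, and a mismatch with the labeled "NO" values would flag a reading error in the chart.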

### Interpretation

The "YES" and "NO" labels likely represent correct and incorrect answers, respectively. Under that reading, the model struggles with several question answering tasks, most of all Winogrande, ARC, and Race. The wide spread in "YES" share, from 0.0% to 62.1%, indicates that the model's results depend heavily on the type of question or knowledge domain.

The 0.0% figure for Winogrande is a clear outlier and warrants further investigation. It could reflect a limitation in coreference resolution or commonsense reasoning, which Winogrande is designed to test. However, Winogrande items offer two candidate answers, so random guessing would land near 50%. An exact 0.0% may therefore point to an evaluation or answer-parsing problem, or to "YES" and "NO" meaning something other than correctness, rather than to a capability gap alone.

The relatively high "YES" share on PIQA (62.1%) suggests the model does better on physical interaction questions. The identical figures for MedMCQA and MMLU (44.6% "YES") could indicate similar strengths and weaknesses across medical and general knowledge domains, although an exact match to one decimal place may also be coincidental.