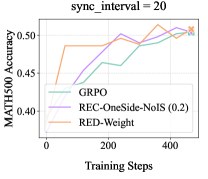

## Line Chart: Training Steps vs. MATH500 Accuracy (sync_interval = 20)

### Overview

The image is a line chart comparing three training methods by their accuracy on the MATH500 benchmark over the course of training. The chart title gives the experimental setting: `sync_interval = 20`.

### Components/Axes

* **Chart Title:** `sync_interval = 20` (centered at the top).

* **Y-Axis:**

    * **Label:** `MATH500 Accuracy` (vertical text on the left).

    * **Scale:** Linear, with major tick marks labeled at 0.40, 0.45, and 0.50.

* **X-Axis:**

    * **Label:** `Training Steps` (horizontal text at the bottom).

    * **Scale:** Linear, from 0 to approximately 450, with major tick marks labeled at 0, 200, and 400.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains three entries, each with a colored line sample and a text label:

    1. **Blue Line:** `GRPO`

    2. **Purple Line:** `REC-OneSide-NoIS (0.2)`

    3. **Orange Line:** `RED-Weight`

### Detailed Analysis

The chart plots three data series, each showing the trajectory of MATH500 accuracy as training progresses.

1. **GRPO (Blue Line):**

    * **Trend:** Starts at a moderate accuracy, dips slightly at first, then rises steadily for the rest of training.

    * **Approximate Data Points:**

        * Step 0: ~0.43

        * Step ~50: ~0.42 (slight dip)

        * Step ~150: ~0.44

        * Step ~250: ~0.46

        * Step ~350: ~0.48

        * Step ~450: ~0.50

2. **REC-OneSide-NoIS (0.2) (Purple Line):**

    * **Trend:** Begins at a higher accuracy than the other two methods. It rises strongly and relatively smoothly, holding the highest accuracy for most of training before GRPO closely matches it at the end.

    * **Approximate Data Points:**

        * Step 0: ~0.45

        * Step ~100: ~0.47

        * Step ~200: ~0.48

        * Step ~300: ~0.50

        * Step ~400: ~0.51 (peak)

        * Step ~450: ~0.50

3. **RED-Weight (Orange Line):**

    * **Trend:** Starts at the lowest accuracy but improves fastest at first, jumping sharply within the first ~50 steps. After this surge it plateaus and fluctuates within a narrow band (approximately 0.48 to 0.49), improving less afterwards than the other two methods.

    * **Approximate Data Points:**

        * Step 0: ~0.40

        * Step ~50: ~0.48 (sharp increase)

        * Step ~150: ~0.485

        * Step ~250: ~0.48

        * Step ~350: ~0.49

        * Step ~450: ~0.485
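
The approximate readings listed in this section can be collected into a small Python structure to compare the trends numerically. This is a minimal sketch: the values are the eyeballed points from the lists, not logged experiment data, and the names `SERIES`, `net_gain`, and `first_segment_share` are hypothetical helpers introduced only for illustration.

```python
# Approximate (step, accuracy) readings transcribed from the chart description.
# These are eyeballed values, not logged experiment results.
SERIES = {
    "GRPO": [(0, 0.43), (50, 0.42), (150, 0.44),
             (250, 0.46), (350, 0.48), (450, 0.50)],
    "REC-OneSide-NoIS (0.2)": [(0, 0.45), (100, 0.47), (200, 0.48),
                               (300, 0.50), (400, 0.51), (450, 0.50)],
    "RED-Weight": [(0, 0.40), (50, 0.48), (150, 0.485),
                   (250, 0.48), (350, 0.49), (450, 0.485)],
}


def net_gain(points):
    """Accuracy change between the first and last reading."""
    return points[-1][1] - points[0][1]


def first_segment_share(points):
    """Fraction of the net gain achieved between the first two readings."""
    (_, first_acc), (_, second_acc) = points[0], points[1]
    return (second_acc - first_acc) / net_gain(points)


for name, points in SERIES.items():
    print(f"{name}: net gain {net_gain(points):+.3f}, "
          f"first-segment share {first_segment_share(points):.0%}")
```

The first segment spans ~50 steps for GRPO and RED-Weight but ~100 steps for REC-OneSide-NoIS (0.2), so the share figures are only a rough indication of how front-loaded each curve is.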

### Key Observations

* **Initial Performance Hierarchy:** At step 0, the order from highest to lowest accuracy is: REC-OneSide-NoIS (0.2) > GRPO > RED-Weight.

* **Learning Dynamics:** RED-Weight improves fastest initially but saturates quickly. GRPO improves more slowly but steadily. REC-OneSide-NoIS (0.2) starts strong and improves at a consistent pace until its peak near step 400.

* **Convergence:** By the end of the plotted training steps (~450), the performance of GRPO and REC-OneSide-NoIS (0.2) converges to a very similar level (~0.50), while RED-Weight remains slightly below them.

* **Volatility:** The RED-Weight line shows more minor fluctuations (ups and downs) after its initial rise compared to the smoother trajectories of the other two methods.
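
The ordering and plateau observations above can be checked against the approximate readings. The sketch below assumes only the eyeballed start and end values from the chart description; `START`, `END`, `RED_WEIGHT_PLATEAU`, `ranking`, and `spread` are hypothetical names used for illustration.

```python
# First and last approximate readings per method (eyeballed from the chart).
START = {"GRPO": 0.43, "REC-OneSide-NoIS (0.2)": 0.45, "RED-Weight": 0.40}
END = {"GRPO": 0.50, "REC-OneSide-NoIS (0.2)": 0.50, "RED-Weight": 0.485}

# RED-Weight readings after its initial jump (steps ~50 through ~450).
RED_WEIGHT_PLATEAU = [0.48, 0.485, 0.48, 0.49, 0.485]


def ranking(values):
    """Method names sorted from highest to lowest accuracy."""
    return sorted(values, key=values.get, reverse=True)


def spread(values):
    """Difference between the largest and smallest reading."""
    return max(values) - min(values)


print("Order at step 0:  ", ranking(START))
print("Order at step ~450:", ranking(END))
print("RED-Weight plateau spread:", round(spread(RED_WEIGHT_PLATEAU), 3))
```

GRPO and REC-OneSide-NoIS (0.2) tie at ~0.50 in the final reading, so their relative order at step ~450 carries no meaning; only RED-Weight's last place is informative.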

### Interpretation

This chart compares the learning speed and final accuracy of three algorithms on the MATH500 task under one synchronization setting (`sync_interval = 20`).

* **REC-OneSide-NoIS (0.2)** appears to be the strongest method overall, combining the highest starting accuracy (possibly due to better initialization or a more effective early-stage update rule) with sustained improvement. Its final accuracy (~0.50) ties GRPO for the highest.

* **GRPO** shows a steady learning curve. After a slight initial dip it improves consistently, which suggests it continues to benefit from extended training, and it ultimately matches the top performer.

* **RED-Weight** is characterized by very rapid early gains, which could be an advantage when training compute is severely limited. However, its early plateau suggests it may get stuck in a local optimum or lack a mechanism for the later-stage improvement that the other two methods show.

The key takeaway is that the choice of method involves a trade-off: **RED-Weight** for fast early results, **GRPO** for steady, predictable improvement, and **REC-OneSide-NoIS (0.2)** for strong performance throughout. All three curves were obtained at `sync_interval = 20`, so the relative ordering might change under other synchronization settings.