## Line Chart: MATH500 Accuracy vs. Training Steps

### Overview

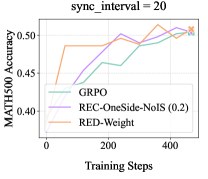

The line chart compares the MATH500 accuracy of three methods (GRPO, REC-OneSide-NoIS (0.2), and RED-Weight) as training progresses. The x-axis shows training steps and the y-axis shows MATH500 accuracy. The chart title gives the setting `sync_interval = 20`.

### Components/Axes

* **Title:** sync\_interval = 20

* **X-axis:** Training Steps (values ranging from 0 to 400)

* **Y-axis:** MATH500 Accuracy (tick labels ranging from 0.40 to 0.50; the final readings reported under Detailed Analysis sit at or slightly above the top label)

* **Legend:** Located in the center of the chart.

  * GRPO (light green)

  * REC-OneSide-NoIS (0.2) (light purple)

  * RED-Weight (light orange)

### Detailed Analysis

* **GRPO (light green):** The line starts at approximately 0.43 accuracy at 0 training steps. It initially decreases slightly, then increases steadily to approximately 0.51 accuracy at 400 training steps.

* **REC-OneSide-NoIS (0.2) (light purple):** The line starts at approximately 0.43 accuracy at 0 training steps. It increases to approximately 0.50 accuracy at 200 training steps, then fluctuates slightly before reaching approximately 0.51 accuracy at 400 training steps.

* **RED-Weight (light orange):** The line starts at approximately 0.43 accuracy at 0 training steps. It increases sharply to approximately 0.49 accuracy at 50 training steps, then fluctuates before reaching approximately 0.50 accuracy at 400 training steps.
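The readings above can be compared with a little arithmetic. The following is a minimal sketch that uses only the approximate values quoted in the bullets (they are visual estimates, not exact chart data); the names `readings`, `gain`, and `first_step_reaching` are hypothetical helpers introduced for illustration.

```python
# Approximate (step, accuracy) readings quoted in the Detailed Analysis bullets.
# These are visual estimates from the chart description, not logged metrics.
readings = {
    "GRPO": [(0, 0.43), (400, 0.51)],
    "REC-OneSide-NoIS (0.2)": [(0, 0.43), (200, 0.50), (400, 0.51)],
    "RED-Weight": [(0, 0.43), (50, 0.49), (400, 0.50)],
}


def gain(points):
    """Absolute accuracy change between the first and last reading."""
    return round(points[-1][1] - points[0][1], 2)


def first_step_reaching(points, threshold):
    """Earliest listed step whose accuracy meets the threshold, or None."""
    for step, acc in points:
        if acc >= threshold:
            return step
    return None


gains = {name: gain(pts) for name, pts in readings.items()}

for name, pts in readings.items():
    step = first_step_reaching(pts, 0.49)
    print(f"{name}: gain={gains[name]:+.2f}, first listed reading >= 0.49 at step {step}")
```

Because only a few points per method are quoted, the "first step" values are lower bounds on resolution: a method may have crossed the threshold between two listed readings.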

### Key Observations

* All three methods show an increasing trend in MATH500 accuracy as training steps increase.

* RED-Weight shows the most rapid initial increase in accuracy.

* After a slight initial dip, GRPO improves more gradually and steadily than the other two methods.

* At 400 training steps, all three methods reach similar accuracy levels (approximately 0.50 to 0.51).

### Interpretation

The chart tracks how MATH500 accuracy evolves during training for three methods. RED-Weight improves fastest early on, but all three methods reach similar accuracy by 400 training steps. The choice of method may therefore depend on whether fast initial improvement or steady improvement over time matters more. The title setting `sync_interval = 20` suggests that model parameters are synchronized every 20 training steps, which could influence the learning dynamics of each method, although the chart alone does not confirm this.