
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


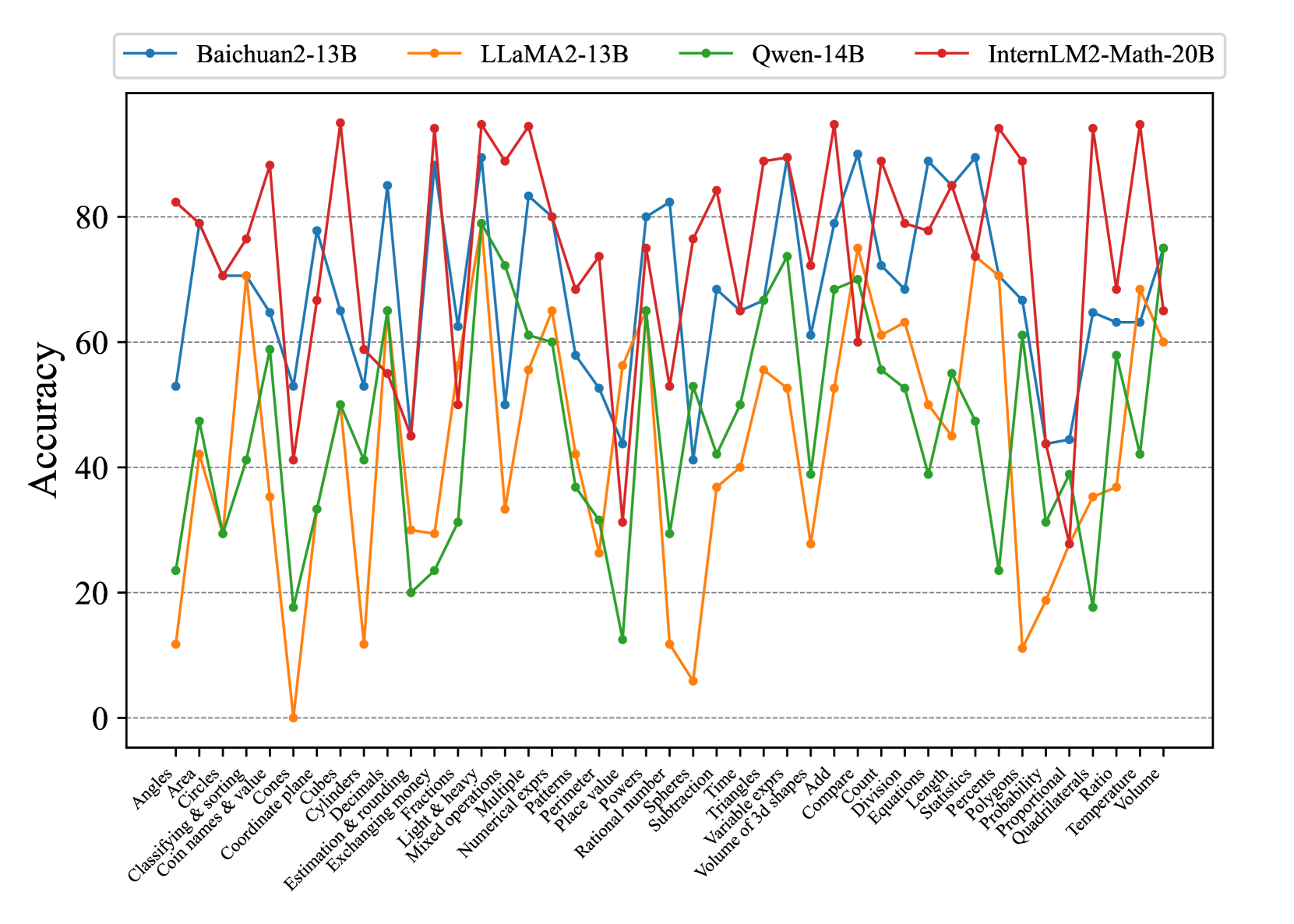

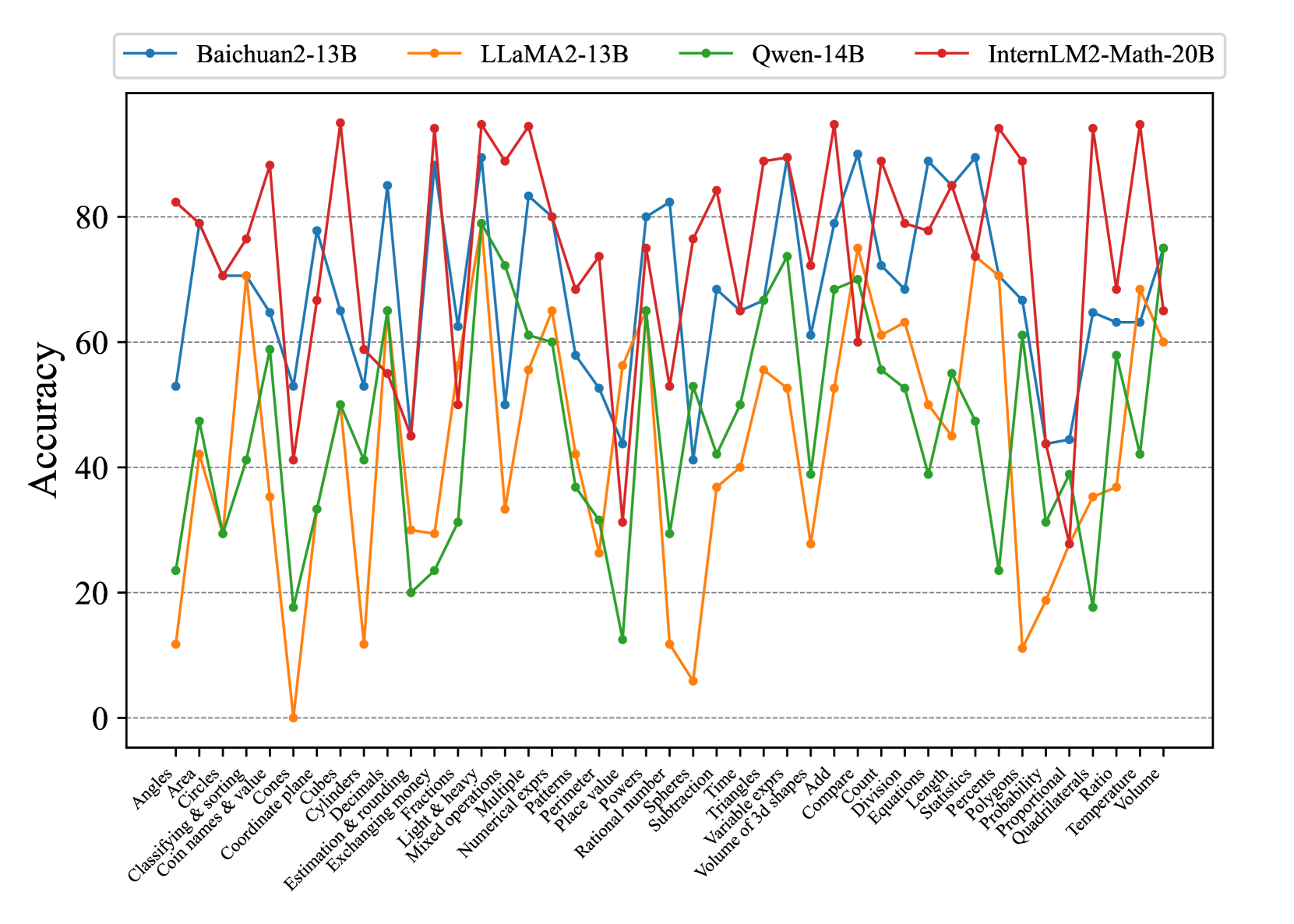

## Line Chart: Model Accuracy on Math Problems

### Overview

The image is a line chart comparing the accuracy of four different language models (Baichuan2-13B, LLaMA2-13B, Qwen-14B, and InternLM2-Math-20B) on a variety of math problem types. The x-axis represents different math problem categories, and the y-axis represents the accuracy score.

### Components/Axes

* **Title:** None explicitly present in the image.

* **X-axis:**
  * Label: Math problem categories.
  * Categories (from left to right): Angles, Area, Circles, Classifying & sorting, Coin names & value, Cones, Coordinate plane, Cubes, Cylinders, Decimals, Estimation & rounding, Exchanging money, Fractions, Light & heavy, Mixed operations, Multiple, Numerical exprs, Patterns, Perimeter, Place value, Powers, Rational number, Spheres, Subtraction, Time, Triangles, Variable exprs, Volume of 3d shapes, Add, Compare, Count, Division, Equations, Length, Percents, Polygons, Probability, Proportional, Quadrilaterals, Ratio, Statistics, Temperature, Volume.

* **Y-axis:**
  * Label: Accuracy
  * Scale: Labeled ticks at 0, 20, 40, 60, and 80. Several plotted values extend above the top tick, for example ~82% and ~90% on "Coordinate plane".

* **Legend:** Located at the top of the chart.
  * Baichuan2-13B (Blue)
  * LLaMA2-13B (Orange)
  * Qwen-14B (Green)
  * InternLM2-Math-20B (Red)

* **Gridlines:** Horizontal dashed lines at intervals of 20 on the y-axis.

### Detailed Analysis

Here's a breakdown of each model's performance across the different math problem categories:

* **Baichuan2-13B (Blue):**
  * Trend: Highly variable across categories. Starts at ~53% for "Angles," drops sharply to ~10% for "Area," then fluctuates significantly.
  * Key data points:
    * Angles: ~53%
    * Area: ~10%
    * Circles: ~40%
    * Coordinate plane: ~82%
    * Fractions: ~30%
    * Powers: ~65%
    * Time: ~45%
    * Add: ~75%
    * Volume: ~70%

* **LLaMA2-13B (Orange):**
  * Trend: Generally lower accuracy than the other models, with sharp drops in specific categories (e.g., ~2% on "Circles").
  * Key data points:
    * Angles: ~12%
    * Area: ~42%
    * Circles: ~2%
    * Coordinate plane: ~70%
    * Fractions: ~30%
    * Powers: ~58%
    * Time: ~38%
    * Add: ~40%
    * Volume: ~65%

* **Qwen-14B (Green):**
  * Trend: More consistent than LLaMA2-13B, but still variable. Generally lower than Baichuan2-13B and InternLM2-Math-20B.
  * Key data points:
    * Angles: ~23%
    * Area: ~45%
    * Circles: ~28%
    * Coordinate plane: ~65%
    * Fractions: ~22%
    * Powers: ~55%
    * Time: ~60%
    * Add: ~65%
    * Volume: ~75%

* **InternLM2-Math-20B (Red):**
  * Trend: Generally the highest accuracy of the four models. Performs strongly in most categories, though it still shows some variability.
  * Key data points:
    * Angles: ~80%
    * Area: ~70%
    * Circles: ~80%
    * Coordinate plane: ~90%
    * Fractions: ~75%
    * Powers: ~70%
    * Time: ~80%
    * Add: ~85%
    * Volume: ~68%
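You can check the "highest" and "lowest" claims by averaging the approximate data points listed above. The snippet below is a sketch and is not part of the original analysis. The numbers are the hand-read estimates from this section, so the results carry the same uncertainty. The variable names are illustrative.

```python
from statistics import mean

# Approximate accuracies (%) hand-read from the chart, as listed under
# "Key data points" in this section. Column order matches `categories`.
categories = ["Angles", "Area", "Circles", "Coordinate plane", "Fractions",
              "Powers", "Time", "Add", "Volume"]
scores = {
    "Baichuan2-13B":      [53, 10, 40, 82, 30, 65, 45, 75, 70],
    "LLaMA2-13B":         [12, 42, 2, 70, 30, 58, 38, 40, 65],
    "Qwen-14B":           [23, 45, 28, 65, 22, 55, 60, 65, 75],
    "InternLM2-Math-20B": [80, 70, 80, 90, 75, 70, 80, 85, 68],
}

# Rank models by their mean over the nine sampled categories, best first.
ranking = sorted(scores, key=lambda m: mean(scores[m]), reverse=True)

# Count the categories in which each model scores highest
# (a tie would go to the first model listed).
wins = {m: 0 for m in scores}
for i in range(len(categories)):
    best = max(scores, key=lambda m: scores[m][i])
    wins[best] += 1

for m in ranking:
    print(f"{m:20s} mean={mean(scores[m]):5.1f} wins={wins[m]}/{len(categories)}")
```

With these numbers, InternLM2-Math-20B ranks first (mean ≈ 77.6, highest in 8 of 9 sampled categories) and LLaMA2-13B ranks last (mean ≈ 39.7). Qwen-14B's only win is "Volume."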

### Key Observations

* InternLM2-Math-20B consistently outperforms the other models across most math problem categories.

* LLaMA2-13B generally has the lowest accuracy.

* All models exhibit variability in performance depending on the specific math problem category.

* Performance differs most between models on "Circles", "Angles", and "Area", where the best and worst listed values are roughly 60 to 80 points apart.
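One way to make "significant performance differences" concrete is the spread in each category: the best model's score minus the worst model's score. This is a minimal sketch that uses the approximate values listed in the Detailed Analysis. The choice of max-minus-min as the spread measure is an assumption; the chart does not define one.

```python
# Approximate accuracies (%) per category, ordered
# (Baichuan2-13B, LLaMA2-13B, Qwen-14B, InternLM2-Math-20B).
by_category = {
    "Angles": (53, 12, 23, 80),
    "Area": (10, 42, 45, 70),
    "Circles": (40, 2, 28, 80),
    "Coordinate plane": (82, 70, 65, 90),
    "Fractions": (30, 30, 22, 75),
    "Powers": (65, 58, 55, 70),
    "Time": (45, 38, 60, 80),
    "Add": (75, 40, 65, 85),
    "Volume": (70, 65, 75, 68),
}

# Spread = best score minus worst score within each category.
spread = {cat: max(v) - min(v) for cat, v in by_category.items()}

# The three categories with the widest gap between models.
top3 = sorted(spread, key=spread.get, reverse=True)[:3]
for cat in top3:
    print(cat, spread[cat])
```

On these values, the widest gaps are "Circles" (78 points), "Angles" (68), and "Area" (60). These are the three categories named in the observation.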

### Interpretation

The data suggests that InternLM2-Math-20B is the most proficient at solving a wide range of math problems among the models tested. The variability in performance across different categories highlights the strengths and weaknesses of each model in specific areas of mathematical reasoning. The relatively poor performance of LLaMA2-13B suggests it may require further training or fine-tuning to achieve comparable accuracy to the other models. The chart demonstrates the importance of evaluating language models on diverse datasets to understand their capabilities and limitations.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Line Chart: Model Accuracy on Math Problems

### Overview

This line chart compares the accuracy of four large language models – Baichuan2-13B, LLaMA2-13B, Qwen-14B, and InternLM2-Math-20B – across a range of mathematical problem types. The x-axis represents different math concepts, and the y-axis represents the accuracy score, ranging from 0 to 100.

### Components/Axes

* **X-axis Title:** Math Concepts (Categories)

* **Y-axis Title:** Accuracy

* **Y-axis Scale:** 0 to 100, with increments of 10.

* **Legend:** Located at the top of the chart, horizontally aligned.
  * Baichuan2-13B (Orange)
  * LLaMA2-13B (Red)
  * Qwen-14B (Green)
  * InternLM2-Math-20B (Teal)

* **Math Concept Categories (X-axis):** Angles, Classifying & sorting, Circles, Cones, Coordinate plane, Cylinders, Decimals, Estimation & rounding, Exchanging & multiplying, Fractions, Light & heavy, Mixed operations, Multiple, Numerical expressions, Patterns, Perimeter, Place value, Powers, Rational numbers, Spheres, Subtraction, Time, Triangles, Variable expressions, Volume of 3d shapes, Add, Compare, Division, Equations, Length, Statistics, Polygons, Probability, Proportional, Quadrilaterals, Ratio, Temperature, Volume.

### Detailed Analysis

The chart displays four lines, each representing a model's accuracy across the math concepts.

* **Baichuan2-13B (Orange):** The line fluctuates significantly. It starts at approximately 75, dips to around 25, then rises again to approximately 85, and ends around 60.

* **LLaMA2-13B (Red):** This line generally stays between 60 and 90. It begins at around 80, dips to approximately 60, rises to around 90, and ends around 65.

* **Qwen-14B (Green):** This line exhibits the most variability, with large swings in accuracy. It starts at approximately 30, peaks around 80, and ends around 20.

* **InternLM2-Math-20B (Teal):** This line is relatively stable, generally staying between 60 and 85. It begins at approximately 70, rises to around 85, and ends around 70.

Here's a breakdown of approximate accuracy values for specific concepts:

| Math Concept          | Baichuan2-13B | LLaMA2-13B | Qwen-14B | InternLM2-Math-20B |
| --------------------- | ------------- | ---------- | -------- | ------------------ |
| Angles                | ~75           | ~80        | ~40      | ~70                |
| Circles               | ~65           | ~70        | ~30      | ~75                |
| Cones                 | ~55           | ~60        | ~20      | ~65                |
| Coordinate plane      | ~80           | ~85        | ~60      | ~80                |
| Decimals              | ~85           | ~90        | ~70      | ~85                |
| Estimation & rounding | ~70           | ~75        | ~50      | ~70                |
| Fractions             | ~60           | ~65        | ~40      | ~60                |
| Mixed operations      | ~30           | ~40        | ~10      | ~40                |
| Patterns              | ~70           | ~80        | ~50      | ~75                |
| Perimeter             | ~60           | ~70        | ~30      | ~65                |
| Probability           | ~50           | ~60        | ~20      | ~55                |
| Volume                | ~60           | ~65        | ~20      | ~60                |
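The consistency claims that follow can be checked against the table by computing each model's spread across the 12 concepts. This is a sketch that uses the approximate table values. Population standard deviation (`statistics.pstdev`) is one reasonable spread measure chosen here; the original description does not say how "variance" was judged.

```python
from statistics import mean, pstdev

# Approximate values from the table, in row order: Angles, Circles, Cones,
# Coordinate plane, Decimals, Estimation & rounding, Fractions,
# Mixed operations, Patterns, Perimeter, Probability, Volume.
table = {
    "Baichuan2-13B":      [75, 65, 55, 80, 85, 70, 60, 30, 70, 60, 50, 60],
    "LLaMA2-13B":         [80, 70, 60, 85, 90, 75, 65, 40, 80, 70, 60, 65],
    "Qwen-14B":           [40, 30, 20, 60, 70, 50, 40, 10, 50, 30, 20, 20],
    "InternLM2-Math-20B": [70, 75, 65, 80, 85, 70, 60, 40, 75, 65, 55, 60],
}

# Population standard deviation across concepts: larger means less consistent.
spread = {m: pstdev(v) for m, v in table.items()}

# Print models from most to least consistent.
for m in sorted(spread, key=spread.get):
    print(f"{m:20s} mean={mean(table[m]):5.1f} stdev={spread[m]:5.1f}")
```

On these values, Qwen-14B has the largest spread (about 17.5 points). InternLM2-Math-20B (about 11.6) and LLaMA2-13B (about 12.9) have the smallest. This matches the observations below.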

### Key Observations

* Qwen-14B demonstrates the highest degree of variance in accuracy, suggesting it may be more sensitive to the specific type of math problem.

* LLaMA2-13B and InternLM2-Math-20B generally exhibit more consistent performance across the tested concepts.

* Baichuan2-13B shows a strong performance on decimals, but struggles with mixed operations.

* All models score lowest on "Mixed operations." "Fractions" is below the average for three of the four models, although "Probability," "Cones," and "Volume" have lower cross-model averages.

* InternLM2-Math-20B consistently performs well, but doesn't reach the peak accuracy of LLaMA2-13B on certain tasks.
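To see which concepts are hardest overall, average each table row across the four models, and find each model's weakest concept. This is a minimal sketch over the approximate table values. The dictionary layout and model ordering are illustrative choices.

```python
from statistics import mean

# Approximate accuracies from the table, per concept, ordered
# (Baichuan2-13B, LLaMA2-13B, Qwen-14B, InternLM2-Math-20B).
rows = {
    "Angles": (75, 80, 40, 70),
    "Circles": (65, 70, 30, 75),
    "Cones": (55, 60, 20, 65),
    "Coordinate plane": (80, 85, 60, 80),
    "Decimals": (85, 90, 70, 85),
    "Estimation & rounding": (70, 75, 50, 70),
    "Fractions": (60, 65, 40, 60),
    "Mixed operations": (30, 40, 10, 40),
    "Patterns": (70, 80, 50, 75),
    "Perimeter": (60, 70, 30, 65),
    "Probability": (50, 60, 20, 55),
    "Volume": (60, 65, 20, 60),
}
models = ["Baichuan2-13B", "LLaMA2-13B", "Qwen-14B", "InternLM2-Math-20B"]

# Cross-model average per concept, sorted from hardest to easiest.
concept_mean = {c: mean(v) for c, v in rows.items()}
ordered = sorted(concept_mean, key=concept_mean.get)

# Each model's lowest-scoring concept (column-wise minimum).
lowest = {m: min(rows, key=lambda c: rows[c][i]) for i, m in enumerate(models)}

for c in ordered[:5]:
    print(f"{c:22s} {concept_mean[c]:.2f}")
print(lowest)
```

"Mixed operations" comes out lowest both on average (30.00) and for every individual model. "Fractions" (56.25) ranks below "Probability," "Cones," and "Volume" in difficulty, which means it scores higher than those three.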

### Interpretation

The chart provides a comparative analysis of the mathematical reasoning capabilities of four large language models. The varying accuracy scores across math concepts highlight each model's strengths and weaknesses. The large fluctuations in Qwen-14B's performance suggest it may be more error-prone or may need more specialized training for certain mathematical tasks. The relatively stable performance of LLaMA2-13B and InternLM2-Math-20B may reflect more robust handling of these problem types. The low scores on "Mixed operations" across all models, together with the below-average "Fractions" scores for most of them, suggest that these areas need further improvement in language model training. Overall, these models can solve many math problems, but their performance depends heavily on the problem type and, plausibly, on each model's architecture and training data. InternLM2-Math-20B, which is designed specifically for mathematical tasks, shows promising results, but further research is needed to optimize its performance and address its limitations.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Chart: Accuracy of Four Language Models Across Mathematical Categories

### Overview

This image is a line chart comparing the performance accuracy of four different large language models (LLMs) across a wide range of mathematical problem categories. The chart displays how each model's accuracy varies significantly depending on the specific type of math problem.

### Components/Axes

* **Chart Type:** Multi-series line chart with markers.

* **Y-Axis:** Labeled "Accuracy". The scale runs from 0 to approximately 95, with major gridlines at intervals of 20 (0, 20, 40, 60, 80).

* **X-Axis:** Lists 43 distinct mathematical categories. The labels are rotated approximately 45 degrees for readability. The categories are, from left to right:

1. Angles

2. Area

3. Circles

4. Classifying & sorting

5. Coin names & value

6. Cones

7. Coordinate plane

8. Cubes

9. Cylinders

10. Decimals

11. Estimation & rounding

12. Exchanging money

13. Fractions

14. Light & heavy

15. Mixed operations

16. Multiple

17. Numerical exprs

18. Patterns

19. Perimeter

20. Place value

21. Powers

22. Rational number

23. Spheres

24. Subtraction

25. Time

26. Triangles

27. Variable exprs

28. Volume of 3d shapes

29. Add

30. Compare

31. Count

32. Division

33. Equations

34. Length

35. Statistics

36. Percents

37. Polygons

38. Probability

39. Proportional

40. Quadrilaterals

41. Ratio

42. Temperature

43. Volume

* **Legend:** Positioned at the top center of the chart. It defines four data series:
  * **Baichuan2-13B:** Blue line with circular markers.
  * **LLaMA2-13B:** Orange line with circular markers.
  * **Qwen-14B:** Green line with circular markers.
  * **InternLM2-Math-20B:** Red line with circular markers.

### Detailed Analysis

The chart shows high variability in performance for all models across the 43 categories. Below is a summary of trends and approximate accuracy values for each model.

**1. Baichuan2-13B (Blue Line):**

* **Trend:** Highly volatile, with frequent sharp peaks and troughs. Often performs in the middle-to-high range but has significant dips.

* **Notable Highs:** Fractions (~90), Mixed operations (~83), Numerical exprs (~82), Add (~90), Equations (~88), Percents (~88).

* **Notable Lows:** Coordinate plane (~53), Subtraction (~41), Probability (~44), Quadrilaterals (~44).

**2. LLaMA2-13B (Orange Line):**

* **Trend:** Generally the lowest-performing model across most categories, with the most extreme low values. Shows a few moderate peaks.

* **Notable Highs:** Circles (~70), Fractions (~78), Mixed operations (~65), Add (~75), Compare (~61), Count (~63).

* **Notable Lows:** Coordinate plane (~0), Cubes (~12), Subtraction (~6), Probability (~11), Quadrilaterals (~35).

**3. Qwen-14B (Green Line):**

* **Trend:** Performance is often in the middle range, below Baichuan2 and InternLM2 but above LLaMA2. Shows a distinct pattern of peaks and valleys.

* **Notable Highs:** Fractions (~79), Mixed operations (~72), Numerical exprs (~61), Add (~74), Compare (~69), Volume (~75).

* **Notable Lows:** Coordinate plane (~18), Cubes (~33), Subtraction (~13), Probability (~23), Quadrilaterals (~18).

**4. InternLM2-Math-20B (Red Line):**

* **Trend:** Frequently the top-performing model, especially in arithmetic and algebraic categories. Its line is often at the top of the chart, though it has sharp drops in geometry and measurement topics.

* **Notable Highs:** Fractions (~94), Mixed operations (~94), Numerical exprs (~80), Add (~94), Equations (~84), Percents (~94), Ratio (~94).

* **Notable Lows:** Coordinate plane (~41), Cubes (~59), Subtraction (~53), Probability (~28), Quadrilaterals (~28).

**Cross-Model Comparison by Category Type:**

* **Arithmetic & Algebra (e.g., Fractions, Mixed operations, Add, Equations):** InternLM2-Math-20B and Baichuan2-13B consistently lead, often scoring above 80. LLaMA2-13B lags significantly.

* **Geometry & Measurement (e.g., Coordinate plane, Cubes, Spheres, Volume of 3d shapes):** Performance is more mixed and generally lower for all models. No single model dominates. For "Coordinate plane," all models score below 55, with LLaMA2 at ~0.

* **Basic Concepts (e.g., Count, Compare, Classifying & sorting):** Models show relatively closer performance, though InternLM2 and Baichuan2 still tend to be higher.
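You can check the arithmetic-versus-geometry split against the approximate values quoted in the per-model bullets. This is an illustrative sketch. It uses only categories where all four models have a quoted value (Fractions, Mixed operations, Add, Coordinate plane), and the dictionary layout is our own choice.

```python
# Approximate accuracies quoted in the "Notable Highs/Lows" bullets above.
scores = {
    "Fractions":        {"Baichuan2-13B": 90, "LLaMA2-13B": 78, "Qwen-14B": 79, "InternLM2-Math-20B": 94},
    "Mixed operations": {"Baichuan2-13B": 83, "LLaMA2-13B": 65, "Qwen-14B": 72, "InternLM2-Math-20B": 94},
    "Add":              {"Baichuan2-13B": 90, "LLaMA2-13B": 75, "Qwen-14B": 74, "InternLM2-Math-20B": 94},
    "Coordinate plane": {"Baichuan2-13B": 53, "LLaMA2-13B": 0,  "Qwen-14B": 18, "InternLM2-Math-20B": 41},
}

# Top two models in each arithmetic category, highest first.
arithmetic = ["Fractions", "Mixed operations", "Add"]
leaders = {
    cat: sorted(scores[cat], key=scores[cat].get, reverse=True)[:2]
    for cat in arithmetic
}

# Best score any model reaches on "Coordinate plane".
coord_max = max(scores["Coordinate plane"].values())

for cat, top_two in leaders.items():
    print(cat, top_two)
print("Coordinate plane best:", coord_max)
```

InternLM2-Math-20B and Baichuan2-13B are the top two in each of the three arithmetic categories. No model exceeds 53 on "Coordinate plane."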

### Key Observations

1. **Model Specialization:** InternLM2-Math-20B shows a clear strength in core mathematical operations (fractions, mixed operations, equations, percents, ratio), suggesting specialized training or fine-tuning for these areas.

2. **Universal Difficulty:** Certain categories prove challenging for all models. "Coordinate plane" and "Probability" see low scores across the board, suggesting these are harder reasoning tasks for current LLMs.

3. **Extreme Volatility:** The performance of each model is not consistent; it is highly dependent on the specific problem category. A model can be near the top in one category and near the bottom in another.

4. **LLaMA2-13B's Struggles:** LLaMA2-13B has the weakest overall performance, scoring at or near 0% on "Coordinate plane" (~0) and "Subtraction" (~6). This suggests a possible lack of relevant training data or capability for those specific math skills.
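The "universal difficulty" and "weakest overall" observations can be checked on the seven categories for which this description quotes a value for every model. This is a sketch under stated assumptions. The 60-point ceiling for "hard for every model" is an arbitrary, hypothetical cut-off, and the averages cover only this seven-category subset, not the full chart.

```python
from statistics import mean

# Approximate accuracies quoted above, ordered
# (Baichuan2-13B, LLaMA2-13B, Qwen-14B, InternLM2-Math-20B).
reported = {
    "Fractions":        (90, 78, 79, 94),
    "Mixed operations": (83, 65, 72, 94),
    "Add":              (90, 75, 74, 94),
    "Coordinate plane": (53, 0, 18, 41),
    "Subtraction":      (41, 6, 13, 53),
    "Probability":      (44, 11, 23, 28),
    "Quadrilaterals":   (44, 35, 18, 28),
}
models = ("Baichuan2-13B", "LLaMA2-13B", "Qwen-14B", "InternLM2-Math-20B")
CEILING = 60  # hypothetical threshold: even the best model stays below it

# Categories where the best-performing model is still under the ceiling.
hard = sorted(c for c, v in reported.items() if max(v) < CEILING)

# Per-model average over this seven-category subset only.
avg = {m: mean(v[i] for v in reported.values()) for i, m in enumerate(models)}
weakest = min(avg, key=avg.get)

print("Hard for every model:", hard)
for m in models:
    print(f"{m:20s} {avg[m]:.1f}")
```

This check flags "Coordinate plane" and "Probability," but also "Subtraction" and "Quadrilaterals." LLaMA2-13B has the lowest subset average (about 38.6).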

### Interpretation

This chart provides a diagnostic breakdown of LLM capabilities in mathematical reasoning. It moves beyond an "average accuracy" score to reveal a nuanced landscape of strengths and weaknesses.

* **What the data suggests:** Mathematical reasoning in LLMs is not a monolithic skill. Proficiency is highly fragmented across different domains. A model's overall benchmark score would mask these critical variations.

* **How elements relate:** The x-axis categories represent a taxonomy of elementary to middle-school math skills. The diverging lines show that model architecture and training data create distinct "profiles" of competency. For instance, InternLM2-Math-20B's profile is spiked in arithmetic/algebra, while its geometry performance is more average.

* **Notable anomalies:** The near-zero score for LLaMA2-13B on "Coordinate plane" is a stark outlier, suggesting a complete failure mode for that model on that specific task type. The consistent high performance of InternLM2-Math-20B on categories involving fractions, percents, and ratios indicates a possible targeted optimization for proportional reasoning.

* **Implication:** For practical applications, one cannot assume a model good at "math" is good at *all* math. Task-specific evaluation is crucial. The chart also highlights areas (like probability and coordinate geometry) where all current models need significant improvement, guiding future research and training efforts.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graph: Accuracy Comparison of Math Models on Various Problems

### Overview

The image is a multi-line graph comparing the accuracy of four large language models (LLMs) across roughly 40 math problem categories. The models compared are Baichuan2-13B (blue), LLaMA2-13B (orange), Qwen-14B (green), and InternLM2-Math-20B (red). The y-axis shows accuracy from 0 to 100%, and the math topics are listed in sequence along the x-axis.

### Components/Axes

- **Legend**: Top-left corner, mapping colors to models:
  - Blue: Baichuan2-13B
  - Orange: LLaMA2-13B
  - Green: Qwen-14B
  - Red: InternLM2-Math-20B

- **X-axis**: "Math Problems", with roughly 40 labeled categories (Angles, Area, Circles, Classifying & sorting, Coin names & value, Coordinate plane, Cubes, Decimals, Estimation & rounding, Fractions, Light & heavy, Mixed operations, Multiple operations, Numerical expressions, Patterns, Perimeter, Place value, Powers, Rational number, Spheres, Subtraction, Time, Triangles, Variable expressions, Volume of 3d shapes, Add, Compare, Count, Division, Equations, Length, Statistics, Percent, Polygons, Probability, Proportional, Quadrilaterals, Ratio, Temperature, Volume).

- **Y-axis**: "Accuracy (%)" with ticks at 0, 20, 40, 60, 80, 100.

- **Lines**: Four colored lines representing model performance across topics.

### Detailed Analysis

1. **InternLM2-Math-20B (Red Line)**:
   - Consistently the highest performer, peaking at 95%+ in Coordinate plane, Fractions, and Time.
   - Shows minor dips but maintains >70% accuracy in all categories.

2. **Baichuan2-13B (Blue Line)**:
   - Second-highest performer overall, with peaks near 90% (e.g., Coordinate plane, Fractions).
   - More volatile than InternLM2, with sharper drops (e.g., 60% in Decimals, 50% in Estimation & rounding).
   - Strong in geometry topics (Angles, Area, Perimeter).

3. **LLaMA2-13B (Orange Line)**:
   - Most variable performance, with extreme lows (e.g., 0% in Coordinate plane, 5% in Subtraction).
   - Strong in algebraic topics (Equations, Variables), with peaks near 80%.
   - Weak in spatial reasoning (Coordinate plane, Geometry).

4. **Qwen-14B (Green Line)**:
   - Moderate performance, averaging 60-70%.
   - Peaks in algebraic topics (Equations, Variables) at ~75%.
   - Notable dip to 15% in Place value, followed by recovery in Statistics (~60%).

### Key Observations

- **Outliers**:
  - LLaMA2-13B: 0% accuracy in Coordinate plane (potential data error or model weakness).
  - Qwen-14B: 15% in Place value (significant drop).

- **Trends**:
  - InternLM2-Math-20B dominates in geometry and arithmetic (Angles, Fractions, Time).
  - Spatial reasoning (Coordinate plane, Geometry) separates the models most sharply. InternLM2-Math-20B and Baichuan2-13B peak near or above 90% on Coordinate plane, while LLaMA2-13B drops to 0%.
  - Algebraic topics (Equations, Variables) show higher performance across models.
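One simple way to make "significant drop" concrete is to flag any category far below a model's typical score. The sketch below applies a median-based rule to the four Qwen-14B values quoted in this description. The 30-point threshold and the `flag_drops` helper are hypothetical choices, not something the chart defines.

```python
from statistics import median

# Approximate Qwen-14B accuracies (%) quoted in this description.
qwen = {"Equations": 75, "Variables": 75, "Place value": 15, "Statistics": 60}


def flag_drops(scores, threshold=30.0):
    """Return categories scoring more than `threshold` points below the median.

    Hypothetical helper: the median is used instead of the mean so that one
    very low value does not drag the reference point down with it.
    """
    m = median(scores.values())
    return sorted(c for c, s in scores.items() if m - s > threshold)


print(flag_drops(qwen))
```

Here the median is 67.5, so only Place value (52.5 points below it) is flagged. Statistics (7.5 below) is not.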

### Interpretation

The data suggests **InternLM2-Math-20B** is the most robust model for math problem-solving, likely due to specialized training on mathematical reasoning. Its consistent performance across diverse topics indicates strong generalization. **LLaMA2-13B** exhibits the most variability, with critical failures in spatial reasoning (Coordinate plane) but strengths in algebraic manipulation. **Qwen-14B** and **Baichuan2-13B** show mid-tier performance, with Qwen excelling in algebraic topics and Baichuan2 performing well in geometry. The anomalies (e.g., LLaMA2's 0% in Coordinate plane) highlight potential gaps in model training data or architectural limitations for specific problem types. This comparison underscores the importance of model specialization for domain-specific tasks like mathematics.
