
## Screenshot: Model Explanations Interface

### Overview

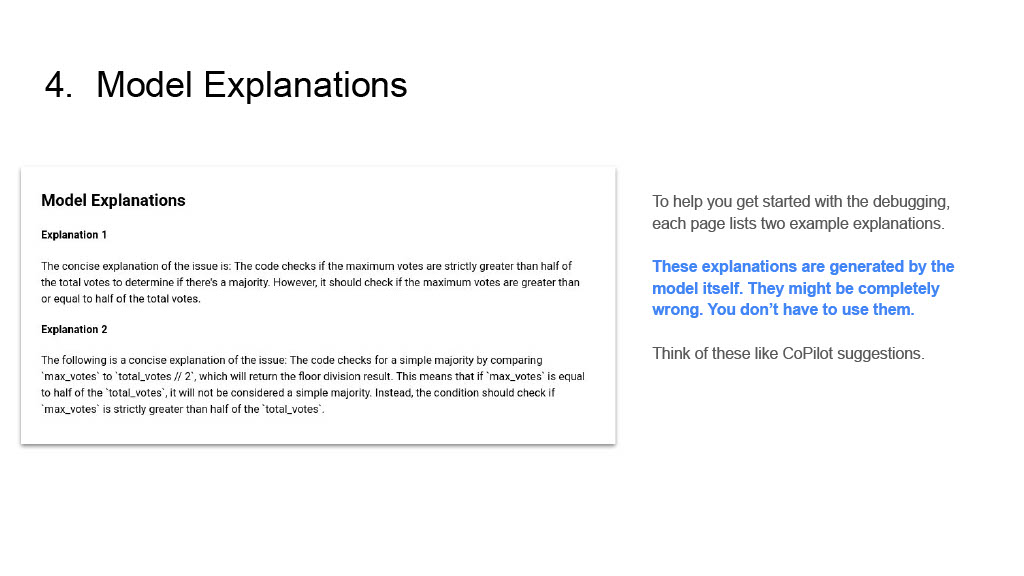

The image is a screenshot of a digital document or application interface, likely from a debugging or code analysis tool. It shows a section titled "4. Model Explanations", which contains a bordered content box with two example explanations generated by an AI model, alongside guidance text for the user.

### Components/Areas

The image is divided into two primary regions:

1. **Left Region (Main Content Box):** A white box with a light gray border containing the core content.

   * **Box Title:** "Model Explanations" (bold, top-left inside the box).

   * **Content:** Two numbered explanations ("Explanation 1" and "Explanation 2") describing a potential issue in code logic related to determining a majority vote.

2. **Right Region (User Guidance):** Text placed to the right of the main box, providing context and warnings about the model-generated explanations.

### Detailed Analysis / Content Details

**1. Main Heading (Top of Image):**

* Text: `4. Model Explanations`

**2. Content Box (Left Region):**

* **Title:** `Model Explanations`

* **Explanation 1:**

  * **Label:** `Explanation 1`

  * **Transcribed Text:** `The concise explanation of the issue is: The code checks if the maximum votes are strictly greater than half of the total votes to determine if there's a majority. However, it should check if the maximum votes are greater than or equal to half of the total votes.`

* **Explanation 2:**

  * **Label:** `Explanation 2`

  * **Transcribed Text:** ``The following is a concise explanation of the issue: The code checks for a simple majority by comparing `max_votes` to `total_votes / 2`, which will return the floor division result. This means that if `max_votes` is equal to half of the `total_votes`, it will not be considered a simple majority. Instead, the condition should check if `max_votes` is strictly greater than half of the `total_votes`.``
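
The two explanations differ only in how they treat the boundary case where the leading candidate holds exactly half of the votes. The code under discussion is not visible in the screenshot, so the following is only a minimal Python sketch, with hypothetical function names, of how the two proposed comparisons behave:

```python
def has_majority_strict(max_votes: int, total_votes: int) -> bool:
    """Strictly more than half (the condition Explanation 2 recommends)."""
    # Comparing 2 * max_votes with total_votes avoids division entirely.
    return 2 * max_votes > total_votes


def has_majority_inclusive(max_votes: int, total_votes: int) -> bool:
    """At least half (the condition Explanation 1 recommends)."""
    return 2 * max_votes >= total_votes


# Boundary case: 5 of 10 votes is exactly half.
print(has_majority_strict(5, 10))     # False
print(has_majority_inclusive(5, 10))  # True

# Away from the boundary, the two checks agree.
print(has_majority_strict(6, 10), has_majority_inclusive(6, 10))  # True True
```

Which of the two is the intended behavior depends on the problem statement, which the screenshot does not show.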

**3. User Guidance Text (Right Region):**

* **First Paragraph:** `To help you get started with the debugging, each page lists two example explanations.`

* **Highlighted Warning (Blue Text):** `These explanations are generated by the model itself. They might be completely wrong. You don't have to use them.`

* **Final Analogy:** `Think of these like CoPilot suggestions.`

### Key Observations

* **Purpose:** The interface is designed to assist a user in debugging code by providing AI-generated hypotheses about the nature of a bug.

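* **Content Focus:** Both explanations target the same comparison in the majority check, but they disagree on the fix: Explanation 1 says the code uses a strict comparison (`>`) and should use `>=`, whereas Explanation 2 says the condition should be strictly greater than half (`>`).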

* **Critical Disclaimer:** The most visually prominent text (in blue) is a strong disclaimer about the potential inaccuracy of the model's output, explicitly stating that the user is not obligated to use the explanations.

* **Analogy:** The guidance frames the model's role as a suggestive tool, similar to GitHub Copilot (written "CoPilot" in the screenshot), rather than an authoritative source.

### Interpretation

This screenshot depicts a human-AI collaborative debugging environment. The system's design acknowledges the fallibility of its AI component by providing clear warnings and framing its output as optional "suggestions." The two explanations differ in phrasing and technical detail (Explanation 2 names specific code elements such as `max_votes` and `total_votes / 2`), and although both point to the comparison used in the majority check, they recommend opposite fixes: Explanation 1 argues for greater than or equal to half, while Explanation 2 argues for strictly greater than half. Explanation 2 is also internally inconsistent, since it objects that an exact half is not counted as a majority and then recommends a strict comparison, which excludes the exact-half case as well. At most one of the two can be correct, which illustrates why the guidance warns that the explanations "might be completely wrong."

The interface therefore encourages critical evaluation: the user is expected to read, understand, and verify the model's explanations against their own code and logic, using them as a starting point rather than a final answer. The statement that "each page lists two example explanations" implies this is part of a larger, paginated debugging report or tutorial.
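
Explanation 2's remark about floor division is the kind of claim a user can check directly. The screenshot does not say which language the code is written in; assuming Python 3, `/` is true division and `//` is floor division. The sketch below (the helper name `disagreements` is hypothetical) lists the integer vote counts for which the two kinds of division lead to different comparison results:

```python
def disagreements(total_votes: int) -> list[tuple[str, int]]:
    """Return (operator, max_votes) pairs where `/` and `//` give different answers."""
    found = []
    for max_votes in range(total_votes + 1):
        true_half = total_votes / 2    # true division, e.g. 5 / 2 == 2.5
        floor_half = total_votes // 2  # floor division, e.g. 5 // 2 == 2
        if (max_votes > true_half) != (max_votes > floor_half):
            found.append((">", max_votes))
        if (max_votes >= true_half) != (max_votes >= floor_half):
            found.append((">=", max_votes))
    return found


print(disagreements(5))   # [('>=', 2)]
print(disagreements(10))  # []
```

Under these assumptions, the choice of division operator never changes a strict `>` check on integer counts, and it changes a `>=` check only when the total is odd.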