## Line Chart: Similarity vs. Reasoning Step for Various AI Models

### Overview

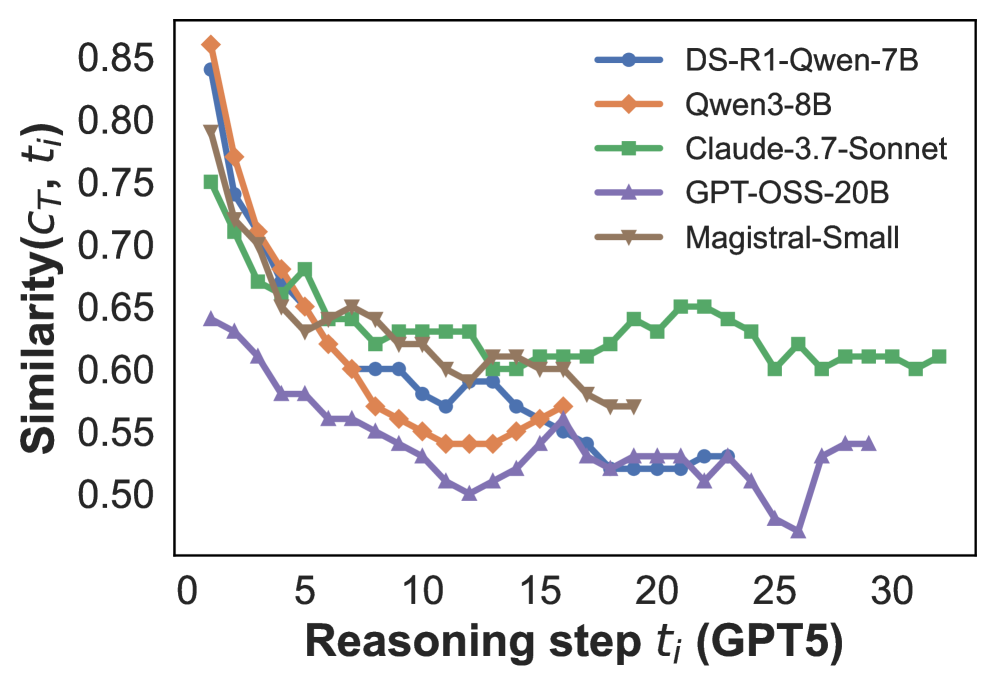

The image displays a line chart comparing the performance of five different AI models. The chart plots a "Similarity" metric against the number of "Reasoning steps." All models show a general downward trend in similarity as the number of reasoning steps increases, though the rate of decline and final values vary significantly.

### Components/Axes

* **Chart Type:** Multi-series line chart with markers.

* **Y-Axis:**

* **Label:** `Similarity(c_T, t_i)`

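* **Scale:** Linear, with labeled ticks from 0.50 to 0.85. A few plotted points fall slightly outside the labeled range (~0.86 at the top, ~0.48 at the bottom).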

* **Ticks:** Major ticks at 0.05 intervals (0.50, 0.55, 0.60, 0.65, 0.70, 0.75, 0.80, 0.85).

* **X-Axis:**

* **Label:** `Reasoning step t_i (GPT5)`

* **Scale:** Linear, ranging from 0 to 30.

* **Ticks:** Major ticks at intervals of 5 (0, 5, 10, 15, 20, 25, 30).

* **Legend:** Located in the top-right quadrant of the chart area. It contains five entries, each with a unique color, line style, and marker shape:

1. **DS-R1-Qwen-7B:** Blue line with circle markers.

2. **Qwen3-8B:** Orange line with diamond markers.

3. **Claude-3.7-Sonnet:** Green line with square markers.

4. **GPT-OSS-20B:** Purple line with upward-pointing triangle markers.

5. **Magistral-Small:** Brown line with downward-pointing triangle markers.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **DS-R1-Qwen-7B (Blue, Circles):**

* **Trend:** Starts very high, experiences a steep initial decline, then fluctuates with a general downward drift.

* **Key Points:** Step 0: ~0.84, Step 5: ~0.67, Step 10: ~0.60, Step 15: ~0.57, Step 20: ~0.52, Step 25: ~0.53.

2. **Qwen3-8B (Orange, Diamonds):**

* **Trend:** Starts the highest, declines sharply until around step 12, then recovers slightly near step 15 before dipping again where the line ends.

* **Key Points:** Step 0: ~0.86, Step 5: ~0.65, Step 10: ~0.55, Step 15: ~0.57, final point (near step 18): ~0.53.

3. **Claude-3.7-Sonnet (Green, Squares):**

* **Trend:** Starts second-lowest (~0.75), declines more gradually than the higher-starting models, and is the most stable in the latter half, fluctuating between ~0.60 and ~0.64 after step 15 with no further net decline.

* **Key Points:** Step 0: ~0.75, Step 5: ~0.68, Step 10: ~0.63, Step 15: ~0.61, Step 20: ~0.64, Step 25: ~0.60, Step 30: ~0.61.

4. **GPT-OSS-20B (Purple, Up-Triangles):**

* **Trend:** Starts the lowest, declines to ~0.52 by step 15, holds roughly level through step 20, drops to its minimum around step 25, then recovers sharply.

* **Key Points:** Step 0: ~0.64, Step 5: ~0.58, Step 10: ~0.54, Step 15: ~0.52, Step 20: ~0.53, Step 25: ~0.48 (lowest point on chart), Step 30: ~0.54.

5. **Magistral-Small (Brown, Down-Triangles):**

* **Trend:** Starts high, declines, and then fluctuates in a middle range before the line ends early.

* **Key Points:** Step 0: ~0.79, Step 5: ~0.64, Step 10: ~0.65, Step 15: ~0.60, Step 20: ~0.57 (line ends near step 20).

### Key Observations

* **Initial Performance:** At step 0, Qwen3-8B (~0.86) and DS-R1-Qwen-7B (~0.84) have the highest similarity scores, while GPT-OSS-20B has the lowest (~0.64).

* **Rate of Decline:** Qwen3-8B and DS-R1-Qwen-7B show the steepest initial drops (about 0.21 and 0.17 between steps 0 and 5). Claude-3.7-Sonnet and GPT-OSS-20B decline most gradually (about 0.07 and 0.06 over the same span).

* **Stability:** Claude-3.7-Sonnet demonstrates the most stable performance after step 15, maintaining a similarity between 0.60 and 0.65.

* **Anomaly/Recovery:** GPT-OSS-20B is the only model to show a significant recovery trend, increasing from its low of ~0.48 at step 25 to ~0.54 at step 30.

* **Data Range:** The lines cover different step ranges. Qwen3-8B ends near step 18 and Magistral-Small near step 20, while the other three extend further (the key points above run to step 25 for DS-R1-Qwen-7B and to step 30 for Claude-3.7-Sonnet and GPT-OSS-20B).
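The observations above can be cross-checked against the approximate key points listed under Detailed Analysis. The following is a minimal sketch, not part of the original chart: the values are the by-eye readings from this description, the last Qwen3-8B value is placed at step 18 (where its line is described as ending), and the helper names `initial_drop` and `late_range` are hypothetical.

```python
# Approximate key points transcribed from the Detailed Analysis section.
# These are by-eye readings of the chart, not exact data.
KEY_POINTS = {
    "DS-R1-Qwen-7B": {0: 0.84, 5: 0.67, 10: 0.60, 15: 0.57, 20: 0.52, 25: 0.53},
    # Assumption: the final Qwen3-8B reading sits at step 18, where the line ends.
    "Qwen3-8B": {0: 0.86, 5: 0.65, 10: 0.55, 15: 0.57, 18: 0.53},
    "Claude-3.7-Sonnet": {0: 0.75, 5: 0.68, 10: 0.63, 15: 0.61, 20: 0.64, 25: 0.60, 30: 0.61},
    "GPT-OSS-20B": {0: 0.64, 5: 0.58, 10: 0.54, 15: 0.52, 20: 0.53, 25: 0.48, 30: 0.54},
    "Magistral-Small": {0: 0.79, 5: 0.64, 10: 0.65, 15: 0.60, 20: 0.57},
}


def initial_drop(series):
    """Similarity lost between step 0 and step 5, rounded to two decimals."""
    return round(series[0] - series[5], 2)


def late_range(series, start=15):
    """(min, max) similarity over the listed steps at or after `start`."""
    values = [value for step, value in series.items() if step >= start]
    return min(values), max(values)


drops = {name: initial_drop(series) for name, series in KEY_POINTS.items()}

# Print models from steepest to most gradual initial drop.
for name, drop in sorted(drops.items(), key=lambda item: item[1], reverse=True):
    low, high = late_range(KEY_POINTS[name])
    print(f"{name:<18} drop(0->5)={drop:.2f}  range(step>=15)=[{low:.2f}, {high:.2f}]")
```

Because the inputs are rounded readings, differences of about 0.01 between models are within reading error and should not be over-interpreted.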

### Interpretation

This chart likely visualizes how a similarity measure for various large language models (LLMs) declines over the course of longer reasoning chains. The `(GPT5)` annotation on the x-axis is not explained in the chart; it may indicate that GPT5 was used to segment or annotate the reasoning steps. The `Similarity(c_T, t_i)` metric probably measures how similar the model's state or output at step `t_i` is to some reference `c_T`, which the chart does not define.
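The chart does not say how `Similarity(c_T, t_i)` is computed. One common choice for a metric of this kind is cosine similarity between embedding vectors. The sketch below assumes that choice purely for illustration; the toy vectors and the names `cosine_similarity`, `c_T`, and `steps` are hypothetical placeholders, not the chart authors' method.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Hypothetical toy embeddings: a reference vector and three reasoning steps
# that point progressively further away from it.
c_T = [1.0, 0.0, 0.0]
steps = [
    [1.0, 0.0, 0.0],
    [1.0, 1.0, 0.0],
    [1.0, 1.0, 1.0],
]

similarities = [cosine_similarity(c_T, t_i) for t_i in steps]
for index, value in enumerate(similarities):
    print(f"step {index}: similarity = {value:.3f}")
```

In this toy setup the similarity falls as the step vectors move away from the reference, which is the same qualitative shape as the curves in the chart.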

* **Performance Implication:** Models that start with higher similarity (Qwen3-8B, DS-R1-Qwen-7B) may have stronger initial coherence but are more susceptible to "drift" or degradation over extended reasoning. Claude-3.7-Sonnet, while starting lower, appears more robust for longer reasoning chains.

* **Model Comparison:** The data suggests a trade-off between peak initial performance and sustained performance. For tasks requiring very long reasoning, Claude-3.7-Sonnet might be more reliable. The recovery of GPT-OSS-20B is intriguing and could indicate a different architectural approach or a point where the model "resets" or finds a new stable state.

* **Underlying Question:** The chart addresses a core challenge in AI: maintaining fidelity and coherence over long, multi-step processes. The variance between models highlights different capabilities and potential failure modes in complex reasoning tasks.