## Chart: Validation Loss vs. Training FLOPS with Varying Sparsity

### Overview

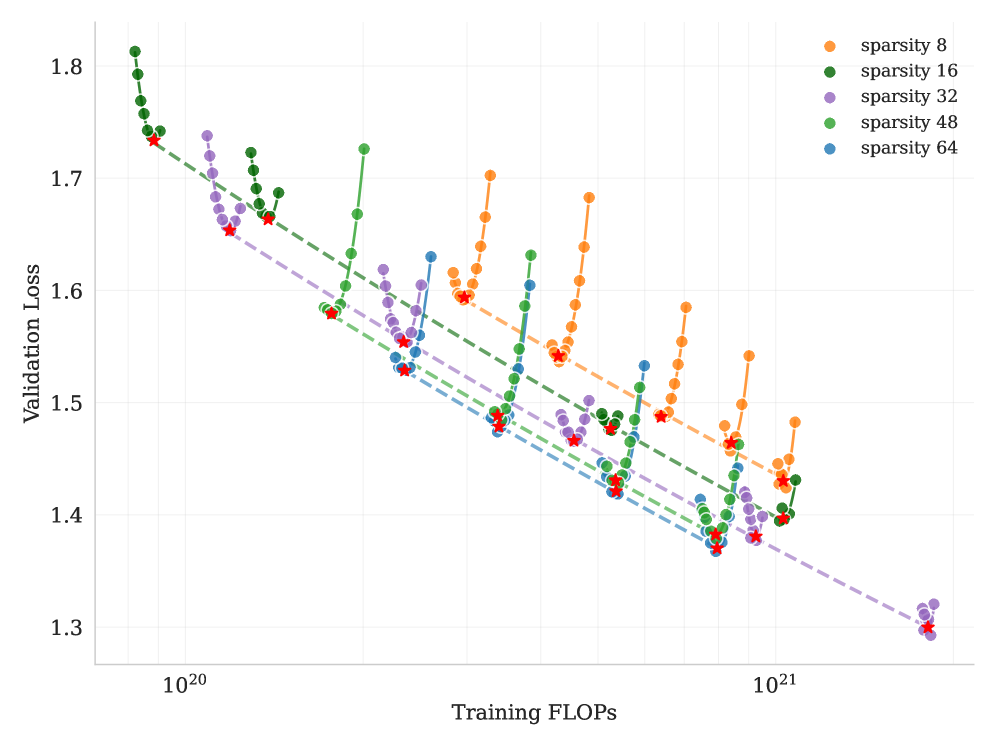

The image is a line chart of Validation Loss (y-axis) against Training FLOPS (x-axis) for five sparsity levels. It appears to track a model's performance as the training compute budget grows. The chart itself does not define sparsity; it may be acting as a regularization technique, but that is an inference rather than something the figure states. The x-axis is on a logarithmic scale.

### Components/Axes

* **X-axis:** Training FLOPS, labeled "Training FLOPS". Scale is logarithmic, ranging approximately from 10<sup>20</sup> to 10<sup>21</sup>.

* **Y-axis:** Validation Loss, labeled "Validation Loss". Scale is linear, ranging approximately from 1.3 to 1.8.

* **Legend:** Located in the top-right corner. Contains the following sparsity levels with corresponding colors:

    * sparsity 8 (Orange)
    * sparsity 16 (Red)
    * sparsity 32 (Purple)
    * sparsity 48 (Green)
    * sparsity 64 (Blue)

* **Data Series:** Five distinct lines, one per sparsity level. Each data point is drawn as a circular marker, and consecutive markers are joined by line segments.
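Because the x-axis is logarithmic, equal distances along it correspond to equal ratios of FLOPS rather than equal differences. The following is a minimal sketch of how a FLOPS value maps to a horizontal position, assuming the axis spans exactly 10<sup>20</sup> to 10<sup>21</sup> as estimated above. The function name `log_axis_fraction` is made up for illustration.

```python
import math


def log_axis_fraction(value, axis_min=1e20, axis_max=1e21):
    """Return how far across a log-scaled axis `value` sits (0 = left, 1 = right).

    The axis limits are the approximate range read from the chart,
    not exact values taken from the figure's source data.
    """
    span = math.log10(axis_max) - math.log10(axis_min)
    return (math.log10(value) - math.log10(axis_min)) / span


# Positions of a few FLOPS values mentioned in this description.
for flops in (1e20, 2e20, 5e20, 8e20, 1e21):
    print(f"{flops:.0e} FLOPS -> {log_axis_fraction(flops):.2f} of the axis width")
```

Under this assumption, 5 x 10<sup>20</sup> FLOPS sits roughly 70% of the way across the axis rather than at the midpoint, which is worth keeping in mind when reading positions off the chart.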

### Detailed Analysis

Each data series is described below with its overall trend and a few key points. All values are approximate readings, limited by the chart's resolution.

* **sparsity 8 (Orange):** The line starts at approximately (10<sup>20</sup>, 1.75) and generally decreases, with fluctuations, reaching around (8 x 10<sup>20</sup>, 1.5) before increasing again to approximately (10<sup>21</sup>, 1.55).

* **sparsity 16 (Red):** Starts at approximately (10<sup>20</sup>, 1.73) and decreases relatively smoothly to around (5 x 10<sup>20</sup>, 1.45), then plateaus and slightly increases to approximately (10<sup>21</sup>, 1.48).

* **sparsity 32 (Purple):** Begins at approximately (10<sup>20</sup>, 1.72) and shows a consistent downward trend, reaching a minimum of around (7 x 10<sup>20</sup>, 1.4) and then increasing slightly to approximately (10<sup>21</sup>, 1.43).

* **sparsity 48 (Green):** Starts at approximately (10<sup>20</sup>, 1.74) and decreases, reaching a minimum around (6 x 10<sup>20</sup>, 1.38), then increases to approximately (10<sup>21</sup>, 1.45).

* **sparsity 64 (Blue):** Starts at approximately (10<sup>20</sup>, 1.71) and decreases steadily to around (8 x 10<sup>20</sup>, 1.35), then continues down to approximately (10<sup>21</sup>, 1.33), its lowest value on the chart.

All lines trend downward initially, indicating that validation loss decreases as training FLOPS increase. Past roughly 5 x 10<sup>20</sup> FLOPS, the lines begin to fluctuate and most of them turn upward, which suggests potential overfitting or diminishing returns from further training. By the readings above, sparsity 64 is the exception: its final value (about 1.33) is also its lowest.
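The readings above can be collected into a small table to check where each curve bottoms out and how much it rises afterwards. This is a sketch that uses only the three approximate points quoted per series, so it is a coarse summary rather than a reconstruction of the underlying data. The names `series` and `summarize` are illustrative.

```python
# Approximate (training FLOPS, validation loss) readings quoted in this section.
# Keys are sparsity levels; each list is ordered by increasing FLOPS.
series = {
    8:  [(1e20, 1.75), (8e20, 1.50), (1e21, 1.55)],
    16: [(1e20, 1.73), (5e20, 1.45), (1e21, 1.48)],
    32: [(1e20, 1.72), (7e20, 1.40), (1e21, 1.43)],
    48: [(1e20, 1.74), (6e20, 1.38), (1e21, 1.45)],
    64: [(1e20, 1.71), (8e20, 1.35), (1e21, 1.33)],
}


def summarize(points):
    """Return the lowest reading and how far the final reading sits above it."""
    min_flops, min_loss = min(points, key=lambda p: p[1])
    final_loss = points[-1][1]
    return {
        "min_flops": min_flops,
        "min_loss": min_loss,
        "rebound": round(final_loss - min_loss, 2),
    }


summary = {level: summarize(points) for level, points in series.items()}

for level, info in summary.items():
    print(
        f"sparsity {level}: min {info['min_loss']:.2f} at {info['min_flops']:.0e} FLOPS, "
        f"rebound {info['rebound']:.2f}"
    )
```

A rebound of zero means the last reading is also the lowest one for that series.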

### Key Observations

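* Higher sparsity levels generally reach lower validation loss: sparsity 64 has the lowest minimum (about 1.33), followed by sparsity 48 (about 1.38). The ordering is not strict at the right edge, where sparsity 48 (about 1.45) ends slightly above sparsity 32 (about 1.43).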

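* The lines start close together and spread apart towards the right side of the chart: the approximate readings span about 1.71 to 1.75 at 10<sup>20</sup> FLOPS but about 1.33 to 1.55 at 10<sup>21</sup> FLOPS. By these readings, the differences between sparsity levels grow rather than shrink as training progresses.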

* The orange line (sparsity 8) shows the most fluctuation, suggesting it is the least stable configuration.

* The lowest validation loss is achieved by sparsity 64, reaching approximately 1.33 at 10<sup>21</sup> FLOPS.
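These observations can be sanity-checked against the approximate start and end readings from the Detailed Analysis. The sketch below compares the gap between the best and worst curve at both ends of the x-axis and ranks the sparsity levels by final loss. It relies only on the estimated values quoted in this description, and the variable names are illustrative.

```python
# Approximate validation loss per sparsity level at the two ends of the x-axis.
start = {8: 1.75, 16: 1.73, 32: 1.72, 48: 1.74, 64: 1.71}  # at ~1e20 FLOPS
end = {8: 1.55, 16: 1.48, 32: 1.43, 48: 1.45, 64: 1.33}    # at ~1e21 FLOPS


def spread(losses):
    """Gap between the highest and lowest loss across sparsity levels."""
    return max(losses.values()) - min(losses.values())


# Sparsity levels ordered from lowest to highest final loss.
ranking = sorted(end, key=end.get)

print(f"spread at 1e20 FLOPS: {spread(start):.2f}")
print(f"spread at 1e21 FLOPS: {spread(end):.2f}")
print("final ranking (best first):", ranking)
```

A larger spread at the right edge than at the left would indicate that the curves separate over the course of training.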

### Interpretation

The chart shows the effect of sparsity on validation loss during training. Within the range shown (sparsity 8 to 64), higher sparsity is generally associated with lower validation loss, and the chart gives no indication of a level beyond which this stops holding. The initial decrease in validation loss with increasing FLOPS indicates that the model is learning and generalizing. The subsequent fluctuations and increases suggest that the model may be starting to overfit the training data, or that the benefits of further training are diminishing.

The approximate readings do not support the idea that the impact of sparsity fades as the model is trained longer. The gap between the best and worst curve grows from about 0.04 at 10<sup>20</sup> FLOPS to about 0.22 at 10<sup>21</sup> FLOPS, so the choice of sparsity level appears to matter more, not less, at larger training budgets. Given the limited resolution of the chart, this reading is tentative.

Sparsity 64 has the lowest reading at both ends of the chart, which suggests that a higher degree of sparsity is beneficial for this particular model and dataset. The optimal sparsity level may still vary with the application and data characteristics, and the chart does not show levels above 64. Overall, the chart illustrates the trade-off between sparsity, training cost (FLOPS), and model performance (validation loss).