## Diagram: Semantic Data Integration Pipeline

### Overview

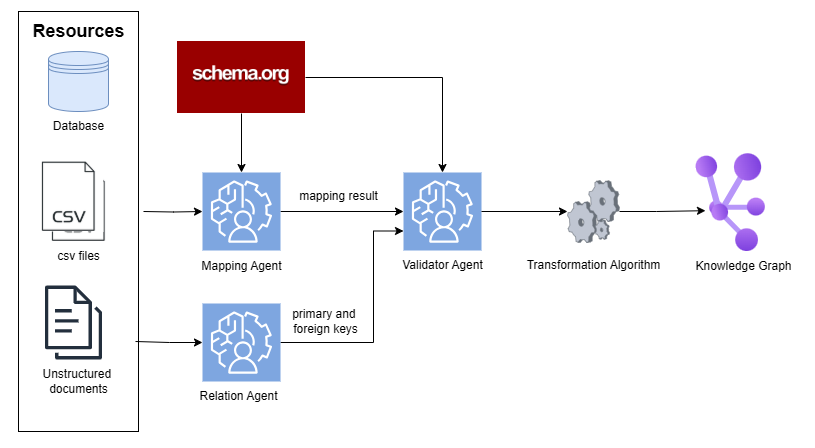

The image displays a system architecture diagram illustrating a multi-agent pipeline for transforming heterogeneous data sources into a structured knowledge graph. The process flows from left to right, starting with raw data resources, passing through specialized AI agents for mapping and validation, and culminating in a unified knowledge graph output. The diagram uses icons, labeled boxes, and directed arrows to represent components and data flow.

### Components

The diagram is organized into three primary regions:

1. **Left Region - Resources:** A large bounding box labeled "Resources" contains three data source types, each with an icon and label:

    * **Database:** Represented by a blue cylinder icon.

    * **csv files:** Represented by a document icon with "CSV" text.

    * **Unstructured documents:** Represented by a document icon with lines of text.

2. **Central Processing Region - Agents & Schema:** This area contains the core processing components:

    * **schema.org:** A red rectangular box at the top, acting as an external schema reference.

    * **Mapping Agent:** A blue square icon with a brain/gear symbol. It receives input from "csv files" and "schema.org".

    * **Relation Agent:** A blue square icon with a brain/gear symbol. It receives input from "Unstructured documents".

    * **Validator Agent:** A blue square icon with a brain/gear symbol. It receives inputs from the "Mapping Agent" and "Relation Agent", and also from "schema.org".

    * **Transformation Algorithm:** Represented by a gray gear icon.

3. **Right Region - Output:**

    * **Knowledge Graph:** Represented by a purple network graph icon with interconnected nodes.

**Data Flow & Labels (Arrows):**

* An arrow from "csv files" to "Mapping Agent".

* An arrow from "Unstructured documents" to "Relation Agent".

* An arrow from "schema.org" to "Mapping Agent".

* An arrow from "schema.org" to "Validator Agent".

* An arrow from "Mapping Agent" to "Validator Agent", labeled **"mapping result"**.

* An arrow from "Relation Agent" to "Validator Agent", labeled **"primary and foreign keys"**.

* An arrow from "Validator Agent" to "Transformation Algorithm".

* An arrow from "Transformation Algorithm" to "Knowledge Graph".
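
The arrows listed above can be written down as a small directed graph, which makes it easy to check which inputs each component has and in what order the stages could run. This is a minimal sketch, not part of the diagram: the names `EDGES`, `predecessors`, and `execution_order` are hypothetical, and the node names are copied from the diagram labels.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Directed edges as listed above: (source, target, arrow label or None).
EDGES = [
    ("csv files", "Mapping Agent", None),
    ("Unstructured documents", "Relation Agent", None),
    ("schema.org", "Mapping Agent", None),
    ("schema.org", "Validator Agent", None),
    ("Mapping Agent", "Validator Agent", "mapping result"),
    ("Relation Agent", "Validator Agent", "primary and foreign keys"),
    ("Validator Agent", "Transformation Algorithm", None),
    ("Transformation Algorithm", "Knowledge Graph", None),
]


def predecessors(edges):
    """Map each node to the set of nodes with an arrow pointing into it."""
    preds = {}
    for source, target, _label in edges:
        preds.setdefault(source, set())
        preds.setdefault(target, set()).add(source)
    return preds


def execution_order(edges):
    """Return one valid processing order; raises CycleError if the graph has a cycle."""
    return list(TopologicalSorter(predecessors(edges)).static_order())


if __name__ == "__main__":
    print(execution_order(EDGES))
    print(sorted(predecessors(EDGES)["Validator Agent"]))
```

Because only the listed arrows are encoded, the "Database" resource does not appear in the graph at all: the list contains no arrow that starts or ends at it.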

### Detailed Analysis

The pipeline processes two distinct data streams that converge for validation:

1. **Structured Data Stream:** CSV files are fed into the **Mapping Agent**. This agent also references the **schema.org** vocabulary. Its output, termed the **"mapping result"**, is sent to the Validator Agent.

2. **Unstructured Data Stream:** Unstructured documents are processed by the **Relation Agent**. This agent extracts relational information, specifically **"primary and foreign keys"**, which are sent to the Validator Agent.
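
The diagram does not say what a "mapping result" looks like. One plausible reading is a table that pairs each CSV column with a `schema.org` property. The sketch below illustrates that reading only: the lookup table stands in for whatever the Mapping Agent actually does, the column names and the `map_columns` helper are invented, and the property names (`name`, `email`, `birthDate`) are real properties of the schema.org `Person` type.

```python
import csv
import io

# Hypothetical lookup standing in for the Mapping Agent's decisions.
# Keys are invented CSV column names; values are schema.org Person properties.
COLUMN_TO_PROPERTY = {
    "full_name": "name",
    "email_address": "email",
    "date_of_birth": "birthDate",
}


def map_columns(csv_text, lookup):
    """Pair every column in the CSV header with a schema.org property, or None if unmapped."""
    header = next(csv.reader(io.StringIO(csv_text)))
    return {column: lookup.get(column) for column in header}


# Invented sample data; "shoe_size" has no entry in the lookup on purpose.
SAMPLE = "full_name,email_address,shoe_size\nAda Lovelace,ada@example.org,38\n"

if __name__ == "__main__":
    print(map_columns(SAMPLE, COLUMN_TO_PROPERTY))
```

Columns without a match are kept with a `None` value rather than dropped, so a later validation step can see what was left unmapped.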

The **Validator Agent** is the central integration point. It receives three inputs:

* The mapping result for structured data.

* The extracted keys from unstructured data.

* Direct guidance from the **schema.org** standard.
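
How the Validator Agent combines these three inputs is not shown in the diagram. As a rough sketch under assumed data shapes, a validation step could check that every mapped property exists in the vocabulary and that every foreign key points at a declared primary key. The `validate` function, the shape of `keys`, and the small hard-coded `ALLOWED_PROPERTIES` set are all hypothetical stand-ins.

```python
# A handful of real schema.org property names, hard-coded as a stand-in
# for the full vocabulary the validator would consult.
ALLOWED_PROPERTIES = {"name", "email", "birthDate", "worksFor"}


def validate(mapping_result, keys, allowed=ALLOWED_PROPERTIES):
    """Return a list of problems; an empty list means the three inputs agree.

    mapping_result: dict of column name -> schema.org property name (assumed shape)
    keys: {"primary": [key names], "foreign": {column: referenced primary key}} (assumed shape)
    """
    problems = []
    for column, prop in mapping_result.items():
        if prop not in allowed:
            problems.append(f"column {column!r} maps to unknown property {prop!r}")
    primary = set(keys["primary"])
    for column, target in keys["foreign"].items():
        if target not in primary:
            problems.append(f"foreign key {column!r} references undeclared key {target!r}")
    return problems


if __name__ == "__main__":
    mapping = {"full_name": "name", "employer": "worksFor"}
    keys = {"primary": ["org.id"], "foreign": {"person.employer_id": "org.id"}}
    print(validate(mapping, keys))
```

Returning a list of problems instead of raising on the first one lets a caller report every inconsistency in a single pass.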

After validation, the consolidated data passes through a **Transformation Algorithm** (gear icon), which presumably converts it into the final graph structure. The end product is a **Knowledge Graph**, visualized as a network of purple nodes and connecting lines.
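
The diagram gives no detail about the Transformation Algorithm. One common way to turn validated rows into graph data is to emit (subject, predicate, object) triples, and the sketch below shows that idea only. The `rows_to_triples` function, the `base_iri` value, and the sample row are assumptions made for illustration, not something taken from the diagram.

```python
def rows_to_triples(rows, mapping_result, id_column, base_iri="https://example.org/person/"):
    """Turn rows into (subject, predicate, object) triples using a column -> property mapping."""
    triples = []
    for row in rows:
        subject = base_iri + str(row[id_column])
        for column, prop in mapping_result.items():
            value = row.get(column)
            if prop is None or value in (None, ""):
                continue  # skip unmapped columns and empty cells
            triples.append((subject, "https://schema.org/" + prop, value))
    return triples


if __name__ == "__main__":
    rows = [{"id": 1, "full_name": "Ada Lovelace", "email_address": ""}]
    mapping = {"full_name": "name", "email_address": "email"}
    for triple in rows_to_triples(rows, mapping, id_column="id"):
        print(triple)
```

Each triple corresponds to one edge in the resulting graph, with the row identifier as the source node and the cell value as the target.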

### Key Observations

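* **Dual-Input Architecture:** The pipeline handles structured (CSV) and unstructured sources in parallel and merges them at the Validator Agent. The Database resource sits in the "Resources" box, but none of the listed arrows originates from it, so its route into the pipeline is not shown.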

* **Centralized Schema Governance:** The `schema.org` vocabulary is applied at two points, during initial mapping and again during validation, so both steps work against the same reference.

* **Specialized Agent Roles:** The diagram assigns distinct functions to different agents: "Mapping" for schema alignment, "Relation" for extracting primary and foreign keys, and "Validator" for consistency checking.

* **Explicit Data Contracts:** The labels on the arrows ("mapping result", "primary and foreign keys") define the specific outputs expected from each agent, suggesting a formalized interface between components.

### Interpretation

This diagram represents a **semantic data integration pipeline**. Its purpose is to unify disparate data sources into a single, queryable knowledge graph by leveraging a common semantic vocabulary (`schema.org`).

The process can be read, by analogy, as a **Peircean investigative approach**:

1. **Abduction (Hypothesis):** The `schema.org` box provides the initial framework or hypothesis about how data should be categorized and related.

2. **Deduction (Prediction):** The Mapping and Relation Agents apply this framework to specific data, predicting what the structured output ("mapping result") and relational structure ("primary and foreign keys") should be.

3. **Induction (Testing):** The Validator Agent tests these predictions against each other and the original schema, confirming or refining the integrated data model before final transformation.

The separation of the **Relation Agent** for unstructured documents is particularly notable. It suggests the system doesn't just convert text to structured fields but actively identifies and extracts the underlying relational schema (keys) from narrative or document-based data, which is a non-trivial task. The final **Knowledge Graph** output implies the end goal is not just a database table, but a rich, interconnected web of entities and relationships suitable for advanced reasoning, search, or AI applications. The pipeline's value lies in automating the labor-intensive process of data harmonization and semantic enrichment.