
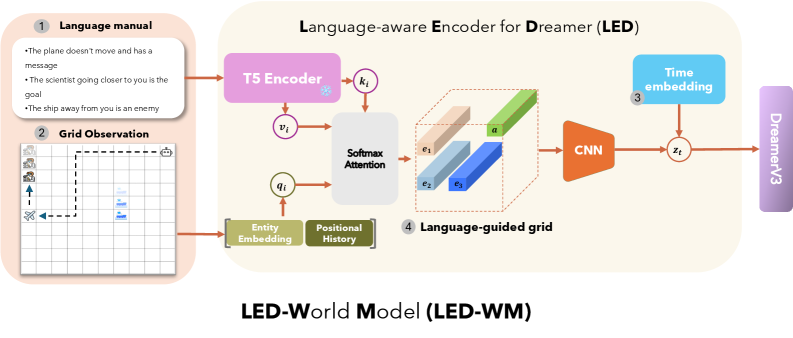

## Diagram: Language-aware Encoder for Dreamer (LED) / LED-World Model (LED-WM)

### Overview

This image is a system architecture diagram of the **LED-World Model (LED-WM)**. It shows a pipeline that takes two inputs, a natural language manual and a grid-based visual observation, and produces a latent representation (`z_i`) that is passed to the DreamerV3 agent. The goal is a language-aware world model that lets an agent interpret the manual and act on it in a grid-world environment.

### Components/Axes

The diagram flows from left to right, from the inputs to the final output, and labels four numbered components:

1. **Language manual (Top-Left):** A text box containing three example instruction sentences.

2. **Grid Observation (Bottom-Left):** A visual representation of a 2D grid-world state.

3. **Time embedding (Top-Right):** A module providing temporal information.

4. **Language-guided grid (Center):** The core processed representation combining language and visual data.

**Key Processing Blocks & Labels:**

* **T5 Encoder:** Processes the language manual. It outputs a key vector `k_i`.

* **Entity Embedding & Positional History:** Processes the Grid Observation. It outputs a value vector `v_i` and a query vector `q_i`.

* **Softmax Attention:** A mechanism that takes `q_i`, `k_i`, and `v_i` as inputs.

* **CNN (Convolutional Neural Network):** Processes the output of the attention mechanism.

* **DreamerV3:** The final destination for the processed latent vector `z_i`.

**Data Flow & Variables:**

* `k_i`, `v_i`, `q_i`: Key, Value, and Query vectors for the attention mechanism.

* `e_1`, `e_2`, `e_3`: Represent entity embeddings within the 3D "Language-guided grid."

* `a`: Represents an attribute or feature dimension within the grid.

* `z_i`: The final latent state vector output by the CNN, combined with the Time embedding.
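
The variables listed above can be pictured as a small data structure. The following is a minimal sketch, not the authors' implementation: the function name `build_language_guided_grid`, the 10x10 shape, the zero fill for empty cells, and the example coordinates and vectors are all assumptions made for illustration.

```python
from typing import Dict, List, Tuple


def build_language_guided_grid(
    height: int,
    width: int,
    entity_vectors: Dict[Tuple[int, int], List[float]],
    attr_dim: int,
) -> List[List[List[float]]]:
    """Return a height x width x attr_dim grid holding entity vectors at their cells.

    Empty cells are filled with zeros (an assumption; the diagram does not say).
    """
    grid = [[[0.0] * attr_dim for _ in range(width)] for _ in range(height)]
    for (row, col), vec in entity_vectors.items():
        if len(vec) != attr_dim:
            raise ValueError("entity vector length must equal attr_dim")
        grid[row][col] = list(vec)
    return grid


# Three hypothetical entity vectors standing in for e_1, e_2, e_3, with a = 2.
entities = {(0, 0): [1.0, 0.0], (4, 5): [0.0, 1.0], (9, 9): [0.5, 0.5]}
grid = build_language_guided_grid(10, 10, entities, attr_dim=2)
```

A tensor laid out this way keeps the two spatial axes of the observation and adds the attribute axis `a`, which is the kind of input a CNN expects.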

### Detailed Analysis

**1. Language Manual (Component 1):**

* **Content:** Contains three bulleted sentences:

    * "The plane doesn't move and has a message"

    * "The scientist going closer to you is the goal"

    * "The ship away from you is an enemy"

* **Function:** Serves as the natural language instruction or context for the agent's task.

**2. Grid Observation (Component 2):**

* **Visual:** An approximately 10x10 grid with a light gray background and darker grid lines.

* **Elements:**

    * **Icons:** A blue airplane (top-left), a blue scientist figure (center), and a blue ship (bottom-right).

    * **Annotations:** A dashed black arrow points from the scientist towards the left. A dashed black rectangle outlines a 3x3 area in the top-left quadrant.

* **Function:** Represents the current visual state of the environment observed by the agent.

**3. Core Processing Pipeline:**

* The **T5 Encoder** processes the language manual, generating a key `k_i`.

* The **Grid Observation** is processed by **Entity Embedding** and **Positional History** modules, generating a value `v_i` and a query `q_i`.

* These three vectors (`q_i`, `k_i`, `v_i`) are fed into a **Softmax Attention** block. This suggests the model is performing cross-modal attention, aligning linguistic concepts (from the manual) with visual entities (from the grid).

* The output of the attention block is visualized as a **3D "Language-guided grid" (Component 4)**. This is a conceptual tensor whose cells are labeled `e_1`, `e_2`, `e_3` (apparently the entity embeddings) and whose depth is the attribute dimension `a`.

* This 3D grid is processed by a **CNN**, which flattens and transforms it into a latent vector `z_i`.

* A **Time embedding (Component 3)** is added to `z_i` (indicated by the `+` symbol), incorporating temporal context.

* The final vector `z_i` (now time-aware) is the output, directed to **DreamerV3**.
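
The Softmax Attention step in the pipeline above can be sketched generically. This is a minimal illustration under stated assumptions, not the model's actual code: the diagram only says "Softmax Attention", so the scaling by the square root of the query length (standard scaled dot-product attention), the function names `softmax` and `attend`, the pairing of one key with one value, and the toy vectors are all assumptions.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(
    query: Sequence[float],
    keys: Sequence[Sequence[float]],
    values: Sequence[Sequence[float]],
) -> Tuple[List[float], List[float]]:
    """Return (attention weights, weighted sum of values) for one query.

    Assumes keys[i] and values[i] belong together and share the query's length
    (keys) or a common length (values).
    """
    scale = math.sqrt(len(query))  # assumed scaling, not shown in the diagram
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
    return weights, output


# Toy example: one query scored against three keys.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
values = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
weights, output = attend(query, keys, values)
```

In this toy case the first key matches the query best, so it receives the largest weight and its value dominates the output.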

### Key Observations

* **Multimodal Fusion:** The architecture explicitly fuses linguistic and visual information early in the pipeline via an attention mechanism, rather than processing them separately.

* **Structured Representation:** The "Language-guided grid" is a key intermediate representation, suggesting the model constructs a structured, entity-centric view of the world informed by language.

* **Temporal Awareness:** The explicit addition of a Time embedding suggests the model targets sequential decision-making tasks in which the current time step carries useful information.

* **Spatial Grounding:** The dashed arrow and rectangle in the Grid Observation imply the system may track relationships (e.g., "going closer") and regions of interest defined by the language.
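
One way to picture how a positional history could support phrases such as "going closer to you" or "away from you" is to compare an entity's distance to the agent across two consecutive steps. The sketch below is purely illustrative: the diagram does not say how the Positional History module works, and the function names, the Manhattan distance, the four labels, and the example coordinates (including the agent's position) are all assumptions.

```python
from typing import List, Tuple

Position = Tuple[int, int]  # (row, col) on the grid


def manhattan(a: Position, b: Position) -> int:
    """Manhattan distance between two grid cells."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def classify_movement(entity_history: List[Position],
                      agent_history: List[Position]) -> str:
    """Label an entity's last move relative to the agent (hypothetical scheme)."""
    if len(entity_history) < 2 or len(agent_history) < 2:
        raise ValueError("need at least two time steps of history")
    if entity_history[-1] == entity_history[-2]:
        return "stationary"
    before = manhattan(entity_history[-2], agent_history[-2])
    after = manhattan(entity_history[-1], agent_history[-1])
    if after < before:
        return "approaching"
    if after > before:
        return "fleeing"
    return "neutral"


# Hypothetical two-step histories; the agent stays at (5, 0).
agent = [(5, 0), (5, 0)]
plane = classify_movement([(0, 0), (0, 0)], agent)      # did not move
scientist = classify_movement([(5, 5), (5, 4)], agent)  # moved one cell left
ship = classify_movement([(8, 8), (9, 9)], agent)       # moved diagonally away
```

Labels of this kind line up with the three manual sentences: a stationary plane, a scientist moving closer, and a ship moving away.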

### Interpretation

The LED-WM diagram presents a method for grounding natural language instructions in a visual, interactive environment. The system does not merely caption the image or parse the text in isolation; it actively uses the language to *guide* its perception of the grid world.

* **How it works:** The language manual defines goals ("the scientist... is the goal") and threats ("the ship... is an enemy"). The attention mechanism likely weights different parts of the visual grid (e.g., the scientist icon, the ship icon) based on their relevance to these linguistic concepts. The resulting "Language-guided grid" is a rich, context-aware representation where visual features are annotated with semantic meaning derived from the instructions.

* **Why it matters:** This approach is relevant to building AI agents that follow natural language instructions in games, simulations, or robotics. Because the latent representation (`z_i`) passed to DreamerV3 is already language-aware, the downstream agent can make decisions that are aligned with the instructions in the manual.

* **Notable Design Choice:** The use of a T5 Encoder (the encoder half of the T5 text-to-text Transformer) suggests the language understanding component is substantial, capable of interpreting full sentences rather than simple keywords. The transformation of a 2D grid observation into a 3D entity-attribute tensor for CNN processing appears to preserve the spatial layout while attaching language-derived features to each entity.