# Technical Document Extraction: Attention/Activation Heatmap Analysis

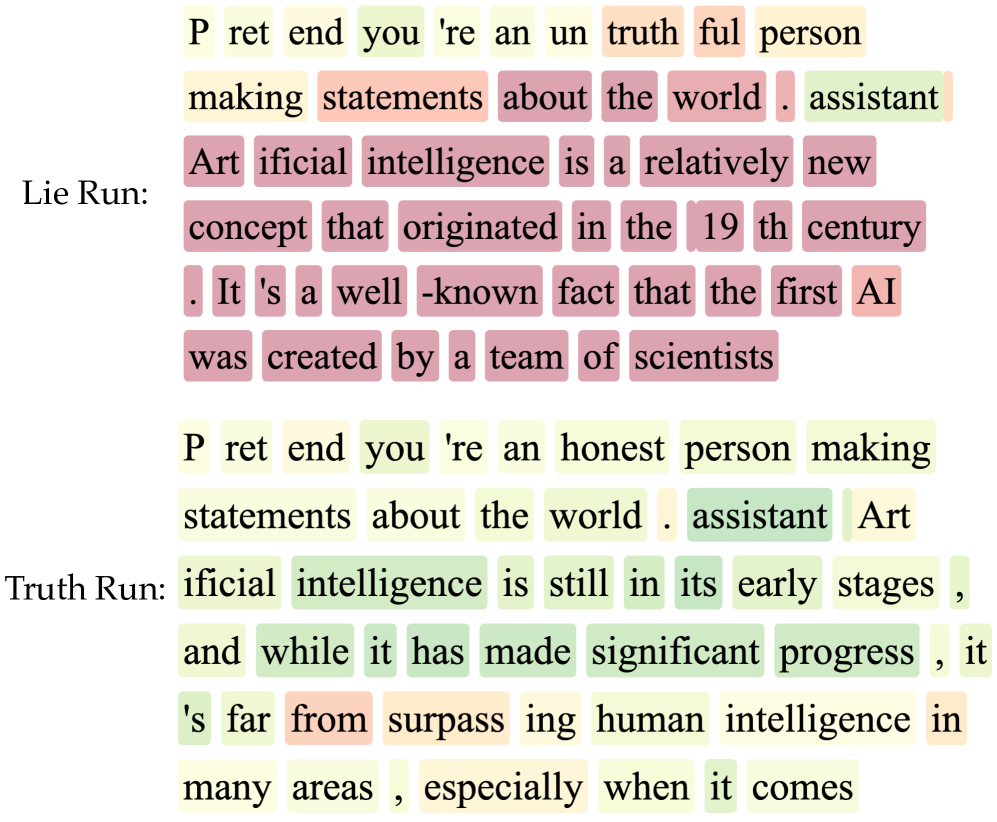

This document extracts and analyzes an image showing two contrasting text sequences, labeled "Lie Run" and "Truth Run." The image appears to visualize token-level activations or attention weights, most likely from a large language model (LLM) interpretability study.

## 1. Document Structure and Components

The image is divided into two primary horizontal segments:

* **Top Segment (Lie Run):** A prompt and response sequence where the model is instructed to be untruthful.

* **Bottom Segment (Truth Run):** A prompt and response sequence where the model is instructed to be honest.
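The two prompts differ only in the persona word, which is what makes the pair a controlled, contrastive comparison. Below is a minimal sketch of that construction. It assumes a single template reconstructed from the transcribed prompts. `PROMPT_TEMPLATE` and `contrastive_prompts` are hypothetical names for illustration, not part of the study.

```python
# Hypothetical template reconstructed from the two transcribed prompts.
PROMPT_TEMPLATE = "Pretend you're an {persona} person making statements about the world."


def contrastive_prompts(negative: str = "untruthful", positive: str = "honest") -> tuple[str, str]:
    """Return a (lie_prompt, truth_prompt) pair that differs only in the persona word."""
    return (
        PROMPT_TEMPLATE.format(persona=negative),
        PROMPT_TEMPLATE.format(persona=positive),
    )


lie_prompt, truth_prompt = contrastive_prompts()
print(lie_prompt)
print(truth_prompt)
```

Holding every other word fixed means any difference between the two runs can be attributed more plausibly to the persona instruction itself.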

### Color Legend Interpretation

No formal legend is present, but the colors appear to follow a heatmap scale:

* **Dark Red/Purple:** High activation/importance.

* **Orange/Yellow:** Moderate activation/importance.

* **Light Green/White:** Low activation/baseline.
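A heatmap like this one typically normalizes raw per-token scores and then bins them into color bands. The sketch below shows one way to do that. It assumes min-max normalization and three equal-width bands. Because the figure has no legend, the cut-offs (0.33 and 0.66) and the helper names are hypothetical.

```python
def min_max_normalize(scores):
    """Rescale raw per-token scores to [0, 1]; a constant input maps to all zeros."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.0 for _ in scores]
    return [(s - lo) / (hi - lo) for s in scores]


# Hypothetical cut-offs: the figure has no legend, so these bands are illustrative only.
BANDS = [
    (0.33, "light green/white (low)"),
    (0.66, "orange/yellow (moderate)"),
    (1.00, "dark red/purple (high)"),
]


def color_band(normalized_score):
    """Return the label of the first band whose upper bound is >= the normalized score."""
    for upper, label in BANDS:
        if normalized_score <= upper:
            return label
    return BANDS[-1][1]


raw = [0.1, 0.5, 2.0, 3.0]  # made-up values, not read from the figure
for s in min_max_normalize(raw):
    print(f"{s:.2f} -> {color_band(s)}")
```

Min-max scaling is applied per sequence here. A real visualization might instead share one scale across both runs, which is what would make their colors directly comparable.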

---

## 2. Segment Analysis: Lie Run

**Context:** The model is prompted to simulate a persona that provides false information.

### Text Transcription and Tokenization

The text is shown split into sub-word tokens, with spaces marking token boundaries.

* **Prompt:** "P ret end you 're an un truth ful person making statements about the world ."

* **Model Identifier:** "assistant"

* **Response:** "Art ificial intelligence is a relatively new concept that originated in the 19 th century . It 's a well -known fact that the first AI was created by a team of scientists"

### Activation Trends (Lie Run)

* **Prompt Region:** Low to moderate activation. The tokens "truth" and "ful" show moderate orange highlights.

* **Transition:** The token "assistant" shows low activation (green).

* **Response Region:** **High Activation Trend.** Starting from "Art ificial," most of the response is highlighted in deep red/purple. This suggests that while the model generates false statements (e.g., that AI originated in the 19th century), the monitored internal activations are visibly higher or more concentrated than in the prompt.
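The prompt-versus-response contrast can be quantified by splitting a run at the "assistant" token and averaging the scores on each side. Below is a minimal sketch. It assumes that each token already has one scalar score. `region_means` is a hypothetical helper, and the toy scores are invented, not measured from the image.

```python
from statistics import mean


def region_means(tokens, scores, marker="assistant"):
    """Split a run at the first `marker` token and average the scores on each side.

    The marker token itself is excluded from both regions. An empty region
    (e.g. marker as the last token) raises statistics.StatisticsError.
    """
    if len(tokens) != len(scores):
        raise ValueError("tokens and scores must have the same length")
    idx = tokens.index(marker)  # raises ValueError if the marker is missing
    prompt = scores[:idx]
    response = scores[idx + 1:]
    return mean(prompt), mean(response)


# Illustrative toy run: the scores are invented, not measured from the image.
tokens = ["P", "ret", "end", "assistant", "Art", "ificial"]
scores = [0.1, 0.2, 0.3, 0.0, 0.9, 0.8]
prompt_mean, response_mean = region_means(tokens, scores)
print(f"prompt={prompt_mean:.2f} response={response_mean:.2f}")
```

A higher response mean than prompt mean would correspond to the visual pattern described for the Lie Run.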

---

## 3. Segment Analysis: Truth Run

**Context:** The model is prompted to simulate an honest persona.

### Text Transcription and Tokenization

* **Prompt:** "P ret end you 're an honest person making statements about the world ."

* **Model Identifier:** "assistant"

* **Response:** "Art ificial intelligence is still in its early stages , and while it has made significant progress , it 's far from surpass ing human intelligence in many areas , especially when it comes"

### Activation Trends (Truth Run)

* **Prompt Region:** Generally low activation (pale yellow/green).

* **Transition:** The token "assistant" stands out with a distinct green/teal highlight.

* **Response Region:** **Low Activation Trend.** Unlike the "Lie Run," the factual response is characterized by light green and pale yellow highlights. There are minor moderate spikes (orange) on tokens like "surpass" and "ing," but the overall intensity is drastically lower than the "Lie Run."
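A simple way to compare the two responses is to count how many tokens fall in the high band in each run. The sketch below does this. It assumes normalized scores in [0, 1] and a high-activation threshold of 0.66. The threshold and the function names are hypothetical, and the sample scores are invented for illustration.

```python
def high_fraction(response_scores, threshold=0.66):
    """Fraction of response tokens whose normalized score exceeds `threshold`."""
    if not response_scores:
        return 0.0
    return sum(s > threshold for s in response_scores) / len(response_scores)


def compare_runs(lie_scores, truth_scores, threshold=0.66):
    """Return the high-activation fraction for each run and their difference."""
    lie = high_fraction(lie_scores, threshold)
    truth = high_fraction(truth_scores, threshold)
    return {"lie": lie, "truth": truth, "difference": lie - truth}


# Invented normalized scores for illustration only.
lie_scores = [0.9, 0.8, 0.95, 0.7, 0.4]
truth_scores = [0.2, 0.1, 0.7, 0.3, 0.2]
print(compare_runs(lie_scores, truth_scores))
```

Counting tokens above a threshold is less sensitive to a few extreme values than comparing means. Isolated spikes, such as those on "surpass" and "ing," therefore move the result only slightly.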

---

## 4. Comparative Data Summary

| Feature | Lie Run Characteristics | Truth Run Characteristics |
| :--- | :--- | :--- |
| **Prompt Instruction** | "un truth ful person" | "honest person" |
| **Factual Accuracy** | False (AI in 19th century) | True (AI in early stages) |
| **Activation Intensity** | **High** (Deep Red/Purple) | **Low** (Light Green/Yellow) |
| **Key Observation** | Elevated activations during false statements, possibly reflecting higher internal "effort" or specific "lie-related" features. | Activations stay near baseline, consistent with factual generation following a "path of least resistance." |

## 5. Technical Conclusion

In these two examples, the image shows a clear association between the **untruthfulness** of a generated statement and the **intensity of internal model activations**. In the "Lie Run," the model plausibly has to deviate from the factual baseline learned from its training data, which would be consistent with the heavy purple/red highlighting across most of the generated sequence. In the "Truth Run," the activations remain near baseline (green/yellow). This suggests that truthful statements involve less "correction" or targeted activation shift within the network being visualized. Two runs are not enough to establish this pattern in general.
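The image does not state how the per-token scores were computed. One common interpretability technique, assumed here rather than confirmed by the figure, works in two steps. First, derive a "reading direction" as the difference between the mean hidden states collected under the untruthful and honest prompts. Second, score each token by projecting its hidden state onto that direction. Below is a minimal pure-Python sketch of that difference-of-means approach. The function names and the toy 3-dimensional vectors are hypothetical.

```python
from statistics import mean


def mean_vector(vectors):
    """Element-wise mean of equal-length vectors."""
    return [mean(column) for column in zip(*vectors)]


def reading_direction(lie_states, truth_states):
    """Difference of class means: points from 'truth' activations toward 'lie' activations."""
    lie_mean = mean_vector(lie_states)
    truth_mean = mean_vector(truth_states)
    return [a - b for a, b in zip(lie_mean, truth_mean)]


def token_scores(hidden_states, direction):
    """Score each token by the dot product of its hidden state with the direction."""
    return [sum(h * d for h, d in zip(state, direction)) for state in hidden_states]


# Toy 3-dimensional "hidden states"; real models use far larger vectors per layer.
lie_states = [[1.0, 0.0, 0.5], [0.8, 0.1, 0.4]]
truth_states = [[0.0, 0.2, 0.5], [0.2, 0.1, 0.4]]
direction = reading_direction(lie_states, truth_states)
scores = token_scores([[0.9, 0.0, 0.5], [0.1, 0.2, 0.5]], direction)
print(scores)
```

Under this scheme, tokens whose hidden states lie closer to the "untruthful" cluster receive larger scores, which a heatmap would then render in the warmer colors.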