
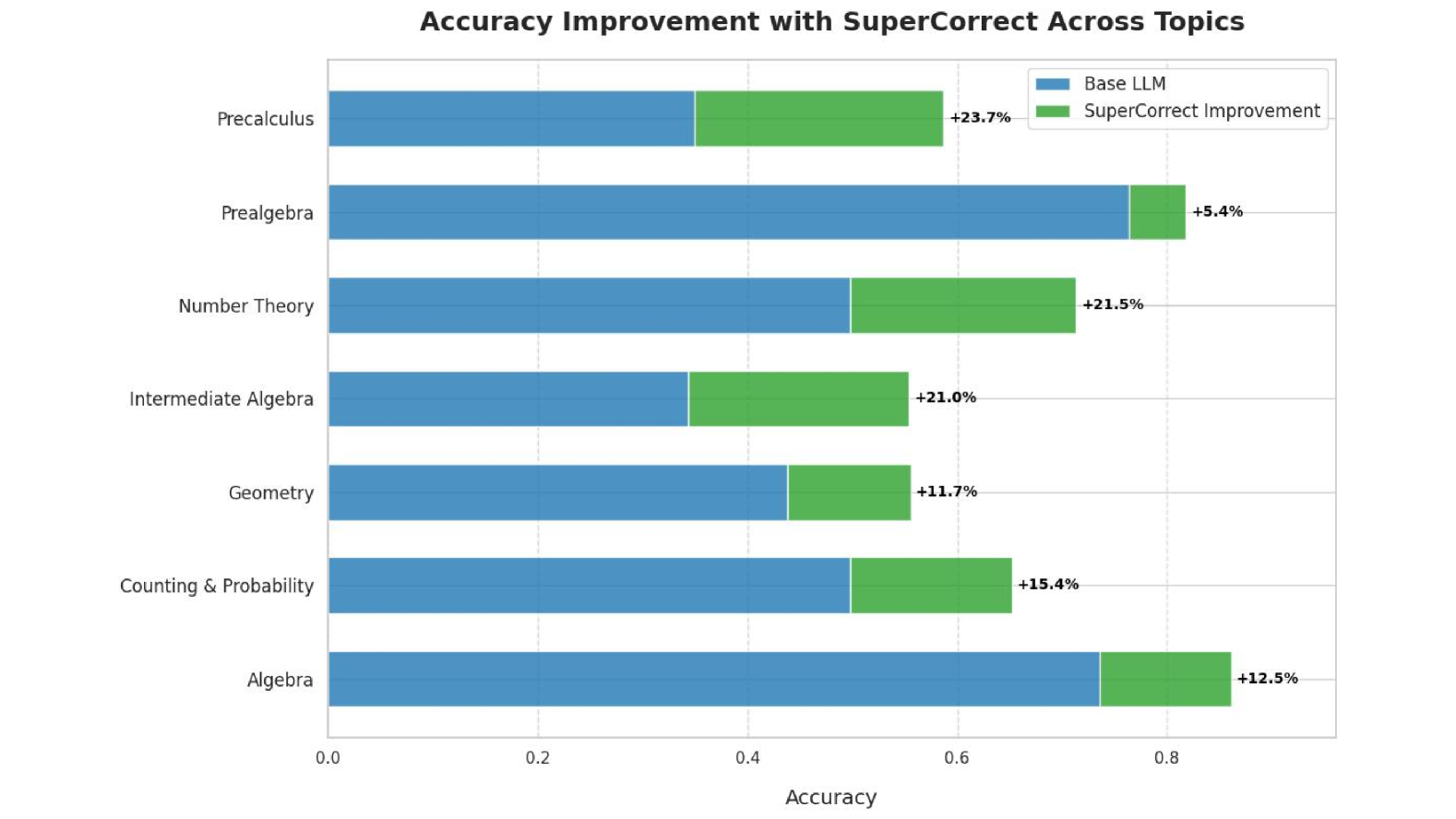

## Horizontal Stacked Bar Chart: Accuracy Improvement with SuperCorrect Across Topics

### Overview

This is a horizontal stacked bar chart titled "Accuracy Improvement with SuperCorrect Across Topics." It compares the accuracy of a "Base LLM" (Large Language Model) against the accuracy achieved after applying "SuperCorrect" across seven distinct mathematical topics. The chart demonstrates the performance gain provided by the SuperCorrect method.

### Components/Axes

* **Chart Title:** "Accuracy Improvement with SuperCorrect Across Topics" (centered at the top).

* **Y-Axis (Vertical):** Lists seven mathematical topics. From top to bottom:
    1. Precalculus
    2. Prealgebra
    3. Number Theory
    4. Intermediate Algebra
    5. Geometry
    6. Counting & Probability
    7. Algebra

* **X-Axis (Horizontal):** Labeled "Accuracy," with major tick marks at 0.0, 0.2, 0.4, 0.6, and 0.8.

* **Legend:** Located in the top-right corner of the chart area.
    * A blue square is labeled "Base LLM."
    * A green square is labeled "SuperCorrect Improvement."

* **Data Series:** Each topic has a horizontal bar composed of two segments:
    * A blue segment on the left representing the "Base LLM" accuracy.
    * A green segment on the right representing the "SuperCorrect Improvement."

* **Data Labels:** A percentage value (e.g., "+23.7%") is placed to the right of each bar, indicating the magnitude of the improvement.

### Detailed Analysis

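The following table reconstructs the data presented in the chart. The "Base LLM Accuracy" values are approximate, read from the length of each blue segment against the x-axis scale. The "SuperCorrect Improvement" values are the labels printed on the chart. "Combined Accuracy" is the sum of the two, treating each labeled improvement as percentage points on the 0-1 accuracy scale (for example, 0.35 + 0.237 ≈ 0.59 for Precalculus).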

| Topic | Base LLM Accuracy (Approx.) | SuperCorrect Improvement (Labeled) | Combined Accuracy (Approx.) |
| :--- | :--- | :--- | :--- |
| Precalculus | ~0.35 | +23.7% | ~0.59 |
| Prealgebra | ~0.76 | +5.4% | ~0.81 |
| Number Theory | ~0.50 | +21.5% | ~0.72 |
| Intermediate Algebra | ~0.34 | +21.0% | ~0.55 |
| Geometry | ~0.44 | +11.7% | ~0.56 |
| Counting & Probability | ~0.50 | +15.4% | ~0.65 |
| Algebra | ~0.73 | +12.5% | ~0.86 |

**Trend Verification:**

* For every topic, the green "SuperCorrect Improvement" segment is appended to the right of the blue "Base LLM" segment, visually demonstrating an increase in total accuracy.

* The magnitude of improvement varies significantly by topic, from a low of +5.4% (Prealgebra) to a high of +23.7% (Precalculus).
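The arithmetic behind the "Combined Accuracy" column and the improvement range can be sketched as follows. This is a minimal illustration, not part of the original figure: it assumes the labeled improvements are percentage points added to the 0-1 base accuracy, and the names `ROWS` and `combined_accuracy` are hypothetical. The base values are the visual estimates from the table, so the results are approximate as well.

```python
from decimal import Decimal, ROUND_HALF_UP

# (topic, approximate base accuracy, labeled improvement in percentage points).
# Base accuracies are visual estimates read off the chart, not exact values.
ROWS = [
    ("Precalculus", "0.35", "23.7"),
    ("Prealgebra", "0.76", "5.4"),
    ("Number Theory", "0.50", "21.5"),
    ("Intermediate Algebra", "0.34", "21.0"),
    ("Geometry", "0.44", "11.7"),
    ("Counting & Probability", "0.50", "15.4"),
    ("Algebra", "0.73", "12.5"),
]

def combined_accuracy(base: str, gain_points: str) -> Decimal:
    """Add the gain (assumed percentage points) to the base accuracy.

    Decimal arithmetic with half-up rounding avoids binary floating-point
    surprises when a sum such as 0.855 is rounded to two places.
    """
    total = Decimal(base) + Decimal(gain_points) / Decimal(100)
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)

combined = {topic: combined_accuracy(base, gain) for topic, base, gain in ROWS}
gains = {topic: Decimal(gain) for topic, _, gain in ROWS}
lowest = min(gains, key=gains.get)    # topic with the smallest labeled gain
highest = max(gains, key=gains.get)   # topic with the largest labeled gain

for topic, value in combined.items():
    print(f"{topic:<24}{value}")
print(f"Smallest gain: {lowest}; largest gain: {highest}")
```

`Decimal` is used here only so that the two-decimal rounding matches the table; plain floats would work for a rougher check.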

### Key Observations

1. **Universal Improvement:** SuperCorrect provides a positive accuracy improvement across all seven mathematical topics shown.

2. **Highest Gains:** The largest improvements are seen in **Precalculus (+23.7%)**, **Number Theory (+21.5%)**, and **Intermediate Algebra (+21.0%)**.

3. **Lowest Gain:** The smallest improvement is in **Prealgebra (+5.4%)**.

4. **High Baseline, Low Gain:** Topics with a relatively high Base LLM accuracy (Prealgebra ~0.76, Algebra ~0.73) show smaller gains than the lowest-baseline topics (Precalculus ~0.35, Intermediate Algebra ~0.34). The pattern is not strict: Geometry (~0.44, +11.7%) gains slightly less than Algebra (+12.5%) despite a much lower baseline.

5. **Final Accuracy Leader:** After improvement, **Algebra** achieves the highest approximate combined accuracy (~0.86), followed closely by **Prealgebra (~0.81)**.
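The observations above compare gains in absolute terms. A complementary view is the gain relative to each topic's baseline, sketched below. This assumes the labels are percentage points and uses the approximate base accuracies from the table; `rows` and `relative_gain` are illustrative names, not anything defined by the chart.

```python
# (topic, approximate base accuracy, labeled gain in percentage points).
# Base accuracies are visual estimates, so the ratios are approximate too.
rows = [
    ("Precalculus", 0.35, 23.7),
    ("Prealgebra", 0.76, 5.4),
    ("Number Theory", 0.50, 21.5),
    ("Intermediate Algebra", 0.34, 21.0),
    ("Geometry", 0.44, 11.7),
    ("Counting & Probability", 0.50, 15.4),
    ("Algebra", 0.73, 12.5),
]

def relative_gain(base, gain_points):
    """Gain as a fraction of the base accuracy, e.g. 0.237 / 0.35."""
    return (gain_points / 100.0) / base

# Rank topics from largest to smallest relative gain.
ranked = sorted(rows, key=lambda row: relative_gain(row[1], row[2]), reverse=True)
for topic, base, gain_points in ranked:
    print(f"{topic:<24}{relative_gain(base, gain_points):6.1%}")
```

Under these assumptions, Precalculus still ranks first and Prealgebra last, and Algebra's relative gain falls below Geometry's, even though its absolute gain is slightly larger.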

### Interpretation

The data suggest that SuperCorrect improves a base language model on mathematical reasoning tasks, though not uniformly. Gains tend to be largest where the base model's accuracy is lowest (Precalculus, Intermediate Algebra), which may indicate that the method helps correct more fundamental or complex reasoning errors. Where the base model already performs relatively well (Prealgebra, Algebra), the gain is smaller, consistent with a ceiling effect or with the remaining errors in these domains being harder to correct. Geometry does not fit the pattern cleanly: its baseline is low (~0.44) but its gain is modest (+11.7%).
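One rough way to check the relationship between baseline accuracy and gain described above is a Pearson correlation over the seven topics. The sketch below is illustrative only: it uses the approximate base accuracies from the table, assumes the labels are percentage points, and with seven data points it cannot establish a ceiling effect. The helper `pearson` is written out by hand to keep the example dependency-free.

```python
import math

# Approximate base accuracies (visual estimates) and labeled gains
# (assumed percentage points), in the table's row order.
base = [0.35, 0.76, 0.50, 0.34, 0.44, 0.50, 0.73]
gain = [23.7, 5.4, 21.5, 21.0, 11.7, 15.4, 12.5]

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

r = pearson(base, gain)
print(f"Pearson r between base accuracy and gain: {r:.2f}")
```

With the table's values, `r` comes out negative, which is consistent with larger gains at lower baselines. It is well short of -1, which reflects exceptions such as Geometry.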

The chart supports the value of SuperCorrect as an enhancement over the base model, with gains in every topic shown. The variation in improvement indicates that the efficacy of such correction methods can be strongly domain-dependent, which matters when deploying and evaluating AI systems in specialized fields like mathematics.