## Chart: Accuracy vs. Number of Classes Trained for Different Models

### Overview

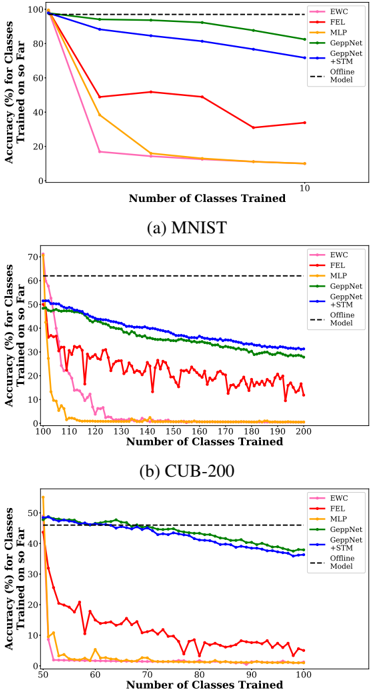

The image contains three line charts that compare how the accuracy of several machine learning models changes as the number of classes they have been trained on increases. Panel (a) shows the MNIST dataset, panel (b) shows the CUB-200 dataset, and panel (c) shows a third dataset whose name is not given. The models compared are EWC, FEL, MLP, GeppNet, and GeppNet+STM, with an "Offline Model" line as a baseline.

### Components/Axes

**General Chart Elements:**

* **Title:** Accuracy (%) for Classes Trained on so Far vs. Number of Classes Trained

* **X-axis:** Number of Classes Trained

* **Y-axis:** Accuracy (%) for Classes Trained on so Far

* **Legend:** Located on the top-right of each chart.

    * EWC (pink)

    * FEL (red)

    * MLP (yellow/orange)

    * GeppNet (green)

    * GeppNet+STM (blue)

    * Offline Model (dashed black)
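The y-axis label, "Accuracy (%) for Classes Trained on so Far", is not defined further in the chart. A minimal sketch of one plausible reading follows, assuming the metric is plain classification accuracy restricted to test samples whose true label belongs to a class the model has already been trained on. The function name `accuracy_on_seen_classes` and the toy labels are hypothetical and are not taken from the chart or its source.

```python
from typing import Iterable, Sequence


def accuracy_on_seen_classes(
    y_true: Sequence[int],
    y_pred: Sequence[int],
    seen_classes: Iterable[int],
) -> float:
    """Percent accuracy over test samples whose true label is a seen class.

    Assumption: this is how "accuracy for classes trained on so far" is
    computed; the chart itself does not spell out the definition.
    """
    seen = set(seen_classes)
    # Keep only the samples that belong to classes trained on so far.
    pairs = [(t, p) for t, p in zip(y_true, y_pred) if t in seen]
    if not pairs:
        raise ValueError("no test samples belong to the seen classes")
    correct = sum(1 for t, p in pairs if t == p)
    return 100.0 * correct / len(pairs)


# Toy labels (hypothetical), three classes with two test samples each.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]

# After training on classes 0 and 1 only, class 2 samples are ignored.
print(accuracy_on_seen_classes(y_true, y_pred, seen_classes=[0, 1]))  # 75.0
```

Under this reading, each point on a curve is the value of this function after the model has been trained on the number of classes given on the x-axis.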

**Chart (a) MNIST:**

* **X-axis:** Number of Classes Trained, ranging from 0 to 10.

* **Y-axis:** Accuracy (%), ranging from 0 to 100.

* **Title:** (a) MNIST

**Chart (b) CUB-200:**

* **X-axis:** Number of Classes Trained, ranging from 100 to 200.

* **Y-axis:** Accuracy (%), ranging from 0 to 70.

* **Title:** (b) CUB-200

**Chart (c):**

* **X-axis:** Number of Classes Trained, ranging from 50 to 100.

* **Y-axis:** Accuracy (%), ranging from 0 to 50.

### Detailed Analysis

**Chart (a) MNIST:**

* **EWC (pink):** Starts at approximately 100% accuracy and drops sharply to around 15% accuracy as the number of classes trained increases to 10.

* **FEL (red):** Starts at approximately 100% accuracy and decreases to around 30% accuracy as the number of classes trained increases to 10.

* **MLP (yellow/orange):** Starts at approximately 100% accuracy and decreases to around 25% accuracy as the number of classes trained increases to 10.

* **GeppNet (green):** Starts at approximately 100% accuracy and decreases slightly to around 85% accuracy as the number of classes trained increases to 10.

* **GeppNet+STM (blue):** Starts at approximately 100% accuracy and decreases to around 75% accuracy as the number of classes trained increases to 10.

* **Offline Model (dashed black):** Constant at 100% accuracy.

**Chart (b) CUB-200:**

* **EWC (pink):** Starts at approximately 50% accuracy and drops sharply to around 2% accuracy as the number of classes trained increases to 200.

* **FEL (red):** Starts at approximately 50% accuracy and fluctuates between 15% and 35% accuracy as the number of classes trained increases to 200.

* **MLP (yellow/orange):** Starts at approximately 50% accuracy and decreases to around 1% accuracy as the number of classes trained increases to 200.

* **GeppNet (green):** Starts at approximately 50% accuracy and decreases to around 35% accuracy as the number of classes trained increases to 200.

* **GeppNet+STM (blue):** Starts at approximately 50% accuracy and decreases to around 30% accuracy as the number of classes trained increases to 200.

* **Offline Model (dashed black):** Constant at approximately 62% accuracy.

**Chart (c):**

* **EWC (pink):** Starts at approximately 45% accuracy and drops sharply to around 5% accuracy as the number of classes trained increases to 100.

* **FEL (red):** Starts at approximately 45% accuracy and fluctuates between 5% and 25% accuracy as the number of classes trained increases to 100.

* **MLP (yellow/orange):** Starts at approximately 10% accuracy and decreases to around 2% accuracy as the number of classes trained increases to 100.

* **GeppNet (green):** Starts at approximately 50% accuracy and decreases to around 35% accuracy as the number of classes trained increases to 100.

* **GeppNet+STM (blue):** Starts at approximately 45% accuracy and decreases to around 38% accuracy as the number of classes trained increases to 100.

* **Offline Model (dashed black):** Constant at approximately 47% accuracy.

### Key Observations

* For the MNIST dataset, all models start at approximately 100% accuracy, but EWC, FEL, and MLP fall to roughly 15% to 30% by the time all 10 classes have been trained. GeppNet and GeppNet+STM stay at roughly 75% to 85%.

* For the CUB-200 dataset, EWC and MLP fall to about 1% to 2%, while FEL fluctuates between 15% and 35%. GeppNet and GeppNet+STM perform better but still decline from about 50% to roughly 30% to 35%.

* The Offline Model provides a baseline accuracy that remains constant regardless of the number of classes trained.

* EWC and MLP end with far lower accuracy than GeppNet and GeppNet+STM on all three datasets.
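To make the comparison with the baseline concrete, the sketch below divides each model's approximate final accuracy by the Offline Model accuracy for the same chart. The numbers are the rough values listed under Detailed Analysis, not exact measurements. FEL is left out for CUB-200 and chart (c) because only a fluctuation range is given there. The names `FINAL_ACCURACY`, `OFFLINE`, and `fraction_of_offline` are hypothetical.

```python
from typing import Dict

# Approximate final accuracies (%), as read off the charts (rough values only).
FINAL_ACCURACY: Dict[str, Dict[str, float]] = {
    "MNIST": {"EWC": 15, "FEL": 30, "MLP": 25, "GeppNet": 85, "GeppNet+STM": 75},
    "CUB-200": {"EWC": 2, "MLP": 1, "GeppNet": 35, "GeppNet+STM": 30},
    "Chart (c)": {"EWC": 5, "MLP": 2, "GeppNet": 35, "GeppNet+STM": 38},
}

# Approximate Offline Model accuracy (%) for each chart.
OFFLINE: Dict[str, float] = {"MNIST": 100, "CUB-200": 62, "Chart (c)": 47}


def fraction_of_offline(dataset: str) -> Dict[str, float]:
    """Final accuracy of each model as a fraction of the offline baseline."""
    baseline = OFFLINE[dataset]
    return {model: acc / baseline for model, acc in FINAL_ACCURACY[dataset].items()}


for dataset in FINAL_ACCURACY:
    print(dataset)
    for model, frac in fraction_of_offline(dataset).items():
        print(f"  {model}: {frac:.2f} of offline accuracy")
```

Because the inputs are visual estimates, the resulting fractions are only useful for ranking the models against one another, not as precise figures.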

### Interpretation

The charts show how accuracy on the classes seen so far changes as each model is trained on more classes. On MNIST, EWC, FEL, and MLP degrade sharply as classes are added, while GeppNet and GeppNet+STM are more robust. CUB-200 shows the same pattern, with EWC and MLP ending far below GeppNet and GeppNet+STM. The Offline Model serves as a benchmark, indicating the accuracy achievable without incremental training. Taken together, the data suggest that GeppNet and GeppNet+STM are better suited to scenarios where the number of classes is expected to grow over time, since they retain higher accuracy than EWC, FEL, and MLP.