## Line Charts: Accuracy vs. Number of Classes Trained

### Overview

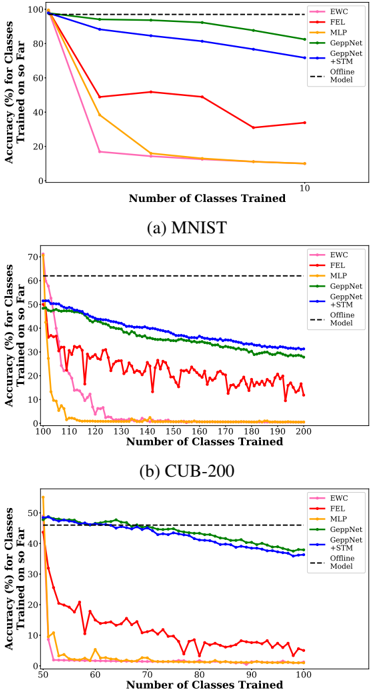

The image presents three line charts showing how the accuracy of several machine learning models changes as the number of classes trained increases. The charts compare EWC, FEL, MLP, GeppNet, GeppNet + STM, and an Offline Model. Each chart covers a different dataset: (a) MNIST, (b) CUB-200, and (c) a third dataset (unspecified). The y-axis shows accuracy (%) on the classes trained so far, and the x-axis shows the number of classes trained.
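The y-axis metric can be made concrete with a small sketch. It assumes that "accuracy for classes trained so far" means the share of correct predictions among test samples whose true label belongs to a class already introduced; the function name `accuracy_on_seen_classes` and the toy labels are hypothetical and are not taken from the figure.

```python
def accuracy_on_seen_classes(y_true, y_pred, seen_classes):
    """Percent of correct predictions among samples whose true label is a seen class."""
    seen = set(seen_classes)
    # Keep only the test samples that belong to classes introduced so far.
    pairs = [(t, p) for t, p in zip(y_true, y_pred) if t in seen]
    if not pairs:
        raise ValueError("no test samples belong to the seen classes")
    correct = sum(1 for t, p in pairs if t == p)
    return 100.0 * correct / len(pairs)


# Toy labels, made up for illustration only.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 0, 2]

# One point per step: classes {0}, then {0, 1}, then {0, 1, 2}.
curve = [accuracy_on_seen_classes(y_true, y_pred, range(k + 1)) for k in range(3)]
print(curve)
```

In a real incremental run the model would be re-evaluated after each training step, so the predictions would change from one x-axis point to the next; the fixed `y_pred` here is a simplification that only illustrates how the evaluation set grows.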

### Components/Axes

* **X-axis (all charts):** Number of Classes Trained. Scales vary per chart: the curves are described over 1 to 10 classes in (a), 100 to 200 in (b), and 50 to 100 in (c).

* **Y-axis (all charts):** Accuracy (%) for Classes Trained so Far. Scale: 0 to 100%.

* **Legends (all charts):**

    * EWC (Red)

    * FEL (Orange)

    * MLP (Yellow)

    * GeppNet (Green)

    * GeppNet + STM (Blue)

    * Offline Model (Magenta)

* **Chart Titles:**

    * (a) MNIST

    * (b) CUB-200

    * (c) [Dataset Name Missing]

### Detailed Analysis

**Chart (a) - MNIST** (number of classes trained: 1 to 10):

* **EWC (Red):** Starts at approximately 98% and declines rapidly to approximately 20%.

* **FEL (Orange):** Starts at approximately 98% and declines more gradually to approximately 40%.

* **MLP (Yellow):** Starts at approximately 98% and declines to approximately 60%.

* **GeppNet (Green):** Starts at approximately 98% and declines to approximately 80%.

* **GeppNet + STM (Blue):** Starts at approximately 98% and remains relatively stable at approximately 90%.

* **Offline Model (Magenta):** Starts at approximately 98% and declines to approximately 70%.

**Chart (b) - CUB-200** (number of classes trained: 100 to 200):

* **EWC (Red):** Starts at approximately 55% and fluctuates between approximately 20% and 40%.

* **FEL (Orange):** Starts at approximately 55% and declines to approximately 10%.

* **MLP (Yellow):** Starts at approximately 55% and declines to approximately 30%.

* **GeppNet (Green):** Starts at approximately 55% and declines to approximately 40%.

* **GeppNet + STM (Blue):** Starts at approximately 55% and remains relatively stable at approximately 50%.

* **Offline Model (Magenta):** Starts at approximately 55% and declines to approximately 30%.

**Chart (c) - Unspecified Dataset** (number of classes trained: 50 to 100):

* **EWC (Red):** Starts at approximately 55% and fluctuates between approximately 10% and 30%.

* **FEL (Orange):** Starts at approximately 55% and declines to approximately 10%.

* **MLP (Yellow):** Starts at approximately 55% and declines to approximately 20%.

* **GeppNet (Green):** Starts at approximately 55% and declines to approximately 40%.

* **GeppNet + STM (Blue):** Starts at approximately 55% and remains relatively stable at approximately 45%.

* **Offline Model (Magenta):** Starts at approximately 55% and declines to approximately 30%.
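The approximate readings listed above can be tabulated to compare how far each curve falls. The sketch below is illustrative only: the numbers are the rough values from this description, not measurements, and the names `READINGS`, `drop_range`, and `best_model` are hypothetical. A curve described as fluctuating within a range is stored with its low and high bounds; a single reading is stored with low equal to high.

```python
# Approximate (start, later_low, later_high) accuracies in percent, copied from
# the descriptions above. A single later value is stored as low == high.
READINGS = {
    "MNIST": {
        "EWC": (98, 20, 20), "FEL": (98, 40, 40), "MLP": (98, 60, 60),
        "GeppNet": (98, 80, 80), "GeppNet + STM": (98, 90, 90),
        "Offline Model": (98, 70, 70),
    },
    "CUB-200": {
        "EWC": (55, 20, 40), "FEL": (55, 10, 10), "MLP": (55, 30, 30),
        "GeppNet": (55, 40, 40), "GeppNet + STM": (55, 50, 50),
        "Offline Model": (55, 30, 30),
    },
    "Chart (c)": {  # dataset name is not given in the description
        "EWC": (55, 10, 30), "FEL": (55, 10, 10), "MLP": (55, 20, 20),
        "GeppNet": (55, 40, 40), "GeppNet + STM": (55, 45, 45),
        "Offline Model": (55, 30, 30),
    },
}


def drop_range(start, later_low, later_high):
    """Return the (smallest, largest) absolute drop in percentage points."""
    return start - later_high, start - later_low


def best_model(chart):
    """Model with the highest later accuracy, using the upper end of any range."""
    models = READINGS[chart]
    return max(models, key=lambda name: models[name][2])


for chart, models in READINGS.items():
    print(chart)
    for name, reading in models.items():
        smallest, largest = drop_range(*reading)
        print(f"  {name:<14} drop: {smallest} to {largest} points")
    print(f"  highest later accuracy: {best_model(chart)}")
```

Because every value is an approximate reading, the output is best used for coarse comparisons, such as which model ends highest in each chart, rather than for precise differences.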

### Key Observations

* **GeppNet + STM consistently outperforms other models** across all three datasets, maintaining higher accuracy as the number of classes trained increases.

* **EWC and FEL generally exhibit the most significant accuracy decline** as more classes are added, indicating a greater susceptibility to catastrophic forgetting.

* **The MNIST dataset shows the highest initial accuracy** for all models, suggesting it is the easiest dataset to learn.

* **CUB-200 and the third dataset show similar accuracy ranges**, suggesting comparable difficulty.

* **The Offline Model performs better than EWC and FEL**, but generally worse than GeppNet and GeppNet + STM.

### Interpretation

These charts compare how well different continual learning algorithms mitigate catastrophic forgetting, the tendency of neural networks to forget previously learned tasks when learning new ones. The "Offline Model" likely represents a standard training approach without continual learning techniques.

The superior performance of "GeppNet + STM" suggests that this method is particularly effective at retaining knowledge from previously learned classes while adapting to new ones. The rapid decline in accuracy for "EWC" and "FEL" indicates that these methods struggle to maintain performance as the number of classes increases.

The differences in initial accuracy across the datasets suggest that the difficulty of the learning task influences the effectiveness of these algorithms. MNIST, being a simpler dataset, allows all models to start at approximately 98%, whereas the more complex datasets (CUB-200 and the third dataset) start at approximately 55%. The approximate values do not show a uniformly steeper decline on the harder datasets: EWC, for example, loses about 78 percentage points on MNIST but between 15 and 35 points on CUB-200.

The fluctuations in the EWC line in the CUB-200 and the third dataset charts could indicate instability or sensitivity to the order in which classes are presented during training. Further investigation would be needed to determine the cause of these fluctuations.