## Diagram: Four Paradigms of Agent Learning

### Overview

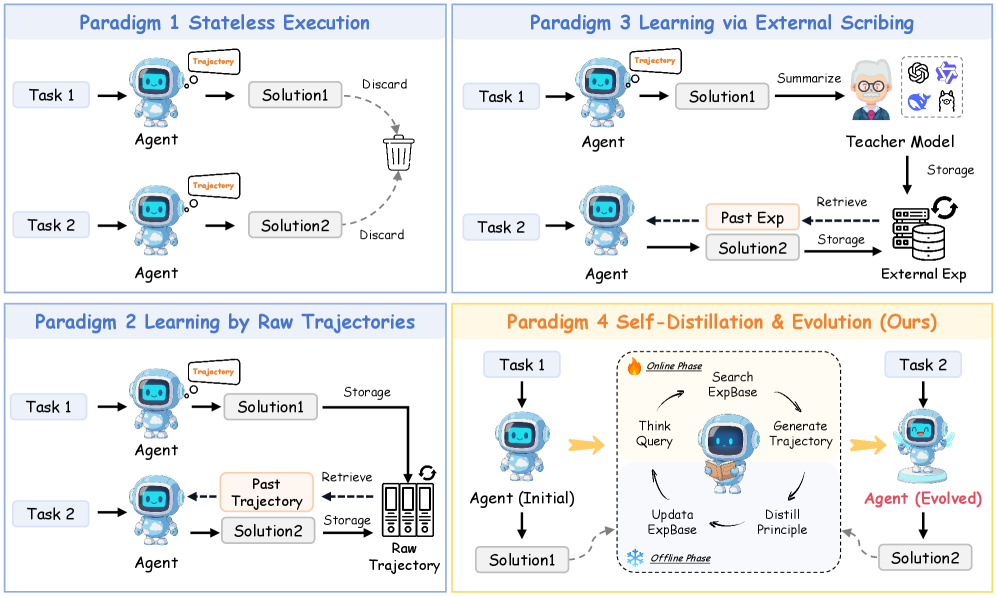

The figure compares four paradigms for agent learning. Each paradigm is drawn as a separate flowchart showing how tasks, solutions, and stored experience move through the system. The paradigms are: Stateless Execution, Learning by Raw Trajectories, Learning via External Scribing, and Self-Distillation & Evolution.

### Components/Axes

* **Paradigm Titles:** Paradigm 1: Stateless Execution; Paradigm 2: Learning by Raw Trajectories; Paradigm 3: Learning via External Scribing; Paradigm 4: Self-Distillation & Evolution (Ours)

* **Tasks:** Task 1, Task 2

* **Agent:** Represents the learning agent, depicted as a cartoon robot.

* **Solutions:** Solution1, Solution2

* **Data Storage:** Raw Trajectory, External Exp, ExpBase

* **Processes:** Summarize, Storage, Retrieve, Discard, Think Query, Search ExpBase, Generate Trajectory, Distill Principle, Update ExpBase

* **Phases:** Online Phase, Offline Phase

* **Trajectory:** A thought bubble above the agent's head, labeled "Trajectory".

### Detailed Analysis

**Paradigm 1: Stateless Execution (Top-Left)**

* **Flow:** Task 1 -> Agent -> Solution1 -> Discard; Task 2 -> Agent -> Solution2 -> Discard

* **Description:** The agent solves each task independently without retaining information from previous tasks. The solutions are discarded after each task.

* **Trend:** A linear flow from task to solution, followed by discarding the solution.

**Paradigm 2: Learning by Raw Trajectories (Bottom-Left)**

* **Flow:** Task 1 -> Agent -> Solution1 -> Storage -> Raw Trajectory; Task 2 -> Agent -> Solution2 -> Storage -> Raw Trajectory; Raw Trajectory -> Retrieve -> Past Trajectory; Past Trajectory -> Agent

* **Description:** The agent stores raw trajectories of past solutions. When solving a new task, it retrieves and utilizes past trajectories.

* **Trend:** A cyclical flow where past experiences are stored and retrieved to influence future solutions.
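The store-and-retrieve loop of Paradigm 2 can be sketched roughly as follows. This is a minimal illustration, not the implementation behind the figure: `RawTrajectoryStore`, `toy_agent`, and the word-overlap similarity are all hypothetical stand-ins chosen to keep the example self-contained.

```python
from dataclasses import dataclass, field


@dataclass
class RawTrajectoryStore:
    """Hypothetical store that keeps (task, trajectory) pairs verbatim."""
    records: list[tuple[str, str]] = field(default_factory=list)

    def storage(self, task: str, trajectory: str) -> None:
        # "Storage" step: append the unprocessed trajectory as-is.
        self.records.append((task, trajectory))

    def retrieve(self, task: str, k: int = 1) -> list[str]:
        # "Retrieve" step. Toy similarity (an assumption): shared lowercase words.
        query = set(task.lower().split())
        ranked = sorted(
            self.records,
            key=lambda rec: len(query & set(rec[0].lower().split())),
            reverse=True,
        )
        return [trajectory for _, trajectory in ranked[:k]]


def toy_agent(task: str, past: list[str]) -> str:
    """Stand-in for the agent; a real system would call a model here."""
    return f"solved({task}) using {len(past)} past trajectories"


store = RawTrajectoryStore()
for task in ["sort a list of numbers", "sort a list of names"]:
    past = store.retrieve(task)       # Past Trajectory -> Agent
    solution = toy_agent(task, past)  # Task -> Agent -> Solution
    store.storage(task, solution)     # Solution -> Storage -> Raw Trajectory
```

Deleting the `retrieve` and `storage` calls from the loop reduces it to Paradigm 1, where each solution is discarded after use.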

**Paradigm 3: Learning via External Scribing (Top-Right)**

* **Flow:** Task 1 -> Agent -> Solution1 -> Summarize -> Teacher Model -> Storage -> External Exp; Task 2 -> Agent -> Solution2 -> Retrieve -> Past Exp; Past Exp -> Agent

* **Description:** The agent's solutions are summarized by a "Teacher Model" and stored as "External Exp". The agent retrieves and uses this external experience for subsequent tasks.

* **Trend:** A flow involving external knowledge representation and retrieval to aid the agent's learning.
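As a rough sketch of Paradigm 3, the difference from raw-trajectory storage is that a separate model writes the stored experience. The names `teacher_summarize`, `toy_agent`, and `solve_with_scribe` are hypothetical, the retrieval step simply returns everything stored so far, and for simplicity every solution is summarized rather than only the first.

```python
def toy_agent(task: str, past_exp: list[str]) -> str:
    """Stand-in for the agent; a real system would call a model here."""
    hints = "; ".join(past_exp) if past_exp else "none"
    return f"solution for '{task}' (hints: {hints})"


def teacher_summarize(task: str, solution: str) -> str:
    """Stand-in for the separate Teacher Model that condenses a solution."""
    return f"lesson from '{task}'"


# "External Exp" in the figure: summaries written by the teacher, not the agent.
external_exp: list[str] = []


def solve_with_scribe(task: str) -> str:
    past_exp = list(external_exp)            # Retrieve -> Past Exp (toy: return all)
    solution = toy_agent(task, past_exp)     # Past Exp -> Agent -> Solution
    summary = teacher_summarize(task, solution)  # Summarize via Teacher Model
    external_exp.append(summary)             # Storage -> External Exp
    return solution


s1 = solve_with_scribe("Task 1")
s2 = solve_with_scribe("Task 2")
```

Here the agent never writes to `external_exp` itself; all stored experience passes through the teacher function.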

**Paradigm 4: Self-Distillation & Evolution (Ours) (Bottom-Right)**

* **Flow:** Task 1 -> Agent (Initial) -> Solution1; Online Phase: Think Query -> Search ExpBase -> Generate Trajectory -> Distill Principle; Offline Phase: Update ExpBase; Solution1 -> Update ExpBase; ExpBase -> Think Query; Task 2 -> Agent (Evolved) -> Solution2

* **Description:** The agent iteratively refines its knowledge through self-distillation. In the online phase it forms a query, searches ExpBase, generates a trajectory, and distills a principle from it. In the offline phase it updates ExpBase, which then feeds later queries.

* **Trend:** An iterative and cyclical flow representing continuous learning and refinement of the agent's knowledge.
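The online and offline phases of Paradigm 4 might be organized along these lines. This is a sketch under stated assumptions only: the class names, the keyword-overlap search, and the string-based "principles" are hypothetical, and the figure does not specify how querying, distillation, or the ExpBase update are actually implemented.

```python
from dataclasses import dataclass, field


@dataclass
class ExpBase:
    """Hypothetical experience base holding distilled principles as strings."""
    principles: list[str] = field(default_factory=list)

    def search(self, query: str) -> list[str]:
        # Toy search (an assumption): any shared lowercase word counts as a match.
        words = set(query.lower().split())
        return [p for p in self.principles if words & set(p.lower().split())]

    def update(self, new_principles: list[str]) -> None:
        for principle in new_principles:
            if principle not in self.principles:  # skip exact duplicates
                self.principles.append(principle)


@dataclass
class SelfDistillingAgent:
    exp_base: ExpBase = field(default_factory=ExpBase)
    pending: list[str] = field(default_factory=list)

    def online_step(self, task: str) -> str:
        query = task                              # Think Query (toy: the task text)
        retrieved = self.exp_base.search(query)   # Search ExpBase
        trajectory = f"steps for {task} | used {len(retrieved)} principles"  # Generate Trajectory
        self.pending.append(f"principle about {task}")  # Distill Principle (by the agent itself)
        return trajectory

    def offline_step(self) -> None:
        self.exp_base.update(self.pending)        # Update ExpBase
        self.pending.clear()


agent = SelfDistillingAgent()                     # Agent (Initial)
sol1 = agent.online_step("parsing dates")
agent.offline_step()                              # agent now carries an updated ExpBase
sol2 = agent.online_step("parsing timestamps")
```

Unlike the teacher-based variant, the same agent object both produces the trajectory and distills the principle that later queries can retrieve.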

### Key Observations

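* **Stateless Execution:** The simplest paradigm; nothing is retained between tasks, so no learning occurs.

* **Learning by Raw Trajectories:** Stores past trajectories unprocessed and retrieves them directly for new tasks.

* **Learning via External Scribing:** Relies on a separate Teacher Model to summarize solutions into stored experience.

* **Self-Distillation & Evolution:** The agent distills principles from its own trajectories and writes them back to ExpBase, forming a self-improvement loop.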

### Interpretation

The diagram illustrates a progression in agent learning paradigms, from stateless execution, through memory of raw trajectories and experience summarized by an external model, to self-improvement. The paradigms differ in what is kept from past tasks and in who processes it before reuse. In Paradigm 4, "Self-Distillation & Evolution," the agent itself distills principles and updates ExpBase across online and offline phases. The change from "Agent (Initial)" to "Agent (Evolved)" suggests that the agent is intended to improve over successive tasks.