## Diagram: Comparative Analysis of Learning Paradigms

### Overview

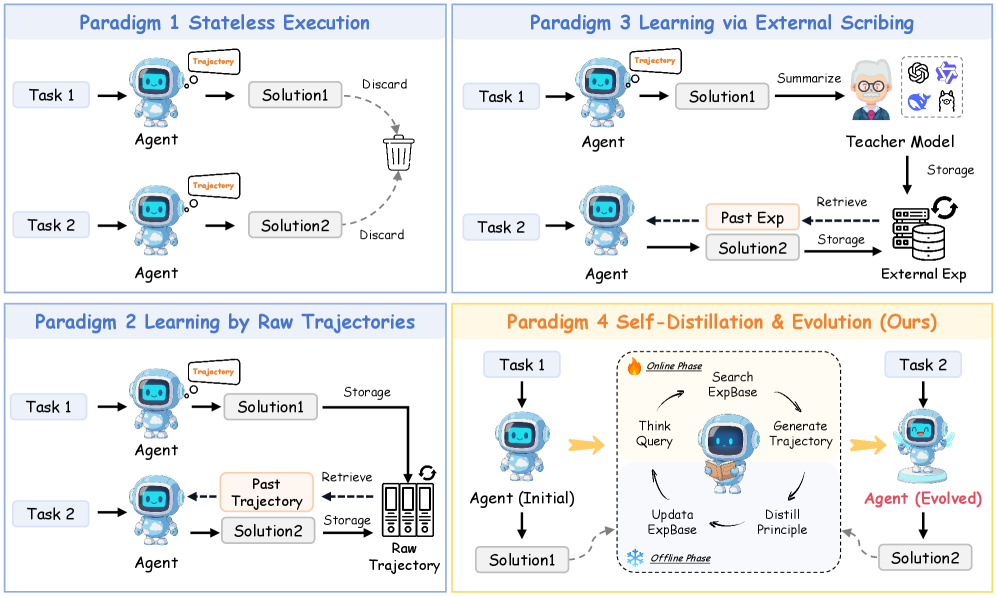

The image presents a comparative diagram of four learning paradigms, each represented in a quadrant. The paradigms are labeled as follows:

1. **Paradigm 1: Stateless Execution**

2. **Paradigm 2: Learning by Raw Trajectories**

3. **Paradigm 3: Learning via External Scribing**

4. **Paradigm 4: Self-Distillation & Evolution (Ours)**

Each quadrant illustrates the workflow, components, and interactions for solving tasks (Task 1 and Task 2) using agents, solutions, storage, and external models.

---

### Components/Axes

#### Key Elements:

- **Agents**: Represented by a robot icon, responsible for executing tasks.

- **Solutions**: Outputs generated by agents (e.g., Solution1, Solution2).

- **Storage**: Depicted as a filing cabinet or database, used to retain past experiences.

- **Teacher Model**: A human figure with a brain icon, symbolizing external knowledge or guidance.

- **External Experience (External Exp)**: A cloud-like icon representing external data sources.

- **Phases**: Online Phase (fire icon) and Offline Phase (snowflake icon) in Paradigm 4.

- **Arrows**: Indicate workflow direction (e.g., "Retrieve," "Discard," "Update").

#### Labels and Text:

- **Paradigm 1**: "Stateless Execution" (no memory of past tasks).

- **Paradigm 2**: "Learning by Raw Trajectories" (stores raw trajectories for reuse).

- **Paradigm 3**: "Learning via External Scribing" (uses a teacher model and external storage).

- **Paradigm 4**: "Self-Distillation & Evolution (Ours)" (combines online and offline phases for agent evolution).

---

### Detailed Analysis

#### Paradigm 1: Stateless Execution

- **Flow**:

  - Task 1 → Agent → Solution1 → Discard.

  - Task 2 → Agent → Solution2 → Discard.

- **Key Feature**: No storage or reuse of past solutions. Each task is solved independently.
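The stateless flow can be sketched in a few lines of Python. This is only an illustration of the pattern in the diagram, not the authors' implementation; `toy_agent` and `run_stateless` are hypothetical names introduced here.

```python
from typing import Callable


def toy_agent(task: str) -> str:
    """Hypothetical stand-in for an agent: maps a task to a solution string."""
    return f"solution for {task}"


def run_stateless(tasks: list[str], agent: Callable[[str], str]) -> list[str]:
    """Solve each task independently; no solution is fed back to the agent."""
    solutions = []
    for task in tasks:
        # The agent receives only the current task, never earlier solutions.
        solutions.append(agent(task))
    return solutions


solutions = run_stateless(["Task 1", "Task 2"], toy_agent)
print(solutions)
```

The returned list exists only so the caller can inspect the outputs; from the agent's point of view each solution is discarded as soon as it is produced.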

#### Paradigm 2: Learning by Raw Trajectories

- **Flow**:

  - Task 1 → Agent → Solution1 → Storage.

  - Task 2 → Agent → Retrieve Past Trajectory → Solution2 → Storage.

- **Key Feature**: Stores raw trajectories (e.g., Solution1) for reuse in subsequent tasks.
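A minimal sketch of this store-and-retrieve loop follows, assuming a simple in-memory store. `TrajectoryStore`, `toy_agent`, and `run_with_raw_trajectories` are hypothetical names, and the "retrieval" here simply returns everything stored so far.

```python
from dataclasses import dataclass, field


@dataclass
class TrajectoryStore:
    """Hypothetical storage that keeps raw (task, solution) pairs unprocessed."""
    records: list[tuple[str, str]] = field(default_factory=list)

    def retrieve(self) -> list[tuple[str, str]]:
        # Return a copy so callers cannot mutate the store by accident.
        return list(self.records)

    def add(self, task: str, solution: str) -> None:
        self.records.append((task, solution))


def toy_agent(task: str, past: list[tuple[str, str]]) -> str:
    """Stand-in agent that only reports how many past trajectories it saw."""
    return f"solution for {task} (saw {len(past)} past trajectories)"


def run_with_raw_trajectories(tasks: list[str], store: TrajectoryStore) -> list[str]:
    solutions = []
    for task in tasks:
        past = store.retrieve()           # Retrieve Past Trajectory
        solution = toy_agent(task, past)  # Agent -> Solution
        store.add(task, solution)         # Solution -> Storage, stored as-is
        solutions.append(solution)
    return solutions


store = TrajectoryStore()
solutions = run_with_raw_trajectories(["Task 1", "Task 2"], store)
print(solutions[1])
```

Nothing is summarized or filtered before storage, which is the defining trait of this paradigm.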

#### Paradigm 3: Learning via External Scribing

- **Flow**:

  - Task 1 → Agent → Solution1 → Teacher Model → Storage.

  - Task 2 → Agent → Retrieve Past Exp → Solution2 → Storage.

- **Key Feature**: Relies on a teacher model to summarize the agent's solutions into experiences, which are stored externally and retrieved for later tasks.
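The teacher-mediated flow can be sketched as below. All names (`toy_agent`, `toy_teacher`, `run_with_teacher`) are hypothetical, and the sketch routes every solution through the teacher, which is an assumption: the flow above shows the teacher step explicitly only for Task 1.

```python
def toy_agent(task: str, experiences: list[str]) -> str:
    """Stand-in agent that reports how many stored experiences it consulted."""
    return f"solution for {task} using {len(experiences)} experience(s)"


def toy_teacher(task: str, solution: str) -> str:
    """Stand-in for the external teacher model that summarizes a solution."""
    return f"lesson from {task}"


def run_with_teacher(tasks: list[str], experience_store: list[str]) -> list[str]:
    solutions = []
    for task in tasks:
        retrieved = list(experience_store)       # Retrieve Past Exp
        solution = toy_agent(task, retrieved)    # Agent -> Solution
        summary = toy_teacher(task, solution)    # Solution -> Teacher Model
        experience_store.append(summary)         # Teacher Model -> Storage
        solutions.append(solution)
    return solutions


experience_store: list[str] = []
solutions = run_with_teacher(["Task 1", "Task 2"], experience_store)
print(experience_store)
```

The difference from raw-trajectory storage is what ends up in the store: a summary written by a separate model rather than the agent's unprocessed output.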

#### Paradigm 4: Self-Distillation & Evolution (Ours)

- **Flow**:

  - **Online Phase**:

    - Search ExpBase → Think Query → Generate Trajectory → Update Data → Distill Principle.

  - **Offline Phase**:

    - Agent (Initial) → Solution1 → Agent (Evolved) → Solution2.

- **Key Feature**: Combines online exploration (searching an experience base) and offline refinement (agent evolution) to improve performance iteratively.
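The two phases can be sketched as a toy loop. This is a rough illustration under stated assumptions, not the proposed method itself: `Agent`, `online_step`, and `evolve` are hypothetical names, the "principle" is a placeholder string, and "evolution" is reduced to a version bump that carries the experience base forward.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    version: int = 0
    exp_base: list[str] = field(default_factory=list)  # distilled principles
    data: list[str] = field(default_factory=list)      # collected trajectories

    def online_step(self, task: str) -> str:
        retrieved = list(self.exp_base)  # Search ExpBase
        # Think Query + Generate Trajectory, collapsed into one string here.
        trajectory = (
            f"{task} solved by agent v{self.version} "
            f"using {len(retrieved)} principle(s)"
        )
        self.data.append(trajectory)                    # Update Data
        # Distill Principle: produced by the agent itself, with no teacher.
        self.exp_base.append(f"principle from {task}")
        return trajectory


def evolve(agent: Agent) -> Agent:
    """Offline phase (assumed): return an updated agent that keeps the ExpBase."""
    return Agent(version=agent.version + 1, exp_base=list(agent.exp_base))


initial = Agent()
solution1 = initial.online_step("Task 1")  # Agent (Initial) -> Solution1
evolved = evolve(initial)                  # Agent (Initial) -> Agent (Evolved)
solution2 = evolved.online_step("Task 2")  # Agent (Evolved) -> Solution2
print(solution1)
print(solution2)
```

In a real system the offline step would update the agent itself (for example, its policy or weights) from the collected data; the version counter stands in for that update.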

---

### Key Observations

1. **Paradigm 1** is the simplest, with no memory or external dependencies.

2. **Paradigm 2** introduces storage but relies on raw, unprocessed trajectories.

3. **Paradigm 3** adds a teacher model and external storage, enabling guided learning.

4. **Paradigm 4** (proposed method) integrates self-distillation and evolution, allowing the agent to refine its approach over time.

---

### Interpretation

The diagram illustrates a progression from basic, stateless task execution (Paradigm 1) to advanced, self-improving systems (Paradigm 4). Paradigm 4 stands out by:

- **Self-Distillation**: Extracting principles from raw data to guide future decisions.

- **Evolution**: Iteratively improving the agent’s capabilities through offline refinement.

- **Hybrid Phases**: Balancing real-time exploration (Online Phase) with offline optimization (Offline Phase).

The diagram suggests that combining stored experience (via ExpBase) with internal agent evolution yields more robust and adaptive solutions than stateless or externally dependent methods. The "Evolved" agent label in Paradigm 4 points to an emphasis on long-term learning and adaptability, which could be critical in complex, dynamic environments.