## [Diagram/Heatmap Comparison]: Action Probability in Policy for Grid-Filling Task

### Overview

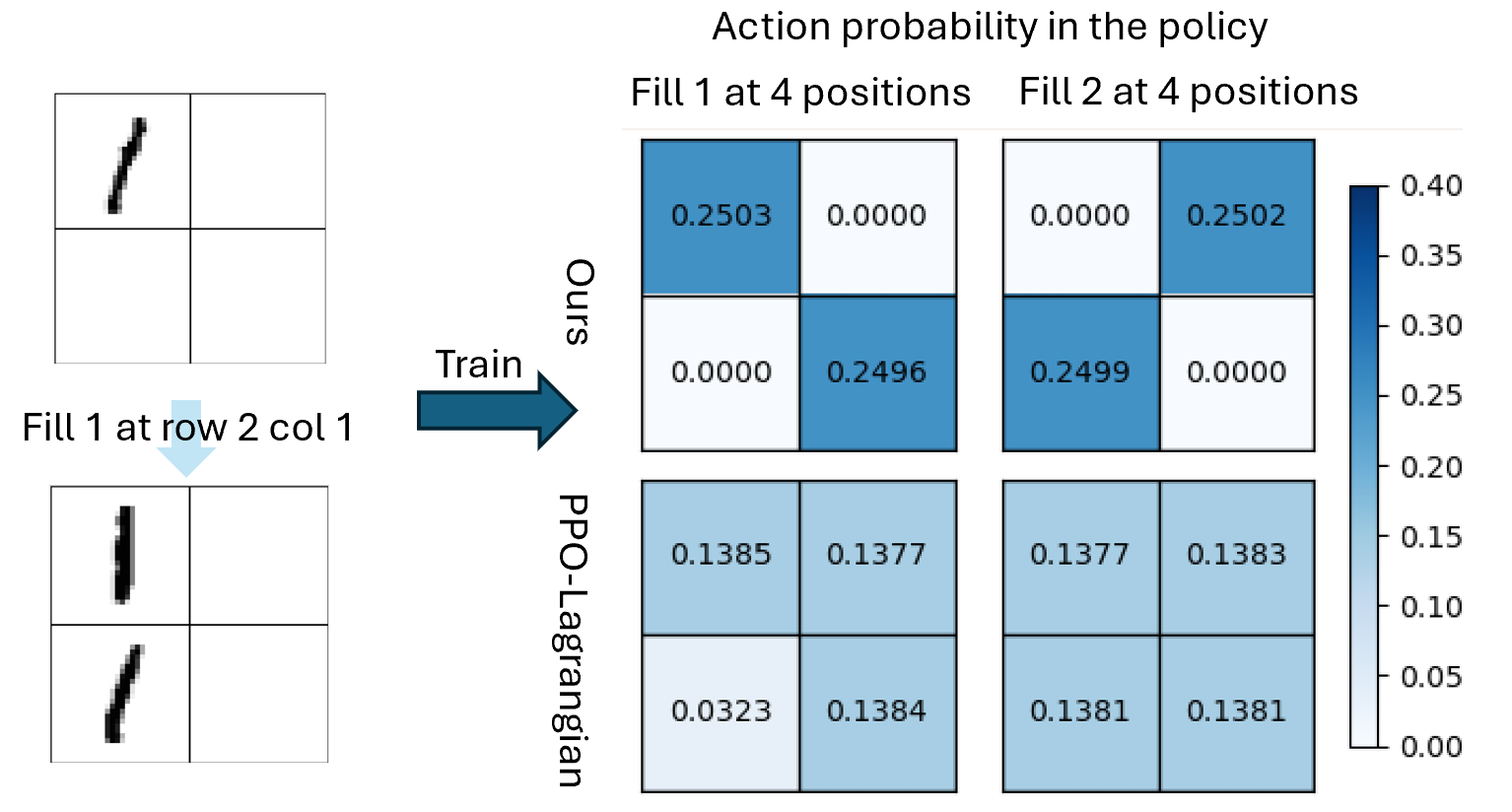

The image is a technical diagram that illustrates a reinforcement learning training step and compares the action probability distributions of two methods ("Ours" and "PPO-Lagrangian") in a 2x2 grid-filling task. The left section shows a grid transformation associated with training. The right section contains four heatmaps (2x2 action probability matrices), one per method and action group: *"Fill 1 at 4 positions"* and *"Fill 2 at 4 positions"*. Each heatmap cell gives the probability of filling that value at the corresponding grid position, so each method's policy covers eight actions in total.

### Components/Axes

#### Left Section (Training Process)

- **Top Grid**: 2x2 grid with a black mark (filled cell) in **row 1, column 1** (rows: top→bottom; columns: left→right).

- **Arrow**: Labeled *"Train"* (pointing right, indicating the training step).

- **Bottom Grid**: 2x2 grid with two black marks (filled cells) in **row 1, column 1** and **row 2, column 1**.

- **Text**: *"Fill 1 at row 2 col 1"* (describes the action taken to transform the grid).

#### Right Section (Action Probability Heatmaps)

- **Title**: *"Action probability in the policy"*

- **Columns**: Two action groups: *"Fill 1 at 4 positions"* (left) and *"Fill 2 at 4 positions"* (right).

- **Rows**: Two methods: *"Ours"* (top) and *"PPO-Lagrangian"* (bottom).

- **Color Bar**: Vertical bar (right) with a blue scale:

  - White = 0.00 (lowest probability)

  - Dark blue = 0.40 (highest probability)

  - Intermediate tick values: 0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35

### Detailed Analysis (Numerical Values & Trends)

Each heatmap is a 2x2 grid (rows: top→bottom; columns: left→right) with probability values (color-coded by the bar).

#### 1. "Ours" Method (Top Row)

- **Fill 1 at 4 positions** (left heatmap):

  - Row 1, Col 1: `0.2503` (dark blue, ~0.25)

  - Row 1, Col 2: `0.0000` (white)

  - Row 2, Col 1: `0.0000` (white)

  - Row 2, Col 2: `0.2496` (dark blue, ~0.25)

  *Trend*: Probabilities concentrate on the **diagonal** (1,1) and (2,2), with 0 elsewhere.

- **Fill 2 at 4 positions** (right heatmap):

  - Row 1, Col 1: `0.0000` (white)

  - Row 1, Col 2: `0.2502` (dark blue, ~0.25)

  - Row 2, Col 1: `0.2499` (dark blue, ~0.25)

  - Row 2, Col 2: `0.0000` (white)

  *Trend*: Probabilities concentrate on the **anti-diagonal** (1,2) and (2,1), with 0 elsewhere.
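The complementary structure of the two "Ours" heatmaps can be checked directly from the values listed above. The following is a minimal Python sketch; the names (`ours_fill1`, `ours_fill2`, `support`) and the `1e-4` threshold for treating a value as zero are illustrative assumptions, not part of the figure.

```python
# Values transcribed from the "Ours" heatmaps (rows top to bottom, columns left to right).
ours_fill1 = [[0.2503, 0.0000],
              [0.0000, 0.2496]]
ours_fill2 = [[0.0000, 0.2502],
              [0.2499, 0.0000]]

EPS = 1e-4  # assumed threshold below which a probability counts as zero


def support(matrix, eps=EPS):
    """Return the set of 1-based (row, col) cells with probability above eps."""
    return {(r + 1, c + 1)
            for r, row in enumerate(matrix)
            for c, p in enumerate(row)
            if p > eps}


fill1_cells = support(ours_fill1)  # cells where "Fill 1" has nonzero probability
fill2_cells = support(ours_fill2)  # cells where "Fill 2" has nonzero probability

# Check whether the two supports are disjoint and together cover the 2x2 grid.
all_cells = {(r, c) for r in (1, 2) for c in (1, 2)}
print(sorted(fill1_cells), sorted(fill2_cells))
print(fill1_cells.isdisjoint(fill2_cells), fill1_cells | fill2_cells == all_cells)
```

With these values, every grid cell has exactly one fill value with nonzero probability.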

#### 2. "PPO-Lagrangian" Method (Bottom Row)

- **Fill 1 at 4 positions** (left heatmap):

  - Row 1, Col 1: `0.1385` (light blue, ~0.14)

  - Row 1, Col 2: `0.1377` (light blue, ~0.14)

  - Row 2, Col 1: `0.0323` (very light blue, ~0.03)

  - Row 2, Col 2: `0.1384` (light blue, ~0.14)

  *Trend*: Three cells are ≈0.138; (2,1) is an outlier at ≈0.03.

- **Fill 2 at 4 positions** (right heatmap):

  - Row 1, Col 1: `0.1377` (light blue, ~0.14)

  - Row 1, Col 2: `0.1383` (light blue, ~0.14)

  - Row 2, Col 1: `0.1381` (light blue, ~0.14)

  - Row 2, Col 2: `0.1381` (light blue, ~0.14)

  *Trend*: Nearly uniform (≈0.138) across all cells.
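As a consistency check on the transcribed values, the eight entries for each method can be summed. If the two heatmaps per method together describe one policy over eight actions, each total should be close to 1. This is a minimal Python sketch under that assumption; the dictionary layout and the names `policies` and `total_mass` are illustrative.

```python
# Heatmap values as listed above; each method maps an action group to a 2x2 matrix.
policies = {
    "Ours": {
        "Fill 1": [[0.2503, 0.0000], [0.0000, 0.2496]],
        "Fill 2": [[0.0000, 0.2502], [0.2499, 0.0000]],
    },
    "PPO-Lagrangian": {
        "Fill 1": [[0.1385, 0.1377], [0.0323, 0.1384]],
        "Fill 2": [[0.1377, 0.1383], [0.1381, 0.1381]],
    },
}


def total_mass(groups):
    """Sum every cell of every heatmap belonging to one method."""
    return sum(p for matrix in groups.values() for row in matrix for p in row)


totals = {method: total_mass(groups) for method, groups in policies.items()}
for method, total in totals.items():
    print(f"{method}: total probability = {total:.4f}")
```

Both totals land within about 0.001 of 1, which is consistent with rounding in the displayed values.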

### Key Observations

- **"Ours" Method**:

  - Highly structured policy: each action group places probability only on specific grid positions (diagonal for "Fill 1", anti-diagonal for "Fill 2"), with 0 elsewhere.

  - The four nonzero entries are each ≈0.25 and sum to ≈1.00, so the policy is close to uniform over four of the eight actions and assigns essentially zero probability to the other four. It is focused but not deterministic.

- **"PPO-Lagrangian" Method**:

  - Spread-out policy: seven of the eight entries are ≈0.14, slightly above the uniform value of 1/8 = 0.125, with one outlier (0.0323 at (2,1) for "Fill 1").

  - The eight entries also sum to ≈1.00 (0.9991 as listed), so the lower per-cell values reflect the same total probability spread over more actions, not a lower overall probability.

- **Color Coding**: The blue scale matches these values: "Ours" has darker cells at its four preferred actions and white cells elsewhere, while PPO-Lagrangian has lighter, more evenly shaded cells.
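One way to quantify "focused" versus "spread-out" is the Shannon entropy of each eight-action distribution. The sketch below is an illustrative calculation, not something shown in the figure. It assumes the eight listed values per method form one distribution and renormalizes them to remove rounding error. The names `entropy`, `ours`, and `ppo_lagrangian` are hypothetical.

```python
import math

# Flattened values: Fill 1 at (1,1), (1,2), (2,1), (2,2), then Fill 2 in the same order.
ours = [0.2503, 0.0000, 0.0000, 0.2496, 0.0000, 0.2502, 0.2499, 0.0000]
ppo_lagrangian = [0.1385, 0.1377, 0.0323, 0.1384, 0.1377, 0.1383, 0.1381, 0.1381]


def entropy(probs):
    """Shannon entropy in nats after renormalizing; zero entries contribute nothing."""
    total = sum(probs)
    return -sum((p / total) * math.log(p / total) for p in probs if p > 0)


h_ours = entropy(ours)
h_ppo = entropy(ppo_lagrangian)

# Reference points: uniform over 4 actions (ln 4) and uniform over 8 actions (ln 8).
print(f"Ours:           H = {h_ours:.3f} nats (ln 4 = {math.log(4):.3f})")
print(f"PPO-Lagrangian: H = {h_ppo:.3f} nats (ln 8 = {math.log(8):.3f})")
```

Under these assumptions, the entropy for "Ours" is close to ln 4 (uniform over four actions), while PPO-Lagrangian falls between ln 4 and ln 8, slightly below the eight-action uniform value because of the single low entry.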

### Interpretation

The diagram compares two reinforcement learning policies for a grid-filling task:

- **"Ours"** demonstrates a *targeted policy*: it places all probability on specific grid positions (diagonal for "Fill 1", anti-diagonal for "Fill 2") and essentially none elsewhere. With ≈0.25 on each of four actions, it is stochastic over that subset and not deterministic.

- **"PPO-Lagrangian"** shows a *diffuse policy*: probability is spread almost evenly across all positions and both fill values, except for the outlier at (2,1) for "Fill 1".

The left-side training process (grid transformation) illustrates how the policy is obtained: starting with a grid, taking the action *"Fill 1 at row 2 col 1"*, and training to produce the policy. The heatmaps show that "Ours" concentrates probability on a subset of actions, while PPO-Lagrangian remains close to uniform. The PPO-Lagrangian outlier (0.0323 for "Fill 1" at row 2, col 1) coincides with the action named in the left section, an action to which "Ours" assigns 0.0000. This may indicate that the position is less preferred for that action under both methods, but the figure alone does not establish the cause.
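To make the comparison for the action named in the left section concrete, the probability of *"Fill 1 at row 2 col 1"* can be read off each method's heatmap and compared with the uniform baseline of 1/8. This is a small Python sketch; the `lookup` helper and the data layout are illustrative assumptions.

```python
# "Fill 1" heatmaps only (rows top to bottom, columns left to right).
fill1 = {
    "Ours": [[0.2503, 0.0000], [0.0000, 0.2496]],
    "PPO-Lagrangian": [[0.1385, 0.1377], [0.0323, 0.1384]],
}

UNIFORM = 1 / 8  # baseline if all eight actions were equally likely


def lookup(matrix, row, col):
    """Return the probability at a 1-based (row, col) position."""
    return matrix[row - 1][col - 1]


# Probability each method assigns to "Fill 1 at row 2 col 1".
for method, matrix in fill1.items():
    p = lookup(matrix, 2, 1)
    print(f"{method}: p = {p:.4f}, ratio to uniform = {p / UNIFORM:.2f}")
```

Both methods place this action below the uniform baseline: "Ours" at zero and PPO-Lagrangian at roughly a quarter of 1/8.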
