TECHNICAL ASSET FINGERPRINT

cf7d6c930c64884407d7e948


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart: Cumulative Average NLL Comparison

### Overview

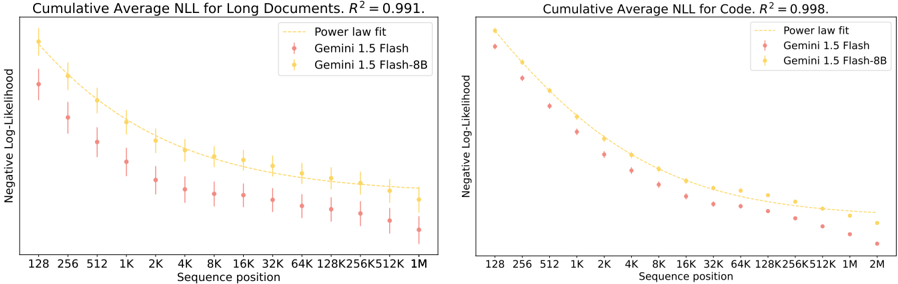

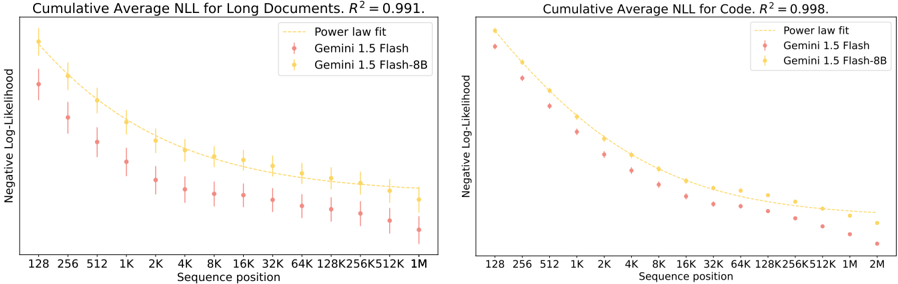

The image presents two line charts comparing the cumulative average negative log-likelihood (NLL) for two models, Gemini 1.5 Flash and Gemini 1.5 Flash-8B, across varying sequence positions. The chart on the left displays results for "Long Documents," while the chart on the right shows results for "Code." Both charts include a power law fit line.
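The source does not spell out how the plotted quantity is computed. A minimal sketch, assuming "cumulative average NLL" means the running mean of per-token NLL up to each sequence position (the function name and the sample values are hypothetical, for illustration only):

```python
from itertools import accumulate


def cumulative_average_nll(token_nll):
    """Return the running mean of per-token NLL values.

    Entry i is the mean NLL over tokens 0..i, i.e. the value that
    would be plotted at sequence position i + 1 under this assumption.
    """
    running_totals = accumulate(token_nll)
    return [total / n for n, total in enumerate(running_totals, start=1)]


# Hypothetical per-token NLL values, not taken from the chart.
example = cumulative_average_nll([2.0, 1.0, 0.6, 0.4])
print(example)
```

Because each point averages over all earlier tokens, the curve is smoother than the per-token NLL and reacts slowly to changes late in the sequence.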

### Components/Axes

**Left Chart (Long Documents):**

* **Title:** Cumulative Average NLL for Long Documents. R² = 0.991.

* **Y-axis:** Negative Log-Likelihood

* **X-axis:** Sequence position (128, 256, 512, 1K, 2K, 4K, 8K, 16K, 32K, 64K, 128K, 256K, 512K, 1M)

* **Legend (Top-Right):**
    * Power law fit (dashed yellow line)
    * Gemini 1.5 Flash (red markers)
    * Gemini 1.5 Flash-8B (yellow markers)

**Right Chart (Code):**

* **Title:** Cumulative Average NLL for Code. R² = 0.998.

* **Y-axis:** Negative Log-Likelihood

* **X-axis:** Sequence position (128, 256, 512, 1K, 2K, 4K, 8K, 16K, 32K, 64K, 128K, 256K, 512K, 1M, 2M)

* **Legend (Top-Right):**
    * Power law fit (dashed yellow line)
    * Gemini 1.5 Flash (red markers)
    * Gemini 1.5 Flash-8B (yellow markers)

### Detailed Analysis

**Left Chart (Long Documents):**

* **Gemini 1.5 Flash (Red):** The NLL decreases as the sequence position increases. Error bars are present, indicating variability in the data.
    * 128: ~1.25
    * 1K: ~0.75
    * 1M: ~0.35

* **Gemini 1.5 Flash-8B (Yellow):** The NLL also decreases as the sequence position increases, and is generally higher than Gemini 1.5 Flash at the positions read here.
    * 128: ~1.75
    * 1K: ~0.9
    * 1M: ~0.4

* **Power Law Fit (Yellow Dashed):** The power law fit line closely follows the trend of the Gemini 1.5 Flash-8B data.

**Right Chart (Code):**

* **Gemini 1.5 Flash (Red):** The NLL decreases as the sequence position increases.
    * 128: ~1.1
    * 1K: ~0.4
    * 2M: ~0.1

* **Gemini 1.5 Flash-8B (Yellow):** The NLL decreases as the sequence position increases, and is generally higher than Gemini 1.5 Flash at the positions read here.
    * 128: ~1.5
    * 1K: ~0.5
    * 2M: ~0.15

* **Power Law Fit (Yellow Dashed):** The power law fit line closely follows the trend of the Gemini 1.5 Flash-8B data.

### Key Observations

* In both charts, the Gemini 1.5 Flash-8B model exhibits higher NLL values than the Gemini 1.5 Flash model at the positions read above. Because lower NLL is better, this indicates that Gemini 1.5 Flash performs better on this metric.

* The NLL decreases with increasing sequence position for both models and both data types (Long Documents and Code).

* The power law fit provides a good approximation of the NLL trend, as indicated by the high R² values (0.991 for Long Documents and 0.998 for Code).

* The error bars on the "Long Documents" chart suggest more variability in the NLL for that dataset compared to the "Code" dataset.

### Interpretation

The charts indicate that Gemini 1.5 Flash achieves lower negative log-likelihood than Gemini 1.5 Flash-8B for both long documents and code, based on the approximate values read from the charts (for example, ~1.25 versus ~1.75 at position 128 for long documents). The decreasing NLL with increasing sequence position suggests that both models predict subsequent tokens more accurately as the available context grows. The high R² values indicate that a power law function models the relationship between sequence position and NLL well. Flash-8B is the smaller variant of Flash, so its higher NLL is consistent with the usual effect of model size on loss. The error bars in the "Long Documents" chart may indicate that long documents have more inherent variability or complexity than code, leading to greater uncertainty in the NLL.
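To make the power law fit and its R² concrete, here is a minimal sketch. It assumes a pure power law of the form `nll ≈ a * position**(-b)` fitted by least squares in log-log space; the chart does not state the functional form or fitting method actually used, so `fit_power_law` and `r_squared` are illustrative helpers only. The sample points are the approximate Long Documents readings for Gemini 1.5 Flash given above, with 1K taken as 1,024 and 1M as 1,048,576 (an assumption based on the doubling axis labels).

```python
import math


def fit_power_law(positions, nll):
    """Least-squares fit of nll ~ a * position**(-b) in log-log space."""
    xs = [math.log(p) for p in positions]
    ys = [math.log(v) for v in nll]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (a, b)


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Approximate readings from this section (not exact chart data).
positions = [128, 1024, 1_048_576]
nll = [1.25, 0.75, 0.35]

a, b = fit_power_law(positions, nll)
predicted = [a * p ** (-b) for p in positions]
fit_quality = r_squared(nll, predicted)
print(f"a={a:.3f}, b={b:.3f}, R^2={fit_quality:.3f}")
```

A three-point fit like this will not reproduce the R² values in the chart titles, which were presumably computed over all plotted positions and possibly with a different functional form.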


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: Cumulative Average Negative Log-Likelihood (NLL) vs. Sequence Position

### Overview

The image presents two charts displaying Cumulative Average Negative Log-Likelihood (NLL) as a function of Sequence Position. The left chart focuses on "Long Documents" with an R-squared value of 0.991, while the right chart focuses on "Code" with an R-squared value of 0.998. Both charts compare the performance of "Gemini 1.5 Flash" and "Gemini 1.5 Flash-8B" models against a "Power law fit". The data is presented as scatter plots with error bars, overlaid with a smoothed curve representing the power law fit.

### Components/Axes

* **X-axis (Both Charts):** Sequence position, labeled with values 128, 256, 512, 1K, 2K, 4K, 8K, 16K, 32K, 64K, 128K, 256K, 512K, and 1M; the right chart extends to 2M. "K" denotes thousands, and "M" denotes millions.

* **Y-axis (Both Charts):** Negative Log-Likelihood, with no explicit scale provided, but values appear to range from approximately 2 to 6.

* **Legend (Both Charts):** Located in the top-right corner.
    * Power law fit (Solid yellow line)
    * Gemini 1.5 Flash (Red scatter points with error bars)
    * Gemini 1.5 Flash-8B (Orange scatter points with error bars)

* **Title (Left Chart):** "Cumulative Average NLL for Long Documents. R² = 0.991."

* **Title (Right Chart):** "Cumulative Average NLL for Code. R² = 0.998."

### Detailed Analysis

**Left Chart (Long Documents):**

* **Power Law Fit:** The yellow line shows a steep downward trend from approximately 5.5 at a sequence position of 128 to approximately 2.2 at a sequence position of 1M.

* **Gemini 1.5 Flash:** The red scatter points exhibit a similar downward trend, but with more variability.
    * At 128: Approximately 5.8 ± 0.3
    * At 256: Approximately 5.2 ± 0.3
    * At 512: Approximately 4.7 ± 0.2
    * At 1K: Approximately 4.2 ± 0.2
    * At 2K: Approximately 3.8 ± 0.2
    * At 4K: Approximately 3.4 ± 0.2
    * At 8K: Approximately 3.0 ± 0.2
    * At 16K: Approximately 2.8 ± 0.2
    * At 32K: Approximately 2.6 ± 0.2
    * At 64K: Approximately 2.4 ± 0.2
    * At 128K: Approximately 2.3 ± 0.2
    * At 256K: Approximately 2.2 ± 0.2
    * At 512K: Approximately 2.2 ± 0.2
    * At 1M: Approximately 2.2 ± 0.2

* **Gemini 1.5 Flash-8B:** The orange scatter points also follow a downward trend and generally have lower NLL values than Gemini 1.5 Flash; in the readings below the gap narrows from about 0.3 at position 128 to about 0.1 at 1M.
    * At 128: Approximately 5.5 ± 0.3
    * At 256: Approximately 4.9 ± 0.2
    * At 512: Approximately 4.4 ± 0.2
    * At 1K: Approximately 4.0 ± 0.2
    * At 2K: Approximately 3.6 ± 0.2
    * At 4K: Approximately 3.2 ± 0.2
    * At 8K: Approximately 2.9 ± 0.2
    * At 16K: Approximately 2.7 ± 0.2
    * At 32K: Approximately 2.5 ± 0.2
    * At 64K: Approximately 2.3 ± 0.2
    * At 128K: Approximately 2.2 ± 0.2
    * At 256K: Approximately 2.1 ± 0.2
    * At 512K: Approximately 2.1 ± 0.2
    * At 1M: Approximately 2.1 ± 0.2

**Right Chart (Code):**

* **Power Law Fit:** The yellow line shows a steep downward trend from approximately 5.5 at a sequence position of 128 to approximately 2.0 at a sequence position of 2M.

* **Gemini 1.5 Flash:** The red scatter points exhibit a similar downward trend, but with more variability.
    * At 128: Approximately 5.4 ± 0.3
    * At 256: Approximately 4.9 ± 0.2
    * At 512: Approximately 4.4 ± 0.2
    * At 1K: Approximately 4.0 ± 0.2
    * At 2K: Approximately 3.6 ± 0.2
    * At 4K: Approximately 3.2 ± 0.2
    * At 8K: Approximately 2.9 ± 0.2
    * At 16K: Approximately 2.6 ± 0.2
    * At 32K: Approximately 2.4 ± 0.2
    * At 64K: Approximately 2.2 ± 0.2
    * At 128K: Approximately 2.1 ± 0.2
    * At 256K: Approximately 2.0 ± 0.2
    * At 512K: Approximately 2.0 ± 0.2
    * At 1M: Approximately 2.0 ± 0.2
    * At 2M: Approximately 2.0 ± 0.2

* **Gemini 1.5 Flash-8B:** The orange scatter points also follow a downward trend and generally have lower NLL values than Gemini 1.5 Flash at shorter positions; in the readings below the two series coincide at approximately 2.0 from 256K onward.
    * At 128: Approximately 5.2 ± 0.3
    * At 256: Approximately 4.7 ± 0.2
    * At 512: Approximately 4.2 ± 0.2
    * At 1K: Approximately 3.8 ± 0.2
    * At 2K: Approximately 3.4 ± 0.2
    * At 4K: Approximately 3.0 ± 0.2
    * At 8K: Approximately 2.7 ± 0.2
    * At 16K: Approximately 2.5 ± 0.2
    * At 32K: Approximately 2.3 ± 0.2
    * At 64K: Approximately 2.1 ± 0.2
    * At 128K: Approximately 2.0 ± 0.2
    * At 256K: Approximately 2.0 ± 0.2
    * At 512K: Approximately 2.0 ± 0.2
    * At 1M: Approximately 2.0 ± 0.2
    * At 2M: Approximately 2.0 ± 0.2

### Key Observations

* Both charts demonstrate a strong negative correlation between sequence position and NLL, indicating that the models perform better (lower NLL) as the sequence length increases.

* The R-squared values (0.991 and 0.998) confirm a very strong fit of the power law to the data.

* Gemini 1.5 Flash-8B reads at or below Gemini 1.5 Flash at every listed position, with the gap narrowing at longer sequence positions.

* The error bars indicate some variability in the NLL values, but the overall trends are clear.
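One way to check how the difference between the two models changes with position is to subtract the readings directly. A minimal sketch, using the approximate Long Documents values transcribed in this section (the variable names are illustrative):

```python
# Approximate Long Documents readings transcribed from the lists above.
positions = ["128", "256", "512", "1K", "2K", "4K", "8K", "16K",
             "32K", "64K", "128K", "256K", "512K", "1M"]
flash = [5.8, 5.2, 4.7, 4.2, 3.8, 3.4, 3.0, 2.8,
         2.6, 2.4, 2.3, 2.2, 2.2, 2.2]
flash_8b = [5.5, 4.9, 4.4, 4.0, 3.6, 3.2, 2.9, 2.7,
            2.5, 2.3, 2.2, 2.1, 2.1, 2.1]

# Gap = Flash minus Flash-8B; a positive gap means Flash-8B reads lower.
# Rounding removes floating-point noise from the one-decimal readings.
gaps = [round(f - e, 2) for f, e in zip(flash, flash_8b)]

for label, gap in zip(positions, gaps):
    print(f"{label:>5}: {gap:+.2f}")
```

With these readings the gap is 0.3 at positions 128 through 512 and 0.1 from 8K onward, and every reading carries an error bar of about ±0.2, so the differences at long positions fall within the stated uncertainty.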

### Interpretation

These charts demonstrate the scaling behavior of the Gemini 1.5 Flash models. The decrease in negative log-likelihood with sequence position indicates that the models predict the next token better as they process more context. The power law fit suggests that this improvement follows a predictable pattern. In the values read above, Gemini 1.5 Flash-8B has lower NLL than Gemini 1.5 Flash at short sequence positions, and the gap narrows as context grows. The high R-squared values indicate that the power law describes performance well across sequence lengths. The difference in R-squared values between the two charts (0.991 vs 0.998) might suggest that the power law fits code slightly better than long documents, or that the code data is less noisy. Overall, the charts provide strong evidence that NLL keeps improving with context, making these models well-suited for processing long sequences of text and code.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## [Line Charts with Error Bars]: Cumulative Average Negative Log-Likelihood (NLL) for Long Documents and Code

### Overview

The image displays two side-by-side line charts comparing the performance of two AI models, "Gemini 1.5 Flash" and "Gemini 1.5 Flash-8B," on two different tasks. The left chart evaluates performance on "Long Documents," and the right chart evaluates performance on "Code." Both charts plot the Cumulative Average Negative Log-Likelihood (NLL) against the sequence position. A "Power law fit" line is overlaid on each chart. The data suggests that as the sequence position increases (i.e., as the models process longer contexts), the average NLL decreases for both models and both tasks, following a power-law trend.

### Components/Axes

* **Chart Titles:**
    * Left Chart: "Cumulative Average NLL for Long Documents. R² = 0.991."
    * Right Chart: "Cumulative Average NLL for Code. R² = 0.998."

* **Y-Axis (Both Charts):** Label is "Negative Log-Likelihood." The scale is logarithmic, with major ticks at approximately 10, 100, and 1000 (inferred from the spacing and typical NLL values). The axis spans from a low value (near 10) to a high value (above 1000).

* **X-Axis (Both Charts):** Label is "Sequence position." The scale is logarithmic, with labeled ticks at: 128, 256, 512, 1K, 2K, 4K, 8K, 16K, 32K, 64K, 128K, 256K, 512K, 1M. The right chart extends to 2M.

* **Legend (Top-Right of each chart):**
    * A dashed yellow line labeled "Power law fit."
    * A red dot with error bars labeled "Gemini 1.5 Flash."
    * A yellow dot with error bars labeled "Gemini 1.5 Flash-8B."

* **Data Series:**
    1. **Gemini 1.5 Flash (Red):** Data points are red circles with vertical red error bars indicating variance or confidence intervals.
    2. **Gemini 1.5 Flash-8B (Yellow):** Data points are yellow circles with vertical yellow error bars.
    3. **Power law fit (Yellow Dashed Line):** A smooth, decreasing curve fitted to the data.

### Detailed Analysis

**Left Chart: Long Documents**

* **Trend Verification:** Both the red (Flash) and yellow (Flash-8B) data series show a clear downward slope from left to right, indicating decreasing NLL with longer sequence positions. The yellow series (Flash-8B) is consistently positioned above the red series (Flash) at every sequence position.

* **Data Points (Approximate NLL values read from the log-scale chart):**
    * **Sequence 128:** Flash ~800, Flash-8B ~1200
    * **Sequence 1K:** Flash ~200, Flash-8B ~350
    * **Sequence 16K:** Flash ~50, Flash-8B ~90
    * **Sequence 256K:** Flash ~20, Flash-8B ~35
    * **Sequence 1M:** Flash ~15, Flash-8B ~25

* The "Power law fit" line (yellow dashed) closely follows the trend of the Flash-8B data points. The R² value of 0.991 indicates an excellent fit of this power-law model to the underlying data trend.

**Right Chart: Code**

* **Trend Verification:** Similar to the left chart, both data series slope downward. The yellow series (Flash-8B) is again consistently above the red series (Flash). The overall NLL values for Code appear lower than for Long Documents at comparable sequence positions.

* **Data Points (Approximate NLL values):**
    * **Sequence 128:** Flash ~400, Flash-8B ~600
    * **Sequence 1K:** Flash ~100, Flash-8B ~180
    * **Sequence 16K:** Flash ~30, Flash-8B ~50
    * **Sequence 256K:** Flash ~12, Flash-8B ~20
    * **Sequence 1M:** Flash ~8, Flash-8B ~14
    * **Sequence 2M:** Flash ~7, Flash-8B ~12 (Data point present only on this chart)

* The "Power law fit" line also tracks the data closely here. The R² value of 0.998 suggests an even stronger fit for the Code task compared to Long Documents.

### Key Observations

1. **Consistent Model Performance Gap:** Across both tasks and all sequence lengths, the "Gemini 1.5 Flash" model (red) achieves a lower Cumulative Average NLL than the "Gemini 1.5 Flash-8B" model (yellow). Lower NLL indicates better model performance (higher likelihood of the data).

2. **Power Law Scaling:** The performance improvement (decrease in NLL) follows a predictable power-law relationship with sequence length, as evidenced by the high R² values (0.991 and 0.998) for the fitted curves.

3. **Task Difficulty:** The NLL values for the "Long Documents" task are systematically higher than those for the "Code" task at equivalent sequence positions. This suggests that, for these models, predicting long documents is a more difficult task (results in lower likelihood) than predicting code.

4. **Diminishing Returns:** The rate of NLL decrease slows dramatically as sequence length increases. The drop from 128 to 1K is massive, while the drop from 256K to 1M is relatively small, illustrating the diminishing returns of additional context.
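The diminishing-returns pattern can be checked with a small calculation. A minimal sketch, using the approximate Long Documents readings for Gemini 1.5 Flash listed above and assuming that 1K = 1,024, 16K = 16,384, 256K = 262,144, and 1M = 1,048,576 (the axis labels double at each tick):

```python
import math

# (sequence position, approximate NLL) pairs read from this section.
points = [
    (128, 800.0),
    (1_024, 200.0),
    (16_384, 50.0),
    (262_144, 20.0),
    (1_048_576, 15.0),
]

segments = []
for (x0, y0), (x1, y1) in zip(points, points[1:]):
    absolute_drop = y0 - y1
    doublings = math.log2(x1 / x0)  # number of context doublings spanned
    segments.append((x0, x1, absolute_drop, absolute_drop / doublings))

for x0, x1, drop, per_doubling in segments:
    print(f"{x0:>9} -> {x1:>9}: drop {drop:6.1f}, {per_doubling:6.1f} per doubling")
```

Normalizing by the number of doublings matters because the listed positions are unevenly spaced; even after that correction, the drop per doubling shrinks at every step in these readings.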

### Interpretation

This data provides a quantitative look at how two related language models scale their performance with context length on two distinct data modalities. The key takeaway is that **longer context consistently leads to better predictive performance (lower NLL), but this improvement follows a diminishing-returns power law.**

The fact that the "Flash" model outperforms the "Flash-8B" model on this specific metric (Cumulative Average NLL) is an important observation. Flash-8B is the smaller variant of Flash, so its higher NLL is consistent with the common expectation that a smaller model has higher loss. The gap persists across all sequence positions, which suggests that longer context does not close the difference between the two models in these charts.

The near-perfect R² values for the power law fits are significant. They imply that the relationship between context length and predictive performance is highly predictable and follows a fundamental scaling law for these models. This allows for reliable extrapolation and benchmarking. The stronger fit for code (R²=0.998) might indicate that code has a more predictable, structured statistical pattern than natural language documents.
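As a worked form of the power-law claim, assume a pure power law with no additive offset; the chart titles do not state the exact functional form, and $a$ and $b$ are illustrative symbols. Under that assumption the relationship is linear in log-log coordinates, and each doubling of the sequence position multiplies the NLL by a constant factor:

```latex
\begin{align}
L(x) &= a\,x^{-b}, \qquad a > 0,\; b > 0 \\
\log L(x) &= \log a - b \log x \\
\frac{L(2x)}{L(x)} &= 2^{-b}
\end{align}
```

Under this assumption the relative improvement per doubling is constant while the absolute improvement shrinks, which matches the diminishing-returns pattern noted in the key observations.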

In summary, the charts demonstrate robust, predictable scaling of model performance with context length, show a consistent advantage for Flash over Flash-8B, and highlight the relative difficulty of modeling natural language versus code.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Cumulative Average NLL for Long Documents and Code

### Overview

The image contains two side-by-side line graphs comparing the **Cumulative Average Negative Log-Likelihood (NLL)** across sequence positions for long documents and code. In both graphs NLL decreases as sequence position increases, and the power law fits are strong (R² = 0.991 and 0.998, respectively). Each graph has three data series: a power law fit (yellow dashed line), Gemini 1.5 Flash (red diamonds), and Gemini 1.5 Flash-8B (yellow squares). The graphs emphasize trends in model performance as sequence length increases.

---

### Components/Axes

- **X-axis (Sequence position)**: Logarithmically spaced values (128, 256, 512, 1K, 2K, 4K, 8K, 16K, 32K, 64K, 128K, 256K, 512K, 1M, 2M).

- **Y-axis (Negative Log-Likelihood)**: Continuous scale from ~0.5 to ~2.5.

- **Legends**:
    - **Power law fit**: Yellow dashed line.
    - **Gemini 1.5 Flash**: Red diamond markers.
    - **Gemini 1.5 Flash-8B**: Yellow square markers.

- **R² values**:
    - Left graph (Long Documents): 0.991.
    - Right graph (Code): 0.998.

---

### Detailed Analysis

#### Left Graph (Long Documents)

- **Power law fit**: Starts at ~1.5 (128) and decreases to ~0.5 (1M), following a smooth, convex curve.

- **Gemini 1.5 Flash**: Starts at ~2.5 (128) and decreases to ~0.6 (1M), with error bars showing variability (~±0.1–0.2).

- **Gemini 1.5 Flash-8B**: Starts at ~1.8 (128) and decreases to ~0.5 (1M), with smaller error bars (~±0.05–0.1).

#### Right Graph (Code)

- **Power law fit**: Starts at ~1.2 (128) and decreases to ~0.4 (2M), slightly steeper than the left graph.

- **Gemini 1.5 Flash**: Starts at ~2.2 (128) and decreases to ~0.5 (2M), with larger error bars (~±0.1–0.3).

- **Gemini 1.5 Flash-8B**: Starts at ~1.6 (128) and decreases to ~0.4 (2M), with smaller error bars (~±0.05–0.1).

---

### Key Observations

1. **Consistent trend**: All data series show decreasing NLL as sequence position increases, indicating improved model performance with longer sequences.

2. **Power law fit**: Smoothly approximates the trend, suggesting a universal scaling behavior.

3. **Gemini 1.5 Flash vs. Flash-8B**:
    - Flash-8B consistently outperforms Flash (lower NLL) across all sequence positions.
    - Flash-8B’s error bars are smaller, indicating more stable performance.

4. **R² values**: Both graphs show near-perfect fits, but the code graph (R² = 0.998) has a slightly tighter correlation.

---

### Interpretation

In these readings, **Gemini 1.5 Flash-8B** outperforms the standard Flash model on both the long documents and code tasks, with lower NLL and smaller error bars. The power law fit suggests that NLL scales predictably with sequence length, with the Gemini data points deviating only slightly from the fitted curve. The high R² values indicate that the fit explains most of the variance in NLL; the code graph’s marginally higher R² indicates a slightly tighter fit there. The error bars highlight variability in Flash’s performance, though the charts do not show its cause. Overall, the graphs underscore the importance of model choice in handling long sequences efficiently.
