## Line Graph: Predicting Final Outcome

### Overview

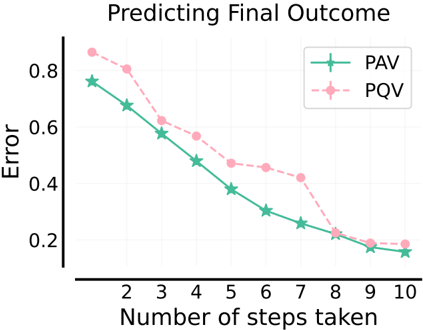

The image is a line graph comparing the error rates of two predictive methods, PAV and PQV, as the number of steps taken increases from 2 to 10. Both methods show a downward trend in error, and PAV has the lower error at every step shown.

### Components/Axes

- **Title**: "Predicting Final Outcome" (top center).

- **X-axis**: "Number of steps taken" (horizontal axis), labeled with integers 2 to 10.

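- **Y-axis**: "Error" (vertical axis), with tick labels from 0.2 to 0.8 in increments of 0.2; the plotted range extends slightly beyond these ticks, since the readings below span ~0.18 to ~0.85.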

- **Legend**: Located in the top-right corner, with:
  - **PAV**: Green line with star markers.
  - **PQV**: Pink dashed line with circle markers.

### Detailed Analysis

- **PAV (Green Line)**:
  - Starts at ~0.78 error at 2 steps.
  - Decreases steadily to ~0.18 error at 10 steps.
  - Key data points (approximate):
    - Step 2: 0.78
    - Step 3: 0.68
    - Step 4: 0.58
    - Step 5: 0.48
    - Step 6: 0.38
    - Step 7: 0.30
    - Step 8: 0.25
    - Step 9: 0.20
    - Step 10: 0.18

- **PQV (Pink Dashed Line)**:
  - Starts at ~0.85 error at 2 steps.
  - Decreases to ~0.19 error at 10 steps.
  - Key data points (approximate):
    - Step 2: 0.85
    - Step 3: 0.78
    - Step 4: 0.65
    - Step 5: 0.55
    - Step 6: 0.48
    - Step 7: 0.43
    - Step 8: 0.38
    - Step 9: 0.25
    - Step 10: 0.19
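The per-step gap between the two curves can be computed directly from these readings. The following is a minimal Python sketch, assuming the approximate values listed above stand in for the underlying data (the graph does not provide exact numbers); the variable names are illustrative only.

```python
# Approximate readings from the graph (eyeballed, not the underlying data).
steps = list(range(2, 11))
pav = [0.78, 0.68, 0.58, 0.48, 0.38, 0.30, 0.25, 0.20, 0.18]
pqv = [0.85, 0.78, 0.65, 0.55, 0.48, 0.43, 0.38, 0.25, 0.19]

# Gap between the curves at each step; positive means PAV has the lower error.
# Rounding to 2 decimals hides floating-point noise from the subtraction.
gaps = {s: round(q - a, 2) for s, a, q in zip(steps, pav, pqv)}

for s, g in gaps.items():
    print(f"step {s:2d}: PQV - PAV = {g:+.2f}")
```

With these readings every gap is positive, the gap peaks at ~0.13 around steps 7–8, and it shrinks to ~0.01 at step 10.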

### Key Observations

1. Both methods show a **monotonic decrease** in error as steps increase.

2. **PAV** has **lower error** than PQV at every step shown.

3. The **gap between PAV and PQV** is not uniform: it widens to ~0.13 around steps 7–8, then narrows sharply to ~0.05 at step 9 and ~0.01 at step 10.

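4. The **rate of decline differs** between the methods: PAV falls fastest (~0.10 per step) from step 2 to step 6 and flattens after step 7, while PQV's largest single-step drops (~0.13 each) occur between steps 3–4 and 8–9.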
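The monotonicity and step-to-step declines can be checked against the approximate readings. This is a minimal Python sketch under the same assumption that the eyeballed values stand in for the real data; `step_drops` and `is_monotonic_decreasing` are hypothetical helpers written for illustration.

```python
# Approximate readings from the graph (eyeballed, not the underlying data).
steps = list(range(2, 11))
pav = [0.78, 0.68, 0.58, 0.48, 0.38, 0.30, 0.25, 0.20, 0.18]
pqv = [0.85, 0.78, 0.65, 0.55, 0.48, 0.43, 0.38, 0.25, 0.19]


def step_drops(errors):
    """Error decrease between consecutive steps (positive = error fell)."""
    return [round(a - b, 2) for a, b in zip(errors, errors[1:])]


def is_monotonic_decreasing(errors):
    """True if every reading is strictly lower than the one before it."""
    return all(b < a for a, b in zip(errors, errors[1:]))


for name, series in (("PAV", pav), ("PQV", pqv)):
    drops = step_drops(series)
    largest = max(drops)
    # Label each interval as "start->end" for the steps where the largest drop occurs.
    where = [f"{s}->{s + 1}" for s, d in zip(steps, drops) if d == largest]
    print(name, "monotonic:", is_monotonic_decreasing(series))
    print("  drops:", drops)
    print("  largest drop:", largest, "at", where)
```

Because the inputs are visual estimates, small differences between drops (for example 0.10 versus 0.13) should be read as indicative rather than exact.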

### Interpretation

The graph shows that increasing the number of steps improves prediction accuracy for both PAV and PQV. PAV's lower error at every step suggests it may be more efficient or robust in this context, although its advantage largely disappears by step 10 (~0.18 vs ~0.19). Between steps 2 and 5, each method reduces error by ~0.30, roughly half of its total reduction over steps 2–10 (~0.60 for PAV, ~0.66 for PQV). This could indicate that effort should prioritize the early steps, where each additional step removes the most error, particularly for PAV. PAV's curve also flattens near the end (only ~0.02 between steps 9 and 10), which may point to diminishing returns beyond 10 steps; the graph does not show that range, so any trade-off between step count and practical utility remains a hypothesis.
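The early-versus-total reduction figures above come from simple differences between readings. Here is a minimal Python sketch, assuming the approximate values at steps 2, 5, and 10 from the data points listed earlier; `readings` and `reduction` are hypothetical names used only for illustration.

```python
# Approximate readings at the steps used in the comparison (eyeballed values).
readings = {
    "PAV": {2: 0.78, 5: 0.48, 10: 0.18},
    "PQV": {2: 0.85, 5: 0.55, 10: 0.19},
}


def reduction(series, start, end):
    """Drop in error from step `start` to step `end`, rounded to 2 decimals."""
    return round(series[start] - series[end], 2)


for name, series in readings.items():
    early = reduction(series, 2, 5)
    total = reduction(series, 2, 10)
    # Fraction of the full 2-10 reduction achieved by step 5.
    share = early / total
    print(f"{name}: steps 2-5 = {early:.2f}, steps 2-10 = {total:.2f}, early share = {share:.0%}")
```

Under these readings the first three steps account for about 50% of PAV's total reduction and about 45% of PQV's.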