## Diagram: Multimodal Foundation Model Architecture

### Overview

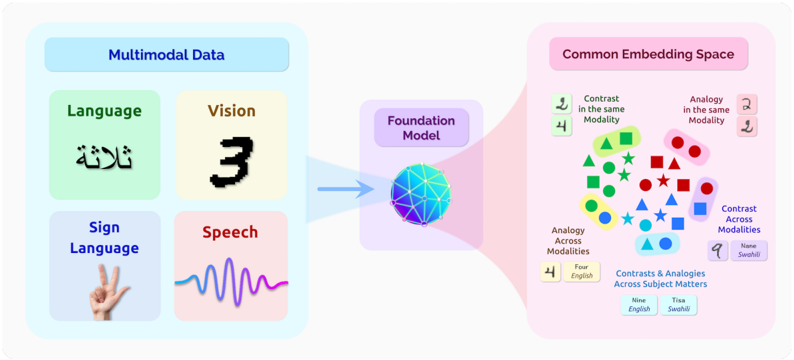

The image is a conceptual diagram illustrating the architecture and function of a multimodal foundation model. It depicts a process where diverse types of input data (multimodal data) are processed by a central foundation model, which then maps them into a unified "Common Embedding Space" where relationships between concepts across different modalities can be analyzed.

### Components

The diagram is divided into three primary regions, flowing from left to right:

1. **Left Region: "Multimodal Data"**

    * A light blue container box with the title **"Multimodal Data"** at the top.

    * It contains four distinct data modality boxes:

        * **Top-Left (Green Box):** Label **"Language"**. Contains an icon of Arabic script (the word "لغة", meaning "language").

        * **Top-Right (Yellow Box):** Label **"Vision"**. Contains an icon of a handwritten numeral "3".

        * **Bottom-Left (Light Purple Box):** Label **"Sign Language"**. Contains an icon of a hand making the "V" or "2" sign.

        * **Bottom-Right (Pink Box):** Label **"Speech"**. Contains an icon of a sound waveform.

2. **Center Region: "Foundation Model"**

    * A purple container box with the title **"Foundation Model"**.

    * Inside is a stylized, glowing blue sphere with a network of interconnected nodes, representing a neural network or complex model.

    * A large, light blue arrow points from the "Multimodal Data" box into this sphere, indicating data ingestion.

3. **Right Region: "Common Embedding Space"**

    * A large, light pink container box with the title **"Common Embedding Space"**.

    * This space is populated with various geometric shapes (circles, squares, triangles, stars) in different colors (green, red, blue, yellow).

    * **Legend/Annotations (Positioned around the shapes):**

        * **Top-Left:** Icon of two green figures with the text **"Contrast in the same Modality"**.

        * **Top-Right:** Icons of a magnifying glass and a document with the text **"Analogy in the same Modality"**.

        * **Center-Right:** Icons of a blue star and a blue circle with the text **"Contrast Across Modalities"**.

        * **Bottom-Left:** An icon of a yellow "4" and the text **"Four English"** with the label **"Analogy Across Modalities"**.

        * **Bottom-Center:** Two boxes with text: **"Nine English"** and **"Tisa Seol"**. The second label is hard to read. "Tisa" is the Swahili word for "nine", so this box most likely gives the Swahili counterpart of "Nine English", which is consistent with the "Swahili" label at the bottom right. The overarching label is **"Contrasts & Analogies Across Subject Matters"**.

        * **Bottom-Right:** An icon of a globe with the text **"Swahili"**.

### Detailed Analysis

* **Data Flow:** The diagram shows a clear pipeline: raw multimodal data (language text, vision images, sign language gestures, speech audio) is fed into a central Foundation Model.

* **Embedding Space Function:** The output of the model is a shared vector space ("Common Embedding Space"). In this space:

    * Concepts are represented by shapes and colors.

    * The spatial proximity and relationships between these shapes encode semantic relationships.

    * The annotations name five kinds of relationship that the space is meant to capture:

        1. **Contrast within a modality** (e.g., distinguishing different words in English).

        2. **Analogy within a modality** (e.g., "king is to queen as man is to woman" within language).

        3. **Contrast across modalities** (e.g., the difference between the sound of "four" and the visual symbol "4").

        4. **Analogy across modalities** (e.g., the concept of "four" is the same whether expressed in English text, spoken English, or the sign language gesture).

        5. **Contrasts & Analogies Across Subject Matters:** This suggests the space can handle more complex, multi-faceted relationships involving different topics or languages (e.g., linking the English word "nine" to its counterpart in another language, such as the Swahili "tisa", as the "Nine English" and "Tisa Seol" boxes suggest).
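The contrast and analogy relationships listed above can be illustrated with a minimal sketch. It assumes cosine similarity as the measure of proximity and vector offsets as the mechanism for analogy, neither of which the diagram specifies. The four-dimensional vectors in `EMBEDDINGS` are hand-made, hypothetical values, not outputs of any real model.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical toy embeddings (assumption, for illustration only).
# Axes: [four-ness, nine-ness, speech offset, image offset]; text has no offset.
EMBEDDINGS = {
    ("four", "text"):   [1.0, 0.0, 0.0, 0.0],
    ("four", "speech"): [1.0, 0.0, 1.0, 0.0],
    ("four", "image"):  [1.0, 0.0, 0.0, 1.0],
    ("nine", "text"):   [0.0, 1.0, 0.0, 0.0],
    ("nine", "speech"): [0.0, 1.0, 1.0, 0.0],
    ("nine", "image"):  [0.0, 1.0, 0.0, 1.0],
}

def analogy(a, b, c):
    """Solve a : b :: c : ? by adding the offset (b - a) to c and
    returning the closest remaining entry by cosine similarity."""
    target = [
        vb - va + vc
        for va, vb, vc in zip(EMBEDDINGS[a], EMBEDDINGS[b], EMBEDDINGS[c])
    ]
    candidates = [key for key in EMBEDDINGS if key not in (a, b, c)]
    return max(candidates, key=lambda key: cosine(EMBEDDINGS[key], target))

# Same concept across modalities vs. different concepts in one modality.
same_concept = cosine(EMBEDDINGS[("four", "text")], EMBEDDINGS[("four", "speech")])
diff_concept = cosine(EMBEDDINGS[("four", "text")], EMBEDDINGS[("nine", "text")])

# Analogy across modalities: text "four" is to spoken "four" as text "nine" is to ?
answer = analogy(("four", "text"), ("four", "speech"), ("nine", "text"))
print(same_concept > diff_concept, answer)  # True ('nine', 'speech')
```

In this toy space, the same concept in two modalities scores higher than two different concepts in one modality, which is the property the "Analogy Across Modalities" annotation points at.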

### Key Observations

* **Multimodality is Core:** The system is designed from the ground up to handle fundamentally different types of data (text, image, gesture, audio) simultaneously.

* **Unified Representation:** The key innovation depicted is the translation of all modalities into a single, common mathematical space (embeddings), enabling direct comparison and reasoning across them.

* **Rich Relational Encoding:** The embedding space isn't just for clustering similar items; it's structured to preserve specific types of relationships (contrast, analogy) both within and across the original data types.

* **Language Agnostic & Cross-Lingual:** The inclusion of Arabic script, English examples, and a Swahili label (along with what appears to be "tisa", the Swahili word for "nine") indicates the model's intended capability to work across human languages.
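The "Unified Representation" observation can be sketched as modality-specific projections into one shared space. This is only one way such a mapping could be built: the diagram does not say how the foundation model does it. The names `SHARED_DIM`, `PROJECTIONS`, and `embed`, along with the matrix values, are hypothetical. In a real model the projections would be learned rather than written by hand.

```python
import math

SHARED_DIM = 2  # hypothetical size of the common embedding space

# Hypothetical projection matrices, one per modality (assumption).
# Each has SHARED_DIM rows; the column count matches that modality's feature size.
PROJECTIONS = {
    "language": [[1.0, 0.0, 0.0],
                 [0.0, 1.0, 0.0]],        # 3-d text features
    "speech":   [[0.5, 0.5, 0.0, 0.0],
                 [0.0, 0.0, 0.5, 0.5]],   # 4-d audio features
}

def embed(modality, features):
    """Project raw features into the shared space and L2-normalize them."""
    matrix = PROJECTIONS[modality]
    if any(len(row) != len(features) for row in matrix):
        raise ValueError(f"unexpected feature size for modality {modality!r}")
    projected = [sum(w * x for w, x in zip(row, features)) for row in matrix]
    norm = math.sqrt(sum(p * p for p in projected))
    return [p / norm for p in projected]

def similarity(u, v):
    """Dot product; equals cosine similarity for unit-length vectors."""
    return sum(a * b for a, b in zip(u, v))

# Inputs of different sizes end up as comparable vectors of length SHARED_DIM.
text_vec = embed("language", [2.0, 0.0, 5.0])
speech_vec = embed("speech", [1.0, 3.0, 0.0, 0.0])
print(text_vec, speech_vec, similarity(text_vec, speech_vec))
```

Because both outputs have the same dimensionality and unit length, a single dot product compares a text input with a speech input directly, even though the raw features had different sizes.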

### Interpretation

This diagram illustrates a central idea in modern multimodal AI: a sufficiently powerful foundation model can learn a **unified semantic representation** in which the meaning of a concept (e.g., the number "4", the concept of "language") is disentangled from its specific manifestation (text, speech, image, sign). In Peircean semiotic terms, the model learns to interpret different "signs" (the various modalities) as pointing to the same underlying "object" (the concept).

The practical implication is that such a model could perform tasks that require cross-modal understanding: describing an image in sign language, finding a video clip that matches a textual description, translating speech in one language to text in another while preserving nuance, or answering a question by synthesizing information from a diagram and a paragraph. The "Common Embedding Space" is the crucial innovation that makes this fluid translation and reasoning possible, moving beyond models that are siloed into a single data type. The inclusion of "Subject Matters" hints at the model's potential for complex, knowledge-grounded reasoning that transcends simple pattern matching.
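One of the tasks mentioned above, finding a video clip that matches a textual description, reduces to nearest-neighbor search once both sides live in the same space. The sketch below assumes cosine similarity as the ranking score. The clip names and vectors in `VIDEO_INDEX`, and the query vector, are hypothetical stand-ins for embeddings a real model would produce.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def retrieve(query_vec, index, top_k=1):
    """Return the names of the top_k index entries most similar to the query."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine(query_vec, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:top_k]]

# Hypothetical video-clip embeddings in the shared space (assumption).
VIDEO_INDEX = {
    "clip_dog_running.mp4":   [0.9, 0.1, 0.0],
    "clip_cooking_pasta.mp4": [0.0, 0.2, 0.9],
    "clip_city_traffic.mp4":  [0.1, 0.9, 0.1],
}

# Hypothetical embedding of the text query "a dog running in a park".
text_query = [0.8, 0.2, 0.1]

best = retrieve(text_query, VIDEO_INDEX)
top_two = retrieve(text_query, VIDEO_INDEX, top_k=2)
print(best)     # ['clip_dog_running.mp4']
print(top_two)  # ['clip_dog_running.mp4', 'clip_city_traffic.mp4']
```

The search code never needs to know which modality produced a vector, which is the practical benefit of a common embedding space. At scale, the exhaustive sort would typically be replaced by an approximate nearest-neighbor index.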