## Diagram: AI System Lifecycle Phases

### Overview

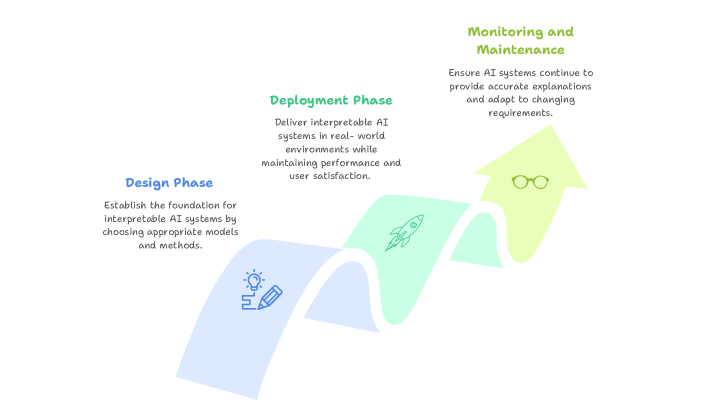

The image is a process flow diagram illustrating three sequential phases in the lifecycle of developing and maintaining interpretable AI systems. The diagram uses a single, continuous, upward-curving arrow divided into three colored segments to represent the progression from initial design to ongoing maintenance.

### Components/Axes

The diagram consists of three primary components, each associated with a specific phase and color:

1. **Design Phase (Bottom-Left Segment)**
   * **Color:** Light blue
   * **Title Text:** "Design Phase" (in blue font)
   * **Description Text:** "Establish the foundation for interpretable AI systems by choosing appropriate models and methods."
   * **Icon:** A line drawing of a lightbulb (representing an idea) with a pencil (representing design/creation).

2. **Deployment Phase (Middle Segment)**
   * **Color:** Light green
   * **Title Text:** "Deployment Phase" (in green font)
   * **Description Text:** "Deliver interpretable AI systems in real-world environments while maintaining performance and user satisfaction."
   * **Icon:** A line drawing of a rocket ship (representing launch and delivery).

3. **Monitoring and Maintenance (Top-Right Segment)**
   * **Color:** Light yellow
   * **Title Text:** "Monitoring and Maintenance" (in olive green font)
   * **Description Text:** "Ensure AI systems continue to provide accurate explanations and adapt to changing requirements."
   * **Icon:** A line drawing of a pair of eyeglasses (representing observation and oversight).

**Flow and Spatial Layout:** The arrow originates at the bottom-left with the "Design Phase," curves upward through the middle "Deployment Phase," and terminates at the top-right with "Monitoring and Maintenance." This spatial arrangement visually communicates a forward and upward progression through time or stages of maturity.

### Detailed Analysis

The diagram is purely conceptual and does not contain numerical data, charts, or axes. It presents a high-level, three-stage model for AI system management.

* **Phase 1 (Design):** Focuses on foundational choices: selecting models and methods that prioritize interpretability from the outset.

* **Phase 2 (Deployment):** Focuses on implementation: taking the designed system into real-world use while maintaining both performance and user satisfaction.

* **Phase 3 (Monitoring & Maintenance):** Focuses on sustainability: ongoing oversight to ensure the system's explanations remain accurate and the system itself can adapt to changing requirements.
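The three-stage model can be written down as a small ordered data structure. The following is a minimal sketch, not something shown in the diagram: the names `Phase`, `GOALS`, and `next_phase` are hypothetical, and only the phase titles and goal texts are taken from the diagram's labels.

```python
from enum import Enum
from typing import Optional


class Phase(Enum):
    """The three lifecycle phases, in the order the diagram shows them."""
    DESIGN = "Design Phase"
    DEPLOYMENT = "Deployment Phase"
    MONITORING = "Monitoring and Maintenance"


# Goal text for each phase, quoted from the diagram's description text.
GOALS = {
    Phase.DESIGN: (
        "Establish the foundation for interpretable AI systems "
        "by choosing appropriate models and methods."
    ),
    Phase.DEPLOYMENT: (
        "Deliver interpretable AI systems in real-world environments "
        "while maintaining performance and user satisfaction."
    ),
    Phase.MONITORING: (
        "Ensure AI systems continue to provide accurate explanations "
        "and adapt to changing requirements."
    ),
}


def next_phase(current: Phase) -> Optional[Phase]:
    """Return the phase that follows `current`, or None after the last one."""
    ordered = list(Phase)  # Enum members iterate in definition order
    index = ordered.index(current)
    return ordered[index + 1] if index + 1 < len(ordered) else None


if __name__ == "__main__":
    phase: Optional[Phase] = Phase.DESIGN
    while phase is not None:
        print(f"{phase.value}: {GOALS[phase]}")
        phase = next_phase(phase)
```

Returning `None` after the final phase mirrors the arrow's single terminus in the diagram; a team that treats monitoring as feeding back into design would model that loop explicitly instead.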

### Key Observations

* The process is depicted as linear and sequential, with each phase leading directly into the next.

* The upward curve of the arrow suggests progress, improvement, or increasing system maturity over time.

* Each phase is given equal visual weight, implying they are of comparable importance in the overall lifecycle.

* The icons are simple, universal symbols that reinforce the core activity of each phase (ideation, launch, observation).

### Interpretation

This diagram presents a framework for responsible AI development that emphasizes **interpretability as a continuous concern**, not just a design-time feature. It argues that building a trustworthy AI system requires:

1. **Proactive Foundation:** Interpretability must be engineered in from the start ("Design Phase").

2. **Practical Validation:** The system must prove its value and usability in the real world ("Deployment Phase").

3. **Adaptive Governance:** The work isn't done at launch; systems require vigilant monitoring and updates to remain accurate and relevant ("Monitoring and Maintenance").

The model highlights a critical insight: the interpretability of an AI system is not a static property. It can degrade if the system is not maintained or if its operating environment changes. The lifecycle therefore continues beyond deployment and requires ongoing resources for monitoring and adaptation. This framework is likely intended for project managers, AI engineers, and stakeholders who need to structure their development and operational processes.
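One way to make "explanations can degrade" concrete is to compare the explanations a system produces now against a stored baseline. The sketch below is an illustration under stated assumptions, not a method the diagram prescribes: it assumes explanations are available as per-feature attribution vectors, and the function names and the `0.9` threshold are hypothetical choices.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length attribution vectors."""
    if len(a) != len(b):
        raise ValueError("attribution vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as sharing no direction
    return dot / (norm_a * norm_b)


def explanations_drifted(
    baseline: Sequence[float],
    current: Sequence[float],
    threshold: float = 0.9,  # hypothetical cutoff; tune per system
) -> bool:
    """Flag drift when current attributions diverge from the baseline."""
    return cosine_similarity(baseline, current) < threshold


if __name__ == "__main__":
    # Illustrative numbers only, not measurements from any real system.
    baseline = [0.5, 0.3, 0.2]
    current = [0.1, 0.2, 0.7]
    print(explanations_drifted(baseline, current))
```

A check like this would run periodically during the "Monitoring and Maintenance" phase; a flagged result is a prompt for human review, not proof that the explanations have become wrong.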