## Screenshot: Reinforcement Learning Simulation Environment

### Overview

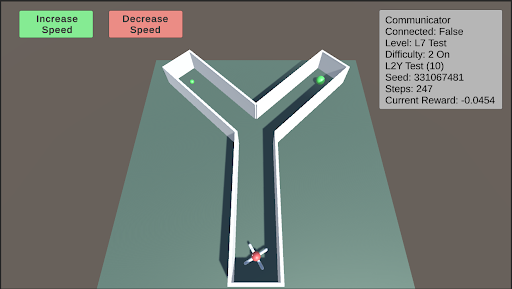

The image is a screenshot of a 3D simulation environment, likely used for training or testing a reinforcement learning agent. The scene features a Y-shaped maze or pathway on a flat plane, with a multi-colored agent at the entrance and two green spherical targets in the upper branches. User interface (UI) elements for controlling simulation speed and displaying environment status are overlaid on the scene.

### Components

The image can be segmented into three primary regions:

1. **Header/UI Overlay (Top of Screen):**

    * **Top-Left:** Two rectangular buttons.

        * Left Button: Green background, white text: "Increase Speed".

        * Right Button: Red background, white text: "Decrease Speed".

    * **Top-Right:** A semi-transparent grey panel titled "Communicator". It contains the following key-value pairs of text:

        * `Communicator` (Title)

        * `Connected: False`

        * `Level: L7 Test`

        * `Difficulty: 2 On`

        * `L2Y Test (10)`

        * `Seed: 1234567481`

        * `Steps: 247`

        * `Current Reward: -0.0454`

2. **Main Scene (Center):**

    * **Environment:** A flat, teal-colored ground plane under a grey sky.

    * **Maze Structure:** A white, Y-shaped pathway with raised edges. The stem of the "Y" is at the bottom center, branching into two arms that extend towards the top-left and top-right of the scene.

    * **Agent:** A multi-colored (red, blue, white, black) object resembling a simple robot or vehicle, positioned at the very start of the maze's stem (bottom-center).

    * **Targets:** Two identical, bright green spheres. One is located in the left branch of the maze, and the other is in the right branch.

3. **Footer/Background:** The lower portion of the image shows the continuation of the teal ground plane and the grey background/skybox. No additional UI or text is present here.

### Detailed Analysis

* **Spatial Layout:** The UI elements are anchored to the top corners of the viewport. The simulation scene is rendered in a perspective view, with the maze receding into the distance. The agent is in the foreground, and the targets are in the mid-ground.

* **State Information:** The "Communicator" panel provides a snapshot of the simulation's internal state:

    * The communicator reports no active external connection (`Connected: False`).

    * The task is identified as "L7 Test" with a difficulty setting of "2 On".

    * A specific test variant is noted: "L2Y Test (10)".

    * A seed (`1234567481`) is displayed, which suggests the environment's randomness can be reproduced. The image alone does not confirm that the simulation is fully deterministic.

    * The episode has been running for `247` steps.

    * The value shown as `Current Reward` is negative (`-0.0454`), suggesting the agent may have incurred penalties (e.g., for time elapsed or inefficient movement). The panel does not state whether this value is cumulative or per-step.
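For downstream analysis, the panel text listed above can be turned into typed values. The following is a minimal sketch, assuming the lines have already been transcribed from the screenshot as plain strings; the function name `parse_panel` and the split into `fields` and `labels` are illustrative choices, not part of the simulation tool.

```python
def parse_panel(lines):
    """Split 'Key: Value' lines into a dict; keep colon-free lines as free-form labels."""
    fields, labels = {}, []
    for line in lines:
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
        else:
            # Lines such as "L2Y Test (10)" have no key, so they are kept as-is.
            labels.append(line.strip())
    return fields, labels


# Text transcribed from the "Communicator" panel in the screenshot.
panel_text = [
    "Connected: False",
    "Level: L7 Test",
    "Difficulty: 2 On",
    "L2Y Test (10)",
    "Seed: 1234567481",
    "Steps: 247",
    "Current Reward: -0.0454",
]

fields, labels = parse_panel(panel_text)
steps = int(fields["Steps"])
reward = float(fields["Current Reward"])
connected = fields["Connected"] == "True"
```

Note that `L2Y Test (10)` is not a key-value pair, so the sketch stores it separately rather than guessing a key for it.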

### Key Observations

1. **Task Structure:** The Y-shaped maze presents a classic decision-making scenario for an AI agent: choose the left or right path to reach a goal.

2. **Agent State:** The agent is at the starting position even though the panel already reports 247 steps. This is more consistent with an agent that has made little progress, or with a step counter that persists across resets, than with the first step of a fresh episode.

3. **Reward Signal:** The negative `Current Reward` shows that the agent's actions up to step 247 have not produced a net positive outcome, possibly because of a small per-step penalty.

4. **Control Interface:** The presence of "Increase/Decrease Speed" buttons indicates this is an interactive visualization tool, allowing a human observer to control the pace of the simulation for analysis.
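The decision structure and the role of the seed described above can be illustrated with a toy abstraction. This is a sketch only: the maze lengths, the penalty and goal values, the random policy, and the function `run_episode` are all hypothetical and are not taken from the simulation shown in the screenshot.

```python
import random

# All constants below are hypothetical values chosen for illustration.
STEM_LENGTH = 5        # steps needed to walk the stem of the "Y"
BRANCH_LENGTH = 3      # steps needed to walk either arm
STEP_PENALTY = -0.001  # small negative reward applied on every step
GOAL_REWARD = 1.0      # reward for reaching a green sphere


def run_episode(seed, max_steps=50):
    """Roll out a random policy in a 1-D abstraction of the Y-maze.

    Returns (chosen_branch, steps_taken, total_reward).
    """
    rng = random.Random(seed)  # private generator so the seed fully fixes the rollout
    position = 0
    branch = None
    total = 0.0
    for step in range(1, max_steps + 1):
        total += STEP_PENALTY
        if position < STEM_LENGTH:
            # On the stem, the random policy advances half of the time.
            if rng.random() < 0.5:
                position += 1
        else:
            # At the junction, pick an arm once, then keep walking along it.
            if branch is None:
                branch = rng.choice(["left", "right"])
            position += 1
            if position == STEM_LENGTH + BRANCH_LENGTH:
                total += GOAL_REWARD
                return branch, step, total
    return branch, max_steps, total


first = run_episode(seed=1234567481)
second = run_episode(seed=1234567481)
```

Because each call builds its own generator from the same seed, `first` and `second` are identical, which is the sense in which a displayed seed makes a run reproducible. Until a goal is reached, the running total stays negative, matching the kind of value shown in the panel.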

### Interpretation

This image captures a snapshot of a reinforcement learning experiment in progress. The setup appears designed to test an agent's ability to navigate a simple spatial decision problem (the Y-maze). The meaning of the "L2Y Test" label is not evident from the image; it may name this particular Y-maze configuration.

The data suggests the following narrative: The agent has been operating for 247 time steps within the "L7 Test" environment at difficulty level 2. The negative `Current Reward` suggests that it has not yet reached a goal (the green spheres) in that time. The reward function likely includes a small negative penalty per time step to encourage efficiency. The agent's next critical decision will be to choose which branch of the "Y" to explore. Whether that choice leads to a target and a positive reward will determine if the `Current Reward` value becomes positive in subsequent steps.
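If the displayed value is read as a cumulative sum of a constant per-step penalty (an assumption the panel does not confirm), the implied penalty per step follows from a single division. The helper name `implied_step_penalty` is hypothetical.

```python
def implied_step_penalty(current_reward, steps):
    """Average reward per step, assuming the reward is a cumulative sum over `steps`."""
    if steps <= 0:
        raise ValueError("steps must be positive")
    return current_reward / steps


# Values read from the "Communicator" panel.
penalty = implied_step_penalty(current_reward=-0.0454, steps=247)
print(f"implied per-step penalty: {penalty:.6f}")
```

Under that assumption the penalty is roughly -0.00018 per step. If the agent collected other rewards or penalties along the way, or if the value is not cumulative, this estimate does not hold.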

The "Connected: False" status suggests that no external system, such as a training process, is attached at this moment. The agent may therefore be running a locally loaded policy or be under manual control; the image does not show which. This screenshot serves as a diagnostic view for researchers to monitor the agent's progress and the environment configuration.