## Histograms: Comparison of Verification Length Distributions

### Overview

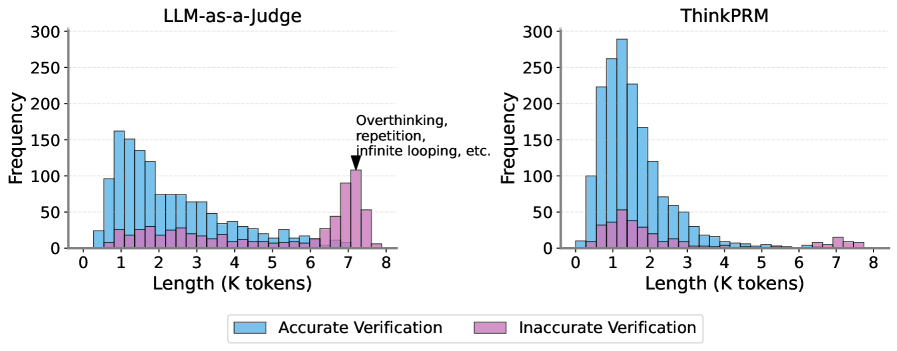

The image displays two side-by-side histograms comparing the distribution of verification lengths (in thousands of tokens) for two different methods: "LLM-as-a-Judge" and "ThinkPRM". Each histogram plots frequency against length, with data segmented into "Accurate Verification" (blue) and "Inaccurate Verification" (pink). An annotation highlights a specific pattern in the left chart.

### Components/Axes

* **Titles:**

* Left Chart: "LLM-as-a-Judge"

* Right Chart: "ThinkPRM"

* **X-Axis (Both Charts):** Label: "Length (K tokens)". Scale: Linear, from 0 to 8, with major tick marks at every integer (0, 1, 2, ..., 8).

* **Y-Axis (Both Charts):** Label: "Frequency". Scale: Linear, from 0 to 300, with major tick marks at intervals of 50 (0, 50, 100, ..., 300).

* **Legend:** Positioned at the bottom center of the entire image, below both charts.

* Blue square: "Accurate Verification"

* Pink square: "Inaccurate Verification"

* **Annotation:** Located in the top-right quadrant of the "LLM-as-a-Judge" chart. A black arrow points to the peak of the pink bars at approximately 7K tokens. The text reads: "Overthinking, repetition, infinite looping, etc."

### Detailed Analysis

**1. LLM-as-a-Judge (Left Chart):**

* **Accurate Verification (Blue):** The distribution is right-skewed. The highest frequency (approximately 160-170) occurs at a length of ~1K tokens. The frequency then steadily declines as length increases, approaching near-zero by 8K tokens.

* **Inaccurate Verification (Pink):** The distribution is bimodal. There is a small, low-frequency cluster between 0.5K and 3K tokens (peaking around 25-30). A second, much more prominent cluster appears between 6K and 8K tokens, with a sharp peak at ~7K tokens reaching a frequency of approximately 100-110. This peak is explicitly annotated as representing "Overthinking, repetition, infinite looping, etc."

**2. ThinkPRM (Right Chart):**

* **Accurate Verification (Blue):** The distribution is strongly right-skewed with a very high, sharp peak. The maximum frequency (approximately 280-290) occurs at ~1K tokens. The frequency drops off rapidly after 1.5K tokens and becomes very low (below 20) beyond 4K tokens.

* **Inaccurate Verification (Pink):** The frequencies are low across the entire range relative to the accurate peak. There is a minor, broad elevation between 0.5K and 2.5K tokens (peaking around 50) and a very slight increase around 7K tokens (peaking below 20). No pronounced spike appears at the higher lengths.
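The per-bin frequencies described above could be computed from raw verification records along the following lines. This is a minimal sketch, not the procedure used to produce the figure: the record format (`(length_in_tokens, is_accurate)` pairs), the function name `length_histogram`, and the 0.5K bin width are all assumptions made for illustration.

```python
def length_histogram(records, bin_width_k=0.5, max_k=8.0):
    """Count verifications per length bin, split by accuracy.

    records: iterable of (length_in_tokens, is_accurate) pairs.
             This record format is hypothetical, chosen for illustration.
    Returns (accurate_counts, inaccurate_counts), one entry per bin.
    """
    n_bins = int(max_k / bin_width_k)
    accurate = [0] * n_bins
    inaccurate = [0] * n_bins
    for length_tokens, is_accurate in records:
        length_k = length_tokens / 1000.0  # convert tokens to K tokens
        # Lengths at or beyond max_k are clamped into the last bin.
        idx = min(int(length_k / bin_width_k), n_bins - 1)
        if is_accurate:
            accurate[idx] += 1
        else:
            inaccurate[idx] += 1
    return accurate, inaccurate
```

Plotting the two returned lists as overlaid bars, one panel per method, would give charts of the same form as the ones described here.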

### Key Observations

1. **Peak Location & Magnitude:** Both methods show the highest frequency of accurate verifications at short lengths (~1K tokens). However, the peak for ThinkPRM is substantially higher (~290 vs. ~170) and narrower, suggesting a stronger concentration of accurate results at that length.

2. **Inaccurate Verification Pattern:** The most striking difference is in the distribution of inaccurate verifications. LLM-as-a-Judge shows a major secondary mode at high token lengths (~7K), which is explicitly linked to failure modes like overthinking. ThinkPRM shows no such pronounced secondary mode; its inaccurate verifications are low and spread thinly.

3. **Length Efficiency:** The ThinkPRM distribution for accurate verifications is more concentrated at the lower end of the length scale. The LLM-as-a-Judge distribution has a longer "tail" of accurate verifications extending to higher token counts, but at much lower frequencies.

### Interpretation

The data suggests a fundamental difference in the behavior and reliability of the two verification methods.

* **LLM-as-a-Judge** appears prone to a specific failure mode where inaccurate verifications are strongly associated with very long outputs (6K-8K tokens). The annotation implies this is due to unproductive loops or redundancy in the model's reasoning process. While it produces accurate verifications across a wide range of lengths, its inefficiency and the clear pattern of failure at high lengths are notable drawbacks.

* **ThinkPRM** demonstrates a more controlled and efficient profile. It achieves a higher density of accurate verifications at short lengths and, crucially, avoids the "overthinking" failure mode seen in LLM-as-a-Judge. The near-absence of a high-length spike for inaccurate verifications indicates it is more robust against generating excessively long, erroneous outputs.

In short, the charts present ThinkPRM as the more precise and reliable verification method: it concentrates accurate results at short, efficient lengths and largely avoids the long, inaccurate outputs that affect LLM-as-a-Judge. For LLM-as-a-Judge, excessive length is visibly associated with inaccuracy; for ThinkPRM, that association is largely absent.
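Because the histograms show raw counts, one way to make the link between length and inaccuracy explicit is to compute, for each length bin, the share of verifications that are inaccurate. The sketch below assumes per-bin counts are already available as two equal-length lists; the function name `inaccurate_share` and the numbers in the usage example are illustrative only and are not read from the figure.

```python
def inaccurate_share(accurate_counts, inaccurate_counts):
    """Return the fraction of inaccurate verifications in each length bin.

    Bins with no verifications yield None rather than a misleading 0.0.
    """
    shares = []
    for n_accurate, n_inaccurate in zip(accurate_counts, inaccurate_counts):
        total = n_accurate + n_inaccurate
        shares.append(n_inaccurate / total if total else None)
    return shares


# Illustrative toy counts for three bins (short, long, empty).
shares = inaccurate_share([90, 5, 0], [10, 45, 0])
```

A share that rises sharply in the long-length bins for one method but stays flat for the other would support the reading given above.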