## Diagram: Causal Inference and Learning Agent Illustration

### Overview

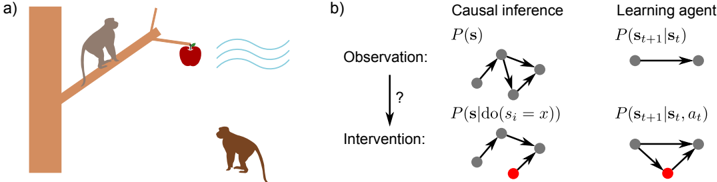

The image is a two-part technical diagram labeled **a)** and **b)**. Part **a)** is a pictorial illustration depicting a scenario involving monkeys, an apple, and a tree. Part **b)** is a conceptual diagram contrasting "Causal inference" and "Learning agent" models under "Observation" and "Intervention" conditions, using directed graphs and mathematical notation.

### Components/Axes

**Part a) - Pictorial Scene:**

* **Elements:** A brown tree trunk (left), a brown branch extending diagonally upwards to the right, a grey monkey sitting on the branch, a red apple hanging from the branch, three light blue wavy lines to the right of the apple, and a brown monkey on the ground below.

* **Spatial Layout:** The tree trunk is on the far left. The branch extends from the trunk towards the upper right. The grey monkey is seated on the branch, facing right. The apple hangs from the branch's end. The wavy lines are positioned to the right of the apple, suggesting a signal or sound emanating from it. The brown monkey is on the ground in the lower right quadrant, looking up towards the apple and branch.

**Part b) - Conceptual Diagram:**

* **Main Headings:** Two columns titled **"Causal inference"** (left) and **"Learning agent"** (right).

* **Row Labels:** Two rows labeled **"Observation:"** (top) and **"Intervention:"** (bottom), with a downward arrow labeled **"?"** between them.

* **Mathematical Notation:**

    * Under "Causal inference": **P(s)** for Observation, **P(s|do(s_i = x))** for Intervention.

    * Under "Learning agent": **P(s_{t+1}|s_t)** for Observation, **P(s_{t+1}|s_t, a_t)** for Intervention.

* **Graphical Elements (Directed Graphs):**

    * **Causal inference - Observation:** A graph with 5 grey nodes. Arrows show a complex web of influence: one central node has arrows pointing to three others, and there are additional connections between nodes.

    * **Causal inference - Intervention:** A similar graph structure, but one node (bottom center) is colored **red**. The arrows pointing *into* this red node are removed, indicating an intervention that fixes its state. Arrows *from* the red node to others remain.

    * **Learning agent - Observation:** A simple graph with two grey nodes connected by a single rightward arrow: **s_t → s_{t+1}**.

    * **Learning agent - Intervention:** The same two-node structure, but a third node (red, labeled **a_t**) is introduced below. Arrows point from both **s_t** and **a_t** to **s_{t+1}**.
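The change between the two causal inference graphs can be sketched as a small operation on a parent map. This is a minimal illustration, not a reconstruction of the figure: the node names `s1` to `s5` and the edge list are hypothetical, since the description above does not give the exact edges.

```python
from typing import Dict, Set

# Each node maps to the set of its parents (sources of incoming arrows).
# Hypothetical 5-node graph; the edges are illustrative, not read off the figure.
Graph = Dict[str, Set[str]]

observational: Graph = {
    "s1": set(),
    "s2": {"s1"},
    "s3": {"s1", "s2"},
    "s4": {"s1", "s3"},
    "s5": {"s3"},
}


def intervene(graph: Graph, node: str) -> Graph:
    """Return a copy of `graph` with every arrow into `node` removed."""
    if node not in graph:
        raise KeyError(node)
    mutilated = {child: set(parents) for child, parents in graph.items()}
    mutilated[node] = set()  # the intervened node no longer listens to its parents
    return mutilated


def children(graph: Graph, node: str) -> Set[str]:
    """Nodes that receive an arrow from `node`."""
    return {child for child, parents in graph.items() if node in parents}


interventional = intervene(observational, "s3")
```

In this sketch `interventional["s3"]` is empty while `children(interventional, "s3")` still contains `s4` and `s5`, mirroring the red node that loses its incoming arrows but keeps its outgoing ones.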

### Detailed Analysis

**Part a) Scene Analysis:**

The illustration depicts a potential causal scenario. The grey monkey on the branch is proximate to the apple. The wavy lines suggest the apple is emitting a signal (e.g., sound, scent). The brown monkey on the ground is observing this scene. This setup visually represents an "observation" phase where an agent (brown monkey) perceives the state of the world (apple's location and signal).

**Part b) Graph and Notation Analysis:**

1. **Causal Inference Column:**

    * **Observation (P(s)):** The graph represents the joint probability distribution of system states **s** under natural conditions, with arrows marking which variables directly influence which.

    * **Intervention (P(s|do(s_i = x))):** The do-operator signifies an external intervention that sets a specific variable **s_i** to a value **x**. Graphically, this is shown by removing all incoming arrows to the intervened node (now red), cutting it off from its causal parents. The resulting distribution is interventional: it describes the system when **s_i** is forced to **x**, which in general differs from the observational conditional **P(s|s_i = x)**.

2. **Learning Agent Column:**

    * **Observation (P(s_{t+1}|s_t)):** This represents a standard Markovian transition model. The next state **s_{t+1}** depends only on the current state **s_t**.

    * **Intervention (P(s_{t+1}|s_t, a_t)):** This introduces an action **a_t** (red node). The next state now depends on both the current state *and* the agent's action, modeling a controlled or interventional setting.
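One way to relate the two learning agent models is to treat the observational transition as the action-conditioned one averaged over whatever policy produced the observed behaviour. The sketch below assumes exactly that; the figure does not state it. The two-state, two-action world, the table `T`, and the `policy` are all hypothetical numbers chosen for illustration.

```python
# T[s][a][s_next] = P(s_{t+1} = s_next | s_t = s, a_t = a)
# Hypothetical transition table for 2 states and 2 actions.
T = {
    0: {0: [0.9, 0.1], 1: [0.2, 0.8]},
    1: {0: [0.5, 0.5], 1: [0.1, 0.9]},
}

# Assumed behaviour policy behind the passively observed data:
# policy[s][a] = P(a_t = a | s_t = s)
policy = {0: [0.5, 0.5], 1: [0.25, 0.75]}


def controlled_transition(s, a):
    """P(s_{t+1} | s_t = s, a_t = a): the action is supplied explicitly."""
    return T[s][a]


def observed_transition(s):
    """P(s_{t+1} | s_t = s): the action is averaged out under the policy."""
    n_next = len(T[s][0])
    return [
        sum(policy[s][a] * T[s][a][k] for a in T[s])
        for k in range(n_next)
    ]
```

Under these assumed numbers, `observed_transition(0)` is approximately `[0.55, 0.45]`, whereas `controlled_transition(0, 1)` is `[0.2, 0.8]`: an observer who never sees the action cannot tell which choice drove the outcome.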

**Cross-Reference & Spatial Grounding:**

* The red node in both "Intervention" graphs is consistently placed at the bottom of its respective graph cluster.

* The mathematical notation directly corresponds to the graphical changes: the `do(s_i = x)` notation matches the removal of incoming arrows to the red node in the causal inference graph. The addition of `a_t` in the learning agent notation matches the addition of the red action node.
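The link between removing arrows and the `do` notation can be written out as the standard truncated factorization. This assumes the graph is a causal Bayesian network. The symbol pa(s_j), for the parents of node s_j, is introduced here and does not appear in the figure.

```latex
\begin{aligned}
P(s) &= \prod_{j} P\bigl(s_j \mid \mathrm{pa}(s_j)\bigr) \\[4pt]
P\bigl(s \mid do(s_i = x)\bigr) &=
\begin{cases}
\displaystyle\prod_{j \neq i} P\bigl(s_j \mid \mathrm{pa}(s_j)\bigr) & \text{if } s_i = x, \\[6pt]
0 & \text{otherwise.}
\end{cases}
\end{aligned}
```

Dropping the factor for s_i is the algebraic counterpart of deleting the arrows into the red node: the intervened variable no longer depends on its parents, while every other factor is left unchanged.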

### Key Observations

1. **Visual Analogy:** Part **a)** serves as an intuitive, real-world analogy for the abstract concepts in part **b)**. The brown monkey is the "learning agent" observing the state (apple location/signal). An intervention could be the grey monkey taking the apple, changing the state.

2. **Graph Transformation:** In both models, the visual difference between "Observation" and "Intervention" is a change in some node's incoming connections, but in opposite directions. In causal inference, the intervened node's incoming links are severed. In the learning agent, a new action node **a_t** is added with an arrow into **s_{t+1}**, so the next state gains an incoming link rather than losing one.

3. **Notation Consistency:** The mathematical notation is standard: the `do(·)` operator from causal inference, and state-transition probabilities from reinforcement learning and state-space models.

### Interpretation

This diagram explains the fundamental difference between **passive observation** and **active intervention** in modeling systems.

* **Causal Inference Perspective:** It demonstrates that to understand the effect of an intervention (like moving the apple), one must identify and "break" the natural causes of the variable being intervened upon. The resulting model (`P(s|do(...))`) is different from the observational model (`P(s)`). The monkey's world (part a) has many interconnected factors; changing one (intervention) requires understanding its specific causal parents.

* **Learning Agent Perspective:** It shows how an agent's model of the world must expand from simply predicting the next state (`s_{t+1}` from `s_t`) to predicting the outcome of its own actions (`s_{t+1}` from `s_t` and `a_t`). The brown monkey in part a) must learn not just what the apple will do, but what will happen if *it* acts (e.g., climbs the tree).

**Underlying Message:** The diagram argues that robust AI systems, whether reasoning about causality or learning to act, require models that can distinguish between seeing (`P(s)` or `P(s_{t+1}|s_t)`) and doing (`P(s|do(...))` or `P(s_{t+1}|s_t, a_t)`). The pictorial scene grounds this abstract principle in a simple, relatable narrative of observation and potential action.
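A small numerical sketch can make the seeing/doing gap concrete. It uses a hypothetical three-variable binary model, not taken from the figure, in which a confounder `Z` influences both `X` and `Y`, and `X` also influences `Y`. All probabilities are illustrative assumptions.

```python
from itertools import product

# Hypothetical structural model: Z -> X, Z -> Y, X -> Y (all binary).


def p_z(z):
    return 0.5


def p_x_given_z(x, z):
    p1 = 0.8 if z == 1 else 0.2
    return p1 if x == 1 else 1 - p1


def p_y_given_xz(y, x, z):
    p1 = 0.1 + 0.3 * x + 0.5 * z
    return p1 if y == 1 else 1 - p1


def joint(z, x, y):
    """Observational joint P(z, x, y) from the factorization above."""
    return p_z(z) * p_x_given_z(x, z) * p_y_given_xz(y, x, z)


def p_y1_seeing(x):
    """P(Y=1 | X=x): condition on having observed X = x."""
    num = sum(joint(z, x, 1) for z in (0, 1))
    den = sum(joint(z, x, y) for z, y in product((0, 1), repeat=2))
    return num / den


def p_y1_doing(x):
    """P(Y=1 | do(X=x)): drop the X factor so Z keeps its natural distribution."""
    return sum(p_z(z) * p_y_given_xz(1, x, z) for z in (0, 1))
```

With these assumed numbers, `p_y1_seeing(1)` is about 0.80 while `p_y1_doing(1)` is about 0.65. Observing `X = 1` also carries information about `Z`, whereas forcing `X = 1` does not, which is why the two quantities differ.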