## Line Chart: Model Performance vs. Depth

### Overview

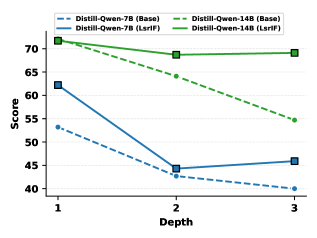

This line chart compares the performance "Score" of two language models, Distill-Qwen-7B and Distill-Qwen-14B, across different "Depth" levels (1, 2, and 3). Each model is evaluated with and without "LsrfF" (likely a feature or training method). The chart displays the score as a function of depth for each model and configuration.

### Components/Axes

* **X-axis:** "Depth" with markers at 1, 2, and 3.

* **Y-axis:** "Score" ranging from approximately 40 to 72.

* **Legend:** Located at the top-center of the chart.

  * Distill-Qwen-7B (Base) - Solid Blue Line

  * Distill-Qwen-7B (LsrfF) - Dashed Blue Line

  * Distill-Qwen-14B (Base) - Dashed Green Line

  * Distill-Qwen-14B (LsrfF) - Solid Green Line

### Detailed Analysis

**Distill-Qwen-7B (Base) - Solid Blue Line:**

The line slopes downward from Depth 1 to Depth 2, then slightly upward to Depth 3.

* Depth 1: Approximately 62.

* Depth 2: Approximately 43.

* Depth 3: Approximately 45.

**Distill-Qwen-7B (LsrfF) - Dashed Blue Line:**

The line slopes downward from Depth 1 to Depth 3.

* Depth 1: Approximately 53.

* Depth 2: Approximately 42.

* Depth 3: Approximately 40.

**Distill-Qwen-14B (Base) - Dashed Green Line:**

The line slopes slightly downward from Depth 1 to Depth 2, then stays roughly flat to Depth 3.

* Depth 1: Approximately 71.

* Depth 2: Approximately 70.

* Depth 3: Approximately 70.

**Distill-Qwen-14B (LsrfF) - Solid Green Line:**

The line slopes slightly downward from Depth 1 to Depth 2, then slightly upward to Depth 3.

* Depth 1: Approximately 70.

* Depth 2: Approximately 68.

* Depth 3: Approximately 69.

### Key Observations

* Distill-Qwen-14B consistently outperforms Distill-Qwen-7B across all depths and configurations.

* The "LsrfF" configuration scores below Base at every depth for both models. The effect is more pronounced for Distill-Qwen-7B (roughly 1 to 9 points lower) than for Distill-Qwen-14B (roughly 1 to 2 points lower).

* The performance of Distill-Qwen-7B (Base) drops sharply between Depth 1 and Depth 2 (approximately 62 to 43), then recovers slightly at Depth 3 (approximately 45).

* Distill-Qwen-14B (Base) maintains a relatively stable score across all depths.
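The LsrfF comparison above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses the approximate values listed in the Detailed Analysis section; the names `scores` and `lsrff_delta` are illustrative and not part of any source material, and the numbers are visual estimates rather than exact results.

```python
# Approximate scores read off the chart (visual estimates, not exact data).
# Each list holds the scores at Depth 1, 2, and 3.
scores = {
    ("Distill-Qwen-7B", "Base"): [62, 43, 45],
    ("Distill-Qwen-7B", "LsrfF"): [53, 42, 40],
    ("Distill-Qwen-14B", "Base"): [71, 70, 70],
    ("Distill-Qwen-14B", "LsrfF"): [70, 68, 69],
}
depths = [1, 2, 3]


def lsrff_delta(model):
    """Per-depth score change when moving from Base to LsrfF (hypothetical helper)."""
    base = scores[(model, "Base")]
    variant = scores[(model, "LsrfF")]
    return [v - b for b, v in zip(base, variant)]


for model in ("Distill-Qwen-7B", "Distill-Qwen-14B"):
    # Negative values mean the LsrfF configuration scored lower than Base.
    print(model, dict(zip(depths, lsrff_delta(model))))
```

Every delta is negative under these estimates, and the largest gap is for Distill-Qwen-7B at Depth 1.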

### Interpretation

The data suggests that Distill-Qwen-14B is more robust than Distill-Qwen-7B: its score varies by only about 1 to 2 points across depths, whereas the score of Distill-Qwen-7B varies by roughly 13 to 19 points. The "LsrfF" configuration scores below Base at every depth for both models, which may indicate that it is not well suited to these models or to this specific task. The sharp drop for Distill-Qwen-7B (Base) between Depth 1 and Depth 2 could reflect a point of instability or a limit on the model's ability to generalize to deeper levels. The steady score of Distill-Qwen-14B (Base) suggests a greater capacity to handle increasing depth without significant degradation. Model size has a much larger effect on score than the "LsrfF" setting: the 14B model leads the 7B model by roughly 9 to 29 points, while "LsrfF" changes the score by roughly 1 to 9 points. Further investigation is needed to understand the underlying reasons for these trends and to determine whether "LsrfF" can be tuned for better performance.
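One simple way to quantify "sensitivity to depth" is the spread (maximum minus minimum) of each line across the three depths. The sketch below assumes that definition, which is a choice made here for illustration and not something the chart states; `depth_spread` is a hypothetical helper, and the scores are the approximate readings from the Detailed Analysis section.

```python
# Approximate scores at Depth 1, 2, 3 (visual estimates from the chart).
scores = {
    "Distill-Qwen-7B (Base)": [62, 43, 45],
    "Distill-Qwen-7B (LsrfF)": [53, 42, 40],
    "Distill-Qwen-14B (Base)": [71, 70, 70],
    "Distill-Qwen-14B (LsrfF)": [70, 68, 69],
}


def depth_spread(series):
    """Spread of a series across depths: max minus min (assumed sensitivity measure)."""
    return max(series) - min(series)


spreads = {name: depth_spread(values) for name, values in scores.items()}

# List the series from least to most depth-sensitive.
for name, spread in sorted(spreads.items(), key=lambda item: item[1]):
    print(f"{name}: spread of about {spread} points")
```

Under this measure both 14B lines stay within about 2 points, while the 7B lines move by 13 points or more.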