
## Heatmap Set: Categorical Distribution Across Layers and Heads

### Overview

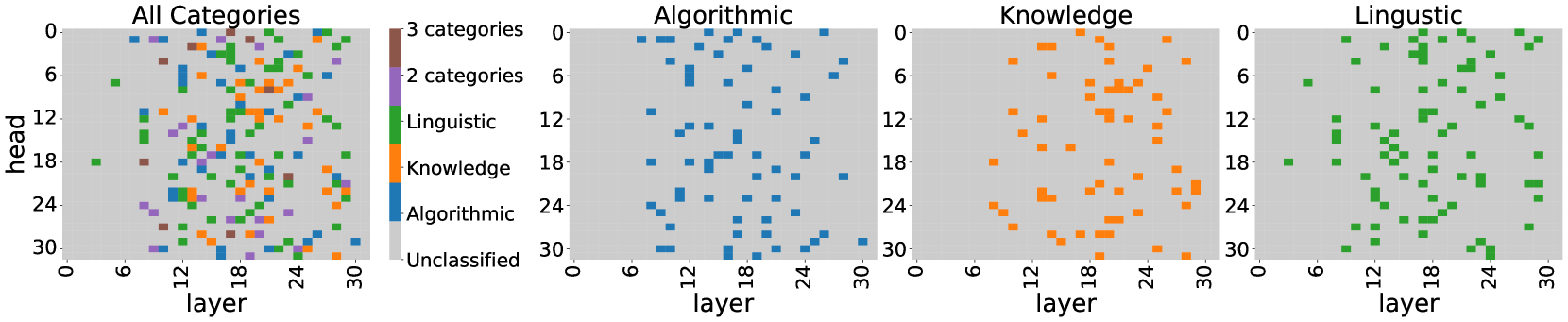

The image displays four horizontally arranged heatmaps, each plotting categorical data points on a grid defined by "layer" (x-axis) and "head" (y-axis). The leftmost heatmap, titled "All Categories," includes a legend and shows a composite view of all data. The subsequent three heatmaps isolate individual categories: "Algorithmic," "Knowledge," and "Linguistic." The visualization appears to map the presence or activation of specific functional categories within the layers and attention heads of a neural network model (likely a transformer).

### Components/Axes

* **Chart Type:** Four separate heatmaps (scatter plots on a grid).

* **Titles:**

    * Leftmost: "All Categories"

    * Second from left: "Algorithmic"

    * Third from left: "Knowledge"

    * Rightmost: "Linguistic"

* **Axes (Identical for all four charts):**

    * **X-axis:** Label: "layer". Scale: 0 to 30, with major tick marks at 0, 6, 12, 18, 24, 30.

    * **Y-axis:** Label: "head". Scale: 0 to 30, with major tick marks at 0, 6, 12, 18, 24, 30. The axis is inverted, with 0 at the top and 30 at the bottom.

* **Legend (Located on the left side of the "All Categories" heatmap):**

    * **Brown square:** "3 categories"

    * **Purple square:** "2 categories"

    * **Green square:** "Linguistic"

    * **Orange square:** "Knowledge"

    * **Blue square:** "Algorithmic"

    * **Light Gray square:** "Unclassified" (This corresponds to the background grid color).
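
The legend can be read as a function of how many categories a given (layer, head) cell belongs to. The following is a minimal sketch under that assumption; the helper name `legend_label` and the set-based input are hypothetical and are not taken from the figure's source.

```python
# Hypothetical helper: map the categories assigned to one (layer, head)
# cell to the legend entry used in the "All Categories" panel.
CATEGORIES = ("Algorithmic", "Knowledge", "Linguistic")


def legend_label(cell_categories):
    """Return the legend label for a cell given its categories."""
    cats = set(cell_categories)
    unknown = cats - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    if not cats:
        # No category assigned: the light gray background.
        return "Unclassified"
    if len(cats) == 1:
        # Exactly one category: the cell takes that category's color.
        return next(iter(cats))
    # Two or three categories share the purple / brown entries.
    return f"{len(cats)} categories"
```

Under this reading, a cell tagged as both "Knowledge" and "Linguistic" would be drawn in purple ("2 categories") in the composite panel and would appear in both of the corresponding single-category panels.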

### Detailed Analysis

**1. "All Categories" Heatmap (Leftmost):**

* **Content:** Displays a dense, mixed scatter of colored squares (blue, orange, green, purple, brown) across the entire grid. The background is light gray ("Unclassified").

* **Spatial Distribution:** Data points are scattered without a single dominant cluster, though there is a slight visual concentration in the central region (layers ~12-24, heads ~6-24). Brown ("3 categories") and purple ("2 categories") points are interspersed among the single-category points, indicating locations where multiple categories co-occur.

**2. "Algorithmic" Heatmap (Second from left):**

* **Content:** Shows only blue squares ("Algorithmic") on the light gray background.

* **Trend/Distribution:** The blue points are distributed across the grid but appear somewhat sparse. There is no strong, singular cluster, but a loose grouping is visible in the lower-left quadrant (layers ~0-18, heads ~12-30).

**3. "Knowledge" Heatmap (Third from left):**

* **Content:** Shows only orange squares ("Knowledge") on the light gray background.

* **Trend/Distribution:** The orange points show a more defined clustering pattern compared to the Algorithmic category. A notable concentration exists in the central to upper-right region (layers ~12-30, heads ~0-18). There are fewer points in the lower layers (0-12).

**4. "Linguistic" Heatmap (Rightmost):**

* **Content:** Shows only green squares ("Linguistic") on the light gray background.

* **Trend/Distribution:** The green points are widely scattered but show a visible density in the central and right portions of the grid (layers ~12-30). There is a relative sparsity in the very low layers (0-6) and the top rows (heads 0-6).
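
The concentrations described above are visual impressions. If the underlying coordinates were available, they could be checked numerically. A rough sketch, assuming each category is given as a list of `(layer, head)` pairs; the function name and the sample `points` are made up for illustration and are not read from the figure.

```python
def fraction_in_region(points, layer_range, head_range):
    """Fraction of (layer, head) points inside inclusive index ranges."""
    if not points:
        return 0.0
    l_lo, l_hi = layer_range
    h_lo, h_hi = head_range
    inside = sum(
        1
        for layer, head in points
        if l_lo <= layer <= l_hi and h_lo <= head <= h_hi
    )
    return inside / len(points)


# Made-up coordinates for illustration only, not taken from the figure.
points = [(14, 3), (20, 10), (27, 17), (5, 25)]
share = fraction_in_region(points, layer_range=(12, 30), head_range=(0, 18))
```

A share well above the region's fraction of the total grid area would support a claim of concentration; a share close to it would suggest the apparent cluster is not distinguishable from a uniform scatter.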

### Key Observations

1. **Category Co-occurrence:** The "All Categories" map reveals that specific layer-head positions (marked in brown and purple) are associated with two or three categories simultaneously, suggesting multifunctional components.

2. **Spatial Specialization:** While there is overlap, the individual category maps suggest a degree of spatial specialization:

    * **Knowledge** points lean towards mid-to-high layers (~12-30) and low-to-mid head indices (~0-18).

    * **Linguistic** points are prevalent in mid-to-high layers (~12-30).

    * **Algorithmic** points are more diffuse but group loosely in low-to-mid layers (~0-18) and mid-to-high head indices (~12-30), which is the lower-left quadrant of the plot because the head axis is inverted.

3. **Coverage:** No single category uniformly covers the entire layer-head space. Significant portions of the grid remain "Unclassified" (light gray) in each individual category plot.
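
Coverage and co-occurrence could be summarized with a few counts. The sketch below assumes each category is available as a set of `(layer, head)` cells and that the grid dimensions are known; the function name `summarize`, the toy 4x4 grid, and the sample cells are hypothetical.

```python
from collections import Counter


def summarize(category_cells, n_layers, n_heads):
    """Return per-category coverage and a histogram of overlap sizes.

    category_cells maps a category name to a set of (layer, head) cells.
    """
    total = n_layers * n_heads
    coverage = {
        name: len(cells) / total for name, cells in category_cells.items()
    }
    # Count, for every classified cell, how many categories contain it.
    membership = Counter()
    for cells in category_cells.values():
        membership.update(cells)
    # Histogram: number of categories -> number of cells with that count.
    overlap = Counter(membership.values())
    return coverage, overlap


# Toy data on a 4x4 grid, for illustration only.
cells = {
    "Algorithmic": {(0, 0), (1, 1)},
    "Knowledge": {(1, 1), (2, 2)},
    "Linguistic": {(1, 1), (2, 2), (3, 3)},
}
coverage, overlap = summarize(cells, n_layers=4, n_heads=4)
```

Here `overlap[2]` and `overlap[3]` correspond to the purple and brown cells of the composite panel, and each `coverage` value is the share of the grid that is not light gray in the matching single-category panel.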

### Interpretation

This visualization likely analyzes the functional specialization within a large neural network, such as a transformer-based language model. Each "head" probably refers to an attention head within a specific "layer."

* **What the data suggests:** The model's processing is not monolithic. Different computational functions ("Algorithmic," "Knowledge," "Linguistic") are distributed across its architecture. The clustering patterns imply that certain regions of the network are more dedicated to specific types of processing. For instance, knowledge retrieval or storage might be concentrated in later layers, while linguistic (for example, syntactic) processing could be more widespread.

* **Relationships:** The "All Categories" map is the union of the other three. The multi-category (brown, purple) points are the most informative part of the composite view: they mark components that may serve integrated functions, for example bridging linguistic structure with factual knowledge.

* **Anomalies/Notable Trends:** The relative absence of points in the very first layers (0-6) and at the highest head indices (24-30) across all categories is notable. This could indicate that the earliest and latest parts of the network perform more …

## Textual Information Extraction

The image contains the following text, transcribed exactly as it appears:

**Titles:**

* All Categories

* Algorithmic

* Knowledge

* Linguistic

**Axis Labels:**

* head (Y-axis label for all charts)

* layer (X-axis label for all charts)

**Axis Markers:**

* Y-axis: 0, 6, 12, 18, 24, 30

* X-axis: 0, 6, 12, 18, 24, 30

**Legend Text (from top to bottom):**

* 3 categories

* 2 categories

* Linguistic

* Knowledge

* Algorithmic

* Unclassified