## Heatmap: AUROC for Projections a^T t

### Overview

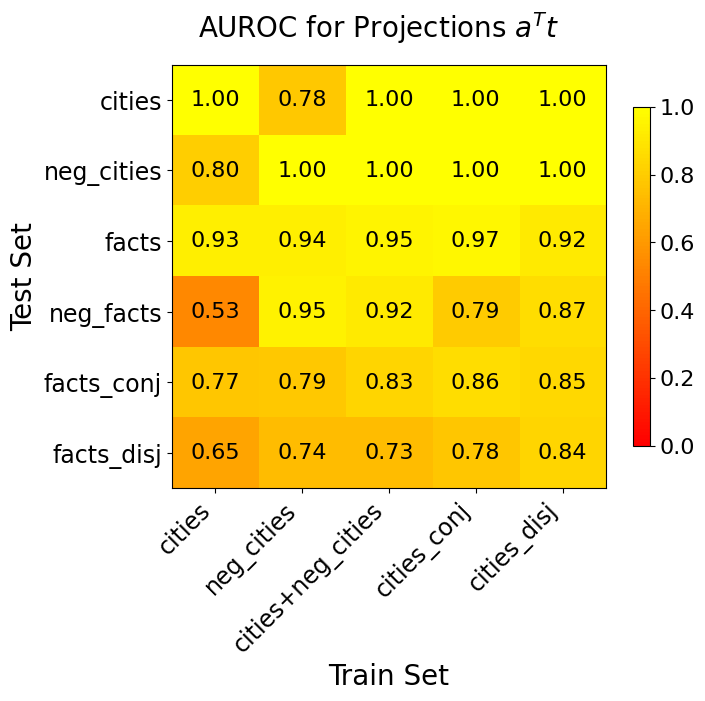

The image is a heatmap visualizing the Area Under the Receiver Operating Characteristic curve (AUROC) scores for a model's performance. The scores are presented for various combinations of training and test datasets, specifically related to "cities" and "facts" data types and their logical variants (negation, conjunction, disjunction). The title is "AUROC for Projections a^T t".

### Components/Axes

* **Chart Type:** Heatmap.

* **Title:** "AUROC for Projections a^T t" (located at the top center).

* **X-Axis (Horizontal):** Labeled "Train Set" (bottom center). It contains five categorical labels, rotated approximately 45 degrees for readability:

    1. `cities`

    2. `neg_cities`

    3. `cities+neg_cities`

    4. `cities_conj`

    5. `cities_disj`

* **Y-Axis (Vertical):** Labeled "Test Set" (left center, rotated 90 degrees). It contains six categorical labels:

    1. `cities`

    2. `neg_cities`

    3. `facts`

    4. `neg_facts`

    5. `facts_conj`

    6. `facts_disj`

* **Legend/Color Scale:** Located on the right side of the heatmap. It is a vertical color bar indicating the AUROC score mapping:

    * **Scale:** Ranges from 0.0 (bottom) to 1.0 (top).

    * **Color Gradient:** Transitions from dark red (0.0) through orange and yellow to bright yellow (1.0). Higher scores are represented by brighter yellow.

* **Data Grid:** A 6-row by 5-column grid of colored cells. Each cell contains a numerical AUROC score (to two decimal places) and its background color corresponds to the value on the legend.

### Detailed Analysis

The following table reconstructs the heatmap data. Each cell value is the AUROC score for the corresponding Train Set (column) and Test Set (row).

| Test Set \ Train Set | `cities` | `neg_cities` | `cities+neg_cities` | `cities_conj` | `cities_disj` |
| :--- | :---: | :---: | :---: | :---: | :---: |
| **`cities`** | 1.00 | 0.78 | 1.00 | 1.00 | 1.00 |
| **`neg_cities`** | 0.80 | 1.00 | 1.00 | 1.00 | 1.00 |
| **`facts`** | 0.93 | 0.94 | 0.95 | 0.97 | 0.92 |
| **`neg_facts`** | 0.53 | 0.95 | 0.92 | 0.79 | 0.87 |
| **`facts_conj`** | 0.77 | 0.79 | 0.83 | 0.86 | 0.85 |
| **`facts_disj`** | 0.65 | 0.74 | 0.73 | 0.78 | 0.84 |
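For readers who want to work with the numbers rather than the image, the table can be held in a small data structure and summarized. The following is a minimal sketch using only the Python standard library; the names `TRAIN_SETS`, `AUROC`, `row_ranges`, and `lowest_cell` are illustrative and do not come from the original figure.

```python
# Values transcribed from the reconstructed table.
# Keys are test sets (rows); list positions follow TRAIN_SETS (columns).
TRAIN_SETS = ["cities", "neg_cities", "cities+neg_cities", "cities_conj", "cities_disj"]
AUROC = {
    "cities":     [1.00, 0.78, 1.00, 1.00, 1.00],
    "neg_cities": [0.80, 1.00, 1.00, 1.00, 1.00],
    "facts":      [0.93, 0.94, 0.95, 0.97, 0.92],
    "neg_facts":  [0.53, 0.95, 0.92, 0.79, 0.87],
    "facts_conj": [0.77, 0.79, 0.83, 0.86, 0.85],
    "facts_disj": [0.65, 0.74, 0.73, 0.78, 0.84],
}


def row_ranges(table):
    """Return {test_set: (min, max)} taken over all train sets."""
    return {test: (min(row), max(row)) for test, row in table.items()}


def lowest_cell(table, train_sets):
    """Return (score, test_set, train_set) for the smallest AUROC in the grid."""
    return min(
        (score, test, train)
        for test, row in table.items()
        for train, score in zip(train_sets, row)
    )


for test, (lo, hi) in row_ranges(AUROC).items():
    print(f"{test:<12} min={lo:.2f} max={hi:.2f}")
print("lowest cell:", lowest_cell(AUROC, TRAIN_SETS))
```

The per-row minimum and maximum give a quick check on any range quoted in the prose, and `lowest_cell` identifies the weakest train/test combination.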

**Trend Verification & Color Cross-Reference:**

* **High-Performing Cells (Bright Yellow, ~1.00):** In the top two rows (`cities` and `neg_cities` test sets), eight of the ten cells score 1.00. The two exceptions are the cells that train on one polarity and test on the other: training on `neg_cities` and testing on `cities` (0.78, a darker yellow/orange), and training on `cities` and testing on `neg_cities` (0.80).

* **Mid-Performing Cells (Yellow-Orange, ~0.65-0.97):** The `facts` test set row scores consistently high (0.92-0.97) across all training sets. The `facts_conj` (0.77-0.86) and `facts_disj` (0.65-0.84) rows show moderate performance. In the `neg_facts` row, every training set except `cities` also falls in this band (0.79-0.95).

* **Low-Performing Cell (Orange-Red, ~0.53):** The most significant outlier is the cell at the intersection of the `neg_facts` test set and the `cities` training set, with a score of 0.53. This is the only cell with a distinctly orange-red color, indicating performance barely better than random chance.
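To make the "random chance" reference point concrete: AUROC is the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, so 0.50 means the scores carry no ranking information. The sketch below computes AUROC for one-dimensional projection scores of the form `a^T t` by counting correctly ordered pairs. The source does not define `a` and `t`, so the code treats them simply as two vectors whose dot product is the score; the toy vectors and labels are hypothetical and are not data from the figure.

```python
def project(a, t):
    """Scalar projection a^T t (dot product of two equal-length vectors)."""
    return sum(ai * ti for ai, ti in zip(a, t))


def auroc(scores, labels):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a correct pair.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: four 2-d vectors with binary labels (1 = positive class).
a = [1.0, -0.5]
vectors = [[0.2, 0.2], [0.5, 0.2], [0.4, 0.1], [0.9, 0.2]]
labels = [0, 0, 1, 1]

scores = [project(a, t) for t in vectors]
print(auroc(scores, labels))  # 0.75: three of the four positive/negative pairs are ordered correctly
```

The pairwise count is quadratic in the number of examples, which is fine for illustration; a rank-based formulation or a library routine would be the usual choice for large datasets.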

### Key Observations

1. **Strong Domain Performance:** Models trained on city-related data score 1.00 on the city test sets in eight of ten cells, indicating strong within-domain generalization. The two exceptions arise when training on one polarity and testing on the other: `neg_cities` -> `cities` (0.78) and `cities` -> `neg_cities` (0.80).

2. **Cross-Domain Generalization to 'facts':** Models trained on any variant of city data generalize strongly to the `facts` test set (all scores between 0.92 and 0.97).

3. **Critical Failure Case:** The model trained purely on `cities` data fails on `neg_facts` (AUROC=0.53). Because an AUROC of 0.50 corresponds to random ranking, this projection is close to uninformative for negated facts. It is not inverted: an actively misleading projection would show an AUROC well below 0.50.

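4. **Impact of Training Data Composition:** Relative to training on `cities` alone, every other training set improves scores on the more challenging test sets (`neg_facts`, `facts_conj`, `facts_disj`). The comparison with `neg_cities` alone is mixed: `neg_cities` achieves the highest `neg_facts` score of any training set (0.95), and `cities+neg_cities` is marginally lower than `neg_cities` on `facts_disj` (0.73 vs. 0.74). The conjunctive and disjunctive training sets give the best results on `facts_conj` (0.86) and `facts_disj` (0.84), respectively.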

5. **Asymmetry in Negation:** Performance on `neg_cities` test set is high (0.80-1.00), while performance on `neg_facts` test set is more variable and generally lower (0.53-0.95), indicating the negation task is harder or differently structured for facts.
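The training-set comparison in observation 4 can be checked by subtracting a baseline column from the others. The sketch below does this for the three harder test sets, using values copied from the table; `HARD_ROWS` and `gains_over` are illustrative names, and differences are rounded to two decimals to match the precision of the figure.

```python
# AUROC values for the three harder test sets, copied from the table.
# List positions follow TRAIN_SETS.
TRAIN_SETS = ["cities", "neg_cities", "cities+neg_cities", "cities_conj", "cities_disj"]
HARD_ROWS = {
    "neg_facts":  [0.53, 0.95, 0.92, 0.79, 0.87],
    "facts_conj": [0.77, 0.79, 0.83, 0.86, 0.85],
    "facts_disj": [0.65, 0.74, 0.73, 0.78, 0.84],
}


def gains_over(baseline, table, train_sets):
    """AUROC difference of every other train set relative to `baseline`, per test set."""
    b = train_sets.index(baseline)
    return {
        test: {
            train: round(score - row[b], 2)
            for train, score in zip(train_sets, row)
            if train != baseline
        }
        for test, row in table.items()
    }


for baseline in ("cities", "neg_cities"):
    print(f"gain over training on {baseline} alone:")
    for test, gains in gains_over(baseline, HARD_ROWS, TRAIN_SETS).items():
        print(f"  {test:<11} {gains}")
```

Against the `cities` baseline every difference is positive, while against the `neg_cities` baseline some are negative, so conclusions about the benefit of richer training data depend on which baseline is used.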

### Interpretation

This heatmap evaluates how well a model's learned representations (specifically, projections of the form `a^T t`) transfer across different logical and semantic tasks involving "cities" and "facts".

* **What the data suggests:** Representations learned on the "cities" domain are effective and transferable. Scores within the city test sets are perfect except when training on one polarity and testing on the other (0.78 and 0.80). The strong transfer to the `facts` test set (0.92-0.97) is notable, suggesting the model captures some generalizable semantic or logical structure beyond just city names.

* **How elements relate:** The axes define a grid of transfer experiments: each cell trains on one set (column) and evaluates on another (row), and the color (AUROC) measures how well that transfer works. The outlier (0.53) is the most informative data point, revealing a specific weakness: the representation learned from raw `cities` data ranks negated facts at close to chance level.

* **Notable implications:** The results argue for the importance of **training data diversity and composition**. Relative to training on `cities` alone, adding negated examples (`cities+neg_cities`) or using conjunctive/disjunctive forms raises AUROC on the harder test sets: from 0.53 to 0.79-0.92 on `neg_facts` and from 0.77 to 0.83-0.86 on `facts_conj`. This has practical implications for building models that need to handle logical operations and negation reliably. The investigation would benefit from exploring *why* the `cities` -> `neg_facts` transfer is so weak, given that training on `neg_cities` alone reaches 0.95 on the same test set.