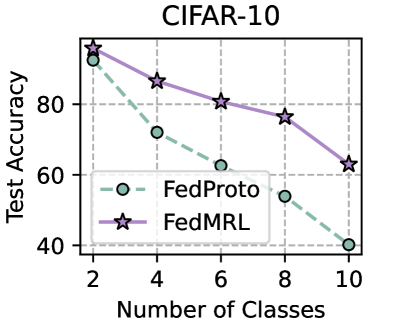

## Line Chart: CIFAR-10 Test Accuracy vs. Number of Classes

### Overview

This line chart plots the test accuracy of two federated learning methods, FedProto and FedMRL, on CIFAR-10 as a function of the number of classes, sampled at 2, 4, 6, 8, and 10 classes. Accuracy falls for both methods as the number of classes increases.

### Components/Axes

* **Title:** CIFAR-10 (centered at the top)

* **X-axis:** Number of Classes (ranging from 2 to 10, with markers at 2, 4, 6, 8, and 10)

* **Y-axis:** Test Accuracy, in percent (ranging from approximately 40 to 90, with markers at 40, 60, and 80)

* **Legend:** Located in the bottom-left corner.

    * FedProto (represented by a light blue dashed line with circular markers)

    * FedMRL (represented by a purple solid line with star-shaped markers)

### Detailed Analysis

**FedProto (Light Blue, Circles):**

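The FedProto line slopes downward at a nearly constant rate, losing roughly 10 to 12 percentage points for every two additional classes.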

* At 2 classes: Approximately 85% test accuracy.

* At 4 classes: Approximately 73% test accuracy.

* At 6 classes: Approximately 62% test accuracy.

* At 8 classes: Approximately 52% test accuracy.

* At 10 classes: Approximately 40% test accuracy.

**FedMRL (Purple, Stars):**

The FedMRL line also slopes downward, but less steeply than FedProto overall: it declines gently from 2 to 8 classes and then drops sharply between 8 and 10 classes.

* At 2 classes: Approximately 88% test accuracy.

* At 4 classes: Approximately 82% test accuracy.

* At 6 classes: Approximately 80% test accuracy.

* At 8 classes: Approximately 77% test accuracy.

* At 10 classes: Approximately 64% test accuracy.
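The slopes described above can be checked with a short calculation. The sketch below is a minimal illustration that assumes the approximate values read from the chart; they are visual estimates, not exact experimental results, and the helper names `total_drop` and `segment_slopes` are hypothetical.

```python
# Approximate readings from the chart (visual estimates, not exact results).
classes = [2, 4, 6, 8, 10]
fedproto = [85, 73, 62, 52, 40]
fedmrl = [88, 82, 80, 77, 64]


def total_drop(acc):
    """Accuracy lost between the first and last reading, in points."""
    return acc[0] - acc[-1]


def segment_slopes(xs, acc):
    """Change in accuracy per added class over each consecutive segment."""
    return [
        (acc[i + 1] - acc[i]) / (xs[i + 1] - xs[i])
        for i in range(len(xs) - 1)
    ]


fedproto_drop = total_drop(fedproto)                 # 45
fedmrl_drop = total_drop(fedmrl)                     # 24
fedproto_slopes = segment_slopes(classes, fedproto)  # [-6.0, -5.5, -5.0, -6.0]
fedmrl_slopes = segment_slopes(classes, fedmrl)      # [-3.0, -1.0, -1.5, -6.5]

print(fedproto_drop, fedmrl_drop)
print(fedproto_slopes)
print(fedmrl_slopes)
```

Under these readings, FedProto falls at about 5 to 6 points per added class throughout, while FedMRL falls at 1 to 3 points per class up to 8 classes and about 6.5 points per class in the last segment.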

### Key Observations

* Both FedProto and FedMRL exhibit a decrease in test accuracy as the number of classes increases.

* FedMRL consistently outperforms FedProto across all tested numbers of classes.

* The performance drop is more pronounced for FedProto than for FedMRL: between 2 and 10 classes, FedProto loses about 45 percentage points, while FedMRL loses about 24.

* The gap between the two methods widens from about 3 percentage points at 2 classes to about 25 points at 8 classes, then stays roughly level at about 24 points at 10 classes.
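The gap between the two curves can be tabulated directly. This is a minimal sketch that assumes the approximate per-point readings listed in the Detailed Analysis section; because those are visual estimates, differences of a point or two are within reading error.

```python
# Approximate readings from the chart, keyed by number of classes.
# These are visual estimates, not exact experimental values.
fedproto = {2: 85, 4: 73, 6: 62, 8: 52, 10: 40}
fedmrl = {2: 88, 4: 82, 6: 80, 8: 77, 10: 64}

# FedMRL's lead over FedProto at each class count, in percentage points.
gaps = {n: fedmrl[n] - fedproto[n] for n in sorted(fedproto)}

# Class count at which the lead is largest.
widest = max(gaps, key=gaps.get)

for n, gap in gaps.items():
    print(f"{n:>2} classes: FedMRL leads by {gap} points")
print(f"Largest gap at {widest} classes")
```

With these readings the lead is 3, 9, 18, 25, and 24 points at 2, 4, 6, 8, and 10 classes, so FedMRL is ahead at every point and the gap peaks at 8 classes.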

### Interpretation

The data suggest that both federated learning methods struggle as the number of classes in the CIFAR-10 task grows: with more classes to separate, it becomes harder for the models to generalize. FedMRL's higher accuracy at every point, and its smaller overall decline, suggest that its approach is more robust to this added difficulty. The chart itself does not show why; possible explanations include better regularization or more effective knowledge transfer between clients.

The large gap at higher class counts highlights the importance of federated learning algorithms that scale to tasks with many classes. Overall, the chart shows a clear trade-off between the number of classes and the achievable test accuracy in this federated learning setting.