## Scatter Plot Grid: Method Performance Across Fairness Scenarios

### Overview

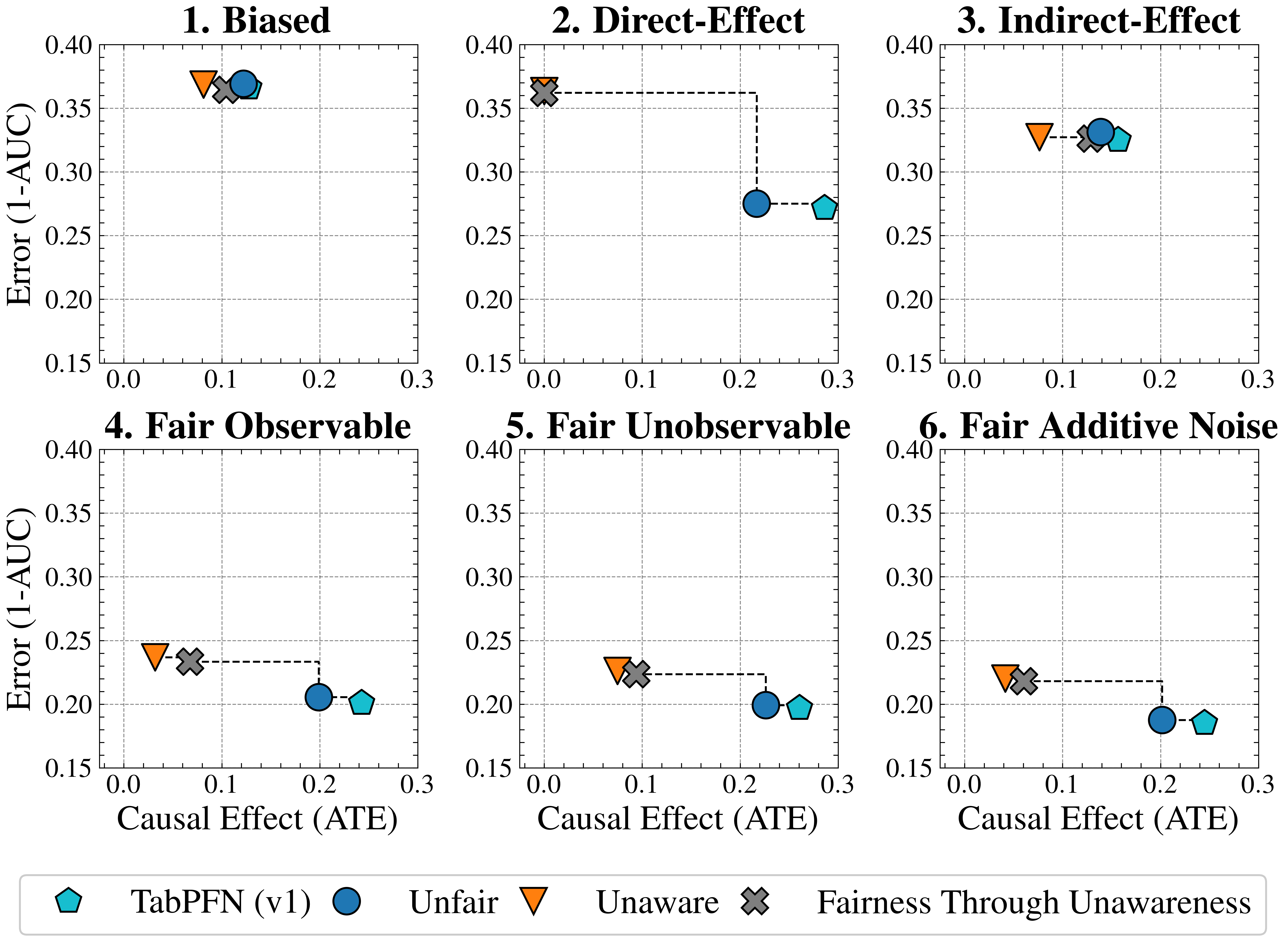

The image presents six scatter plots arranged in a 2x3 grid, comparing the performance of different fairness-aware machine learning methods across six scenarios: Biased, Direct-Effect, Indirect-Effect, Fair Observable, Fair Unobservable, and Fair Additive Noise. Each plot visualizes the relationship between **Causal Effect (ATE)** (x-axis) and **Error (1-AUC)** (y-axis), with data points connected by dashed lines to indicate trends.

---

### Components/Axes

- **X-axis**: Causal Effect (ATE) ranging from 0.0 to 0.3 in increments of 0.1.

- **Y-axis**: Error (1-AUC) ranging from 0.15 to 0.40 in increments of 0.05.

- **Legend** (bottom-center):

  - **TabPFN (v1)**: Cyan pentagon (⬟)

  - **Unfair**: Blue circle (●)

  - **Unaware**: Orange triangle (▲)

  - **Fairness Through Unawareness**: Gray cross (✘)

- **Plot Titles**: Each subplot is labeled with a scenario (e.g., "1. Biased", "2. Direct-Effect").
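For readers unfamiliar with the two axis quantities, the following is a minimal sketch of how they could be computed. The figure does not state how either value was estimated, so everything here is an assumption: `auc` uses the standard pairwise-ranking definition, and `mean_score_gap` is a naive stand-in for ATE (difference in mean predicted score between two groups), which matches a true average treatment effect only when group membership is unconfounded. All function names are hypothetical.

```python
def auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def error_1_minus_auc(labels, scores):
    """The y-axis quantity: Error = 1 - AUC."""
    return 1.0 - auc(labels, scores)


def mean_score_gap(scores, group):
    """Assumed ATE proxy: mean score for group == 1 minus mean score for group == 0."""
    g1 = [s for s, a in zip(scores, group) if a == 1]
    g0 = [s for s, a in zip(scores, group) if a == 0]
    if not g1 or not g0:
        raise ValueError("both groups must be non-empty")
    return sum(g1) / len(g1) - sum(g0) / len(g0)


# Toy data, invented purely for illustration (not taken from the figure).
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.6, 0.4, 0.7, 0.8, 0.2]
group = [1, 1, 1, 0, 0, 0]

toy_error = error_1_minus_auc(labels, scores)
toy_gap = mean_score_gap(scores, group)
```

Under these definitions a point further down the y-axis ranks positives above negatives more reliably, and a point further left shows a smaller gap in predictions between the two groups.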

---

### Detailed Analysis

#### 1. Biased

- **Trend**: All methods cluster in the upper-left of the plot (high error, low ATE).

- **Data Points**:

  - TabPFN (v1): ~0.12 ATE, ~0.37 Error

  - Unfair: ~0.10 ATE, ~0.36 Error

  - Unaware: ~0.11 ATE, ~0.35 Error

  - Fairness Through Unawareness: ~0.13 ATE, ~0.36 Error

#### 2. Direct-Effect

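- **Trend**: Across methods, error decreases as ATE increases: Unfair has the lowest ATE and the highest error, while Fairness Through Unawareness has the highest ATE and the lowest error.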

- **Data Points**:

  - Unfair: ~0.15 ATE, ~0.35 Error

  - TabPFN (v1): ~0.20 ATE, ~0.28 Error

  - Unaware: ~0.18 ATE, ~0.29 Error

  - Fairness Through Unawareness: ~0.22 ATE, ~0.27 Error

#### 3. Indirect-Effect

- **Trend**: Similar to Direct-Effect but with tighter clustering.

- **Data Points**:

  - Unfair: ~0.14 ATE, ~0.34 Error

  - TabPFN (v1): ~0.19 ATE, ~0.29 Error

  - Unaware: ~0.17 ATE, ~0.28 Error

  - Fairness Through Unawareness: ~0.21 ATE, ~0.27 Error

#### 4. Fair Observable

- **Trend**: All methods show lower errors (~0.20–0.22) than in the Biased, Direct-Effect, and Indirect-Effect scenarios (~0.27–0.37).

- **Data Points**:

  - Unfair: ~0.18 ATE, ~0.21 Error

  - TabPFN (v1): ~0.22 ATE, ~0.20 Error

  - Unaware: ~0.19 ATE, ~0.22 Error

  - Fairness Through Unawareness: ~0.23 ATE, ~0.21 Error

#### 5. Fair Unobservable

- **Trend**: Similar to Fair Observable but with slightly higher errors.

- **Data Points**:

  - Unfair: ~0.17 ATE, ~0.22 Error

  - TabPFN (v1): ~0.21 ATE, ~0.21 Error

  - Unaware: ~0.19 ATE, ~0.23 Error

  - Fairness Through Unawareness: ~0.22 ATE, ~0.22 Error

#### 6. Fair Additive Noise

- **Trend**: Error increases slightly compared to Fair Observable/Unobservable.

- **Data Points**:

  - Unfair: ~0.16 ATE, ~0.23 Error

  - TabPFN (v1): ~0.20 ATE, ~0.22 Error

  - Unaware: ~0.18 ATE, ~0.24 Error

  - Fairness Through Unawareness: ~0.21 ATE, ~0.23 Error
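The per-scenario readings above are easier to compare side by side once they are in a single structure. The sketch below transcribes the approximate (ATE, error) pairs listed in this section and reports which method has the lowest and highest error in each scenario. The values are visual estimates from the plot, so the ranking is only as reliable as those readings; the names `POINTS` and `extreme_methods` are hypothetical.

```python
FTU = "Fairness Through Unawareness"

# scenario -> method -> (ATE, error); approximate values as listed above.
POINTS = {
    "Biased": {"TabPFN (v1)": (0.12, 0.37), "Unfair": (0.10, 0.36),
               "Unaware": (0.11, 0.35), FTU: (0.13, 0.36)},
    "Direct-Effect": {"Unfair": (0.15, 0.35), "TabPFN (v1)": (0.20, 0.28),
                      "Unaware": (0.18, 0.29), FTU: (0.22, 0.27)},
    "Indirect-Effect": {"Unfair": (0.14, 0.34), "TabPFN (v1)": (0.19, 0.29),
                        "Unaware": (0.17, 0.28), FTU: (0.21, 0.27)},
    "Fair Observable": {"Unfair": (0.18, 0.21), "TabPFN (v1)": (0.22, 0.20),
                        "Unaware": (0.19, 0.22), FTU: (0.23, 0.21)},
    "Fair Unobservable": {"Unfair": (0.17, 0.22), "TabPFN (v1)": (0.21, 0.21),
                          "Unaware": (0.19, 0.23), FTU: (0.22, 0.22)},
    "Fair Additive Noise": {"Unfair": (0.16, 0.23), "TabPFN (v1)": (0.20, 0.22),
                            "Unaware": (0.18, 0.24), FTU: (0.21, 0.23)},
}


def extreme_methods(points):
    """Return {scenario: (lowest-error method, highest-error method)}."""
    result = {}
    for scenario, methods in points.items():
        best = min(methods, key=lambda m: methods[m][1])
        worst = max(methods, key=lambda m: methods[m][1])
        result[scenario] = (best, worst)
    return result


if __name__ == "__main__":
    for scenario, (best, worst) in extreme_methods(POINTS).items():
        print(f"{scenario}: lowest error = {best}, highest error = {worst}")
```

With the values as listed, the lowest-error method is Unaware in Biased, Fairness Through Unawareness in Direct-Effect and Indirect-Effect, and TabPFN (v1) in the three fair scenarios.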

---

### Key Observations

1. **Biased Scenario**: All methods exhibit high error rates (~0.35–0.37). Unaware is marginally lowest (~0.35) and TabPFN (v1) marginally highest (~0.37).

2. **Direct/Indirect-Effect Scenarios**:

   - Unfair shows the highest error in both scenarios (~0.34–0.35).

   - TabPFN (v1) and Unaware reach lower errors (~0.28–0.29), and Fairness Through Unawareness has the lowest error (~0.27) in both Direct-Effect and Indirect-Effect.

3. **Fair Scenarios**:

   - All methods achieve lower errors (~0.20–0.24). TabPFN (v1) has the lowest error in each fair scenario and Unaware the highest, about 0.02 apart.

   - Every method's error rises by about 0.02 from Fair Observable to Fair Additive Noise (for example, Fairness Through Unawareness goes from ~0.21 to ~0.23).

---

### Interpretation

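Based on the approximate values read from the plots, no single method has the lowest error in every scenario: **Unaware** is lowest in Biased, **Fairness Through Unawareness** in Direct-Effect and Indirect-Effect, and **TabPFN (v1)** in the three fair scenarios, while **Unfair** is highest in Direct-Effect and Indirect-Effect. Notably: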

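- **Causal Effect (ATE)** and error move in opposite directions across methods in the Direct-Effect and Indirect-Effect scenarios, so the methods with lower error there also show a larger causal effect. In the Biased and fair scenarios the points are tightly clustered and the relationship is weak. With four points per plot, this pattern is descriptive and does not show that a higher causal effect improves model performance.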

- **Fairness Through Unawareness** has the lowest error (~0.27) in the Direct-Effect and Indirect-Effect scenarios, but it also has the highest ATE in every scenario (~0.13–0.23), so its lower error does not come with a reduced causal effect.

- **TabPFN (v1)** consistently achieves the lowest errors in the fair scenarios (~0.20–0.22), although its margin over the other methods is small (~0.01–0.02).

This analysis underscores the importance of method selection based on the fairness profile of the dataset and the nature of causal relationships.
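The relationship between ATE and error noted above can be checked numerically. The sketch below computes a Pearson correlation across the four methods in three of the scenarios, using the approximate values listed in the Detailed Analysis. It is an illustrative check only: each scenario has just four visually estimated points, so the coefficients indicate direction rather than statistical significance. The names `SCENARIOS`, `pearson`, and `CORRELATIONS` are hypothetical.

```python
from math import sqrt

# (ATE, error) per method, in the order Unfair, TabPFN (v1), Unaware,
# Fairness Through Unawareness; approximate values read from the plots.
SCENARIOS = {
    "Biased": [(0.10, 0.36), (0.12, 0.37), (0.11, 0.35), (0.13, 0.36)],
    "Direct-Effect": [(0.15, 0.35), (0.20, 0.28), (0.18, 0.29), (0.22, 0.27)],
    "Fair Observable": [(0.18, 0.21), (0.22, 0.20), (0.19, 0.22), (0.23, 0.21)],
}


def pearson(pairs):
    """Pearson correlation coefficient for a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    var_y = sum((y - mean_y) ** 2 for _, y in pairs)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


CORRELATIONS = {name: pearson(pairs) for name, pairs in SCENARIOS.items()}

if __name__ == "__main__":
    for name, r in CORRELATIONS.items():
        print(f"{name}: r = {r:+.2f}")
```

On these readings the coefficient is strongly negative for Direct-Effect, moderately negative for Fair Observable, and positive for Biased, which is why the inverse relationship should not be generalized to every scenario.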