TECHNICAL ASSET FINGERPRINT

d36fb0a319f79f2281501628

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Tool Calling Methods

### Overview

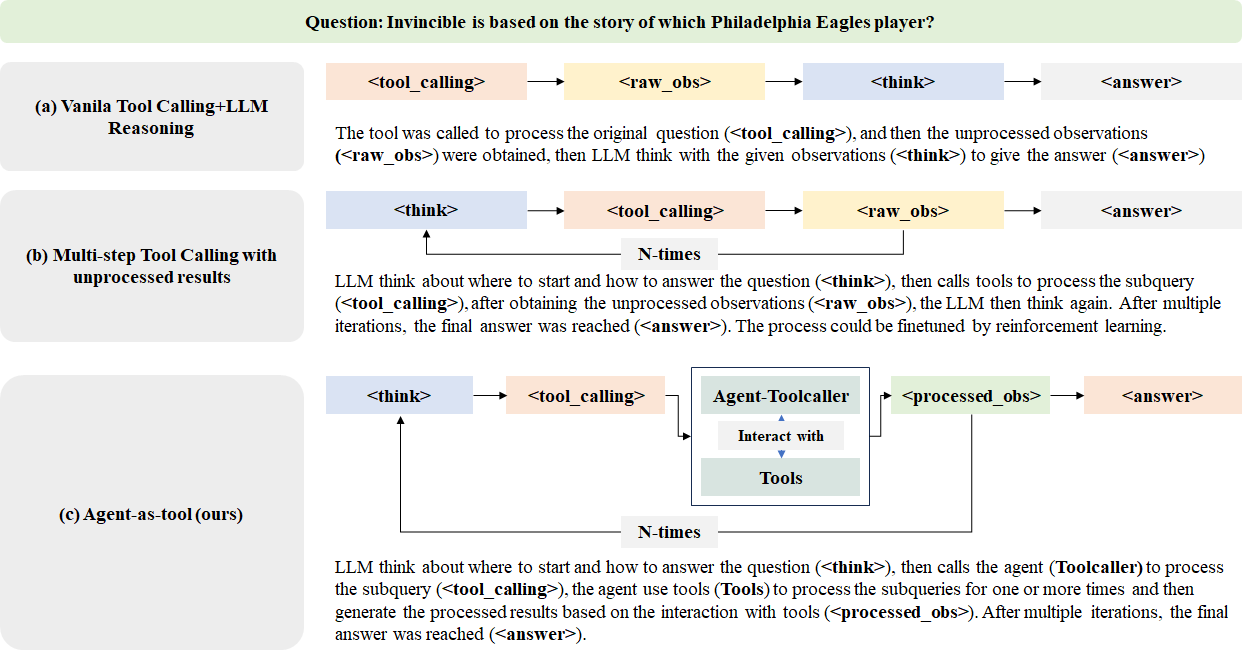

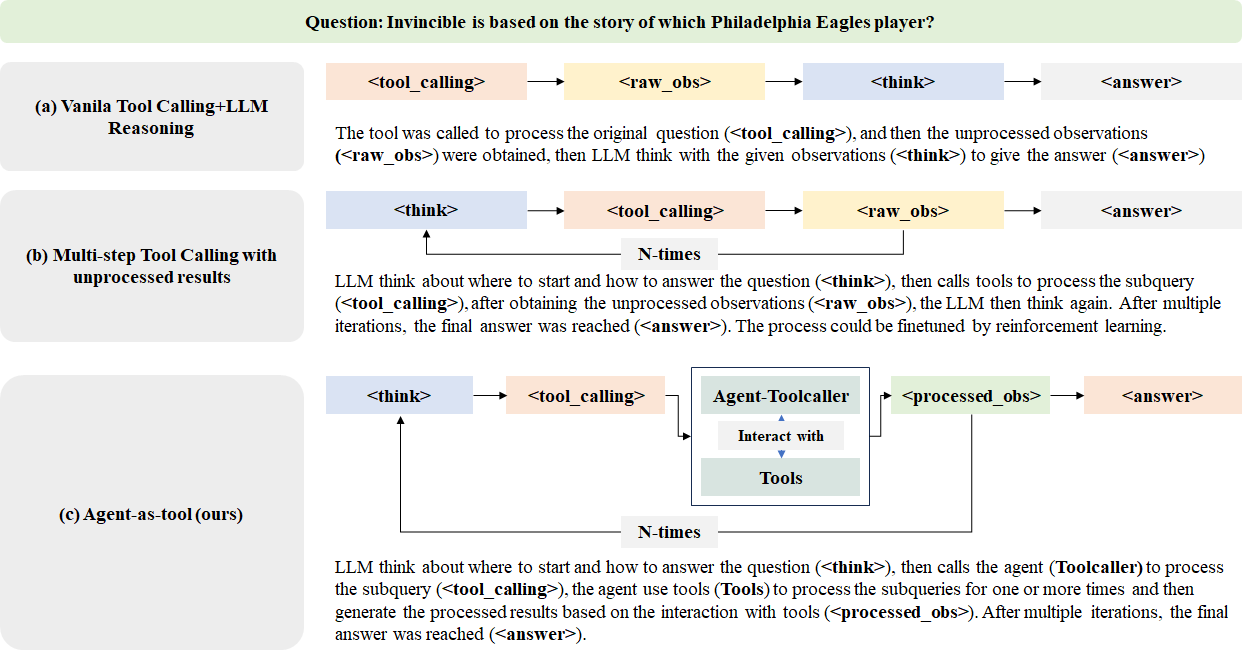

The image presents a diagram comparing three methods of tool calling within a Large Language Model (LLM) framework: Vanilla Tool Calling + LLM Reasoning, Multi-step Tool Calling with unprocessed results, and Agent-as-tool. For each method, the diagram shows how information flows between the LLM, the tools, and the resulting observations and answers.

### Components/Axes

The diagram uses the following components:

* **Nodes:** Represent different stages or components in the tool calling process. These include:

    * `<think>`: Represents the LLM's reasoning or thinking process.

    * `<tool_calling>`: Represents the process of calling or using external tools.

    * `<raw_obs>`: Represents raw, unprocessed observations obtained from the tools.

    * `<processed_obs>`: Represents processed observations obtained from the tools.

    * `<answer>`: Represents the final answer generated by the LLM.

    * `Agent-Toolcaller`: Represents an agent that manages and interacts with tools.

    * `Tools`: Represents the external tools used by the agent.

* **Arrows:** Indicate the flow of information or the sequence of processes.

* **Text Descriptions:** Provide detailed explanations of each method.

* **N-times:** Indicates a loop or iterative process.

### Detailed Analysis

**(a) Vanilla Tool Calling + LLM Reasoning**

* **Flow:** `<tool_calling>` -> `<raw_obs>` -> `<think>` -> `<answer>`

* **Description:** A tool is called on the original question, the LLM receives the raw observations, reasons over them, and then provides an answer.

* **Text:** "The tool was called to process the original question (<tool_calling>), and then the unprocessed observations (<raw_obs>) were obtained, then LLM think with the given observations (<think>) to give the answer (<answer>)."
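The single-pass flow in the quoted figure text can be sketched as follows. This is a minimal illustration, not the authors' implementation: `vanilla_tool_calling`, `stub_tool`, and `stub_llm` are hypothetical names, and the stubs stand in for a real search tool and a real LLM.

```python
from typing import Callable


def vanilla_tool_calling(
    question: str,
    tool: Callable[[str], str],
    llm: Callable[[str], str],
) -> str:
    """One pass: <tool_calling> -> <raw_obs> -> <think> -> <answer>."""
    raw_obs = tool(question)  # the tool sees the original question, not a subquery
    prompt = f"Question: {question}\n<raw_obs>{raw_obs}</raw_obs>"
    return llm(prompt)  # the LLM reasons over the raw observations and answers


# Hypothetical stand-ins so the sketch runs without any external service.
def stub_tool(query: str) -> str:
    return f"stub search results for: {query}"


def stub_llm(prompt: str) -> str:
    return f"<answer>saw_obs={'<raw_obs>' in prompt}</answer>"


result = vanilla_tool_calling("example question", stub_tool, stub_llm)
print(result)
```

There is no loop here: whatever the first tool call returns is all the LLM has to work with.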

**(b) Multi-step Tool Calling with unprocessed results**

* **Flow:** `<think>` -> `<tool_calling>` -> `<raw_obs>` -> `<think>` (looping N-times) -> `<answer>`

* **Description:** The LLM thinks, calls a tool, receives raw observations, and then thinks again. This process loops multiple times (N-times) before generating the final answer.

* **Text:** "LLM think about where to start and how to answer the question (<think>), then calls tools to process the subquery (<tool_calling>), after obtaining the unprocessed observations (<raw_obs>), the LLM then think again. After multiple iterations, the final answer was reached (<answer>). The process could be finetuned by reinforcement learning."
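A minimal sketch of this loop, under stated assumptions: the policy returns either a tool subquery or a final answer, and a `max_steps` budget bounds the "N-times" loop. The names `multi_step_tool_calling`, `stub_policy`, and `stub_tool` are hypothetical and do not come from the figure.

```python
from typing import Callable, List, Optional, Tuple

# A policy reads the context and returns ("call", subquery) or ("answer", text).
Policy = Callable[[List[str]], Tuple[str, str]]


def multi_step_tool_calling(
    question: str,
    policy: Policy,
    tool: Callable[[str], str],
    max_steps: int = 5,
) -> Optional[str]:
    """Loop <think> -> <tool_calling> -> <raw_obs> until the policy answers."""
    context: List[str] = [f"Question: {question}"]
    for _ in range(max_steps):
        action, payload = policy(context)  # <think>
        if action == "answer":
            return payload  # <answer>
        raw_obs = tool(payload)  # <tool_calling> on a subquery
        context.append(f"<raw_obs>{raw_obs}</raw_obs>")  # unprocessed result
    return None  # step budget exhausted without an answer


# Hypothetical stubs: the policy answers once it has seen two raw observations.
def stub_policy(context: List[str]) -> Tuple[str, str]:
    n_obs = sum(1 for item in context if item.startswith("<raw_obs>"))
    if n_obs >= 2:
        return ("answer", f"answer after {n_obs} tool calls")
    return ("call", f"subquery {n_obs + 1}")


def stub_tool(query: str) -> str:
    return f"raw results for {query}"


result = multi_step_tool_calling("example question", stub_policy, stub_tool)
print(result)
```

Every raw observation is appended to the LLM's context unchanged, which is the property method (c) is designed to avoid.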

**(c) Agent-as-tool (ours)**

* **Flow:** `<think>` -> `<tool_calling>` -> `Agent-Toolcaller` (interacts with `Tools` to produce `<processed_obs>`) -> `<processed_obs>` -> `<answer>`. There is a feedback loop from `<processed_obs>` back to `<think>` labeled "N-times".

* **Description:** The LLM thinks, calls the Agent-Toolcaller, which interacts with tools to process subqueries and generate processed observations. This process loops multiple times (N-times) before generating the final answer.

* **Text:** "LLM think about where to start and how to answer the question (<think>), then calls the agent (Toolcaller) to process the subquery (<tool_calling>), the agent use tools (Tools) to process the subqueries for one or more times and then generate the processed results based on the interaction with tools (<processed_obs>). After multiple iterations, the final answer was reached (<answer>)."
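The delegation described above might look like the sketch below. It is an assumption-laden illustration: `make_toolcaller`, `agent_as_tool`, and the stub tools and planner are hypothetical names, and the "processing" step is reduced to trimming and de-duplication, whereas the figure only says the agent generates processed results from its interaction with the tools.

```python
from typing import Callable, List, Optional, Tuple

Tool = Callable[[str], str]
Planner = Callable[[List[str]], Tuple[str, str]]


def make_toolcaller(tools: List[Tool]) -> Callable[[str], str]:
    """Build a hypothetical Agent-Toolcaller over a fixed set of tools."""

    def toolcaller(subquery: str) -> str:
        raw = [tool(subquery).strip() for tool in tools]  # one or more tool calls
        unique = list(dict.fromkeys(r for r in raw if r))  # drop blanks, duplicates
        return "; ".join(unique)  # this is what becomes <processed_obs>

    return toolcaller


def agent_as_tool(
    question: str,
    planner: Planner,
    toolcaller: Callable[[str], str],
    max_steps: int = 5,
) -> Optional[str]:
    """Loop <think> -> <tool_calling> -> agent -> <processed_obs>."""
    context: List[str] = [f"Question: {question}"]
    for _ in range(max_steps):
        action, payload = planner(context)  # <think>
        if action == "answer":
            return payload  # <answer>
        processed = toolcaller(payload)  # the planner never sees raw tool output
        context.append(f"<processed_obs>{processed}</processed_obs>")
    return None  # step budget exhausted without an answer


# Hypothetical stubs: noisy, duplicated, and empty tool outputs.
def tool_a(query: str) -> str:
    return f"  fact about {query}  "


def tool_b(query: str) -> str:
    return f"fact about {query}"


def tool_c(query: str) -> str:
    return ""


def stub_planner(context: List[str]) -> Tuple[str, str]:
    obs = [item for item in context if item.startswith("<processed_obs>")]
    if obs:
        return ("answer", f"based on {obs[-1]}")
    return ("call", "subquery")


toolcaller = make_toolcaller([tool_a, tool_b, tool_c])
result = agent_as_tool("example question", stub_planner, toolcaller)
print(result)
```

The outer loop has the same shape as in method (b); the only change is that the tool call is replaced by an agent whose output is already condensed before it reaches the planner's context.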

### Key Observations

* The Vanilla Tool Calling method involves a single iteration of tool calling and reasoning.

* The Multi-step Tool Calling method involves multiple iterations of tool calling and reasoning, allowing for refinement of the answer.

* The Agent-as-tool method introduces an agent (Toolcaller) that manages and interacts with tools, providing processed observations to the LLM.

* Like the Multi-step method, the Agent-as-tool method includes a feedback loop ("N-times"); the difference is that the LLM refines its approach based on processed rather than raw observations.

### Interpretation

The diagram illustrates the evolution of tool calling methods in LLMs. The Vanilla approach is a simple, one-step process. The Multi-step approach adds iterative refinement. The Agent-as-tool approach introduces a dedicated agent to manage tool interactions, potentially improving efficiency and accuracy. The Agent-as-tool method, labeled as "ours," suggests that this is the method being proposed or used by the authors. The question at the top of the image "Invincible is based on the story of which Philadelphia Eagles player?" is likely the question being used to test these different tool calling methods.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: LLM Reasoning Approaches

### Overview

The image presents a comparative diagram illustrating three different approaches to Large Language Model (LLM) reasoning: Vanilla Tool Calling+LLM Reasoning, Multi-step Tool Calling with unprocessed results, and Agent-as-tool (the authors' approach). Each approach is depicted as a flow diagram showing the sequence of steps involved in answering a question. The question posed is: "Invincible is based on the story of which Philadelphia Eagles player?".

### Components/Axes

The diagram is structured into three horizontal sections, each representing a different reasoning approach (a, b, c). Each section contains a flow diagram with arrows indicating the sequence of operations. The key components within each flow are labeled as follows:

* `<tool_calling>`: Represents the process of calling a tool.

* `<raw_obs>`: Represents the raw observations obtained.

* `<think>`: Represents the LLM's thinking or reasoning step.

* `<answer>`: Represents the final answer generated.

* `Agent-Toolcaller`: A component specific to the "Agent-as-tool" approach, representing an agent that interacts with tools.

* `<processed_obs>`: Represents the processed observations obtained through tool interaction.

* "Interact with Tools": Describes the interaction between the Agent-Toolcaller and the tools.

* "N-times": Indicates that a certain process is repeated multiple times.

### Detailed Analysis or Content Details

**(a) Vanilla Tool Calling+LLM Reasoning:**

* The flow starts with `<tool_calling>`, followed by `<raw_obs>`, then `<think>`, and finally `<answer>`.

* The accompanying text states: "The tool was called to process the original question (<tool_calling>), and then the unprocessed observations (<raw_obs>) were obtained, then LLM think with the given observations (<think>) to give the answer (<answer>)".

**(b) Multi-step Tool Calling with unprocessed results:**

* The flow starts with `<think>`, then `<tool_calling>`, followed by `<raw_obs>`, which feeds back into `<think>`. This loop is repeated "N-times" before the final `<answer>`.

* The accompanying text states: "LLM think about where to start and how to answer the question (<think>), then calls tools to process the subquery (<tool_calling>), after obtaining the unprocessed observations (<raw_obs>), the LLM then think again. After multiple iterations, the final answer was reached (<answer>). The process could be finetuned by reinforcement learning."

**(c) Agent-as-tool (ours):**

* The flow starts with `<think>`, then `<tool_calling>`, which leads to an "Agent-Toolcaller" component.

* The "Agent-Toolcaller" interacts with "Tools" and produces `<processed_obs>`.

* `<processed_obs>` either feeds back into `<think>` (a loop repeated "N-times") or leads to the final `<answer>`.

* The accompanying text states: "LLM think about where to start and how to answer the question (<think>), then calls the agent (Toolcaller) to process the subquery (<tool_calling>), the agent use tools (Tools) to process the subqueries for one or more times and then generate the processed results based on the interaction with tools (<processed_obs>). After multiple iterations, the final answer was reached (<answer>)".

### Key Observations

* The "Vanilla" approach is the simplest, with a single iteration of tool calling and reasoning.

* The "Multi-step" approach involves multiple iterations of tool calling and reasoning with unprocessed results.

* The "Agent-as-tool" approach introduces an agent that mediates the interaction with tools, resulting in processed observations before the final answer.

* Approaches (b) and (c) begin with a `<think>` step, whereas (a) begins with a tool call; all three end with an `<answer>` step.

* The "N-times" notation indicates that the iterative processes in (b) and (c) can be repeated an arbitrary number of times.

### Interpretation

The diagram illustrates a progression in complexity of LLM reasoning approaches. The "Vanilla" approach represents a basic form of tool use, while the "Multi-step" approach attempts to improve reasoning through iterative refinement. The "Agent-as-tool" approach, presented as the authors' contribution, introduces a more sophisticated architecture with an agent that manages tool interactions and processes the results, potentially leading to more accurate and reliable answers. The use of "N-times" suggests that these approaches are designed to handle complex questions that require multiple steps of reasoning and tool use. The diagram highlights the importance of both the LLM's reasoning capabilities and the effective use of external tools in achieving robust question answering. The introduction of the "Agent-Toolcaller" suggests a move towards more modular and controllable LLM systems. The diagram is a conceptual illustration of the different approaches and does not provide any quantitative data on their performance.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of AI Reasoning and Tool-Calling Architectures

### Overview

The image is a technical diagram comparing three different architectural approaches for an AI system (likely a Large Language Model) to answer a question by using external tools. The diagram is structured into three horizontal sections, each detailing a distinct method. A sample question is provided at the top as a common test case for all three methods.

### Components/Axes

The diagram is organized into three main rows, each with a title on the left and a corresponding flowchart on the right.

**Top Header:**

* **Question:** "Question: Invincible is based on the story of which Philadelphia Eagles player?"

**Section (a): Vanilla Tool Calling+LLM Reasoning**

* **Title (Left Column):** "(a) Vanilla Tool Calling+LLM Reasoning"

* **Flowchart (Right Column):** A linear sequence of four colored boxes connected by right-pointing arrows.

1. `<tool_calling>` (Light Orange Box)

2. `<raw_obs>` (Light Yellow Box)

3. `<think>` (Light Blue Box)

4. `<answer>` (Light Orange Box)

* **Descriptive Text:** "The tool was called to process the original question (`<tool_calling>`), and then the unprocessed observations (`<raw_obs>`) were obtained, then LLM think with the given observations (`<think>`) to give the answer (`<answer>`)"

**Section (b): Multi-step Tool Calling with unprocessed results**

* **Title (Left Column):** "(b) Multi-step Tool Calling with unprocessed results"

* **Flowchart (Right Column):** A cyclical sequence. It starts with a `<think>` box, which points to `<tool_calling>`, which points to `<raw_obs>`. An arrow from `<raw_obs>` loops back to `<think>`, labeled "N-times". A final arrow from `<raw_obs>` points to `<answer>`.

1. `<think>` (Light Blue Box)

2. `<tool_calling>` (Light Orange Box)

3. `<raw_obs>` (Light Yellow Box)

4. `<answer>` (Light Orange Box)

* **Descriptive Text:** "LLM think about where to start and how to answer the question (`<think>`), then calls tools to process the subquery (`<tool_calling>`), after obtaining the unprocessed observations (`<raw_obs>`), the LLM then think again. After multiple iterations, the final answer was reached (`<answer>`). The process could be finetuned by reinforcement learning."

**Section (c): Agent-as-tool (ours)**

* **Title (Left Column):** "(c) Agent-as-tool (ours)"

* **Flowchart (Right Column):** A cyclical sequence with a nested component. It starts with `<think>`, pointing to `<tool_calling>`. The `<tool_calling>` box points to a larger, central container labeled "Agent-Toolcaller". Inside this container are two sub-components: "Interact with" (in a white box) and "Tools" (in a light green box), connected by a double-headed vertical arrow. The "Agent-Toolcaller" container points to `<processed_obs>`, which points to `<answer>`. An arrow from `<processed_obs>` loops back to `<think>`, labeled "N-times".

1. `<think>` (Light Blue Box)

2. `<tool_calling>` (Light Orange Box)

3. **Central Component:** "Agent-Toolcaller" (Large box containing "Interact with" and "Tools")

4. `<processed_obs>` (Light Green Box)

5. `<answer>` (Light Orange Box)

* **Descriptive Text:** "LLM think about where to start and how to answer the question (`<think>`), then calls the agent (Toolcaller) to process the subquery (`<tool_calling>`), the agent use tools (`Tools`) to process the subqueries for one or more times and then generate the processed results based on the interaction with tools (`<processed_obs>`). After multiple iterations, the final answer was reached (`<answer>`)."

### Detailed Analysis

The diagram explicitly contrasts three workflows for integrating tool use with LLM reasoning:

1. **Method (a) - Vanilla:** A simple, single-pass pipeline. The LLM calls a tool once, receives raw observations, thinks about them, and produces an answer. There is no iteration or refinement.

2. **Method (b) - Multi-step with Raw Results:** An iterative loop. The LLM thinks, calls a tool, gets raw observations, and then thinks again. This loop (`<think>` -> `<tool_calling>` -> `<raw_obs>`) can repeat "N-times" before the final answer is produced. The figure text notes that this process could be fine-tuned with reinforcement learning.

3. **Method (c) - Agent-as-tool (Proposed):** An iterative loop with an intermediary agent. The LLM thinks and then calls an "Agent-Toolcaller". This agent autonomously interacts with tools one or more times to produce *processed observations* (`<processed_obs>`), which are presumably more refined than raw observations. This processed output is then used by the LLM in its next thinking cycle or to generate the final answer. The loop (`<think>` -> `<tool_calling>` -> [Agent Process] -> `<processed_obs>`) also repeats "N-times".

**Key Textual Elements & Tags:**

* `<think>`: Represents the LLM's reasoning step.

* `<tool_calling>`: Represents the action of invoking an external tool or agent.

* `<raw_obs>`: Represents unprocessed output from a tool.

* `<processed_obs>`: Represents refined output from an agent's interaction with tools (unique to method c).

* `<answer>`: The final output.

* "N-times": Indicates an iterative loop in methods (b) and (c).
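To make the tag vocabulary concrete, the sketch below splits a tagged transcript into (tag, content) pairs. It assumes each tag wraps its content as a paired open/close element such as `<think>...</think>`; the figure only shows the tag names, so this serialization, the `parse_segments` helper, and the example transcript are all hypothetical.

```python
import re
from typing import List, Tuple

# Assumed format: <tag>content</tag>, using the five tag names from the figure.
TAG_PATTERN = re.compile(
    r"<(think|tool_calling|raw_obs|processed_obs|answer)>(.*?)</\1>",
    re.DOTALL,
)


def parse_segments(transcript: str) -> List[Tuple[str, str]]:
    """Return (tag, content) pairs in the order they appear."""
    return [
        (match.group(1), match.group(2).strip())
        for match in TAG_PATTERN.finditer(transcript)
    ]


# Placeholder transcript in the shape of one method (c) iteration.
example = (
    "<think>plan the search</think>"
    "<tool_calling>subquery</tool_calling>"
    "<processed_obs>summary</processed_obs>"
    "<answer>final</answer>"
)

segments = parse_segments(example)
print([tag for tag, _ in segments])
```

A transcript from method (a) or (b) would contain `raw_obs` segments where this one has `processed_obs`.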

### Key Observations

* **Progression of Complexity:** The methods evolve from a linear pipeline (a) to a simple iterative loop (b) to a more complex loop with an encapsulated agent (c).

* **Introduction of an Agent:** The core innovation in method (c) is the "Agent-Toolcaller" component, which acts as an intermediary between the main LLM's tool-calling command and the actual tools. This agent can perform multiple interactions internally.

* **Data Refinement:** A critical distinction is the shift from `<raw_obs>` in (a) and (b) to `<processed_obs>` in (c). This implies the agent in (c) performs synthesis, filtering, or formatting on the tool outputs before returning them to the main LLM.

* **Spatial Layout:** The titles are consistently placed in a left-aligned column. The flowcharts are centered in the right area. The "Agent-Toolcaller" box in (c) is the most visually complex element, positioned centrally within its flowchart to emphasize its role as a new subsystem.

### Interpretation

This diagram illustrates a research progression in AI system design, moving from direct tool use towards more autonomous, agent-based tool orchestration.

* **What it demonstrates:** It argues for the architectural advantage of method (c), "Agent-as-tool." By delegating the multi-step interaction with tools to a specialized sub-agent, the main LLM's reasoning loop (`<think>`) is potentially simplified. It receives higher-quality, processed information (`<processed_obs>`) instead of raw data, which could lead to more accurate and efficient final answers.

* **Relationship between elements:** The LLM remains the central "reasoning engine" in all three methods. The evolution is in how it *obtains information*. Method (c) introduces a layer of abstraction—the agent—that encapsulates the complexity of tool use, making the overall system more modular and potentially more robust.

* **Notable implications:** The label "(ours)" on method (c) indicates this is the authors' proposed approach. The diagram serves as a visual thesis: that offloading tool management to an intermediary agent is a superior paradigm for complex question-answering tasks requiring external knowledge. The sample question about the movie "Invincible" acts as a concrete example of a factual query that would benefit from such a tool-augmented reasoning process.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Comparative Analysis of LLM Tool-Calling Methods for Question Answering

### Overview

The image presents a comparative analysis of three methods for answering a question using Large Language Models (LLMs) and tool calling. The question posed is: "Invincible is based on the story of which Philadelphia Eagles player?" The diagram illustrates three approaches:

1. **Vanilla Tool Calling+LLM Reasoning**

2. **Multi-step Tool Calling with unprocessed results**

3. **Agent-as-tool (ours)**

Each method is represented as a sequential workflow with color-coded steps, demonstrating differences in processing logic and iteration.

---

### Components/Axes

#### Diagram Structure

- **Three Main Sections**: Labeled (a), (b), and (c), each representing a distinct method.

- **Color-Coded Steps**:

- **Pink**: `<tool_calling>`

- **Yellow**: `<raw_obs>` (raw observations)

- **Blue**: `<think>` (LLM reasoning)

- **Green**: `<answer>` (final answer)

#### Methods

1. **Vanilla Tool Calling+LLM Reasoning**

- Sequential steps: Tool calling → Raw observations → LLM reasoning → Final answer.

2. **Multi-step Tool Calling with Unprocessed Results**

- Iterative steps: LLM reasoning → Tool calling → Raw observations, repeated N times → Final answer.

3. **Agent-as-tool (ours)**

- Integrated steps: LLM reasoning → Tool calling → Agent-Toolcaller (interacts with Tools) → Processed observations, repeated N times → Final answer.

---

### Key Differences

- **Vanilla Tool Calling**: Single iteration of tool calling and reasoning.

- **Multi-step Tool Calling**: Multiple iterations of reasoning and tool calling over unprocessed (raw) observations.

- **Agent-as-tool**: Delegates tool interaction to an Agent-Toolcaller, which returns processed observations to the LLM inside an iterative loop.

---

### Conclusion

The flowchart highlights the trade-offs between simplicity, iteration, and integration in LLM-based question-answering systems, with the "Agent-as-tool" method offering a balanced approach for complex queries.
